
## Line Chart: LLM Training Loss Projections vs. Compute

### Overview

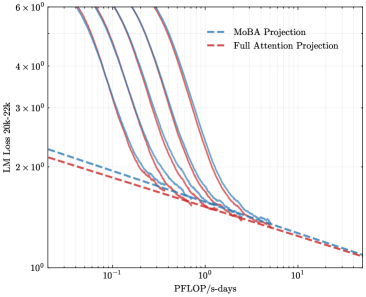

The image is a log-log line chart comparing the projected training loss of Large Language Models (LLMs) against computational resources. It displays multiple empirical loss curves (solid lines) and two theoretical projection lines (dashed). The chart illustrates the scaling behavior of model performance with increased compute.

### Components/Axes

* **Chart Type:** Log-Log Line Chart.

* **X-Axis:**

    * **Label:** `PFLOP/s-days`

    * **Scale:** Logarithmic.

    * **Tick Marks (Approximate):** `10^-1` (0.1), `10^0` (1), `10^1` (10), `10^2` (100).

* **Y-Axis:**

    * **Label:** `LLM Loss 30B-22%`

    * **Scale:** Logarithmic.

    * **Tick Marks (Approximate):** `10^0` (1), `2 × 10^0` (2), `3 × 10^0` (3), `4 × 10^0` (4), `6 × 10^0` (6).

* **Legend:**

    * **Position:** Top-right corner of the plot area.

    * **Entry 1:** `MoBA Projection` - Represented by a blue dashed line (`--`).

    * **Entry 2:** `Full Attention Projection` - Represented by a red dashed line (`--`).

* **Data Series (Solid Lines):** There are approximately 7-8 solid lines in various colors (including shades of purple, blue, red, and gray). These are not explicitly labeled in the legend and likely represent empirical training runs or different model configurations.

### Detailed Analysis

* **Empirical Data (Solid Lines):**

    * **Trend:** All solid lines slope steeply downward from left to right, indicating that LLM loss decreases significantly as the computational budget (PFLOP/s-days) increases.

    * **Shape:** The curves are convex on the log-log plot, showing a diminishing returns relationship. The rate of loss improvement slows at higher compute values.

    * **Convergence:** The solid lines appear to converge towards a similar region at the far right of the chart (high compute, ~100 PFLOP/s-days), suggesting a potential performance floor or asymptotic behavior.

    * **Spread:** At lower compute values (e.g., 0.1 PFLOP/s-days), there is a wide vertical spread in loss values (from ~2 to >6), indicating high variance in efficiency or model quality at smaller scales.

* **Projection Lines (Dashed Lines):**

    * **MoBA Projection (Blue Dashed):**

        * **Trend:** A straight line sloping downward on the log-log plot, representing a power-law relationship.

        * **Position:** It starts at a loss of ~2.2 at 0.1 PFLOP/s-days and ends at a loss of ~1.0 at 100 PFLOP/s-days. It lies slightly *above* the Full Attention Projection line over most of the range, with the gap closing toward 100 PFLOP/s-days.

    * **Full Attention Projection (Red Dashed):**

        * **Trend:** Also a straight, downward-sloping line on the log-log plot.

        * **Position:** It starts at a loss of ~2.1 at 0.1 PFLOP/s-days and ends at a loss of ~1.0 at 100 PFLOP/s-days. It lies slightly *below* the MoBA Projection line, meaning it projects marginally lower loss at the same compute until the two lines meet near 100 PFLOP/s-days.
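A straight line on log-log axes corresponds to a power law of the form `L(C) = a * C**b`. The sketch below recovers `a` and `b` for each dashed line from the two approximate endpoints read off the chart, then evaluates both projections at the x-axis ticks. The endpoint values are visual estimates, and the helper names `fit_power_law` and `predict` are hypothetical, so the resulting numbers are illustrative rather than the chart's actual fit parameters.

```python
import math


def fit_power_law(c0, l0, c1, l1):
    """Return (a, b) such that L(C) = a * C**b passes through both points."""
    b = math.log(l1 / l0) / math.log(c1 / c0)  # slope on log-log axes
    a = l0 / c0 ** b                           # prefactor from the first point
    return a, b


def predict(params, compute):
    """Evaluate the power law at a given compute budget (PFLOP/s-days)."""
    a, b = params
    return a * compute ** b


# Approximate endpoints read off the chart (assumed, not source data).
moba = fit_power_law(0.1, 2.2, 100.0, 1.0)
full = fit_power_law(0.1, 2.1, 100.0, 1.0)

for c in (0.1, 1.0, 10.0, 100.0):
    m, f = predict(moba, c), predict(full, c)
    print(f"{c:>6} PFLOP/s-days: MoBA {m:.3f}, Full {f:.3f}, gap {m - f:.3f}")
```

Under these assumed endpoints the exponents come out near -0.114 for MoBA and -0.107 for Full Attention, and the gap between the two lines shrinks steadily as compute grows.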

### Key Observations

1. **Power-Law Scaling:** The dashed projection lines are straight on log-log axes, which means the projections model LLM loss as a power law in compute.

2. **Projection Divergence:** The two projections (MoBA vs. Full Attention) are furthest apart at low compute and converge at very high compute (~100 PFLOP/s-days), where both predict a loss near 1.0.

3. **Empirical vs. Projected:** The solid empirical curves are generally steeper than the dashed projection lines at lower compute, suggesting that initial gains from scaling may outpace the projected power-law rate before settling into it.

4. **Performance Floor:** The clustering of all lines (empirical and projected) in the bottom-right corner suggests that pushing loss significantly below ~1.0 would require multiplying compute many times over. This reflects steep diminishing returns rather than a fitted hard floor, since a straight log-log line has no asymptote.
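The cost of further loss reduction under a power law can be made concrete. If `L(C) = a * C**b` with `b < 0`, then reducing loss to a fraction `r` of its current value requires multiplying compute by `r ** (1 / b)`. The sketch below assumes an exponent of about -0.11, a rough value implied by the approximate endpoints of the dashed lines; both that exponent and the function name `compute_multiplier` are assumptions for illustration.

```python
def compute_multiplier(loss_ratio, exponent):
    """Factor by which compute must grow so loss falls to loss_ratio * L.

    Assumes L(C) = a * C**exponent with exponent < 0 and 0 < loss_ratio <= 1.
    """
    if exponent >= 0:
        raise ValueError("exponent must be negative for a decreasing power law")
    if not 0 < loss_ratio <= 1:
        raise ValueError("loss_ratio must be in (0, 1]")
    return loss_ratio ** (1.0 / exponent)


ASSUMED_EXPONENT = -0.11  # rough slope estimated from the chart, not a reported value

for ratio in (0.9, 0.75, 0.5):
    factor = compute_multiplier(ratio, ASSUMED_EXPONENT)
    print(f"loss x{ratio}: compute x{factor:,.1f}")
```

With this assumed exponent, a 10% loss reduction needs roughly 2.6 times the compute, while halving the loss needs several hundred times as much. The growth is polynomial in the inverse loss ratio, not exponential, but the small exponent makes it very steep.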

### Interpretation

This chart is a technical visualization of **AI scaling laws**, specifically for LLMs. It demonstrates the fundamental principle that increasing computational resources (measured in PFLOP/s-days) leads to predictable, power-law reductions in model loss (a key performance metric).

* **What the data suggests:** The primary takeaway is that, within the plotted range, more compute lowers loss, but the efficiency of that compute (the loss reduction per added unit) diminishes. The legend labels suggest that the comparison between "MoBA Projection" and "Full Attention Projection" contrasts the fitted scaling trends of two attention variants rather than two forecasting methods. The "Full Attention" projection sits slightly lower, predicting marginally lower loss for the same compute budget.

* **How elements relate:** The solid lines provide empirical context for the dashed projections. Their convergence at high compute is consistent with the core scaling hypothesis but also highlights that the exact trajectory (the path to that convergence) can vary based on model design and training methodology.

* **Notable Anomalies:** The significant spread of the solid lines at low compute is notable. It implies that at smaller scales, factors other than raw compute (like data quality, architecture details, or hyperparameter tuning) have a massive impact on performance. This variance collapses at scale, where compute becomes the dominant factor.

* **Peircean Investigation:** The chart is an **icon** (resembling the phenomenon of diminishing returns) and a **symbol** (using standardized axes and legends to represent abstract concepts like "loss" and "compute"). It functions as an **index** pointing to the underlying, empirically observed relationship between resource investment and model capability in modern AI research. The space between the two dashed lines represents the projected loss difference between the MoBA and Full Attention curves at a given compute budget.