## Scatter Plot: GFLOPS vs. Parameters for CNN and Transformer Architectures

### Overview

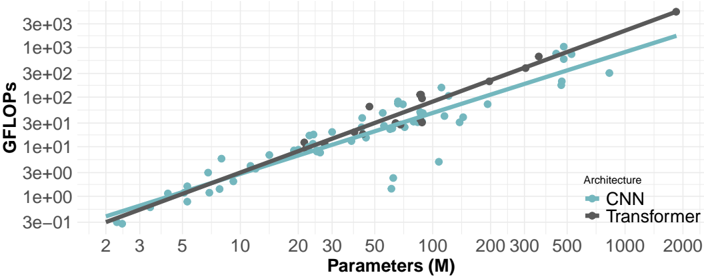

This image presents a scatter plot comparing the computational cost (GFLOPS) of Convolutional Neural Networks (CNNs) and Transformer architectures as a function of their number of parameters (in millions). Two regression lines are overlaid on the data to show the general trend for each architecture.

### Components/Axes

* **X-axis:** Parameters (M) - Scale is logarithmic, ranging from approximately 2 to 2000. Tick marks are present at 2, 3, 5, 10, 20, 30, 50, 100, 200, 300, 500, and 1000.

* **Y-axis:** GFLOPS - Scale is logarithmic, ranging from approximately 3e-01 to 3e+03. Tick marks are present at 3e-01, 1e+00, 3e+00, 1e+01, 3e+01, 1e+02, 3e+02, 1e+03, and 3e+03.

* **Legend:** Located in the top-right corner, titled "Architecture".

    * CNN: Represented by light blue circles.

    * Transformer: Represented by dark brown diamonds.

* **Data Points:** Scatter plot of individual CNN and Transformer models.

* **Regression Lines:** Two lines representing the trend for each architecture. The CNN line is light blue, and the Transformer line is dark brown. Shaded areas around the lines indicate confidence intervals.

### Detailed Analysis

**CNN Data (Light Blue Circles):**

The CNN data points generally follow an upward trend, indicating that as the number of parameters increases, the GFLOPS also increase. The trend is approximately linear on this log-log scale.

* At approximately 2M parameters, GFLOPS is around 0.3.

* At approximately 5M parameters, GFLOPS is around 1.

* At approximately 10M parameters, GFLOPS is around 3.

* At approximately 20M parameters, GFLOPS is around 8.

* At approximately 50M parameters, GFLOPS is around 20.

* At approximately 100M parameters, GFLOPS is around 50.

* At approximately 200M parameters, GFLOPS is around 120.

* At approximately 500M parameters, GFLOPS is around 250.

* At approximately 1000M parameters, GFLOPS is around 600.

There is some scatter around the regression line, indicating variability in GFLOPS for CNNs with similar parameter counts.

**Transformer Data (Dark Brown Diamonds):**

The Transformer data points also exhibit an upward trend, but appear to have a steeper slope than the CNN data.

* At approximately 2M parameters, GFLOPS is around 0.5.

* At approximately 5M parameters, GFLOPS is around 2.

* At approximately 10M parameters, GFLOPS is around 6.

* At approximately 20M parameters, GFLOPS is around 15.

* At approximately 50M parameters, GFLOPS is around 40.

* At approximately 100M parameters, GFLOPS is around 100.

* At approximately 200M parameters, GFLOPS is around 250.

* At approximately 500M parameters, GFLOPS is around 700.

* At approximately 1000M parameters, GFLOPS is around 1500.

The Transformer data also shows some scatter, but appears more tightly clustered around its regression line than the CNN data.

**Regression Lines:**

The regression lines visually confirm the upward trends for both architectures. The Transformer line has a noticeably steeper slope, indicating a faster increase in GFLOPS with increasing parameters compared to CNNs.
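The slopes discussed above can be estimated from the approximate readings listed in this section. The sketch below is only an illustration, not the method used to draw the lines in the figure: it assumes an ordinary least-squares fit in log10 space, and the names `CNN_READINGS`, `TRANSFORMER_READINGS`, and `fit_power_law` are hypothetical.

```python
import math

# Approximate readings transcribed from the lists above:
# (parameters in millions, GFLOPS). These are eyeballed values, not raw data.
CNN_READINGS = [(2, 0.3), (5, 1), (10, 3), (20, 8), (50, 20),
                (100, 50), (200, 120), (500, 250), (1000, 600)]
TRANSFORMER_READINGS = [(2, 0.5), (5, 2), (10, 6), (20, 15), (50, 40),
                        (100, 100), (200, 250), (500, 700), (1000, 1500)]


def fit_power_law(points):
    """Fit log10(y) = k * log10(x) + b by least squares.

    Returns (k, c) such that y is approximately c * x**k.
    """
    xs = [math.log10(x) for x, _ in points]
    ys = [math.log10(y) for _, y in points]
    n = len(points)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    k = sxy / sxx            # slope on the log-log plot
    b = mean_y - k * mean_x  # intercept in log10 units
    return k, 10 ** b


cnn_k, cnn_c = fit_power_law(CNN_READINGS)
tr_k, tr_c = fit_power_law(TRANSFORMER_READINGS)
print(f"CNN:         GFLOPS ~ {cnn_c:.3f} * params^{cnn_k:.2f}")
print(f"Transformer: GFLOPS ~ {tr_c:.3f} * params^{tr_k:.2f}")
```

With these readings, both fitted exponents come out somewhat above 1, and the Transformer exponent is the larger of the two. Because the inputs are visual estimates, the fitted numbers are only indicative.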

### Key Observations

* Transformers generally require more GFLOPS than CNNs for a given number of parameters: roughly 1.7 to 2.8 times as many in the approximate readings above.

* Both architectures exhibit a roughly linear relationship between parameters and GFLOPS on this log-log scale.

* There is variability within each architecture, as evidenced by the scatter of data points around the regression lines.

* The confidence intervals around the regression lines suggest some uncertainty in the estimated trends.
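To make the first observation concrete, the per-size gap can be computed directly from the approximate readings in the Detailed Analysis. This is a minimal sketch under that assumption; the dictionary names and the helper `gflops_ratios` are hypothetical and the values are visual estimates, not measured data.

```python
# Approximate readings from the Detailed Analysis:
# parameters (millions) -> GFLOPS.
CNN_GFLOPS = {2: 0.3, 5: 1, 10: 3, 20: 8, 50: 20,
              100: 50, 200: 120, 500: 250, 1000: 600}
TRANSFORMER_GFLOPS = {2: 0.5, 5: 2, 10: 6, 20: 15, 50: 40,
                      100: 100, 200: 250, 500: 700, 1000: 1500}


def gflops_ratios(transformer, cnn):
    """Return {params: transformer GFLOPS / CNN GFLOPS} for shared sizes."""
    return {p: transformer[p] / cnn[p] for p in sorted(cnn) if p in transformer}


ratios = gflops_ratios(TRANSFORMER_GFLOPS, CNN_GFLOPS)
for params, ratio in ratios.items():
    print(f"{params:>5} M params: Transformer/CNN = {ratio:.2f}x")
```

Every ratio is above 1, and the largest ratios occur at the larger parameter counts, which is consistent with the steeper Transformer trend.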

### Interpretation

The data suggest that Transformers are computationally more expensive than CNNs at a given parameter count, and that the gap widens as model size increases. A plausible contributor is the attention mechanism: attention scores are computed between pairs of tokens, so that part of the cost grows with sequence length without adding parameters. The plot alone does not isolate the cause. A straight line on log-log axes corresponds to a power law, GFLOPS ∝ Parameters^k, where k is the slope of the line, so computational cost scales approximately as a power of the parameter count for both architectures.

The scatter in the data suggests that other factors, such as network depth, layer types, and specific implementation details, also influence GFLOPS. The steeper slope of the Transformer line implies that computational cost increases more rapidly with parameter count for Transformers, potentially limiting their scalability compared to CNNs. This is useful for researchers and engineers designing and deploying deep learning models, because it clarifies the trade-off between model size and computational cost.