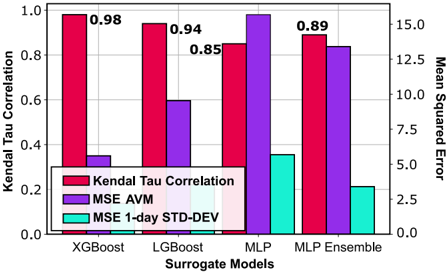

## Bar Chart: Performance Metrics of Surrogate Models

### Overview

The chart compares three performance metrics (Kendall Tau Correlation, MSE AVM, and MSE 1-day STD-DEV) across four surrogate models (XGBoost, LGBoost, MLP, MLP Ensemble). Each model has a group of three bars: red (Kendall Tau), purple (MSE AVM), and cyan (MSE 1-day STD-DEV). Values are labeled on top of the bars. The chart uses two y-axes: 0–1 for Kendall Tau and 0–15 for Mean Squared Error.

### Components/Axes

- **X-axis**: Surrogate Models (XGBoost, LGBoost, MLP, MLP Ensemble)

- **Left Y-axis**: Kendall Tau Correlation (0–1)

- **Right Y-axis**: Mean Squared Error (0–15)

- **Legend**: Located at bottom-left, mapping colors to metrics:

  - Red: Kendall Tau Correlation

  - Purple: MSE AVM

  - Cyan: MSE 1-day STD-DEV
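
For readers unfamiliar with the two metric families on the axes, the following is a minimal sketch of how a rank correlation and a mean squared error are typically computed. It assumes the simple tau-a variant (tied pairs count as neither concordant nor discordant); the chart does not say which variant was used or what the AVM and 1-day STD-DEV targets are, so the function names and inputs here are illustrative only.

```python
from itertools import combinations


def kendall_tau_a(x, y):
    """Kendall tau-a: (concordant - discordant) / total number of pairs.

    Assumption: tied pairs contribute zero; the chart's source may have
    used a tie-corrected variant instead.
    """
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    concordant = discordant = 0
    for i, j in combinations(range(len(x)), 2):
        sign = (x[i] - x[j]) * (y[i] - y[j])
        if sign > 0:
            concordant += 1
        elif sign < 0:
            discordant += 1
    n_pairs = len(x) * (len(x) - 1) // 2
    return (concordant - discordant) / n_pairs


def mse(pred, target):
    """Mean squared error between predictions and targets."""
    if len(pred) != len(target) or len(pred) == 0:
        raise ValueError("need two equal-length, non-empty sequences")
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)
```

A surrogate can score well on one metric and poorly on the other: rank correlation only measures whether predictions are ordered correctly, while MSE penalizes errors in their absolute values.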

### Detailed Analysis

#### Kendall Tau Correlation (Red Bars)

- **XGBoost**: 0.98 (highest)

- **LGBoost**: 0.94

- **MLP**: 0.85

- **MLP Ensemble**: 0.89

#### MSE AVM (Purple Bars)

- **XGBoost**: 0.35

- **LGBoost**: 0.60

- **MLP**: 1.00 (highest)

- **MLP Ensemble**: 0.85

#### MSE 1-day STD-DEV (Cyan Bars)

- **XGBoost**: 0.12

- **LGBoost**: 0.15

- **MLP**: 0.35

- **MLP Ensemble**: 0.20

### Key Observations

1. **Kendall Tau Correlation**: All models show strong correlation (0.85 or higher), with XGBoost leading at 0.98 and MLP lowest at 0.85.

2. **MSE AVM**: MLP has the highest error (1.00), while XGBoost performs best (0.35).

3. **MSE 1-day STD-DEV**: XGBoost has the lowest error (0.12), followed by LGBoost (0.15) and MLP Ensemble (0.20); MLP has the highest (0.35).

4. **MLP Ensemble**: Improves on the single MLP on all three metrics (Kendall Tau 0.89 vs. 0.85, MSE AVM 0.85 vs. 1.00, MSE 1-day STD-DEV 0.20 vs. 0.35) but trails XGBoost and LGBoost on each.
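
The observations above can be cross-checked against the transcribed bar labels. The sketch below is illustrative: the dictionary keys and the `rank_models` helper are hypothetical names, and the numbers are the values read off the chart, not recomputed results.

```python
# Bar labels as transcribed from the chart (not recomputed).
METRICS = {
    "XGBoost":      {"kendall_tau": 0.98, "mse_avm": 0.35, "mse_1day_std": 0.12},
    "LGBoost":      {"kendall_tau": 0.94, "mse_avm": 0.60, "mse_1day_std": 0.15},
    "MLP":          {"kendall_tau": 0.85, "mse_avm": 1.00, "mse_1day_std": 0.35},
    "MLP Ensemble": {"kendall_tau": 0.89, "mse_avm": 0.85, "mse_1day_std": 0.20},
}

# Correlation is better when higher; both MSE metrics are better when lower.
HIGHER_IS_BETTER = {"kendall_tau": True, "mse_avm": False, "mse_1day_std": False}


def rank_models(metric):
    """Return model names ordered from best to worst on the given metric."""
    return sorted(
        METRICS,
        key=lambda model: METRICS[model][metric],
        reverse=HIGHER_IS_BETTER[metric],
    )


for metric in HIGHER_IS_BETTER:
    print(metric, "->", rank_models(metric))
```

With these values, all three metrics produce the same ordering: XGBoost, LGBoost, MLP Ensemble, MLP.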

### Interpretation

- **Model Performance**: XGBoost leads on all three metrics, with the highest correlation and the lowest errors. MLP has the lowest correlation (0.85) and the highest MSE AVM (1.00), which may indicate overfitting or instability, although the chart alone cannot confirm the cause.

- **MLP Ensemble**: Performs better than the individual MLP on all three metrics but remains behind XGBoost and LGBoost on each, so ensembling narrows the gap to the boosted models without closing it.

- **Anomalies**: MLP’s MSE AVM (1.00) is nearly three times XGBoost’s (0.35), while its Kendall Tau (0.85) is only 0.13 lower. MLP preserves the ranking far better than the absolute values, which suggests a possible calibration issue.

- **Practical Implications**: XGBoost and LGBoost are the strongest choices on every metric shown. MLP Ensemble is preferable to a single MLP, but the chart shows no metric on which it outperforms the boosted models.