# Technical Analysis: BF16 vs. FP8 Training Loss on DeepSeek-V2

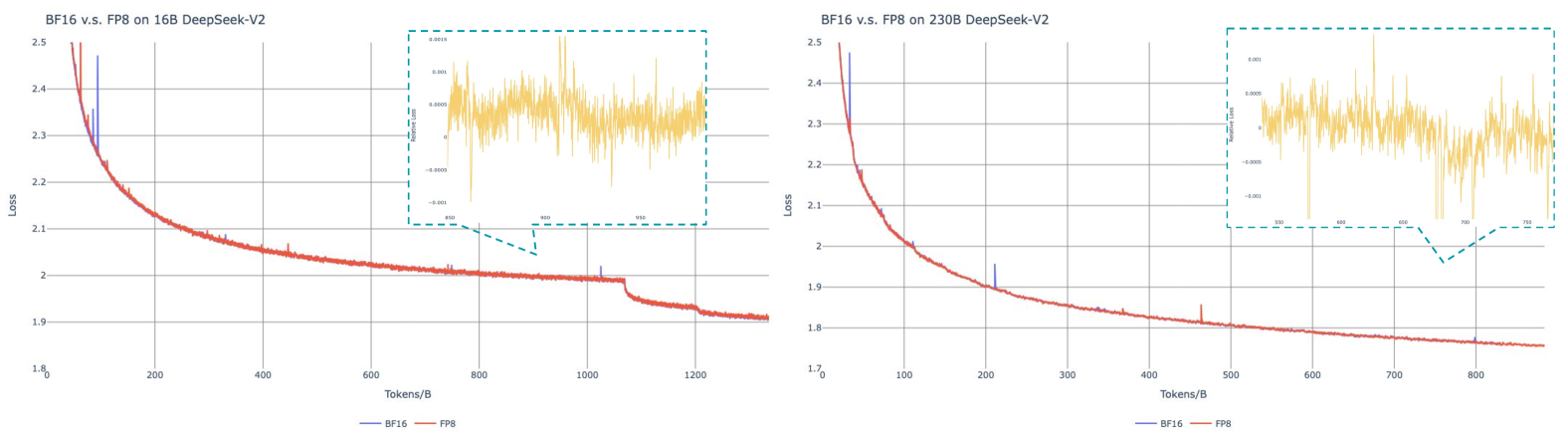

This document extracts the data and trends from two side-by-side line charts. They compare the training loss of DeepSeek-V2 models at two scales, each trained in two numerical formats: **BF16** (blue) and **FP8** (red).

---

## 1. General Layout and Components

The image consists of two primary panels, each containing a main line chart and an inset "Relative Loss" chart.

* **Left Panel:** DeepSeek-V2 16B-parameter model.
* **Right Panel:** DeepSeek-V2 230B-parameter model.
* **Legend (Common):** Located at the bottom center of each panel.
  * **Blue Line:** BF16
  * **Red Line:** FP8
* **X-Axis (Common):** Tokens/B (tokens in billions).
* **Y-Axis (Common):** Loss.

---

## 2. Left Chart: BF16 vs. FP8 on 16B DeepSeek-V2

### Main Chart Data

* **X-Axis Range:** 0 to ~1350 Tokens/B, with tick marks every 200 (0, 200, 400, 600, 800, 1000, 1200).
* **Y-Axis Range:** 1.8 to 2.5, with tick marks every 0.1.
* **Trend Analysis:**
  * **BF16 (Blue):** Drops steeply at first, then settles into a gradual decline. Several distinct vertical spikes (transient loss increases) are visible, notably around 100, 350, 750, and 1050 Tokens/B.
  * **FP8 (Red):** Tracks the BF16 curve almost exactly. The red line is drawn on top of the blue line, indicating nearly identical convergence behavior.
  * **Key Observation:** At approximately 1080 Tokens/B, both curves show a sharp step-down in loss, likely caused by a learning rate decay or a change in the training curriculum.
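
To make "spike" concrete, the sketch below flags points whose loss rises noticeably above the recent trend. It is a minimal illustration under stated assumptions: the trailing-median rule, the `window` and `rel_threshold` values, and the helper name `find_loss_spikes` are hypothetical choices, and the curve is synthetic data rather than values read from the chart.

```python
from statistics import median


def find_loss_spikes(tokens_b, losses, window=5, rel_threshold=0.02):
    """Return token positions (billions) where loss jumps above the recent trend.

    Hypothetical rule: a point is a spike when its loss exceeds the median of
    the previous `window` points by more than `rel_threshold` (relative).
    The median keeps one earlier spike from inflating the baseline.
    """
    spikes = []
    for i in range(window, len(losses)):
        baseline = median(losses[i - window:i])
        if losses[i] > baseline * (1 + rel_threshold):
            spikes.append(tokens_b[i])
    return spikes


# Synthetic curve (made up): smooth decay with two injected spikes.
tokens = list(range(0, 1200, 50))  # 0, 50, ..., 1150 Tokens/B
loss = [1.9 + 0.6 / (1 + t / 100) for t in tokens]
loss[7] += 0.15   # spike at 350 Tokens/B
loss[15] += 0.10  # spike at 750 Tokens/B
print(find_loss_spikes(tokens, loss))  # [350, 750]
```

On real, noisy loss logs the threshold would need tuning; too small a value flags ordinary step-to-step noise as spikes.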

### Inset Chart: Relative Loss (16B)

* **Focus Area:** Approximately 850 to 980 Tokens/B.
* **Y-Axis:** Relative Loss (range: -0.001 to 0.0015).
* **Content:** A yellow line shows the relative difference in loss between the two formats. The values oscillate around 0.0005 (about 0.05%), so the typical gap between FP8 and BF16 is well under 0.1%.
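
The chart does not state how relative loss is computed. A natural reading, assumed here, is the pointwise ratio (FP8 loss - BF16 loss) / BF16 loss, so positive values would mean FP8 is slightly worse. The sketch below applies that assumed definition and averages it over a token window; the helper names and all readings are hypothetical, not taken from the chart.

```python
def relative_loss(loss_fp8, loss_bf16):
    """Pointwise (FP8 - BF16) / BF16.

    Assumed definition: the chart gives no formula or sign convention.
    Positive values mean the FP8 loss is higher than the BF16 loss.
    """
    return [(f - b) / b for f, b in zip(loss_fp8, loss_bf16)]


def window_mean(tokens_b, values, start, end):
    """Mean of `values` whose token position lies in [start, end] billions."""
    selected = [v for t, v in zip(tokens_b, values) if start <= t <= end]
    if not selected:
        raise ValueError("no points fall inside the window")
    return sum(selected) / len(selected)


# Made-up readings inside the 16B inset's focus area (850-980 Tokens/B).
tokens = [850, 900, 950, 980]
bf16 = [2.000, 1.990, 1.980, 1.975]
fp8 = [2.001, 1.991, 1.981, 1.976]
rel = relative_loss(fp8, bf16)
print(f"mean relative loss: {window_mean(tokens, rel, 850, 980):.5f}")  # 0.00050
```

With losses near 2.0, an absolute gap of only about 0.001 already produces a relative value near 0.0005, which shows how small the difference in the 16B inset is in absolute terms.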

---

## 3. Right Chart: BF16 vs. FP8 on 230B DeepSeek-V2

### Main Chart Data

* **X-Axis Range:** 0 to ~900 Tokens/B, with tick marks every 100 (0 to 800).
* **Y-Axis Range:** 1.7 to 2.5, with tick marks every 0.1.
* **Trend Analysis:**
  * **BF16 (Blue):** As with the 16B model, loss falls rapidly at first and then declines gradually. Spikes are visible at roughly 50, 210, 340, 480, and 800 Tokens/B.
  * **FP8 (Red):** Overlays the BF16 line closely; the two convergence paths are indistinguishable at the plotted resolution.
  * **Key Observation:** The final loss is lower than that of the 16B model (~1.76 vs. ~1.91) despite fewer training tokens (~900B vs. ~1350B), consistent with the higher capacity of the 230B-parameter model.

### Inset Chart: Relative Loss (230B)

* **Focus Area:** Approximately 550 to 780 Tokens/B.
* **Y-Axis:** Relative Loss (range: -0.001 to 0.001).
* **Content:** The yellow line shows the relative difference in loss. Although it has sharp downward spikes (reaching about -0.001, i.e. -0.1%), its mean stays very close to 0, suggesting even tighter parity between FP8 and BF16 on the larger model.
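
A near-zero mean can coexist with isolated spikes, which is why it helps to report the extremes alongside the mean. The sketch below summarizes a relative-loss series and checks it against a tolerance. The helper name `parity_summary`, the 0.1% tolerance, and the series values are all hypothetical, chosen only to resemble the shape described for the 230B inset.

```python
from statistics import mean


def parity_summary(rel_losses, tol=1e-3):
    """Summarize a relative-loss series and test it against a tolerance.

    `tol` is a hypothetical parity threshold (1e-3 = 0.1%), not a value
    stated in the chart.
    """
    return {
        "mean": mean(rel_losses),
        "min": min(rel_losses),
        "max": max(rel_losses),
        "within_tol": all(abs(r) <= tol for r in rel_losses),
    }


# Made-up series: hovers near zero with one downward spike to -0.001.
series = [0.0001, -0.0001, 0.0002, -0.0010, 0.0001, 0.0000, -0.0001]
print(parity_summary(series))
```

Here the mean is about -0.0001 while the minimum is -0.001. The mean alone would hide the spike, and the extremes alone would overstate the typical gap.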

---

## 4. Summary of Technical Findings

| Feature | 16B Model Observation | 230B Model Observation |
| :--- | :--- | :--- |
| **Convergence** | Nearly identical for BF16 and FP8 | Nearly identical for BF16 and FP8 |
| **Final Loss** | ~1.91 | ~1.76 |
| **Stability** | Occasional spikes in both formats | Occasional spikes in both formats |
| **Relative Loss (inset)** | Centered near 0.0005 | Centered near 0.0000 |

**Conclusion:** The data show that training DeepSeek-V2 with **FP8** precision closely matches **BF16** training at both the small (16B) and large (230B) scales. The loss curves overlap, and the relative loss differences are on the order of 0.1% or smaller. FP8 likely also offers computational efficiency gains, but these are not charted here.