
## Bar Charts: Model Performance Comparison (GPT-3.5 vs. GPT-4)

### Overview

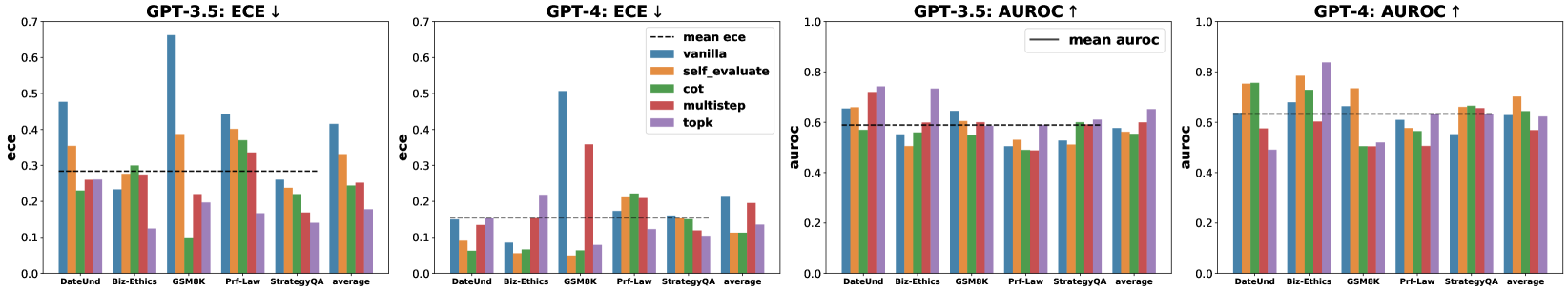

The image presents four bar charts comparing GPT-3.5 and GPT-4 on five datasets (DateInd, Bio-Ethics, GSM8K, Prt-Law, StrategyQA), plus their average, using two metrics: Expected Calibration Error (ECE, lower is better) and Area Under the Receiver Operating Characteristic curve (AUROC, higher is better). Each chart shows five prompting strategies: vanilla, self-evaluate, CoT (Chain-of-Thought), multistep, and topk. The charts are arranged horizontally in this order: GPT-3.5 ECE, GPT-4 ECE, GPT-3.5 AUROC, GPT-4 AUROC.
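To make the ECE metric concrete, here is a minimal sketch of the common equal-width binned estimator. The figure does not say how its ECE values were computed, so the function name `expected_calibration_error`, the choice of 10 bins, and the toy inputs are all assumptions for illustration.

```python
import numpy as np

def expected_calibration_error(confidences, correct, n_bins=10):
    """Binned ECE: weighted mean of |accuracy - mean confidence| over bins.

    Illustrative helper (assumed, not taken from the figure's source).
    confidences: stated confidence per answer, in [0, 1]
    correct:     1 if the answer was right, 0 otherwise
    """
    confidences = np.asarray(confidences, dtype=float)
    correct = np.asarray(correct, dtype=float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    # Map each confidence to a bin index in 0..n_bins-1 using the interior edges.
    bin_ids = np.clip(np.digitize(confidences, edges[1:-1], right=True), 0, n_bins - 1)
    ece = 0.0
    for b in range(n_bins):
        mask = bin_ids == b
        if mask.any():
            gap = abs(correct[mask].mean() - confidences[mask].mean())
            ece += mask.mean() * gap  # weight the gap by the fraction of samples in the bin
    return float(ece)

# Toy data: two confident answers (both right), two less confident (one right).
toy_ece = expected_calibration_error([0.95, 0.95, 0.55, 0.55], [1, 1, 1, 0])
```

In this toy case each bin's accuracy differs from its mean confidence by 0.05, so the ECE is about 0.05. A value of 0 would mean stated confidence matches observed accuracy in every bin.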

### Components/Axes

* **X-axis:** Datasets - DateInd, Bio-Ethics, GSM8K, Prt-Law, StrategyQA, average.

* **Y-axis (Charts 1 & 2):** ECE (Expected Calibration Error) - Scale from 0.0 to 0.7.

* **Y-axis (Charts 3 & 4):** AUROC (Area Under the Receiver Operating Characteristic curve) - Scale from 0.2 to 1.0.

* **Legend:**

  * Vanilla (Blue)

  * Self-evaluate (Orange)

  * CoT (Green)

  * Multistep (Red)

  * Topk (Purple)

  * Mean ECE (Charts 1 & 2) - Dashed Black Line

  * Mean AUROC (Charts 3 & 4) - Solid Black Line

### Detailed Analysis

**Chart 1: GPT-3.5 ECE**

* **Vanilla:** DateInd (~0.28), Bio-Ethics (~0.52), GSM8K (~0.18), Prt-Law (~0.25), StrategyQA (~0.28), average (~0.30).

* **Self-evaluate:** DateInd (~0.22), Bio-Ethics (~0.45), GSM8K (~0.15), Prt-Law (~0.20), StrategyQA (~0.24), average (~0.25).

* **CoT:** DateInd (~0.18), Bio-Ethics (~0.38), GSM8K (~0.12), Prt-Law (~0.18), StrategyQA (~0.20), average (~0.21).

* **Multistep:** DateInd (~0.20), Bio-Ethics (~0.40), GSM8K (~0.14), Prt-Law (~0.21), StrategyQA (~0.22), average (~0.23).

* **Topk:** DateInd (~0.24), Bio-Ethics (~0.48), GSM8K (~0.16), Prt-Law (~0.23), StrategyQA (~0.26), average (~0.27).

* **Mean ECE:** Approximately 0.32.
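As a quick consistency check, the per-strategy "average" entries for Chart 1 can be recomputed from the five dataset readings listed above. This is only a sketch over the approximate values read from the chart; the variable names are illustrative.

```python
# Approximate Chart 1 (GPT-3.5 ECE) readings, in the order
# DateInd, Bio-Ethics, GSM8K, Prt-Law, StrategyQA.
chart1_ece = {
    "vanilla":       [0.28, 0.52, 0.18, 0.25, 0.28],
    "self-evaluate": [0.22, 0.45, 0.15, 0.20, 0.24],
    "cot":           [0.18, 0.38, 0.12, 0.18, 0.20],
    "multistep":     [0.20, 0.40, 0.14, 0.21, 0.22],
    "topk":          [0.24, 0.48, 0.16, 0.23, 0.26],
}

def dataset_average(values):
    """Plain arithmetic mean across the five datasets."""
    return sum(values) / len(values)

# Per-strategy averages, rounded to two decimals like the listed values.
averages = {name: round(dataset_average(v), 2) for name, v in chart1_ece.items()}

# Mean of the five per-strategy averages.
mean_of_averages = round(
    sum(dataset_average(v) for v in chart1_ece.values()) / len(chart1_ece), 2
)
```

The recomputed averages match the listed ones (0.30, 0.25, 0.21, 0.23, 0.27). Their mean is about 0.25, lower than the roughly 0.32 read from the dashed line, which suggests the approximate readings and the dashed-line value should not be treated as exact.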

**Chart 2: GPT-4 ECE**

* **Vanilla:** DateInd (~0.15), Bio-Ethics (~0.35), GSM8K (~0.10), Prt-Law (~0.15), StrategyQA (~0.18), average (~0.19).

* **Self-evaluate:** DateInd (~0.12), Bio-Ethics (~0.30), GSM8K (~0.08), Prt-Law (~0.12), StrategyQA (~0.15), average (~0.15).

* **CoT:** DateInd (~0.10), Bio-Ethics (~0.25), GSM8K (~0.07), Prt-Law (~0.10), StrategyQA (~0.13), average (~0.13).

* **Multistep:** DateInd (~0.11), Bio-Ethics (~0.28), GSM8K (~0.08), Prt-Law (~0.11), StrategyQA (~0.14), average (~0.14).

* **Topk:** DateInd (~0.13), Bio-Ethics (~0.32), GSM8K (~0.09), Prt-Law (~0.13), StrategyQA (~0.16), average (~0.17).

* **Mean ECE:** Approximately 0.22.

**Chart 3: GPT-3.5 AUROC**

* **Vanilla:** DateInd (~0.55), Bio-Ethics (~0.55), GSM8K (~0.52), Prt-Law (~0.60), StrategyQA (~0.65), average (~0.57).

* **Self-evaluate:** DateInd (~0.60), Bio-Ethics (~0.60), GSM8K (~0.55), Prt-Law (~0.65), StrategyQA (~0.70), average (~0.62).

* **CoT:** DateInd (~0.65), Bio-Ethics (~0.65), GSM8K (~0.58), Prt-Law (~0.70), StrategyQA (~0.75), average (~0.67).

* **Multistep:** DateInd (~0.62), Bio-Ethics (~0.62), GSM8K (~0.56), Prt-Law (~0.68), StrategyQA (~0.72), average (~0.64).

* **Topk:** DateInd (~0.58), Bio-Ethics (~0.58), GSM8K (~0.54), Prt-Law (~0.62), StrategyQA (~0.68), average (~0.60).

* **Mean AUROC:** Approximately 0.64.

**Chart 4: GPT-4 AUROC**

* **Vanilla:** DateInd (~0.65), Bio-Ethics (~0.70), GSM8K (~0.60), Prt-Law (~0.75), StrategyQA (~0.80), average (~0.70).

* **Self-evaluate:** DateInd (~0.70), Bio-Ethics (~0.75), GSM8K (~0.65), Prt-Law (~0.80), StrategyQA (~0.85), average (~0.75).

* **CoT:** DateInd (~0.75), Bio-Ethics (~0.80), GSM8K (~0.70), Prt-Law (~0.85), StrategyQA (~0.90), average (~0.80).

* **Multistep:** DateInd (~0.72), Bio-Ethics (~0.78), GSM8K (~0.68), Prt-Law (~0.82), StrategyQA (~0.87), average (~0.77).

* **Topk:** DateInd (~0.68), Bio-Ethics (~0.72), GSM8K (~0.62), Prt-Law (~0.78), StrategyQA (~0.82), average (~0.72).

* **Mean AUROC:** Approximately 0.78.

### Key Observations

* GPT-4 shows lower ECE and higher AUROC than GPT-3.5 for every dataset and prompting strategy.

* For both models, CoT yields the lowest ECE and the highest AUROC on every dataset, while vanilla prompting yields the highest ECE and the lowest AUROC.

* Bio-Ethics shows the highest ECE of any dataset for both models and every strategy (roughly 0.38 to 0.52 for GPT-3.5 and 0.25 to 0.35 for GPT-4), indicating poorer calibration on this dataset.

* StrategyQA shows the highest AUROC of any dataset (roughly 0.65 to 0.75 for GPT-3.5 and 0.80 to 0.90 for GPT-4), suggesting that confidence scores separate correct from incorrect answers best on this dataset.

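* Averaged across datasets, GPT-4 improves on GPT-3.5 for every strategy: ECE falls from roughly 0.21 to 0.30 down to 0.13 to 0.19, and AUROC rises from roughly 0.57 to 0.67 up to 0.70 to 0.80.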

### Interpretation

The data suggests that GPT-4 produces more reliable confidence estimates than GPT-3.5. Its lower ECE indicates that stated confidence is better aligned with actual accuracy, and its higher AUROC indicates better discrimination between correct and incorrect predictions. Both metrics evaluate the quality of confidence estimates rather than task accuracy itself, so the charts alone do not show which model answers more questions correctly. CoT prompting appears particularly effective for both models, possibly because it encourages step-by-step reasoning before a confidence is stated. The variation across datasets highlights the importance of considering the characteristics of the data when evaluating and deploying these models. The consistently high ECE on Bio-Ethics suggests that this domain is especially hard to calibrate on, potentially because of the nuanced nature of ethical reasoning. Overall, both the newer model and the choice of prompting strategy are associated with more reliable confidence estimates.
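The "discrimination between correct and incorrect predictions" that AUROC measures can be illustrated with a small pairwise sketch: the fraction of (correct, incorrect) answer pairs in which the correct answer received the higher confidence, with ties counted as half. The function name `auroc` and the toy inputs are assumptions for illustration, not the procedure used to produce the charts.

```python
import numpy as np

def auroc(confidences, correct):
    """Pairwise AUROC of confidence as a predictor of correctness.

    Illustrative helper (assumed, not taken from the figure's source).
    Returns the probability that a randomly chosen correct answer has
    higher confidence than a randomly chosen incorrect one (ties count 0.5).
    """
    confidences = np.asarray(confidences, dtype=float)
    correct = np.asarray(correct, dtype=bool)
    pos = confidences[correct]    # confidences of correct answers
    neg = confidences[~correct]   # confidences of incorrect answers
    if len(pos) == 0 or len(neg) == 0:
        raise ValueError("AUROC needs at least one correct and one incorrect answer")
    diff = pos[:, None] - neg[None, :]  # all correct/incorrect pairs
    wins = (diff > 0).sum() + 0.5 * (diff == 0).sum()
    return float(wins / (len(pos) * len(neg)))

# Toy data: one correct answer (0.4) is ranked below one incorrect answer (0.6).
toy_auroc = auroc([0.9, 0.4, 0.6, 0.2], [1, 1, 0, 0])
```

Here three of the four correct/incorrect pairs are ordered properly, giving 0.75. A value of 0.5 corresponds to confidence that carries no information about correctness, which is why the GPT-3.5 vanilla readings near 0.52 to 0.55 indicate weak discrimination.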