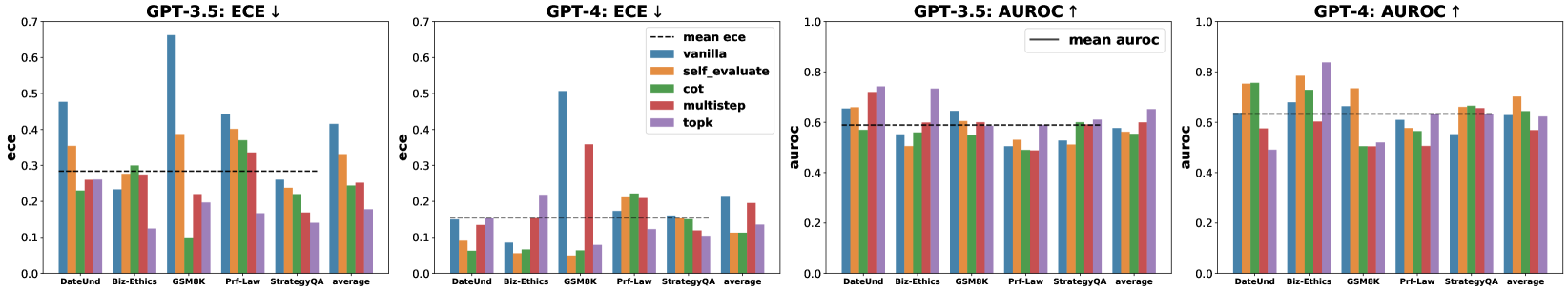

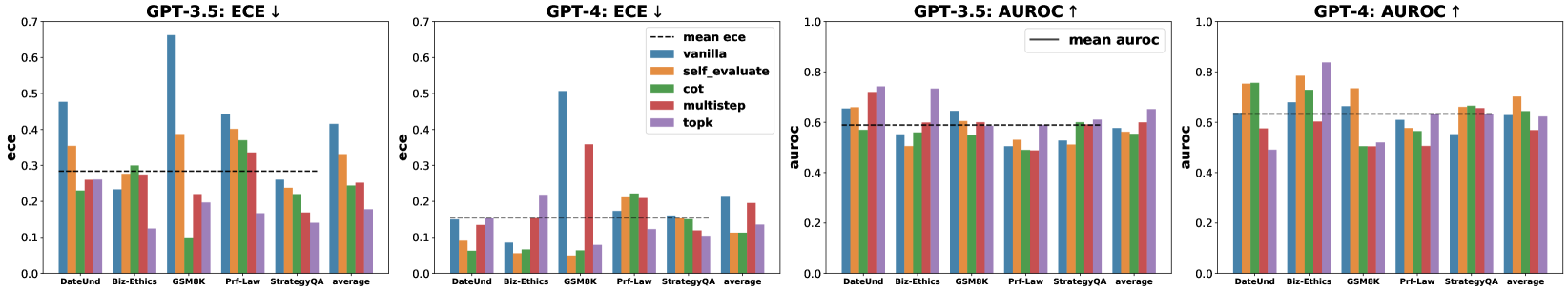

## Bar Charts: Model Calibration (ECE) and Performance (AUROC) Across Tasks

### Overview

The image shows four grouped bar charts arranged horizontally, comparing two large language models (GPT-3.5 and GPT-4) on five tasks plus their average. The charts report two metrics: Expected Calibration Error (ECE, lower is better) and Area Under the Receiver Operating Characteristic curve (AUROC, higher is better). Each chart compares five prompting methods, with `vanilla` serving as the baseline for the other four.
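To make the two metrics concrete, here is a minimal sketch of how ECE and AUROC can be computed from per-answer confidences and correctness labels. The function names, the 10-bin equal-width binning, and the toy inputs are assumptions chosen for illustration; the figure does not state how its values were computed.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Equal-width binned ECE: size-weighted mean of |accuracy - mean confidence|."""
    n = len(confidences)
    bins = [[] for _ in range(n_bins)]
    for conf, is_correct in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 falls in the last bin
        bins[idx].append((conf, is_correct))
    ece = 0.0
    for members in bins:
        if not members:
            continue
        mean_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(k for _, k in members) / len(members)
        ece += len(members) / n * abs(accuracy - mean_conf)
    return ece


def auroc(confidences, correct):
    """Chance that a random correct answer outranks a random incorrect one (ties count 0.5)."""
    pos = [c for c, k in zip(confidences, correct) if k]
    neg = [c for c, k in zip(confidences, correct) if not k]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one correct and one incorrect answer")
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical toy data: four answers with stated confidence and 0/1 correctness.
toy_conf = [0.95, 0.85, 0.75, 0.65]
toy_correct = [1, 1, 0, 0]
print(expected_calibration_error(toy_conf, toy_correct))
print(auroc(toy_conf, toy_correct))
```

In this toy case the confidences rank every correct answer above every incorrect one, so AUROC is perfect even though the ECE is large. The two metrics capture different properties, which is why the figure reports both.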

### Components/Axes

* **Titles (Top of each chart):**
    * Chart 1 (Left): `GPT-3.5: ECE ↓`
    * Chart 2 (Center-Left): `GPT-4: ECE ↓`
    * Chart 3 (Center-Right): `GPT-3.5: AUROC ↑`
    * Chart 4 (Right): `GPT-4: AUROC ↑`

* **Y-Axis Labels:**
    * Charts 1 & 2: `ece` (scale: 0.0 to 0.7)
    * Charts 3 & 4: `auroc` (scale: 0.0 to 1.0)

* **X-Axis Categories (Common to all charts):** `DateUnd`, `Biz-Ethics`, `GSM8K`, `Prf-Law`, `StrategyQA`, `average`.

* **Legend (Located in the top-right of the second chart, applies to all):**
    * `--- mean ece` (dashed black line, present in ECE charts)
    * `— mean auroc` (solid black line, present in AUROC charts)
    * `vanilla` (Blue bar)
    * `self_evaluate` (Orange bar)
    * `cot` (Green bar)
    * `multistep` (Red bar)
    * `topk` (Purple bar)

### Detailed Analysis

#### Chart 1: GPT-3.5: ECE ↓

* **Trend:** The `vanilla` method (blue) shows the highest ECE (worst calibration) on four of the five tasks (all but `Biz-Ethics`), peaking at ~0.66 on `GSM8K`. The `mean ece` line sits at approximately 0.28.

* **Data Points (Approximate ECE values):**
    * **DateUnd:** Vanilla ~0.48, Self_evaluate ~0.35, Cot ~0.23, Multistep ~0.26, Topk ~0.25.
    * **Biz-Ethics:** Vanilla ~0.23, Self_evaluate ~0.25, Cot ~0.30, Multistep ~0.28, Topk ~0.13.
    * **GSM8K:** Vanilla ~0.66, Self_evaluate ~0.39, Cot ~0.10, Multistep ~0.22, Topk ~0.20.
    * **Prf-Law:** Vanilla ~0.45, Self_evaluate ~0.40, Cot ~0.37, Multistep ~0.34, Topk ~0.17.
    * **StrategyQA:** Vanilla ~0.26, Self_evaluate ~0.24, Cot ~0.22, Multistep ~0.19, Topk ~0.14.
    * **Average:** Vanilla ~0.42, Self_evaluate ~0.33, Cot ~0.25, Multistep ~0.26, Topk ~0.18.
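As a consistency check on these approximate readings, the sketch below recomputes the `Average` group and the `mean ece` line from the per-task values. It assumes that `average` is the unweighted mean over the five tasks and that the dashed line is the mean of the five method averages; the figure does not confirm either assumption.

```python
METHODS = ["vanilla", "self_evaluate", "cot", "multistep", "topk"]

# Approximate GPT-3.5 ECE readings from Chart 1, in METHODS order.
ECE_GPT35 = {
    "DateUnd":    [0.48, 0.35, 0.23, 0.26, 0.25],
    "Biz-Ethics": [0.23, 0.25, 0.30, 0.28, 0.13],
    "GSM8K":      [0.66, 0.39, 0.10, 0.22, 0.20],
    "Prf-Law":    [0.45, 0.40, 0.37, 0.34, 0.17],
    "StrategyQA": [0.26, 0.24, 0.22, 0.19, 0.14],
}

# Unweighted mean over tasks for each method (assumed definition of "average").
method_means = {
    method: sum(values[i] for values in ECE_GPT35.values()) / len(ECE_GPT35)
    for i, method in enumerate(METHODS)
}

# Mean over the five method averages (assumed definition of the dashed line).
overall_mean = sum(method_means.values()) / len(method_means)

for method, value in method_means.items():
    print(f"{method:14s} {value:.2f}")
print(f"{'mean ece':14s} {overall_mean:.2f}")
```

Under these assumptions the recomputed values agree with the reported `Average` bars to within about 0.01 and with the `mean ece` line at ~0.28, so the readings are at least internally consistent.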

#### Chart 2: GPT-4: ECE ↓

* **Trend:** ECE values are lower overall than for GPT-3.5, indicating better calibration. The `vanilla` method again shows its highest ECE on `GSM8K` (~0.51), and `multistep` is also elevated there (~0.36). The `mean ece` line is at approximately 0.16.

* **Data Points (Approximate ECE values):**
    * **DateUnd:** Vanilla ~0.15, Self_evaluate ~0.09, Cot ~0.06, Multistep ~0.14, Topk ~0.14.
    * **Biz-Ethics:** Vanilla ~0.08, Self_evaluate ~0.06, Cot ~0.07, Multistep ~0.16, Topk ~0.22.
    * **GSM8K:** Vanilla ~0.51, Self_evaluate ~0.05, Cot ~0.06, Multistep ~0.36, Topk ~0.08.
    * **Prf-Law:** Vanilla ~0.17, Self_evaluate ~0.22, Cot ~0.21, Multistep ~0.21, Topk ~0.12.
    * **StrategyQA:** Vanilla ~0.16, Self_evaluate ~0.15, Cot ~0.15, Multistep ~0.12, Topk ~0.11.
    * **Average:** Vanilla ~0.21, Self_evaluate ~0.11, Cot ~0.11, Multistep ~0.20, Topk ~0.14.

#### Chart 3: GPT-3.5: AUROC ↑

* **Trend:** AUROC varies little across methods, with all readings between ~0.49 and ~0.74. `topk` (purple) has the highest average (~0.66) and leads, sometimes narrowly, on `DateUnd`, `Biz-Ethics`, and `Prf-Law`. The `mean auroc` line is at approximately 0.59.

* **Data Points (Approximate AUROC values):**
    * **DateUnd:** Vanilla ~0.66, Self_evaluate ~0.67, Cot ~0.59, Multistep ~0.73, Topk ~0.74.
    * **Biz-Ethics:** Vanilla ~0.55, Self_evaluate ~0.52, Cot ~0.60, Multistep ~0.60, Topk ~0.73.
    * **GSM8K:** Vanilla ~0.65, Self_evaluate ~0.60, Cot ~0.56, Multistep ~0.60, Topk ~0.60.
    * **Prf-Law:** Vanilla ~0.51, Self_evaluate ~0.50, Cot ~0.49, Multistep ~0.49, Topk ~0.52.
    * **StrategyQA:** Vanilla ~0.52, Self_evaluate ~0.53, Cot ~0.60, Multistep ~0.61, Topk ~0.60.
    * **Average:** Vanilla ~0.58, Self_evaluate ~0.56, Cot ~0.57, Multistep ~0.61, Topk ~0.66.

#### Chart 4: GPT-4: AUROC ↑

* **Trend:** The `mean auroc` line is at approximately 0.63, modestly higher than for GPT-3.5 (~0.59), though not every method improves: `multistep` and `topk` average lower than they do for GPT-3.5. `self_evaluate` (orange) has the highest average (~0.70), and `cot` (green) is strong on `DateUnd` and `Biz-Ethics` but drops to ~0.51 on `GSM8K`.

* **Data Points (Approximate AUROC values):**
    * **DateUnd:** Vanilla ~0.63, Self_evaluate ~0.75, Cot ~0.76, Multistep ~0.58, Topk ~0.49.
    * **Biz-Ethics:** Vanilla ~0.65, Self_evaluate ~0.78, Cot ~0.73, Multistep ~0.60, Topk ~0.84.
    * **GSM8K:** Vanilla ~0.66, Self_evaluate ~0.73, Cot ~0.51, Multistep ~0.51, Topk ~0.52.
    * **Prf-Law:** Vanilla ~0.61, Self_evaluate ~0.57, Cot ~0.60, Multistep ~0.51, Topk ~0.64.
    * **StrategyQA:** Vanilla ~0.55, Self_evaluate ~0.65, Cot ~0.67, Multistep ~0.65, Topk ~0.64.
    * **Average:** Vanilla ~0.63, Self_evaluate ~0.70, Cot ~0.65, Multistep ~0.57, Topk ~0.63.

### Key Observations

1. **Calibration (ECE):** GPT-4 is substantially better calibrated than GPT-3.5: its ECE is lower in 23 of the 25 task-method pairs, the exceptions being `topk` on `Biz-Ethics` and `multistep` on `GSM8K`. The `vanilla` prompting method is poorly calibrated for mathematical reasoning (`GSM8K`) in both models (~0.66 for GPT-3.5, ~0.51 for GPT-4).

2. **Performance (AUROC):** GPT-4 achieves a modestly higher AUROC than GPT-3.5 on average (~0.63 versus ~0.59). The `topk` method is the top performer for GPT-3.5 (~0.66 average), while `self_evaluate` leads for GPT-4 (~0.70 average), followed by `cot` (~0.65).

3. **Task Variability:** Calibration and AUROC vary greatly by task. `GSM8K` (math) is a notable outlier for poor calibration with vanilla prompting. `Prf-Law` is challenging on both metrics: its ECE is comparatively high for both models, GPT-3.5's AUROC there is ~0.5 for every method (chance level), and GPT-4 reaches only ~0.51 to ~0.64.

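4. **Method Impact:** The non-baseline methods (`cot`, `self_evaluate`, `topk`, `multistep`) lower the average ECE relative to `vanilla` prompting for both models, most clearly for GPT-3.5 (from ~0.42 down to between ~0.18 and ~0.33). For GPT-4, `multistep` is nearly unchanged (~0.20 versus ~0.21). Their impact on AUROC is more task- and model-dependent.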
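The calibration comparison between the two models can be checked cell by cell. The sketch below uses the approximate ECE readings listed above and finds the task-method pairs where GPT-4 is not better calibrated than GPT-3.5. The variable and function names are illustrative, and the conclusion is only as reliable as the bar readings.

```python
METHODS = ["vanilla", "self_evaluate", "cot", "multistep", "topk"]

# Approximate ECE readings from Charts 1 and 2, in METHODS order.
ECE_GPT35 = {
    "DateUnd":    [0.48, 0.35, 0.23, 0.26, 0.25],
    "Biz-Ethics": [0.23, 0.25, 0.30, 0.28, 0.13],
    "GSM8K":      [0.66, 0.39, 0.10, 0.22, 0.20],
    "Prf-Law":    [0.45, 0.40, 0.37, 0.34, 0.17],
    "StrategyQA": [0.26, 0.24, 0.22, 0.19, 0.14],
}
ECE_GPT4 = {
    "DateUnd":    [0.15, 0.09, 0.06, 0.14, 0.14],
    "Biz-Ethics": [0.08, 0.06, 0.07, 0.16, 0.22],
    "GSM8K":      [0.51, 0.05, 0.06, 0.36, 0.08],
    "Prf-Law":    [0.17, 0.22, 0.21, 0.21, 0.12],
    "StrategyQA": [0.16, 0.15, 0.15, 0.12, 0.11],
}


def cells_where_gpt4_is_not_better(gpt35, gpt4):
    """Return (task, method) pairs where GPT-4's ECE is not lower than GPT-3.5's."""
    exceptions = []
    for task in gpt35:
        for method, old, new in zip(METHODS, gpt35[task], gpt4[task]):
            if new >= old:
                exceptions.append((task, method))
    return exceptions


exceptions = cells_where_gpt4_is_not_better(ECE_GPT35, ECE_GPT4)
total_cells = len(ECE_GPT35) * len(METHODS)
print(f"GPT-4 has lower ECE in {total_cells - len(exceptions)} of {total_cells} cells")
print("Exceptions:", exceptions)
```

On these readings, GPT-4 has the lower ECE in 23 of the 25 cells; the two exceptions are `topk` on `Biz-Ethics` and `multistep` on `GSM8K`.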

### Interpretation

These results suggest an improvement from GPT-3.5 to GPT-4 in both **reliability** (better calibration, meaning its stated confidence tracks its accuracy more closely) and **discriminative power** (higher AUROC, meaning its confidence better separates correct from incorrect answers), although the AUROC gain is modest (~0.59 to ~0.63 on average).

The poor calibration of `vanilla` prompting on `GSM8K` is consistent with the models being overconfident in their mathematical reasoning, although ECE alone measures only the size of the gap between confidence and accuracy, not its direction. `self_evaluate` and `cot` reduce this error sharply (for GPT-4, from ~0.51 to ~0.05 and ~0.06), suggesting that prompting the model to reason step by step or to assess its own answer brings its confidence closer to its actual accuracy.
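Because ECE is built from absolute differences, a high value cannot by itself distinguish overconfidence from underconfidence. A signed gap (mean confidence minus accuracy) is one simple way to tell them apart. The sketch below uses hypothetical toy data rather than values from the figure, and the function name is illustrative.

```python
def signed_calibration_gap(confidences, correct):
    """Mean confidence minus accuracy: positive means overconfident, negative underconfident."""
    mean_confidence = sum(confidences) / len(confidences)
    accuracy = sum(correct) / len(correct)
    return mean_confidence - accuracy


# Hypothetical case A: high stated confidence, mostly wrong answers.
gap_over = signed_calibration_gap([0.9, 0.9, 0.9, 0.9], [1, 0, 0, 0])

# Hypothetical case B: low stated confidence, all answers right.
gap_under = signed_calibration_gap([0.35, 0.35, 0.35, 0.35], [1, 1, 1, 1])

print(f"case A gap: {gap_over:+.2f}")
print(f"case B gap: {gap_under:+.2f}")
```

Both toy cases have the same absolute gap of 0.65, so they would yield the same calibration error, but the sign shows that only case A is overconfident. Confirming overconfidence on `GSM8K` would require the underlying confidences, which the figure does not show.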

The variation in which method performs best (e.g., `topk` for GPT-3.5 vs. `self_evaluate` for GPT-4) suggests that the best prompting strategy depends on the model. The consistently weaker results on `Prf-Law` hint at inherent difficulties in the professional law domain for these models, possibly due to complex reasoning or specialized knowledge requirements. The `average` groups provide a useful summary but mask significant task-specific behavior, underscoring the importance of evaluating models across diverse benchmarks.
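The sketch below illustrates how averages can hide task-level differences. It takes the approximate GPT-4 AUROC readings and compares the method with the highest average against the best method on each task. The readings are approximate and the names are illustrative.

```python
METHODS = ["vanilla", "self_evaluate", "cot", "multistep", "topk"]

# Approximate GPT-4 AUROC readings from Chart 4, in METHODS order.
AUROC_GPT4 = {
    "DateUnd":    [0.63, 0.75, 0.76, 0.58, 0.49],
    "Biz-Ethics": [0.65, 0.78, 0.73, 0.60, 0.84],
    "GSM8K":      [0.66, 0.73, 0.51, 0.51, 0.52],
    "Prf-Law":    [0.61, 0.57, 0.60, 0.51, 0.64],
    "StrategyQA": [0.55, 0.65, 0.67, 0.65, 0.64],
}

# Best method on each individual task.
best_per_task = {
    task: max(zip(values, METHODS))[1] for task, values in AUROC_GPT4.items()
}

# Best method by unweighted mean over the five tasks.
method_means = {
    method: sum(values[i] for values in AUROC_GPT4.values()) / len(AUROC_GPT4)
    for i, method in enumerate(METHODS)
}
best_on_average = max(method_means, key=method_means.get)

wins = sum(1 for winner in best_per_task.values() if winner == best_on_average)
print("Best per task:", best_per_task)
print(f"Best on average: {best_on_average}, top method on {wins} of {len(AUROC_GPT4)} tasks")
```

On these readings `self_evaluate` has the highest average AUROC but is the top method on only one task (`GSM8K`); `cot` and `topk` each lead on two.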
