## Bar Charts: GPT-3.5 vs GPT-4 Performance Comparison

### Overview

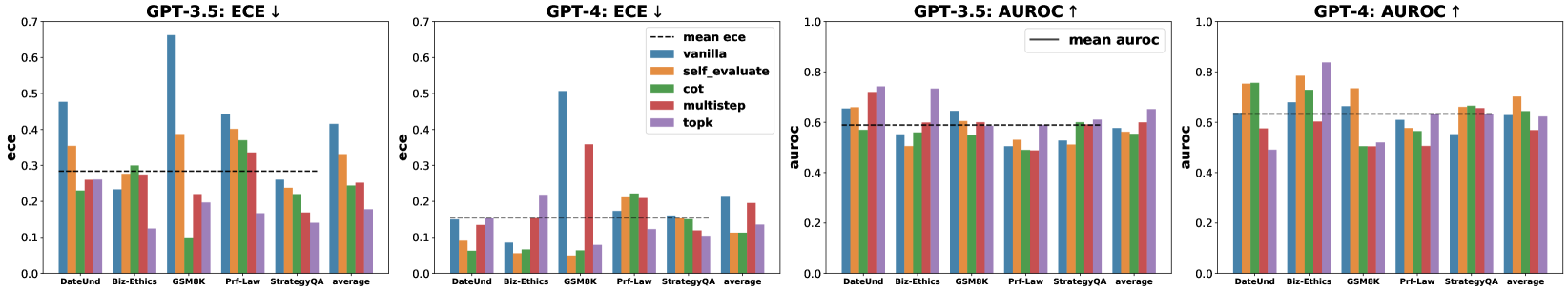

The image contains four grouped bar charts comparing two metrics, expected calibration error (ECE) and AUROC, for GPT-3.5 and GPT-4 across five prompting methods (vanilla, self_evaluate, cot, multistep, topk) and five datasets (DateUnd, Biz-Ethics, GSM8K, Prf-Law, StrategyQA). Each chart also includes a dashed line marking the average across the five methods.

### Components/Axes

- **X-axis**: Datasets (DateUnd, Biz-Ethics, GSM8K, Prf-Law, StrategyQA, average)

- **Y-axis**:
  - Top charts: ECE (0.0–0.7 scale)
  - Bottom charts: AUROC (0.0–1.0 scale)

- **Legend**:
  - Blue: vanilla
  - Orange: self_evaluate
  - Green: cot
  - Red: multistep
  - Purple: topk
  - Dashed black: average

- **Chart Titles**:
  - Top-left: "GPT-3.5: ECE ↓"
  - Top-right: "GPT-4: ECE ↓"
  - Bottom-left: "GPT-3.5: AUROC ↑"
  - Bottom-right: "GPT-4: AUROC ↑"

### Detailed Analysis

#### GPT-3.5 ECE (Top-left)

- **DateUnd**:
  - vanilla: ~0.48
  - self_evaluate: ~0.35
  - cot: ~0.23
  - multistep: ~0.26
  - topk: ~0.25
  - average: ~0.30

- **Biz-Ethics**:
  - vanilla: ~0.23
  - self_evaluate: ~0.28
  - cot: ~0.10
  - multistep: ~0.30
  - topk: ~0.20
  - average: ~0.22

- **GSM8K**:
  - vanilla: ~0.67
  - self_evaluate: ~0.39
  - cot: ~0.10
  - multistep: ~0.35
  - topk: ~0.20
  - average: ~0.34

- **Prf-Law**:
  - vanilla: ~0.45
  - self_evaluate: ~0.40
  - cot: ~0.22
  - multistep: ~0.33
  - topk: ~0.18
  - average: ~0.32

- **StrategyQA**:
  - vanilla: ~0.27
  - self_evaluate: ~0.23
  - cot: ~0.21
  - multistep: ~0.18
  - topk: ~0.14
  - average: ~0.21

#### GPT-4 ECE (Top-right)

- **DateUnd**:
  - vanilla: ~0.15
  - self_evaluate: ~0.12
  - cot: ~0.08
  - multistep: ~0.18
  - topk: ~0.10
  - average: ~0.13

- **Biz-Ethics**:
  - vanilla: ~0.12
  - self_evaluate: ~0.09
  - cot: ~0.06
  - multistep: ~0.15
  - topk: ~0.08
  - average: ~0.10

- **GSM8K**:
  - vanilla: ~0.51
  - self_evaluate: ~0.18
  - cot: ~0.07
  - multistep: ~0.35
  - topk: ~0.12
  - average: ~0.24

- **Prf-Law**:
  - vanilla: ~0.33
  - self_evaluate: ~0.28
  - cot: ~0.15
  - multistep: ~0.30
  - topk: ~0.10
  - average: ~0.21

- **StrategyQA**:
  - vanilla: ~0.19
  - self_evaluate: ~0.16
  - cot: ~0.12
  - multistep: ~0.14
  - topk: ~0.11
  - average: ~0.15

#### GPT-3.5 AUROC (Bottom-left)

- **DateUnd**:
  - vanilla: ~0.65
  - self_evaluate: ~0.67
  - cot: ~0.58
  - multistep: ~0.62
  - topk: ~0.70
  - average: ~0.64

- **Biz-Ethics**:
  - vanilla: ~0.55
  - self_evaluate: ~0.58
  - cot: ~0.45
  - multistep: ~0.60
  - topk: ~0.65
  - average: ~0.58

- **GSM8K**:
  - vanilla: ~0.75
  - self_evaluate: ~0.78
  - cot: ~0.60
  - multistep: ~0.72
  - topk: ~0.80
  - average: ~0.73

- **Prf-Law**:
  - vanilla: ~0.68
  - self_evaluate: ~0.70
  - cot: ~0.55
  - multistep: ~0.65
  - topk: ~0.72
  - average: ~0.67

- **StrategyQA**:
  - vanilla: ~0.62
  - self_evaluate: ~0.64
  - cot: ~0.50
  - multistep: ~0.60
  - topk: ~0.68
  - average: ~0.61

#### GPT-4 AUROC (Bottom-right)

- **DateUnd**:
  - vanilla: ~0.70
  - self_evaluate: ~0.72
  - cot: ~0.65
  - multistep: ~0.75
  - topk: ~0.78
  - average: ~0.71

- **Biz-Ethics**:
  - vanilla: ~0.60
  - self_evaluate: ~0.62
  - cot: ~0.55
  - multistep: ~0.65
  - topk: ~0.68
  - average: ~0.63

- **GSM8K**:
  - vanilla: ~0.80
  - self_evaluate: ~0.82
  - cot: ~0.70
  - multistep: ~0.85
  - topk: ~0.88
  - average: ~0.81

- **Prf-Law**:
  - vanilla: ~0.72
  - self_evaluate: ~0.74
  - cot: ~0.68
  - multistep: ~0.76
  - topk: ~0.79
  - average: ~0.74

- **StrategyQA**:
  - vanilla: ~0.65
  - self_evaluate: ~0.67
  - cot: ~0.58
  - multistep: ~0.66
  - topk: ~0.70
  - average: ~0.66

### Key Observations

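1. **ECE Trends**:
   - GPT-4 shows lower ECE than GPT-3.5 on every dataset and for nearly every method (multistep on GSM8K is level at ~0.35), indicating better calibration.
   - In both models, "cot" has the lowest ECE on DateUnd, Biz-Ethics, and GSM8K, and "topk" has the lowest on Prf-Law and StrategyQA. "multistep" is never the lowest, and the highest ECE on each dataset always belongs to "vanilla" or "multistep".
   - GSM8K has the highest ECE values for both models (vanilla: ~0.67 and ~0.51), suggesting calibration challenges in complex reasoning tasks; "cot" is the exception there, at ~0.10 or below.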

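2. **AUROC Trends**:
   - GPT-4 achieves higher AUROC than GPT-3.5 on every dataset and for every method, indicating better discrimination between correct and incorrect answers.
   - "topk" has the highest AUROC on every dataset in both models, peaking on GSM8K (~0.80 for GPT-3.5, ~0.88 for GPT-4).
   - ECE and AUROC measure different things (lower is better for ECE, higher is better for AUROC), so their magnitudes are not directly comparable. AUROC readings range from ~0.45 to ~0.88, where 0.5 corresponds to chance-level discrimination.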

3. **Average Line**:
   - The dashed black line (average) sits lower for ECE and higher for AUROC in the GPT-4 charts than in the GPT-3.5 charts.
   - Average ECE decreases from ~0.32 (GPT-3.5) to ~0.15 (GPT-4); the per-dataset averages listed under Detailed Analysis give a similar drop, from ~0.28 to ~0.17.
   - Average AUROC increases from ~0.64 (GPT-3.5) to ~0.71 (GPT-4).
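
The overall averages can be cross-checked against the per-dataset "average" readings listed under Detailed Analysis. The following is a minimal sketch, assuming those approximate readings are taken at face value; the `avg_readings` and `overall` names are illustrative only, and because the inputs are eyeballed from the chart, the results need not match the dashed-line values exactly.

```python
from statistics import mean

# Approximate per-dataset "average" readings from the Detailed Analysis lists,
# in the order DateUnd, Biz-Ethics, GSM8K, Prf-Law, StrategyQA.
avg_readings = {
    ("GPT-3.5", "ECE"): [0.30, 0.22, 0.34, 0.32, 0.21],
    ("GPT-4", "ECE"): [0.13, 0.10, 0.24, 0.21, 0.15],
    ("GPT-3.5", "AUROC"): [0.64, 0.58, 0.73, 0.67, 0.61],
    ("GPT-4", "AUROC"): [0.71, 0.63, 0.81, 0.74, 0.66],
}

# Mean over the five datasets for each (model, metric) pair, to two decimals.
overall = {key: round(mean(values), 2) for key, values in avg_readings.items()}

for (model, metric), value in overall.items():
    print(f"{model} {metric}: ~{value:.2f}")
```

With these readings, the mean ECE comes out near 0.28 for GPT-3.5 and 0.17 for GPT-4, and the mean AUROC near 0.65 and 0.71.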

### Interpretation

The data show consistent improvements from GPT-3.5 to GPT-4 in both calibration (ECE) and discrimination (AUROC). The lower ECE indicates closer agreement between the model's stated confidence and its actual accuracy. The higher AUROC indicates a better ability to separate correct from incorrect predictions by confidence.
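
To make the two metrics concrete, here is a minimal sketch of how ECE and AUROC can be computed from per-answer confidences and correctness labels. The chart does not state how its values were computed, so the equal-width binning with 10 bins and the function names below are assumptions for illustration, not the procedure behind the figure.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Equal-width-bin ECE: weighted mean of |accuracy - mean confidence| per bin."""
    n = len(confidences)
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the last bin
        bins[idx].append((conf, ok))
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(ok for _, ok in members) / len(members)
        ece += len(members) / n * abs(accuracy - avg_conf)
    return ece


def auroc(confidences, correct):
    """Chance that a correct answer has higher confidence than an incorrect one
    (ties count as 0.5)."""
    pos = [c for c, ok in zip(confidences, correct) if ok]
    neg = [c for c, ok in zip(confidences, correct) if not ok]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one correct and one incorrect answer")
    wins = sum((p > q) + 0.5 * (p == q) for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


# Overconfident and uninformative: always 0.95 confident, right half the time.
flat_conf, flat_ok = [0.95, 0.95, 0.95, 0.95], [1, 0, 1, 0]
# Confidence that ranks every correct answer above every incorrect one.
ranked_conf, ranked_ok = [0.95, 0.85, 0.35, 0.25], [1, 1, 0, 0]

print(expected_calibration_error(flat_conf, flat_ok), auroc(flat_conf, flat_ok))
print(expected_calibration_error(ranked_conf, ranked_ok), auroc(ranked_conf, ranked_ok))
```

The first toy case gives a high ECE (about 0.45) with chance-level AUROC (0.5). The second gives a lower ECE (about 0.2) with perfect AUROC (1.0), which illustrates that the two metrics capture different properties of the confidence scores.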

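Notable patterns include:

- The "cot" and "topk" methods showing the lowest ECE in both models, with "vanilla" or "multistep" always the worst calibrated

- "topk" method excelling in discrimination (AUROC) on every dataset, most clearly on GSM8K

- GSM8K dataset presenting the greatest calibration challenges for both models

- "cot" trading discrimination for calibration: it has the lowest AUROC on every dataset despite its low ECE

- Self-evaluation ("self_evaluate") sitting mid-pack: above "vanilla" and "cot" in AUROC on every dataset, but with higher ECE than "cot" and "topk"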
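
Method-level patterns like these can be checked against the approximate readings under Detailed Analysis by tabulating them and counting how often each method is best. The sketch below assumes those readings are accurate; `METHODS`, `ECE`, `AUROC`, and `best_method_counts` are illustrative names, not part of the source figure.

```python
from collections import Counter

METHODS = ["vanilla", "self_evaluate", "cot", "multistep", "topk"]

# Approximate readings from the Detailed Analysis lists, ordered as in METHODS.
ECE = {
    "GPT-3.5": {
        "DateUnd": [0.48, 0.35, 0.23, 0.26, 0.25],
        "Biz-Ethics": [0.23, 0.28, 0.10, 0.30, 0.20],
        "GSM8K": [0.67, 0.39, 0.10, 0.35, 0.20],
        "Prf-Law": [0.45, 0.40, 0.22, 0.33, 0.18],
        "StrategyQA": [0.27, 0.23, 0.21, 0.18, 0.14],
    },
    "GPT-4": {
        "DateUnd": [0.15, 0.12, 0.08, 0.18, 0.10],
        "Biz-Ethics": [0.12, 0.09, 0.06, 0.15, 0.08],
        "GSM8K": [0.51, 0.18, 0.07, 0.35, 0.12],
        "Prf-Law": [0.33, 0.28, 0.15, 0.30, 0.10],
        "StrategyQA": [0.19, 0.16, 0.12, 0.14, 0.11],
    },
}
AUROC = {
    "GPT-3.5": {
        "DateUnd": [0.65, 0.67, 0.58, 0.62, 0.70],
        "Biz-Ethics": [0.55, 0.58, 0.45, 0.60, 0.65],
        "GSM8K": [0.75, 0.78, 0.60, 0.72, 0.80],
        "Prf-Law": [0.68, 0.70, 0.55, 0.65, 0.72],
        "StrategyQA": [0.62, 0.64, 0.50, 0.60, 0.68],
    },
    "GPT-4": {
        "DateUnd": [0.70, 0.72, 0.65, 0.75, 0.78],
        "Biz-Ethics": [0.60, 0.62, 0.55, 0.65, 0.68],
        "GSM8K": [0.80, 0.82, 0.70, 0.85, 0.88],
        "Prf-Law": [0.72, 0.74, 0.68, 0.76, 0.79],
        "StrategyQA": [0.65, 0.67, 0.58, 0.66, 0.70],
    },
}


def best_method_counts(table, lower_is_better):
    """Count, over all model/dataset pairs, how often each method is best."""
    pick = min if lower_is_better else max
    counts = Counter()
    for per_dataset in table.values():
        for values in per_dataset.values():
            best = pick(range(len(METHODS)), key=values.__getitem__)
            counts[METHODS[best]] += 1
    return counts


ece_counts = best_method_counts(ECE, lower_is_better=True)
auroc_counts = best_method_counts(AUROC, lower_is_better=False)
print("Lowest ECE:", dict(ece_counts))
print("Highest AUROC:", dict(auroc_counts))
```

On these readings, "cot" has the lowest ECE in six of the ten model/dataset pairs and "topk" in the other four, while "topk" has the highest AUROC in all ten.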

These results suggest that GPT-4 gives more reliable confidence estimates than GPT-3.5, including on complex reasoning tasks such as GSM8K; the charts alone do not show what causes the difference. The variation across methods highlights how much the choice of prompting strategy matters when optimizing LLM outputs for a specific evaluation criterion.