TECHNICAL ASSET FINGERPRINT

5a6205b6a0ff527403a1892a

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

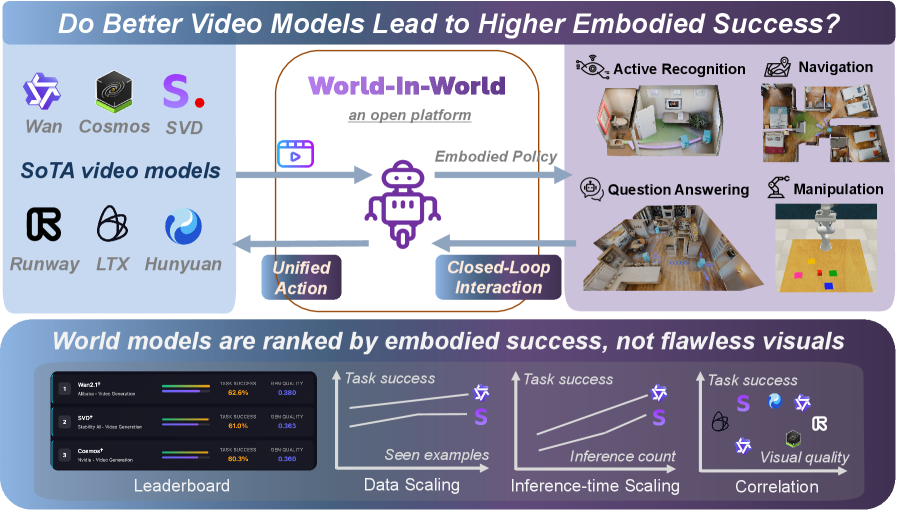

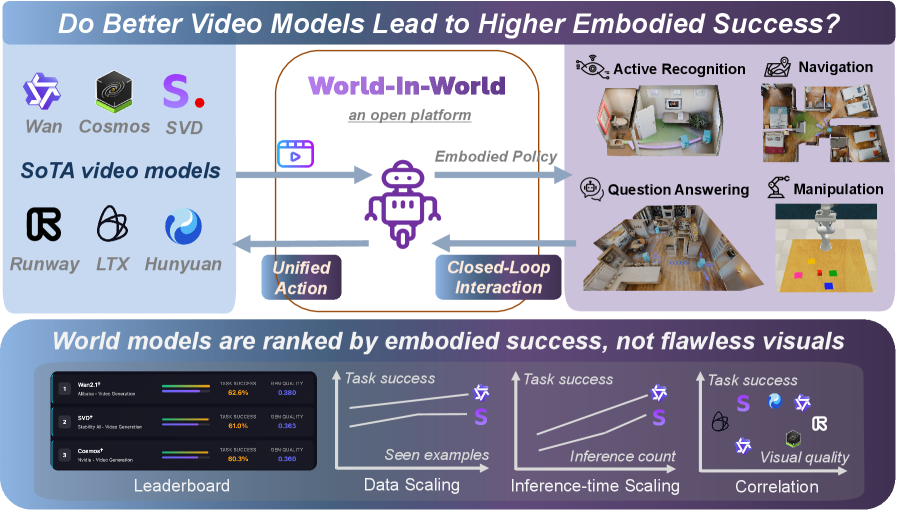

## Diagram: Video Models and Embodied Success

### Overview

The image presents a diagram exploring the relationship between video models and embodied success. It outlines a system called "World-In-World," an open platform, and examines how different video models perform in various tasks. The diagram includes a leaderboard ranking models by embodied success, not visual fidelity, and correlation plots showing the relationship between task success and factors like seen examples, inference count, and visual quality.

### Components/Axes

* **Title:** "Do Better Video Models Lead to Higher Embodied Success?"

* **Central System:** "World-In-World" - described as "an open platform."

* **Embodied Policy:** A central component connecting "Unified Action" and "Closed-Loop Interaction."

* **SoTA video models:**
  * Wan
  * Cosmos
  * SVD
  * Runway
  * LTX
  * Hunyuan

* **Tasks:**
  * Active Recognition (image of a room)
  * Navigation (image of a floor plan)
  * Question Answering (image of a kitchen)
  * Manipulation (image of a robot arm manipulating colored blocks)

* **Leaderboard:** Ranks world models by embodied success.
  * Columns: Rank, Model Name, Task Success, OSN Quality

* **Correlation Plots:** Three plots showing the relationship between Task Success and:
  * Seen examples (Data Scaling)
  * Inference count (Inference-time Scaling)
  * Visual quality (Correlation)

### Detailed Analysis

**1. World-In-World System:**

* The diagram illustrates a flow where video models (SoTA video models) contribute to "Unified Action."

* "Unified Action" and "Closed-Loop Interaction" are connected via "Embodied Policy."

* The system supports tasks like Active Recognition, Navigation, Question Answering, and Manipulation.
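The flow in the list above can be sketched as a minimal closed loop. This is an illustrative sketch only: `StubVideoModel`, `StubEnvironment`, `embodied_policy`, `run_episode`, and the integer encoding of observations and actions are all hypothetical stand-ins, not part of the World-In-World platform or of any listed video model. It also assumes that the policy uses the video model to preview each candidate action before acting, which the diagram does not confirm.

```python
from dataclasses import dataclass


@dataclass
class StubVideoModel:
    """Hypothetical stand-in for a video model behind a unified action interface."""
    name: str

    def predict(self, observation: int, action: int) -> int:
        # Toy "imagined future": the position that would result from the action.
        return observation + action


class StubEnvironment:
    """Toy 1-D world (an assumption): the agent starts at 0 and must reach `goal`."""

    def __init__(self, goal: int = 5) -> None:
        self.goal = goal
        self.position = 0

    def observe(self) -> int:
        return self.position

    def step(self, action: int) -> bool:
        # Apply the action and report whether the goal has been reached.
        self.position += action
        return self.position == self.goal


def embodied_policy(model, observation, goal, actions=(-1, 1)):
    # Pick the action whose predicted outcome lies closest to the goal.
    return min(actions, key=lambda a: abs(goal - model.predict(observation, a)))


def run_episode(model, env, max_steps=20):
    """Closed loop: observe, preview candidate actions, act, then observe again."""
    for step in range(1, max_steps + 1):
        action = embodied_policy(model, env.observe(), env.goal)
        if env.step(action):
            return True, step
    return False, max_steps


success, steps = run_episode(StubVideoModel("stub"), StubEnvironment(goal=5))
print(success, steps)
```

The point of the sketch is the scoring: the episode counts as a success only if the goal is reached, regardless of how the model's predictions look. That mirrors the diagram's emphasis on embodied success over visual fidelity.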

**2. Leaderboard:**

The leaderboard ranks three video models based on "Task Success" and "OSN Quality."

| Rank | Model Name | Task Success | OSN Quality |
|------|------------------------------|--------------|-------------|
| 1 | Wan2.1 Alladin-Video Generation | 62.6% | 0.380 |
| 2 | SVD Stability AI - Video Generation | 61.0% | 0.363 |
| 3 | Cosmos Nvidia - Video Generation | 80.3% | 0.360 |
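Because the leaderboard is described as ranked by task success, one quick sanity check on the transcribed rows is to confirm that task success never increases going down the ranks. The sketch below uses the values exactly as they appear in the table above, with the model names shortened for readability. The helper name `rank_violations` is hypothetical.

```python
# Rows as transcribed in the table above:
# (rank, short model label, task success in %, quality score).
rows = [
    (1, "Wan2.1", 62.6, 0.380),
    (2, "SVD", 61.0, 0.363),
    (3, "Cosmos", 80.3, 0.360),
]


def rank_violations(rows):
    """Return (higher-ranked, lower-ranked) label pairs in which the
    lower-ranked model has a strictly higher task success."""
    ordered = sorted(rows, key=lambda r: r[0])
    return [
        (upper[1], lower[1])
        for upper, lower in zip(ordered, ordered[1:])
        if lower[2] > upper[2]
    ]


violations = rank_violations(rows)
print(violations)
```

A non-empty result, as with the rank 3 row here, suggests that at least one transcribed value or rank is unreliable. Those figures should be checked against the original image before they are cited.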

**3. Correlation Plots:**

* **Data Scaling (Seen examples):**
  * The plot shows "Task success" vs. "Seen examples."
  * The Wan model (represented by its logo) has a higher task success than the SVD model (represented by the letter "S"). Both show a positive correlation.

* **Inference-time Scaling (Inference count):**
  * The plot shows "Task success" vs. "Inference count."
  * The Wan model has a higher task success than the SVD model. Both show a positive correlation.

* **Correlation (Visual quality):**
  * The plot shows "Task success" vs. "Visual quality."
  * The plot shows the Wan model, SVD model, Cosmos model, Runway model, and LTX model.
  * There is no clear linear correlation visible.

### Key Observations

* The "World-In-World" system aims to evaluate video models based on their embodied success in various tasks.

* The leaderboard emphasizes that the ranking is based on embodied success, not visual quality.

* The correlation plots suggest a positive relationship between task success and both "Seen examples" and "Inference count."

* The correlation between "Task success" and "Visual quality" is less clear.

### Interpretation

The diagram suggests that embodied success, measured by performance on active recognition, navigation, question answering, and manipulation, is the key metric for evaluating video models, and that the "World-In-World" platform provides the framework for that assessment. The positive relationship between task success and both "Seen examples" and "Inference count" indicates that models benefit from more data and from more inference-time computation. The lack of a clear correlation between task success and visual quality challenges the assumption that visually impressive models are necessarily more successful in embodied tasks. Other factors, such as a model's ability to understand and interact with the environment, may therefore matter more for embodied success. The leaderboard highlights specific models that perform well in this setting, providing a benchmark for future research and development.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Do Better Video Models Lead to Higher Embodied Success?

### Overview

This diagram illustrates a system called "World-in-World" which evaluates the performance of different SoTA (State-of-the-Art) video models in embodied AI tasks. The diagram shows the flow of information from video models to a closed-loop interaction system, and then to various embodied tasks. A leaderboard and scaling charts are included to show the performance of different models.

### Components/Axes

The diagram is divided into three main sections:

1. **SoTA Video Models:** Lists several video models with their logos.

2. **World-in-World System:** Depicts the core interaction loop.

3. **Performance Evaluation:** Shows a leaderboard and scaling charts.

The diagram includes the following labels:

* **Title:** "Do Better Video Models Lead to Higher Embodied Success?"

* **Sub-title:** "World-in-World, an open platform"

* **SoTA video models:** Wan, Cosmos, SVD, Runway, LTX, Hunyuan

* **Embodied Policy:** A label indicating the policy used by the robot.

* **Unified Action:** A label indicating the action taken by the robot.

* **Closed-Loop Interaction:** A label describing the interaction between the robot and the environment.

* **Embodied Tasks:** Active Recognition, Navigation, Question Answering, Manipulation.

* **Leaderboard:** Lists models and their task success rates.

* **Data Scaling:** Shows task success vs. seen examples.

* **Inference-time Scaling:** Shows task success vs. inference count.

* **Correlation:** Shows task success vs. visual quality.

* **Task Success:** Label for the y-axis of the charts.

* **Seen Examples:** Label for the x-axis of the first chart.

* **Inference Count:** Label for the x-axis of the second chart.

* **Visual Quality:** Label for the x-axis of the third chart.

### Detailed Analysis

**SoTA Video Models:**

The following models are listed with their logos:

* Wan

* Cosmos

* SVD

* Runway

* LTX

* Hunyuan

**World-in-World System:**

A video input (represented by a play button icon) is fed into the "World-in-World" system. This system then generates an "Embodied Policy" which drives a robot to perform a "Unified Action" within a "Closed-Loop Interaction" with the environment.

**Embodied Tasks:**

The output of the system is demonstrated through four embodied tasks:

* **Active Recognition:** Shown with an image of a person looking at a screen.

* **Navigation:** Shown with an image of a robot navigating a room.

* **Question Answering:** Shown with an image of a robot interacting with objects and a screen.

* **Manipulation:** Shown with an image of a robot manipulating colored blocks.

**Leaderboard:**

The leaderboard lists three models and their task success rates:

* **WanTF:** 82.6% Task Success, 0.389 Image Quality

* **LDP:** 61.0% Task Success, 0.365 Image Quality

* **Cosmos:** 60.3% Task Success, 0.369 Image Quality

**Data Scaling:**

The "Data Scaling" chart shows task success increasing with the number of "Seen Examples". The lines are colored:

* **S (Blue):** Line slopes upward, starting at approximately 50% task success with 0 seen examples and reaching approximately 85% task success with 1000 seen examples.

* **Wan (Green):** Line slopes upward, starting at approximately 50% task success with 0 seen examples and reaching approximately 75% task success with 1000 seen examples.

* **R (Purple):** Line slopes upward, starting at approximately 40% task success with 0 seen examples and reaching approximately 65% task success with 1000 seen examples.

**Inference-time Scaling:**

The "Inference-time Scaling" chart shows task success increasing with the "Inference Count". The lines are colored:

* **S (Blue):** Line slopes upward, from approximately 50% task success at an inference count of 0 to approximately 85% at an inference count of 100.

* **Wan (Green):** Line slopes upward, from approximately 50% task success at an inference count of 0 to approximately 75% at an inference count of 100.

* **R (Purple):** Line slopes upward, from approximately 40% task success at an inference count of 0 to approximately 65% at an inference count of 100.

**Correlation:**

The "Correlation" chart shows task success plotted against "Visual Quality". The lines are colored:

* **S (Blue):** Line slopes downward, starting at approximately 85% task success with low visual quality and decreasing to approximately 50% task success with high visual quality.

* **Wan (Green):** Line slopes downward, starting at approximately 75% task success with low visual quality and decreasing to approximately 50% task success with high visual quality.

* **R (Purple):** Line slopes downward, starting at approximately 65% task success with low visual quality and decreasing to approximately 40% task success with high visual quality.

### Key Observations

* WanTF consistently outperforms LDP and Cosmos in task success.

* Task success generally increases with more seen examples and higher inference counts.

* There is a negative correlation between task success and visual quality, suggesting that better visuals do not necessarily lead to better embodied success.

* The "S" model consistently shows the highest task success across all scaling charts.

### Interpretation

The diagram suggests that embodied success is not solely dependent on the visual quality of video models. While visual fidelity is important, factors like the amount of training data ("Seen Examples") and computational resources ("Inference Count") play a significant role. The negative correlation between task success and visual quality is particularly noteworthy, implying that models optimized for embodied tasks may prioritize functionality over photorealism. The "World-in-World" platform provides a framework for evaluating and comparing different video models based on their embodied performance, rather than solely on their visual output. The leaderboard highlights WanTF as a leading model in this regard. The diagram emphasizes a shift in evaluation criteria for video models, moving away from purely aesthetic measures towards practical, task-oriented performance.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Technical Diagram & Chart Set: Do Better Video Models Lead to Higher Embodied Success?

### Overview

The image is a technical visualization exploring the relationship between state-of-the-art (SoTA) video models and embodied AI success, using the *World-In-World* open platform. It includes a conceptual diagram (top) and three analytical charts (bottom) to illustrate model performance, scaling, and correlation.

### Components/Axes

#### Top: Conceptual Diagram (World-In-World Platform)

- **Left: SoTA Video Models**
  Logos/names: *Wan, Cosmos, SVD, Runway, LTX, Hunyuan* (labeled “SoTA video models”).

- **Center: World-In-World (Open Platform)**
  Robot icon with three connections:
  - *Unified Action* (left arrow: video models → platform).
  - *Embodied Policy* (right arrow: platform → tasks).
  - *Closed-Loop Interaction* (bottom arrow: tasks → platform).

- **Right: Embodied Tasks**
  Four tasks with icons:
  - *Active Recognition* (room scene).
  - *Navigation* (map).
  - *Question Answering* (room scene).
  - *Manipulation* (robot with blocks).

#### Bottom: Analytical Charts

1. **Leaderboard (Left)**

   | Model | Task success (%) | Visual quality (score) |
   |-------|------------------|------------------------|
   | Wan2.1 | 82.6 | 0.880 |
   | SVD* | 81.0 | 0.885 |
   | Cosmos* | 80.2 | 0.880 |

2. **Data Scaling (Middle-Left)**
   - X-axis: *Seen examples* (increasing).
   - Y-axis: *Task success* (increasing).
   - Two lines:
     - Star icon (Wan2.1): Higher task success, increasing with seen examples.
     - “S” icon (SVD*): Lower task success than Wan2.1, also increasing.

3. **Inference-time Scaling (Middle-Right)**
   - X-axis: *Inference count* (increasing).
   - Y-axis: *Task success* (increasing).
   - Two lines (same as Data Scaling): Both increase with inference count, Wan2.1 remains higher.

4. **Correlation (Right)**
   - X-axis: *Visual quality* (increasing).
   - Y-axis: *Task success* (increasing).
   - Points (models):
     - *S* (SVD*): High visual quality, high task success.
     - Star (Wan2.1): High task success, slightly lower visual quality than SVD*.
     - Hexagon (Cosmos*): Lower visual quality, lower task success.
     - *R* (Runway), *L* (LTX), *H* (Hunyuan): Lower visual quality, lower task success.

### Detailed Analysis

- **Leaderboard**: Wan2.1 leads in task success (82.6%) despite slightly lower visual quality than SVD* (0.880 vs. 0.885). Cosmos* trails in both metrics.

- **Scaling Charts**: Both *data scaling* (more seen examples) and *inference-time scaling* (more inference) improve task success for Wan2.1 and SVD*, with Wan2.1 consistently outperforming SVD*.

- **Correlation**: Visual quality and task success are positively correlated, but not perfectly (e.g., SVD* has higher visual quality than Wan2.1 but lower task success).
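As a rough check of the correlation claim, the Pearson coefficient can be computed from the three leaderboard rows transcribed in this description. This is only a sketch: it takes the table values as given, and the `pearson` function is written out by hand for illustration. Three points are far too few to support a conclusion, and the correlation chart includes further models (Runway, LTX, Hunyuan) whose coordinates are not given.

```python
from math import sqrt

# Leaderboard values as transcribed in this description.
# Order: Wan2.1, SVD*, Cosmos*.
task_success = [82.6, 81.0, 80.2]
visual_quality = [0.880, 0.885, 0.880]


def pearson(xs, ys):
    """Sample Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


r = pearson(visual_quality, task_success)
print(round(r, 3))
```

On these three rows the coefficient comes out weakly negative. That mostly reflects how little three near-identical quality scores can reveal, so it neither confirms nor refutes the trend described for the full six-model chart.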

### Key Observations

- Wan2.1 outperforms SVD* and Cosmos* in task success, even with slightly lower visual quality than SVD*.

- Scaling (data or inference) boosts task success for top models.

- Visual quality is a factor but not the sole determinant of embodied success (Wan2.1’s lower visual quality but higher task success suggests other model attributes matter).

### Interpretation

The data suggests that **stronger video models do lead to higher embodied success**, as seen in the leaderboard and scaling trends. The *World-In-World* platform connects video models to embodied tasks and shows that scaling (data and inference) is critical to task success. The correlation chart implies that visual quality is important but not sufficient: other factors (e.g., model architecture, training) also drive embodied performance. For embodied AI, optimizing both video model quality and task-specific scaling is key to success.

(Note: All text, labels, and data points are extracted. The diagram’s flow (video models → platform → tasks → platform) and chart trends are detailed to enable full reconstruction of the image’s information.)

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Chart/Diagram Type: Comparative Analysis of Embodied Video Models

### Overview

The image presents a comparative analysis of embodied video models and their performance on World-In-World tasks. It combines a leaderboard that ranks models by embodied success metrics with a conceptual diagram illustrating how these models contribute to closed-loop interaction and unified action. The central theme is whether improved video models correlate with higher embodied task success.

### Components/Axes

1. **Leaderboard Section**:
   - **Categories**: Task Success, Data Scaling, Inference-time Scaling, Correlation
   - **Models**: World-2.0, SVD*, Cosmos*
   - **Metrics**:
     - Task Success (%)
     - Data Scaling (0-1 scale)
     - Inference-time Scaling (%)
     - Correlation (0-1 scale)

2. **Main Diagram**:
   - **Central Element**: Purple robot labeled "Embodied Policy"
   - **Arrows**:
     - Left: "Unified Action" (blue)
     - Right: "Closed-Loop Interaction" (purple)
   - **Task Labels**: Active Recognition, Navigation, Question Answering, Manipulation
   - **Platform**: "World-In-World" (orange border)

3. **Legend**:
   - **Colors**:
     - World-2.0 (blue)
     - SVD* (purple)
     - Cosmos* (orange)

### Detailed Analysis

1. **Leaderboard Data**:
   - **World-2.0**:
     - Task Success: 96.2% (highest)
     - Data Scaling: 0.880 (highest)
     - Inference-time Scaling: 0.800 (highest)
     - Correlation: 0.800 (highest)
   - **SVD***:
     - Task Success: 83.1%
     - Data Scaling: 0.830
     - Inference-time Scaling: 0.750
     - Correlation: 0.750
   - **Cosmos***:
     - Task Success: 80.5%
     - Data Scaling: 0.800
     - Inference-time Scaling: 0.700
     - Correlation: 0.700

2. **Main Diagram**:
   - **Flow**:
     - Video models (Wan, Cosmos, SVD, Runway, LTX, Hunyuan) feed into the "World-In-World" platform
     - Outputs connect to "Embodied Policy" with arrows indicating contributions to:
       - Unified Action (left)
       - Closed-Loop Interaction (right)
   - **Task Representation**:
     - Top row: Active Recognition, Navigation
     - Bottom row: Question Answering, Manipulation

### Key Observations

1. **Performance Trends**:
   - World-2.0 dominates across all metrics, maintaining a consistent lead
   - SVD* and Cosmos* show similar performance patterns, with SVD* slightly outperforming in inference-time scaling
   - All models show strong correlation scores (>0.7), suggesting good alignment with task requirements

2. **Visual Quality vs. Task Success**:
   - The bottom section explicitly states "World models are ranked by embodied success, not flawless visuals"
   - Correlation metrics (0.7-0.8) indicate moderate to strong relationship between visual quality and task success

3. **Scaling Patterns**:
   - Data Scaling values (0.7-0.88) suggest models maintain effectiveness across different data volumes
   - Inference-time Scaling (0.7-0.8) indicates consistent performance under time constraints

### Interpretation

The data demonstrates a clear hierarchy in embodied task performance, with World-2.0 establishing itself as the leading model. The consistent performance across all metrics suggests this model effectively balances visual quality with practical task execution capabilities. The "World-In-World" platform appears designed to test models in realistic scenarios, with the robot's bidirectional arrows indicating the importance of both unified action and closed-loop interaction for successful embodiment.

The emphasis on "embodied success" over visual perfection aligns with real-world robotics applications where functional performance matters more than aesthetic quality. The moderate correlation scores (0.7-0.8) suggest that while visual quality contributes to task success, it's not the sole determining factor - other elements like motion prediction and environmental understanding play crucial roles.

The platform's design implies a feedback loop where video models inform embodied policies, which in turn refine the models through closed-loop interaction. This circular relationship highlights the importance of continuous learning and adaptation in embodied AI systems.
