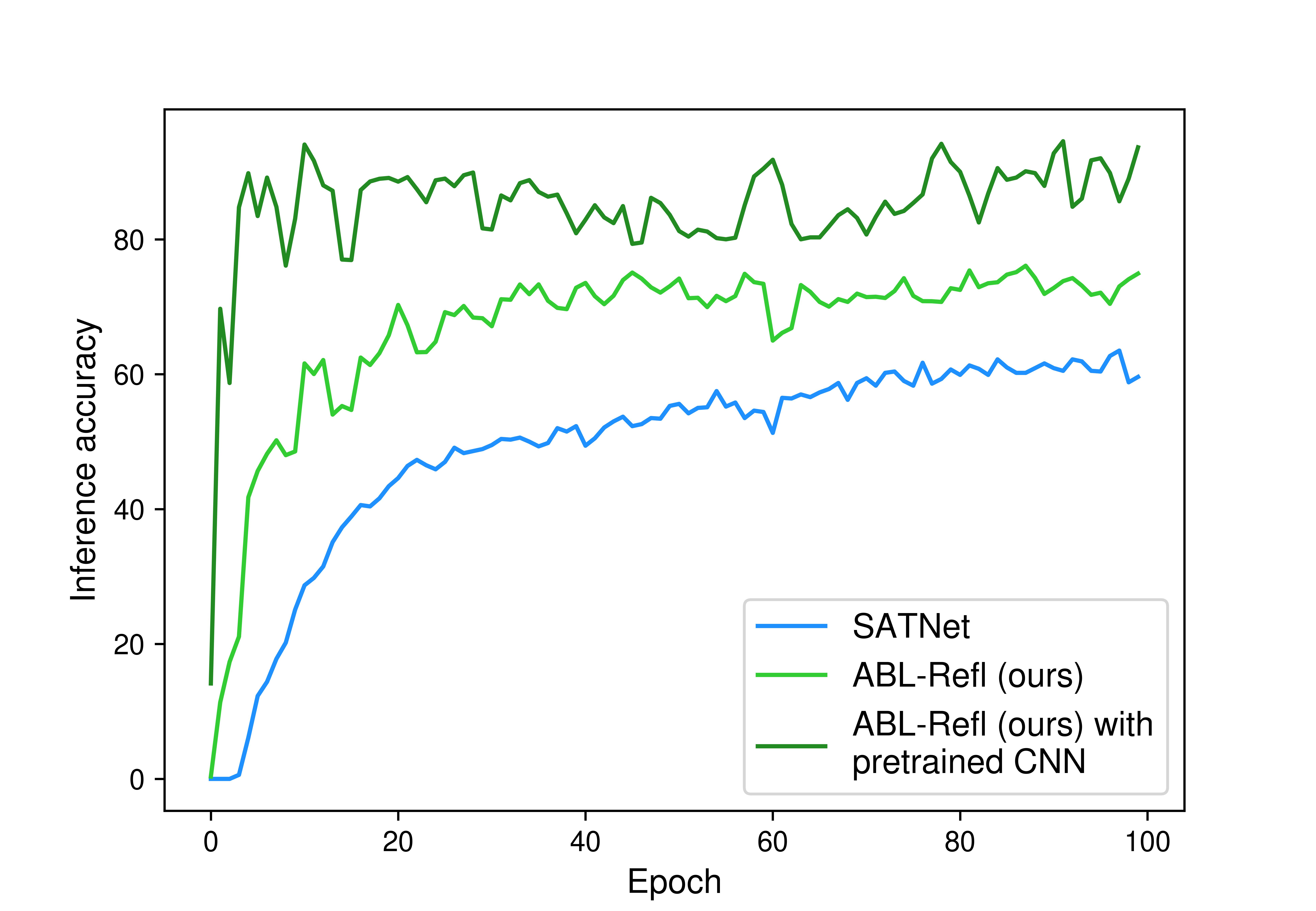

## Line Chart: Inference Accuracy vs. Epoch

### Overview

This line chart depicts the inference accuracy of three different models (SATNet, ABL-Refl, and ABL-Refl with pretrained CNN) over 100 epochs of training. The x-axis represents the epoch number, and the y-axis represents the inference accuracy.

### Components/Axes

* **X-axis:** Epoch (Scale: 0 to 100, approximately)

* **Y-axis:** Inference accuracy in percent (Scale: 0 to 100, approximately)

* **Legend:** Located in the bottom-right corner of the chart.
    * SATNet (Blue line)
    * ABL-Refl (ours) (Olive line)
    * ABL-Refl (ours) with pretrained CNN (Green line)

### Detailed Analysis

* **SATNet (Blue Line):** The blue line starts at approximately 5% accuracy at epoch 0, rises sharply to around 50% by epoch 10, then plateaus, fluctuating between approximately 50% and 62% for the remainder of the 100 epochs. There is a slight downward trend in the final epochs.

* **ABL-Refl (ours) (Olive Line):** The olive line begins at approximately 5% accuracy at epoch 0, increases rapidly to around 68% by epoch 10, and continues to rise, reaching a peak of approximately 75% around epoch 20. It then fluctuates between approximately 65% and 75% for the rest of the epochs, with a slight downward trend towards the end.

* **ABL-Refl (ours) with pretrained CNN (Green Line):** The green line starts at approximately 0% accuracy at epoch 0, experiences a very rapid increase to around 85% by epoch 10, and then fluctuates between approximately 75% and 90% for the remaining epochs. From epoch 10 onward it stays above the other two models.

Here's a more detailed breakdown of approximate values at specific epochs:

| Epoch | SATNet (Blue) | ABL-Refl (Olive) | ABL-Refl + pretrained CNN (Green) |
|---|---|---|---|
| 0 | ~5% | ~5% | ~0% |
| 10 | ~50% | ~68% | ~85% |
| 20 | ~55% | ~75% | ~88% |
| 40 | ~58% | ~70% | ~82% |
| 60 | ~60% | ~65% | ~78% |
| 80 | ~59% | ~72% | ~85% |
| 100 | ~57% | ~68% | ~88% |

### Key Observations

* The model utilizing a pretrained CNN (green line) achieves the highest inference accuracy from roughly epoch 10 onward; at epoch 0 it starts slightly lower (~0%) than the other two (~5%).

* SATNet (blue line) has the lowest accuracy after the initial epochs, plateauing between approximately 50% and 62%.

* ABL-Refl (olive line) outperforms SATNet after the initial epochs but stays below the CNN-pretrained version.

* All three models improve rapidly during roughly the first 10 epochs and then plateau. SATNet and ABL-Refl drift slightly downward in the final epochs, whereas the CNN-pretrained version ends near its peak (~88% at epoch 100).
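
These observations can be checked against the approximate readings in the table with a short script. The following is a minimal sketch, not part of the original figure: the values are the eyeballed table entries, and the helper names `leader_at` and `plateau_stats` are hypothetical, introduced only for illustration.

```python
# Approximate readings from the table above (percent accuracy). These are
# eyeballed chart values, not exact measurements.
epochs = [0, 10, 20, 40, 60, 80, 100]
accuracy = {
    "SATNet": [5, 50, 55, 58, 60, 59, 57],
    "ABL-Refl": [5, 68, 75, 70, 65, 72, 68],
    "ABL-Refl + pretrained CNN": [0, 85, 88, 82, 78, 85, 88],
}


def leader_at(index):
    """Return the name of the curve with the highest accuracy at one sample."""
    return max(accuracy, key=lambda name: accuracy[name][index])


def plateau_stats(name, start_epoch=10):
    """Return (min, max, mean) of one curve from start_epoch onward."""
    values = [v for e, v in zip(epochs, accuracy[name]) if e >= start_epoch]
    return min(values), max(values), sum(values) / len(values)


if __name__ == "__main__":
    for i, epoch in enumerate(epochs):
        print(f"epoch {epoch:>3}: leader = {leader_at(i)}")
    for name in accuracy:
        low, high, mean = plateau_stats(name)
        print(f"{name}: min {low}%, max {high}%, mean {mean:.1f}% (epoch >= 10)")
```

At epoch 0 SATNet and ABL-Refl are tied at about 5%, so `max` simply returns whichever of the two is listed first; the ordering only becomes meaningful from epoch 10 onward.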

### Interpretation

The data suggests that incorporating a pretrained CNN substantially improves the inference accuracy of ABL-Refl: at the sampled epochs from 10 onward, the green line sits roughly 12 to 20 percentage points above the olive line. The pretrained CNN likely provides a strong initial feature representation, allowing the model to converge faster and reach higher accuracy. The plateau, and the slight late decline seen for SATNet and for ABL-Refl without pretraining, could indicate overfitting or a need for further optimization techniques such as regularization or learning-rate scheduling. SATNet's lower accuracy suggests that its architecture or training process may be less effective for this task than ABL-Refl, especially when ABL-Refl is combined with a pretrained CNN. That the green line stays above the other two from epoch 10 onward suggests that the pretrained CNN provides a robust feature representation.