## Line Graph: Inference Accuracy vs. Epochs

### Overview

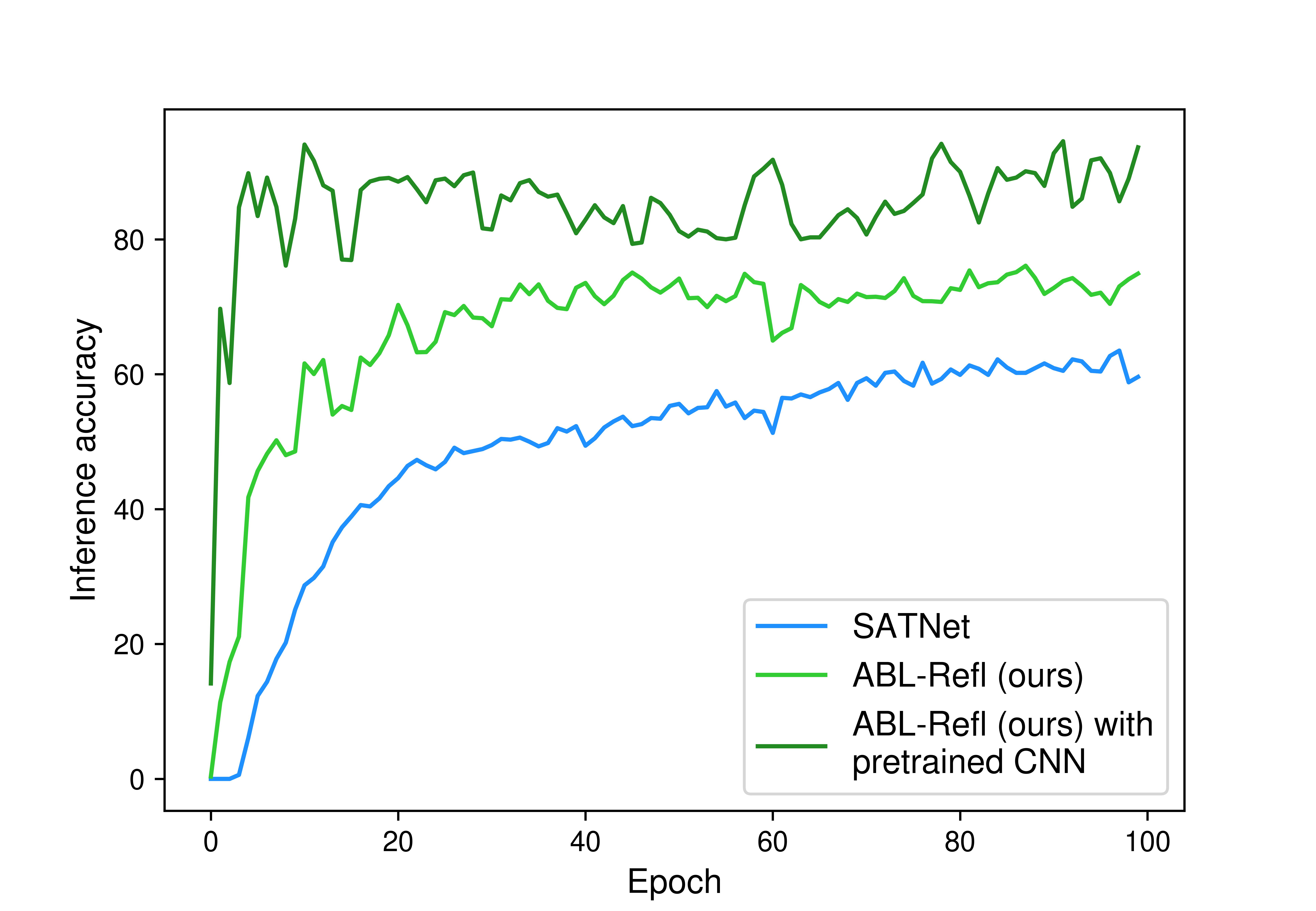

The image is a line graph comparing the inference accuracy of three models (SATNet, ABL-Refl, and ABL-Refl with pretrained CNN) across 100 training epochs. The y-axis represents inference accuracy (0–100%), and the x-axis represents epochs (0–100). Three distinct lines are plotted, each corresponding to a model, with the legend positioned in the bottom-right corner.

---

### Components/Axes

- **X-axis (Epochs)**: Labeled "Epoch," ranging from 0 to 100 in increments of 20.

- **Y-axis (Inference Accuracy)**: Labeled "Inference accuracy," ranging from 0 to 100 in increments of 20.

- **Legend**: Located in the bottom-right corner, with three entries:

  - **Blue**: SATNet

  - **Green**: ABL-Refl (ours)

  - **Dark Green**: ABL-Refl (ours) with pretrained CNN

---

### Detailed Analysis

#### SATNet (Blue Line)

- **Initial Behavior**: Starts at 0% accuracy at epoch 0, sharply rising to ~10% by epoch 5.

- **Mid-Training**: Gradually increases to ~60% by epoch 100, with a notable dip to ~55% around epoch 60.

- **Trend**: Overall upward trajectory with moderate fluctuations.

#### ABL-Refl (Green Line)

- **Initial Behavior**: Begins at ~10% accuracy at epoch 0, rising sharply to ~70% by epoch 20.

- **Mid-Training**: Stays mostly between 70–75% accuracy, with occasional dips below that range (e.g., ~65% at epoch 60).

- **Trend**: Smoother progression compared to SATNet, maintaining higher accuracy after epoch 20.

#### ABL-Refl with Pretrained CNN (Dark Green Line)

- **Initial Behavior**: Starts at ~90% accuracy at epoch 0, dipping to ~80% by epoch 10.

- **Mid-Training**: Fluctuates mostly between 80–90% accuracy, with a peak of ~95% around epoch 80.

- **Trend**: Most stable and highest-performing line, with minor volatility.

---

### Key Observations

1. **Pretrained CNN Advantage**: The dark green line (ABL-Refl with pretrained CNN) consistently outperforms the other models, staying mostly within ~80–90% accuracy throughout training and peaking near ~95%.

2. **SATNet Variability**: The blue line (SATNet) converges the slowest of the three and fluctuates along the way, including a visible dip to ~55% around epoch 60.

3. **ABL-Refl Stability**: The green line (ABL-Refl) rises quickly to ~70% by epoch 20 and then holds near that level, well above SATNet for the rest of training.

4. **Early Dip in Pretrained Model**: The dark green line’s drop to ~80% at epoch 10 may indicate an initial adjustment phase for the pretrained CNN.

---

### Interpretation

- **Model Effectiveness**: Adding the pretrained CNN raises ABL-Refl’s accuracy from roughly 70% to roughly 80–90%, suggesting that starting from pretrained perception features helps on this task. SATNet’s lower accuracy and slower convergence suggest it is less effective here.

- **Training Dynamics**: ABL-Refl’s rapid early improvement (epoch 0–20) highlights its efficiency, while SATNet’s gradual climb indicates slower learning.

- **Anomalies**: The pretrained model’s epoch 10 dip could reflect temporary instability during CNN adaptation, but it recovers quickly to dominate later epochs.

- **Practical Implications**: For tasks requiring high inference accuracy, ABL-Refl with pretrained CNN is the strongest of the three configurations shown. SATNet may require architectural adjustments or hyperparameter tuning to match this performance.

---

### Spatial Grounding & Trend Verification

- **Legend Alignment**: Line colors match the legend entries (blue = SATNet, green = ABL-Refl, dark green = ABL-Refl with pretrained CNN).

- **Trend Consistency**:

  - SATNet’s upward slope aligns with its ~60% final accuracy.

  - ABL-Refl’s plateau at ~70% matches its mid-training stability.

  - The pretrained-CNN line stays mostly within 80–90%, above both other lines, which is consistent with it being the top performer.
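The plateau ranges and the individual readings quoted in this description can be cross-checked mechanically. The following is a minimal sketch, assuming the ranges are approximate visual estimates rather than exact bounds; the names `READINGS`, `BANDS`, and `outside_band` are hypothetical and exist only for this illustration.

```python
# Approximate (epoch, accuracy %) readings and the plateau ranges quoted in
# this description. All values are visual estimates, not experiment logs.
READINGS = {
    "ABL-Refl": [(20, 70), (60, 65)],
    "ABL-Refl + pretrained CNN": [(0, 90), (10, 80), (80, 95)],
}
BANDS = {
    "ABL-Refl": (70, 75),
    "ABL-Refl + pretrained CNN": (80, 90),
}


def outside_band(readings, band):
    """Return the (epoch, accuracy) readings that fall outside `band`."""
    low, high = band
    return [(epoch, acc) for epoch, acc in readings if not low <= acc <= high]


if __name__ == "__main__":
    for model, readings in READINGS.items():
        print(model, outside_band(readings, BANDS[model]))
```

With the readings listed here, the check flags ~65% at epoch 60 for ABL-Refl and ~95% at epoch 80 for the pretrained variant, so the quoted ranges are best read as typical values rather than strict bounds.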

---

### Content Details

- **Data Points** (approximate readings):

  - SATNet: 0% (epoch 0), ~10% (epoch 5), ~55% (epoch 60 dip), ~60% (epoch 100).

  - ABL-Refl: ~10% (epoch 0), ~70% (epoch 20), ~65% (epoch 60).

  - ABL-Refl with pretrained CNN: ~90% (epoch 0), ~80% (epoch 10), ~95% (epoch 80 peak).
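The readings above can be collected into a small lookup structure for rough comparisons between the three lines. This is a sketch only: the values are the approximate readings quoted in this description, the names `READINGS` and `interpolate` are hypothetical, and linear interpolation between readings is an assumption made for illustration. The plotted lines fluctuate between these points, so interpolated values are not measurements.

```python
from bisect import bisect_left

# Approximate (epoch, accuracy %) readings quoted in this description.
# They are visual estimates, not exact values from the underlying experiment.
READINGS = {
    "SATNet": [(0, 0), (5, 10), (60, 55), (100, 60)],
    "ABL-Refl": [(0, 10), (20, 70), (60, 65)],
    "ABL-Refl + pretrained CNN": [(0, 90), (10, 80), (80, 95)],
}


def interpolate(points, epoch):
    """Linearly interpolate accuracy at `epoch` from sorted (epoch, acc) points.

    Raises ValueError outside the covered epoch range, because extrapolating
    beyond the listed readings would be a guess.
    """
    epochs = [e for e, _ in points]
    if not epochs[0] <= epoch <= epochs[-1]:
        raise ValueError(f"epoch {epoch} outside {epochs[0]}..{epochs[-1]}")
    i = bisect_left(epochs, epoch)  # index of first reading at or after epoch
    if epochs[i] == epoch:
        return float(points[i][1])
    (e0, a0), (e1, a1) = points[i - 1], points[i]
    return a0 + (a1 - a0) * (epoch - e0) / (e1 - e0)


if __name__ == "__main__":
    # Rough comparison at an epoch where all three lines have coverage.
    for model, points in READINGS.items():
        print(model, round(interpolate(points, 40), 1))
```

Refusing to extrapolate is a deliberate choice: ABL-Refl and the pretrained variant have no listed reading at epoch 100, so any value there would be invented rather than read from the graph.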

---

### Final Notes

The graph underscores the benefits of pretrained CNNs in boosting inference accuracy, while highlighting SATNet’s limitations. Further analysis could explore why SATNet underperforms and whether pretraining strategies could be adapted for other models.