## Line Charts: Model Training Performance Comparison

### Overview

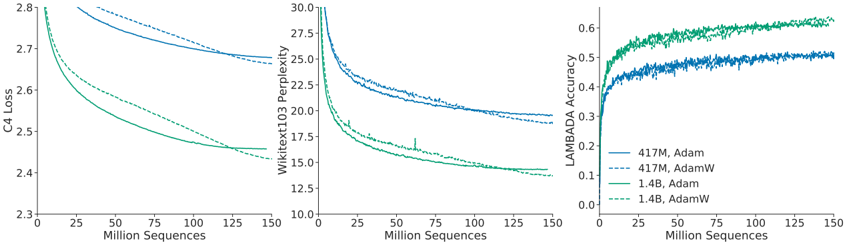

The image displays three horizontally aligned line charts comparing the training performance of two model sizes (417M and 1.4B parameters) using two optimization algorithms (Adam and AdamW). The charts track three different metrics over the course of training, measured in "Million Sequences" processed.

### Components/Axes

* **Shared X-Axis (All Charts):** "Million Sequences". Scale ranges from 0 to 150, with major tick marks at 0, 25, 50, 75, 100, 125, and 150.

* **Left Chart Y-Axis:** "C4 Loss". Scale ranges from 2.3 to 2.8, with major tick marks at 2.3, 2.4, 2.5, 2.6, 2.7, and 2.8.

* **Middle Chart Y-Axis:** "WikiText103 Perplexity". Scale ranges from 10.0 to 30.0, with major tick marks at 10.0, 12.5, 15.0, 17.5, 20.0, 22.5, 25.0, 27.5, and 30.0.

* **Right Chart Y-Axis:** "LAMBADA Accuracy". Scale ranges from 0.0 to 0.6, with major tick marks at 0.0, 0.1, 0.2, 0.3, 0.4, 0.5, and 0.6.

* **Legend (bottom-right of the rightmost chart):**

    * Solid blue line: `417M, Adam`

    * Dashed blue line: `417M, AdamW`

    * Solid green line: `1.4B, Adam`

    * Dashed green line: `1.4B, AdamW`

### Detailed Analysis

**Left Chart - C4 Loss:**

* **Trend Verification:** All four lines show a steep initial decline that gradually flattens, indicating decreasing loss with more training sequences.

* **Data Series:**

    * `1.4B, AdamW` (dashed green): Starts highest (~2.8) and ends lowest (~2.43), the largest overall decrease of the four runs.

    * `1.4B, Adam` (solid green): Follows a similar path to its AdamW counterpart but ends slightly higher (~2.45).

    * `417M, AdamW` (dashed blue): Starts around 2.78, ends around 2.68.

    * `417M, Adam` (solid blue): Starts around 2.78, ends highest among the four (~2.70).

* **Key Point:** The 1.4B parameter models achieve substantially lower C4 loss than the 417M models. Within each model size, AdamW yields slightly lower final loss than Adam.

**Middle Chart - WikiText103 Perplexity:**

* **Trend Verification:** All lines show a sharp initial drop followed by a steady decline, indicating improving language modeling performance.

* **Data Series:**

    * `1.4B, AdamW` (dashed green): Starts near 30.0, ends lowest at approximately 14.0.

    * `1.4B, Adam` (solid green): Follows closely above the dashed green line, ending near 14.5. Contains a small, sharp upward spike around 60 million sequences.

    * `417M, AdamW` (dashed blue): Starts near 30.0, ends around 19.0.

    * `417M, Adam` (solid blue): Follows closely above the dashed blue line, ending around 19.5.

* **Key Point:** The pattern mirrors the C4 Loss chart. The 1.4B models achieve much lower perplexity. AdamW provides a consistent, small advantage over Adam for both model sizes.
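
Perplexity is the exponential of a cross-entropy loss, which is one reason this panel has the same shape as the loss panel. The sketch below converts between the two, assuming the loss is a mean per-token cross-entropy measured in nats (the figure does not state its units). The function names are illustrative. Because C4 and WikiText103 are different datasets, the converted values are not directly comparable across panels.

```python
import math


def loss_to_perplexity(loss_nats: float) -> float:
    """Perplexity = exp(mean per-token cross-entropy in nats)."""
    return math.exp(loss_nats)


def perplexity_to_loss(perplexity: float) -> float:
    """Inverse mapping: mean cross-entropy in nats = ln(perplexity)."""
    return math.log(perplexity)


# Approximate end-of-training perplexities as read from the middle chart.
final_perplexity = {
    "417M, Adam": 19.5,
    "417M, AdamW": 19.0,
    "1.4B, Adam": 14.5,
    "1.4B, AdamW": 14.0,
}

for run, ppl in final_perplexity.items():
    print(f"{run}: perplexity {ppl:.1f} ~ {perplexity_to_loss(ppl):.2f} nats")
```

Under this assumption, the gap between 19.0 and 14.0 perplexity corresponds to roughly 0.3 nats of cross-entropy.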

**Right Chart - LAMBADA Accuracy:**

* **Trend Verification:** All lines show a rapid initial increase that plateaus, indicating improving accuracy on the LAMBADA task with more training.

* **Data Series:**

    * `1.4B, AdamW` (dashed green): Rises fastest and reaches the highest accuracy, plateauing near 0.62.

    * `1.4B, Adam` (solid green): Rises quickly but plateaus at a lower accuracy than its AdamW counterpart, around 0.58.

    * `417M, AdamW` (dashed blue): Plateaus around 0.52.

    * `417M, Adam` (solid blue): Plateaus slightly lower, around 0.50.

* **Key Point:** Larger model size is the dominant factor for higher accuracy. The advantage of AdamW over Adam is most pronounced for the 1.4B model on this metric.
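
The relative weight of model size and optimizer choice can be checked against the approximate end-of-training readings listed for the three charts. The sketch below is only as accurate as those visual estimates. The `mean_gaps` helper is a hypothetical name, and averaging the absolute differences is one simple choice among several.

```python
# Approximate end-of-training values as read from the three charts.
FINAL = {
    "C4 loss": {
        ("417M", "Adam"): 2.70, ("417M", "AdamW"): 2.68,
        ("1.4B", "Adam"): 2.45, ("1.4B", "AdamW"): 2.43,
    },
    "WikiText103 perplexity": {
        ("417M", "Adam"): 19.5, ("417M", "AdamW"): 19.0,
        ("1.4B", "Adam"): 14.5, ("1.4B", "AdamW"): 14.0,
    },
    "LAMBADA accuracy": {
        ("417M", "Adam"): 0.50, ("417M", "AdamW"): 0.52,
        ("1.4B", "Adam"): 0.58, ("1.4B", "AdamW"): 0.62,
    },
}

SIZES = ("417M", "1.4B")
OPTIMIZERS = ("Adam", "AdamW")


def mean_gaps(values):
    """Return (size_gap, optimizer_gap) as mean absolute differences."""
    # Gap between model sizes, averaged over the two optimizers.
    size_gap = sum(
        abs(values[("417M", opt)] - values[("1.4B", opt)]) for opt in OPTIMIZERS
    ) / len(OPTIMIZERS)
    # Gap between optimizers, averaged over the two model sizes.
    optimizer_gap = sum(
        abs(values[(size, "Adam")] - values[(size, "AdamW")]) for size in SIZES
    ) / len(SIZES)
    return size_gap, optimizer_gap


for metric, values in FINAL.items():
    size_gap, optimizer_gap = mean_gaps(values)
    print(f"{metric}: size gap {size_gap:.2f}, optimizer gap {optimizer_gap:.2f}")
```

With these readings, the size gap is about 3 times the optimizer gap for LAMBADA accuracy and about 10 times or more for C4 loss and WikiText103 perplexity.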

### Key Observations

1. **Model Size Dominance:** On all three metrics (C4 Loss, WikiText103 Perplexity, LAMBADA Accuracy), the 1.4B parameter models (green lines) finish well ahead of the 417M parameter models (blue lines).

2. **Optimizer Consistency:** For both model sizes and on all three metrics, the AdamW optimizer (dashed lines) ends with somewhat better performance than the standard Adam optimizer (solid lines). The margin is small compared with the gap between model sizes.

3. **Training Convergence:** All curves flatten markedly by 150 million sequences. The rate of improvement slows considerably after the first 25 to 50 million sequences, although the curves are still improving gradually at the end of the plotted range.

4. **Anomaly:** The `1.4B, Adam` (solid green) line in the WikiText103 Perplexity chart exhibits a brief, sharp increase (spike) around 60 million sequences before resuming its downward trend.
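
A spike like the one noted in the last observation can be flagged automatically when the underlying evaluation log is available. The sketch below is one simple approach and not a method used in the source. It marks any point that sits above both of its neighbours by more than a threshold. The `find_spikes` name, the threshold, and the sample series are all hypothetical, and the numbers are made-up illustrative values, not readings from the figure.

```python
def find_spikes(values, min_jump):
    """Return indices of points that exceed both neighbours by more than min_jump."""
    spikes = []
    for i in range(1, len(values) - 1):
        rise = values[i] - values[i - 1]   # jump up from the previous point
        fall = values[i] - values[i + 1]   # drop down to the next point
        if rise > min_jump and fall > min_jump:
            spikes.append(i)
    return spikes


# Made-up perplexity-like series with one transient bump (illustration only).
series = [30.0, 22.0, 18.5, 17.0, 18.2, 16.2, 15.6, 15.0, 14.5]

print(find_spikes(series, min_jump=0.5))
```

A check of this kind only detects isolated one-point bumps. A spike that spans several evaluation steps would need a wider comparison window.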

### Interpretation

This set of charts gives an empirical comparison that illustrates two familiar patterns in training large language models:

1. **Scaling Law Effect:** Increasing model parameters from 417 million to 1.4 billion leads to a substantial improvement on every evaluation metric shown (general loss, language modeling perplexity, and specific task accuracy). Two model sizes are too few to establish a scaling law, but the result is consistent with the expected positive relationship between model scale and capability.

2. **Optimizer Efficacy:** The AdamW optimizer, which decouples weight decay from the gradient-based update, provides a small but consistent performance boost over the standard Adam optimizer. The advantage is visible over most of the training trajectory (the 1.4B AdamW run starts with the highest C4 loss before overtaking the others) and holds for both model scales and all three evaluation tasks. The benefit is most pronounced for the larger model on the LAMBADA accuracy task.
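
The difference between the two optimizers can be made concrete with a single scalar update step. The sketch below is a minimal illustration under assumptions, not the training code behind the figure. The `adam_step` function and its `decoupled` flag are hypothetical names, the hyperparameters are common defaults, and the source does not say whether the Adam runs used L2 regularization or no weight decay at all.

```python
import math


def adam_step(theta, grad, m, v, t, lr=1e-3, beta1=0.9, beta2=0.999,
              eps=1e-8, weight_decay=0.0, decoupled=False):
    """One Adam update on a scalar parameter.

    decoupled=False: weight decay is added to the gradient (Adam with L2 penalty).
    decoupled=True:  weight decay is applied directly to the weight (AdamW style).
    """
    if weight_decay and not decoupled:
        # L2 penalty: the decay term passes through the adaptive scaling below.
        grad = grad + weight_decay * theta

    m = beta1 * m + (1 - beta1) * grad          # first-moment estimate
    v = beta2 * v + (1 - beta2) * grad * grad   # second-moment estimate
    m_hat = m / (1 - beta1 ** t)                # bias correction
    v_hat = v / (1 - beta2 ** t)

    new_theta = theta - lr * m_hat / (math.sqrt(v_hat) + eps)

    if weight_decay and decoupled:
        # Decoupled decay: shrink the weight independently of the gradient statistics.
        new_theta -= lr * weight_decay * theta

    return new_theta, m, v


# With a zero loss gradient, only the weight-decay term moves the parameter.
l2_theta, _, _ = adam_step(1.0, 0.0, 0.0, 0.0, t=1, weight_decay=0.01)
w_theta, _, _ = adam_step(1.0, 0.0, 0.0, 0.0, t=1, weight_decay=0.01, decoupled=True)
print(f"Adam + L2: {l2_theta:.6f}   decoupled: {w_theta:.6f}")
```

In this toy case the L2 variant moves the weight by about `lr`, because the adaptive normalization rescales the decay term, while the decoupled variant shrinks it by `lr * weight_decay * theta`. This is the sense in which AdamW separates regularization strength from the gradient statistics.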

The data suggest that, of the two factors compared here, model size has the larger effect on final performance, and pairing the larger model with the AdamW optimizer adds a smaller but consistent improvement. The charts also show diminishing returns: the largest gains occur early in training and are followed by a long tail of gradual improvement.