## Scatter Plot: AI Model Performance vs. Model Size

### Overview

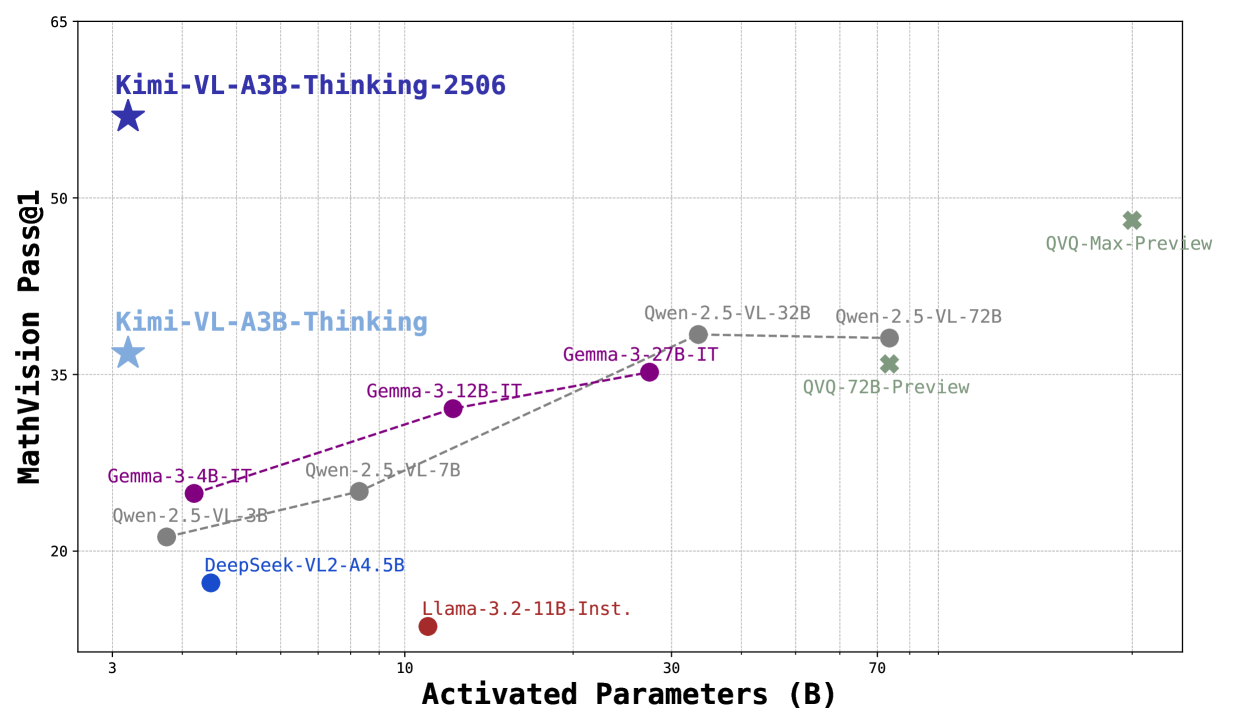

This scatter plot compares the performance of various multimodal AI models on the MathVision benchmark against their size, measured in activated parameters. Within a model family, larger models tend to score higher, but the highest score on the chart belongs to one of the smallest models.

### Components/Axes

* **X-Axis:** Labeled **"Activated Parameters (B)"**. The scale is logarithmic, with major tick marks at **3, 10, 30, and 70** billion parameters.

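* **Y-Axis:** Labeled **"MathVision Pass@1"**. The scale is linear, with labeled major grid lines at intervals of 15 (**20, 35, 50, 65**). The two lowest points listed below (~18 and ~15) sit under the 20 line, so the plotted range extends somewhat below it.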

* **Data Series & Legend:** The plot contains multiple data series, each represented by a distinct color and marker shape. The legend is embedded directly as labels next to each data point.

    * **Dark Blue Star:** `Kimi-VL-A3B-Thinking-2506`

    * **Light Blue Star:** `Kimi-VL-A3B-Thinking`

    * **Purple Circles (connected by dashed line):** `Gemma-3-4B-IT`, `Gemma-3-12B-IT`, `Gemma-3-27B-IT`

    * **Gray Circles (connected by dashed line):** `Qwen-2.5-VL-3B`, `Qwen-2.5-VL-7B`, `Qwen-2.5-VL-32B`, `Qwen-2.5-VL-72B`

    * **Blue Circle:** `DeepSeek-VL2-A4.5B`

    * **Red Circle:** `Llama-3.2-11B-Inst.`

    * **Green Crosses:** `QVQ-72B-Preview`, `QVQ-Max-Preview`
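Because the x-axis is logarithmic, horizontal distance on the plot reflects parameter ratios rather than differences. The following is a minimal sketch of how a parameter count maps to a horizontal position. It assumes the 3B and 70B ticks as reference endpoints (the actual axis limits are not stated), and `log_axis_position` is a hypothetical helper name introduced for illustration.

```python
import math

# Major tick values read from the x-axis, in billions of activated parameters.
TICKS_B = [3, 10, 30, 70]


def log_axis_position(value_b, lo=3.0, hi=70.0):
    """Fractional position on a log10 axis: 0 at `lo`, 1 at `hi`.

    Assumption: the 3B and 70B ticks are used as reference endpoints.
    """
    span = math.log10(hi) - math.log10(lo)
    return (math.log10(value_b) - math.log10(lo)) / span


if __name__ == "__main__":
    for tick in TICKS_B:
        print(f"{tick:>3}B -> {log_axis_position(tick):.2f}")
```

Under these assumptions, a 12B model lands roughly 44% of the way from the 3B tick to the 70B tick, whereas on a linear axis it would sit only about 13% of the way across.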

### Detailed Analysis

**Data Points (Approximate Coordinates: Activated Parameters (B), MathVision Pass@1):**

1. **Kimi-VL-A3B-Thinking-2506 (Dark Blue Star):** Positioned at the top-left. Coordinates: **(~3B, ~60)**. This is the highest-performing model on the chart.

2. **Kimi-VL-A3B-Thinking (Light Blue Star):** Positioned below the first star. Coordinates: **(~3B, ~37)**.

3. **Gemma-3 Series (Purple Circles, upward trend):**

    * `Gemma-3-4B-IT`: **(~4B, ~25)**

    * `Gemma-3-12B-IT`: **(~12B, ~32)**

    * `Gemma-3-27B-IT`: **(~27B, ~35)**

    * *Trend:* Performance increases with model size, but the rate of improvement slows.

4. **Qwen-2.5-VL Series (Gray Circles, upward then plateauing trend):**

    * `Qwen-2.5-VL-3B`: **(~3B, ~21)**

    * `Qwen-2.5-VL-7B`: **(~7B, ~25)**

    * `Qwen-2.5-VL-32B`: **(~32B, ~38)**

    * `Qwen-2.5-VL-72B`: **(~72B, ~38)**

    * *Trend:* Strong improvement from 3B to 32B, then a plateau between 32B and 72B.

5. **DeepSeek-VL2-A4.5B (Blue Circle):** Coordinates: **(~4.5B, ~18)**. Positioned below the Gemma-3-4B-IT point.

6. **Llama-3.2-11B-Inst. (Red Circle):** Coordinates: **(~11B, ~15)**. This is the lowest score on the chart, below even the smallest ~3B models.

7. **QVQ Series (Green Crosses, high-parameter region):**

    * `QVQ-72B-Preview`: **(~72B, ~36)**. Positioned slightly below the Qwen-2.5-VL-72B point.

    * `QVQ-Max-Preview`: **(~120B?, ~49)**. The rightmost point, with an estimated parameter count beyond the 70B tick.
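For readers who want to work with these readings, the approximate coordinates above can be captured in a small table. This is a sketch only: every value is an eyeballed estimate from the chart, the x-value for `QVQ-Max-Preview` is uncertain, and the `Point` type and its field names are introduced here for illustration.

```python
from typing import NamedTuple


class Point(NamedTuple):
    model: str
    params_b: float  # activated parameters in billions (approximate reading)
    score: float     # MathVision Pass@1 (approximate reading)


# Values transcribed from the approximate coordinates listed above.
POINTS = [
    Point("Kimi-VL-A3B-Thinking-2506", 3, 60),
    Point("Kimi-VL-A3B-Thinking", 3, 37),
    Point("Gemma-3-4B-IT", 4, 25),
    Point("Gemma-3-12B-IT", 12, 32),
    Point("Gemma-3-27B-IT", 27, 35),
    Point("Qwen-2.5-VL-3B", 3, 21),
    Point("Qwen-2.5-VL-7B", 7, 25),
    Point("Qwen-2.5-VL-32B", 32, 38),
    Point("Qwen-2.5-VL-72B", 72, 38),
    Point("DeepSeek-VL2-A4.5B", 4.5, 18),
    Point("Llama-3.2-11B-Inst.", 11, 15),
    Point("QVQ-72B-Preview", 72, 36),
    Point("QVQ-Max-Preview", 120, 49),  # x-value is uncertain ("~120B?")
]

# Rank models by score, highest first.
ranked = sorted(POINTS, key=lambda p: p.score, reverse=True)
best, worst = ranked[0], ranked[-1]

if __name__ == "__main__":
    for p in ranked:
        print(f"{p.model:<28} {p.params_b:>6}B  {p.score:>5}")
```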

### Key Observations

1. **Efficiency Outlier:** The `Kimi-VL-A3B-Thinking-2506` model is a dramatic outlier, achieving the highest score (~60) with one of the smallest parameter counts (~3B). This indicates exceptional parameter efficiency on this benchmark.

2. **Performance Plateau:** The `Qwen-2.5-VL` series shows a clear performance plateau, where increasing parameters from 32B to 72B yields no improvement in the MathVision Pass@1 score.

3. **Size-Performance Disconnect:** Larger models do not guarantee better performance. `Llama-3.2-11B-Inst.` (~11B) underperforms both smaller models (e.g., `Qwen-2.5-VL-3B`) and similarly sized models (e.g., `Gemma-3-12B-IT`).

4. **General Trend:** Excluding the major outliers, there is a loose positive correlation between activated parameters and benchmark score, as seen in the Gemma-3 and the initial segment of the Qwen-2.5-VL series.
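The slowing and plateauing trends can be made concrete by computing, for each consecutive pair of points in a series, the score gained per tenfold increase in activated parameters. This is a rough sketch built on the approximate readings from the chart; `gains_per_decade` is a hypothetical helper, and the per-decade normalization is one reasonable choice for a log-scaled axis rather than anything prescribed by the figure.

```python
import math

# Approximate (activated parameters in B, MathVision Pass@1) per series,
# as read from the chart.
SERIES = {
    "Gemma-3": [(4, 25), (12, 32), (27, 35)],
    "Qwen-2.5-VL": [(3, 21), (7, 25), (32, 38), (72, 38)],
}


def gains_per_decade(points):
    """Score gained per 10x increase in parameters, per consecutive pair."""
    return [
        (y1 - y0) / math.log10(x1 / x0)
        for (x0, y0), (x1, y1) in zip(points, points[1:])
    ]


if __name__ == "__main__":
    for name, pts in SERIES.items():
        print(name, [round(g, 1) for g in gains_per_decade(pts)])
```

With these readings, the Gemma-3 gain falls from roughly 14.7 to roughly 8.5 points per decade, and the last Qwen-2.5-VL segment (32B to 72B) is exactly zero because both points were read as ~38.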

### Interpretation

This chart shows how widely **parameter efficiency** varies across multimodal AI models on a visual mathematical reasoning task, and how weakly raw scale predicts the score.

* **The "Kimi" models** suggest that training techniques or architectural choices (hinted at by the "-Thinking" suffix) can substantially improve parameter efficiency. At ~3B activated parameters, `Kimi-VL-A3B-Thinking` (~37) roughly matches the 32B and 72B Qwen-2.5-VL models (~38), and `Kimi-VL-A3B-Thinking-2506` (~60) posts the highest score on the chart.

* The **plateau in the Qwen series** suggests diminishing returns from simply scaling a particular model architecture on this benchmark. Beyond a certain point (~32B for this model family), other factors such as data quality, training methodology, or architectural limits may become the primary bottleneck.

* The **underperformance of Llama-3.2-11B-Inst.** highlights that not all models of a certain size are created equal; their training data, objective alignment, and architecture critically determine their capability on specialized tasks like visual math.

* The **QVQ-Max-Preview** point shows that very large scale can still push performance higher, but its score (~49) comes at the largest parameter count on the chart and is still about 11 points below the much smaller `Kimi-VL-A3B-Thinking-2506` (~60).
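One way to express the efficiency argument above is a Pareto check: a model is "dominated" if another model is at least as small and scores at least as high. The sketch below applies that idea to the approximate chart readings. The `dominates` and `pareto_frontier` helpers are hypothetical names, the data are eyeballed estimates, and the x-value for `QVQ-Max-Preview` is uncertain.

```python
# Approximate (activated parameters in B, MathVision Pass@1) read off the chart.
POINTS = {
    "Kimi-VL-A3B-Thinking-2506": (3, 60),
    "Kimi-VL-A3B-Thinking": (3, 37),
    "Gemma-3-4B-IT": (4, 25),
    "Gemma-3-12B-IT": (12, 32),
    "Gemma-3-27B-IT": (27, 35),
    "Qwen-2.5-VL-3B": (3, 21),
    "Qwen-2.5-VL-7B": (7, 25),
    "Qwen-2.5-VL-32B": (32, 38),
    "Qwen-2.5-VL-72B": (72, 38),
    "DeepSeek-VL2-A4.5B": (4.5, 18),
    "Llama-3.2-11B-Inst.": (11, 15),
    "QVQ-72B-Preview": (72, 36),
    "QVQ-Max-Preview": (120, 49),  # x-value is uncertain ("~120B?")
}


def dominates(a, b):
    """True if `a` is no larger and no worse than `b`, and not identical."""
    return a[0] <= b[0] and a[1] >= b[1] and a != b


def pareto_frontier(points):
    """Names of models that no other model in `points` dominates."""
    return {
        name
        for name, p in points.items()
        if not any(dominates(q, p) for q in points.values())
    }


frontier_all = pareto_frontier(POINTS)

# Repeat without the top model to see which models it was overshadowing.
without_top = {k: v for k, v in POINTS.items() if k != "Kimi-VL-A3B-Thinking-2506"}
frontier_rest = pareto_frontier(without_top)

if __name__ == "__main__":
    print(sorted(frontier_all))
    print(sorted(frontier_rest))
```

With these readings, `Kimi-VL-A3B-Thinking-2506` is the only non-dominated model. Removing it leaves `Kimi-VL-A3B-Thinking`, `Qwen-2.5-VL-32B`, and `QVQ-Max-Preview` on the frontier.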

**In summary, the data suggest that on this visual math benchmark, model design and training can matter far more than brute-force scaling. The chart is useful for evaluating not just raw performance, but also the parameter efficiency of different AI development approaches.**