
## Process Diagram: LLM Prompting Methods for Financial Question Answering

### Overview

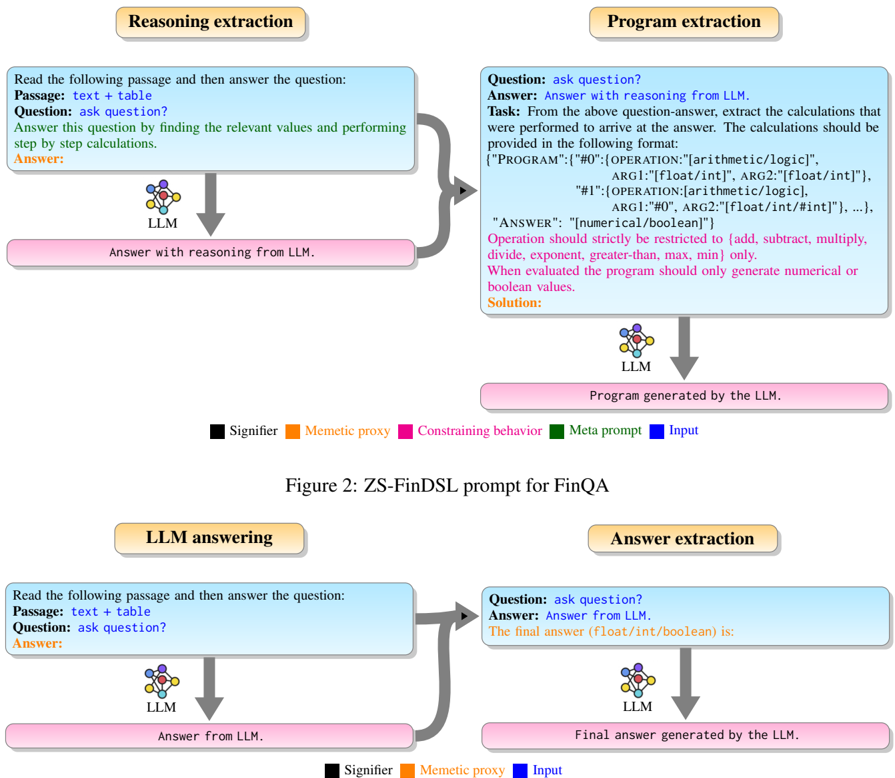

The image is a flowchart comparing two methods for prompting a large language model (LLM) to answer financial questions in the FinQA setting. The diagram has two sections: the top section illustrates the "ZS-FinDSL prompt" method, and the bottom section shows a more direct "LLM answering" method. Each section uses color-coded boxes and arrows to mark component types and the flow of information.

### Components/Axes

The diagram is not a data chart with axes but a process flowchart. Its components are defined by labeled boxes, connecting arrows, and a color-coded legend.

**Legend (Present in both sections, positioned at the bottom):**

* **Black Box:** Signifier

* **Orange Box:** Memetic proxy

* **Pink Box:** Constraining behavior

* **Green Box:** Meta prompt

* **Blue Box:** Input

**Top Section: Figure 2: ZS-FinDSL prompt for FinQA**

This section details a two-stage process: "Reasoning extraction" followed by "Program extraction."

1. **Reasoning extraction (Left Column):**

* **Input Box (Blue - "Input"):** Contains the prompt template.

* Text: "Read the following passage and then answer the question:"

* Text: "Passage: text + table"

* Text: "Question: ask question?"

* Text (in green): "Answer this question by finding the relevant values and performing step by step calculations."

* Text (in orange): "Answer:"

* **Process Icon:** An icon labeled "LLM" with a downward arrow.

* **Output Box (Pink - "Constraining behavior"):** "Answer with reasoning from LLM."

2. **Program extraction (Right Column):**

* **Input Box (Blue - "Input"):** Contains a more complex prompt template.

* Text: "Question: ask question?"

* Text: "Answer: Answer with reasoning from LLM."

* Text (in green): "Task: From the above question-answer, extract the calculations that were performed to arrive at the answer. The calculations should be provided in the following format:"

* Text (code block): `{"PROGRAM":{"#0":{"OPERATION":"[arithmetic/logic]", ARG1:"[float/int]", ARG2:"[float/int]"}, "#1":{"OPERATION:[arithmetic/logic]", ARG1:"#0", ARG2:"[float/int/#int]"}, ...}, "ANSWER": "[numerical/boolean]"}`

* Text (in pink): "Operation should strictly be restricted to {add, subtract, multiply, divide, exponent, greater-than, max, min} only. When evaluated the program should only generate numerical or boolean values."

* Text (in orange): "Solution:"

* **Process Icon:** An icon labeled "LLM" with a downward arrow.

* **Output Box (Pink - "Constraining behavior"):** "Program generated by the LLM."
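The two ZS-FinDSL prompts can be assembled from the template text shown in the figure. The sketch below is a minimal illustration, not the authors' code: the function names `build_reasoning_prompt` and `build_program_prompt` are hypothetical, and placing each segment on its own line is an assumption, since the figure shows the segments but not the exact separators.

```python
# Output-format string copied verbatim from the figure (its quoting is inconsistent there too).
PROGRAM_FORMAT = (
    '{"PROGRAM":{"#0":{"OPERATION":"[arithmetic/logic]", ARG1:"[float/int]", ARG2:"[float/int]"}, '
    '"#1":{"OPERATION:[arithmetic/logic]", ARG1:"#0", ARG2:"[float/int/#int]"}, ...}, '
    '"ANSWER": "[numerical/boolean]"}'
)


def build_reasoning_prompt(passage: str, question: str) -> str:
    """Stage 1 (reasoning extraction): input, meta prompt, then the 'Answer:' cue."""
    return (
        "Read the following passage and then answer the question:\n"
        f"Passage: {passage}\n"
        f"Question: {question}\n"
        # Meta prompt (green in the figure).
        "Answer this question by finding the relevant values and "
        "performing step by step calculations.\n"
        # Memetic proxy (orange in the figure).
        "Answer:"
    )


def build_program_prompt(question: str, reasoning_answer: str) -> str:
    """Stage 2 (program extraction): feeds the stage-1 answer back to the model."""
    return (
        f"Question: {question}\n"
        f"Answer: {reasoning_answer}\n"
        "Task: From the above question-answer, extract the calculations that were "
        "performed to arrive at the answer. The calculations should be provided in "
        "the following format:\n"
        f"{PROGRAM_FORMAT}\n"
        # Constraining behavior (pink in the figure); not an f-string, so braces are literal.
        "Operation should strictly be restricted to {add, subtract, multiply, divide, "
        "exponent, greater-than, max, min} only. When evaluated the program should only "
        "generate numerical or boolean values.\n"
        "Solution:"
    )
```

In practice, `passage` would hold the serialized text and table, and the stage-1 model output is passed unchanged as `reasoning_answer`.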

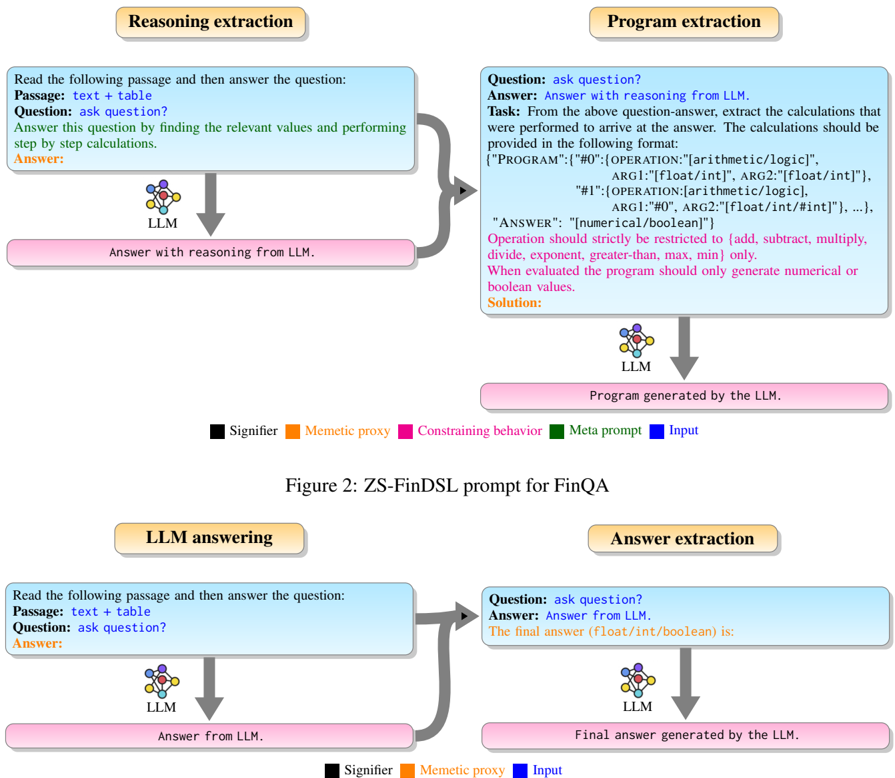

**Bottom Section: LLM answering and Answer extraction**

This section illustrates a simpler two-stage process that recovers only the final answer, not a program.

1. **LLM answering (Left Column):**

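* **Input Box (Blue - "Input"):** The same as the input box in the top section's "Reasoning extraction," except that it omits the green meta prompt ("Answer this question by finding the relevant values and performing step by step calculations.").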

* Text: "Read the following passage and then answer the question:"

* Text: "Passage: text + table"

* Text: "Question: ask question?"

* Text (in orange): "Answer:"

* **Process Icon:** An icon labeled "LLM" with a downward arrow.

* **Output Box (Pink - "Constraining behavior"):** "Answer from LLM."

2. **Answer extraction (Right Column):**

* **Input Box (Blue - "Input"):**

* Text: "Question: ask question?"

* Text: "Answer: Answer from LLM."

* Text (in green): "The final answer (float/int/boolean) is:"

* **Process Icon:** An icon labeled "LLM" with a downward arrow.

* **Output Box (Pink - "Constraining behavior"):** "Final answer generated by the LLM."
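The answer-extraction prompt asks for a float, int, or boolean, but the model still returns free text. A small parser can map that text to a typed value. The sketch below is our own heuristic and is not part of the method in the figure: the name `parse_final_answer` is hypothetical, a leading "yes"/"true" or "no"/"false" is treated as a boolean, otherwise the first number is taken with thousands separators removed, and `None` signals that nothing usable was found.

```python
import re
from typing import Optional, Union

Answer = Union[int, float, bool]

# First signed number, allowing thousands separators and an optional decimal part.
_NUMBER = re.compile(r"-?\d[\d,]*(?:\.\d+)?|-?\.\d+")


def parse_final_answer(text: str) -> Optional[Answer]:
    """Map answer-extraction output to int, float or bool; None if nothing usable is found."""
    cleaned = text.strip().lower()
    # Whole-word check, so that e.g. "none" is not read as "no".
    if re.match(r"(yes|true)\b", cleaned):
        return True
    if re.match(r"(no|false)\b", cleaned):
        return False
    match = _NUMBER.search(cleaned)
    if match is None:
        return None
    token = match.group().replace(",", "")
    # Units such as "%" or "million" are dropped; interpreting them is left to the caller.
    return float(token) if "." in token else int(token)
```

For example, `"$1,234.5 million"` becomes `1234.5` and `"Yes, it increased."` becomes `True`. Taking the first number is a simplification; a verbose answer that mentions several figures can defeat it.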

### Detailed Analysis

The diagram specifies the prompt structure and expected output of each stage in both approaches.

* **ZS-FinDSL Method (Top):** This is a structured, two-step method.

1. **Step 1 (Reasoning):** The LLM is first prompted to generate a natural language, step-by-step reasoning answer to a financial question based on a passage containing text and a table.

2. **Step 2 (Program Synthesis):** The LLM is prompted again, with its own Step 1 answer as input. Its task is now to *extract* the underlying calculations and express them as a program in a JSON-like schema (`{"PROGRAM":{...}, "ANSWER":...}`). Operations are restricted to a fixed set (add, subtract, multiply, divide, exponent, greater-than, max, min), and this constraining text (pink) pushes the model toward structured, executable output.

* **Direct Answer Method (Bottom):** This is a simpler, more direct method.

1. **Step 1 (Answering):** The LLM is prompted to answer the question directly. Its response may still contain reasoning, but the prompt lacks the explicit instruction, present in the ZS-FinDSL method, to find the relevant values and calculate step by step.

2. **Step 2 (Extraction):** A second prompt asks the LLM to extract just the final numerical or boolean answer from its previous response.
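A program in this schema is only useful if something evaluates it. Below is a minimal Python interpreter for it, written under several assumptions that the figure does not settle: the model's output is strict JSON (the schema in the figure leaves some keys unquoted, so real outputs may need repair first), steps are labeled `#0`, `#1`, ... and evaluated in that order, a string starting with `#` refers to an earlier step's result, and the last step's result is the answer. The name `run_program` and the mapping from operation names to Python functions are our own, and the revenue figures in the example are made up.

```python
import json
import operator
from typing import Any, Callable, Dict, Union

Value = Union[float, bool]

# Operation names come from the ZS-FinDSL prompt; the Python callables are an assumption.
OPERATIONS: Dict[str, Callable[..., Value]] = {
    "add": operator.add,
    "subtract": operator.sub,
    "multiply": operator.mul,
    "divide": operator.truediv,
    "exponent": operator.pow,
    "greater-than": operator.gt,
    "max": max,
    "min": min,
}


def _resolve(arg: Any, results: Dict[str, Value]) -> Value:
    """'#k' refers to the result of step k; anything else is parsed as a number."""
    if isinstance(arg, str) and arg.startswith("#"):
        return results[arg]  # KeyError if step k has not been evaluated yet
    return float(arg)


def run_program(raw: str) -> Value:
    """Evaluate a ZS-FinDSL-style program given as strict JSON; return the last step's result."""
    program = json.loads(raw)["PROGRAM"]
    if not program:
        raise ValueError("program has no steps")
    results: Dict[str, Value] = {}
    # Evaluate steps in the numeric order of their '#k' labels.
    for step_id in sorted(program, key=lambda s: int(s[1:])):
        step = program[step_id]
        op = OPERATIONS[step["OPERATION"].strip().lower()]  # KeyError if not allowed
        results[step_id] = op(_resolve(step["ARG1"], results), _resolve(step["ARG2"], results))
    return results[step_id]


# Hypothetical example: relative revenue change from 1000 to 1200.
example = json.dumps({
    "PROGRAM": {
        "#0": {"OPERATION": "subtract", "ARG1": "1200", "ARG2": "1000"},
        "#1": {"OPERATION": "divide", "ARG1": "#0", "ARG2": "1000"},
    },
    "ANSWER": "0.2",
})
print(run_program(example))  # prints 0.2
```

This sketch ignores the model's own `"ANSWER"` field; comparing it with the computed value is one simple way to flag cases where the model's arithmetic and its stated answer disagree.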

### Key Observations

1. **Structural Complexity:** The ZS-FinDSL method is more complex because its second stage converts natural-language reasoning into a formal program. The direct method's second stage only isolates the final answer.

2. **Prompt Engineering:** Where green text ("Meta prompt") appears in an input box, it defines the task for that stage; the input box for direct LLM answering has none. The ZS-FinDSL program-extraction prompt is the most detailed, specifying the exact output format and the allowed operations.

3. **Constraining Behavior:** The pink output boxes represent the constrained output from the LLM at each stage. In ZS-FinDSL, the constraint is a structured program; in the direct method, it's the final answer value.

4. **Flow:** Both methods use a two-stage pipeline where the output of the first LLM call becomes part of the input for the second LLM call.
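Because both methods share the same two-call shape, one small helper can drive either of them. The sketch below assumes a hypothetical `LLM` type (any function from prompt text to response text) and uses a canned stand-in model so it runs offline. The prompts mirror the direct method, and the question and numbers are illustrative only.

```python
from typing import Callable, List, Tuple

# Hypothetical type: any function that sends a prompt to an LLM and returns its text.
LLM = Callable[[str], str]


def two_stage(llm: LLM, first_prompt: str,
              make_second_prompt: Callable[[str], str]) -> Tuple[str, str]:
    """Run stage 1, embed its output in the stage-2 prompt, and return both outputs."""
    first_output = llm(first_prompt)
    second_output = llm(make_second_prompt(first_output))
    return first_output, second_output


# Usage with a canned stand-in for a real model.
question = "What was the change in revenue from 2018 to 2019?"
seen_prompts: List[str] = []


def fake_llm(prompt: str) -> str:
    seen_prompts.append(prompt)
    if len(seen_prompts) == 1:
        return "Revenue rose from 1000 to 1200, so the change is 1200 - 1000 = 200."
    return "200"


reasoning, final = two_stage(
    fake_llm,
    "Read the following passage and then answer the question:\n"
    "Passage: <text + table>\n"
    f"Question: {question}\n"
    "Answer:",
    lambda answer: f"Question: {question}\nAnswer: {answer}\n"
                   "The final answer (float/int/boolean) is:",
)
print(final)  # prints 200
```

Swapping in the ZS-FinDSL prompts changes only the two prompt arguments, which makes it easy to compare the methods on the same questions.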

### Interpretation

This diagram illustrates a research or engineering approach to improving the reliability and interpretability of LLMs on financial reasoning tasks (FinQA).

* **The ZS-FinDSL method** aims to make the LLM externalize its reasoning as a verifiable, executable program, so the program-extraction stage works as a form of **chain-of-thought verification**. Because the output is a structured program, developers can inspect the exact calculations, debug errors, and evaluate the program themselves to check that the final answer follows from a logical sequence of arithmetic operations. This helps mitigate, though not eliminate, the "black box" problem of LLM reasoning.

* **The direct answer method** represents a more conventional approach but may be less transparent. The second "answer extraction" step suggests an attempt to parse the final answer from a potentially verbose response, which can be error-prone.

* **The comparison** highlights a trade-off: the ZS-FinDSL method imposes a higher upfront cost in prompt design and complexity but yields a more structured, auditable, and potentially more reliable output. The direct method is simpler but may lack verifiability. The diagram serves as a blueprint for implementing the more rigorous ZS-FinDSL prompting strategy.
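Auditability can be made concrete by checking a generated program before evaluating it. The sketch below is one possible set of checks, not something the figure prescribes. It assumes strict JSON output, and the name `audit_program` is hypothetical. It reports operations outside the allowed set, references that do not point to an existing earlier step, and literal arguments that are not numbers.

```python
import json
from typing import List

# Allowed operations as listed in the ZS-FinDSL program-extraction prompt.
ALLOWED_OPERATIONS = {"add", "subtract", "multiply", "divide", "exponent",
                      "greater-than", "max", "min"}


def audit_program(raw: str) -> List[str]:
    """List problems in a ZS-FinDSL-style program; an empty list means none were found."""
    try:
        program = json.loads(raw)["PROGRAM"]
    except (json.JSONDecodeError, KeyError, TypeError) as exc:
        return [f"unparseable program: {exc!r}"]
    if not isinstance(program, dict):
        return ["PROGRAM should map step ids to steps"]

    problems: List[str] = []
    for step_id, step in program.items():
        if not (step_id.startswith("#") and step_id[1:].isdecimal()):
            problems.append(f"{step_id}: step ids should look like #0, #1, ...")
            continue
        if not isinstance(step, dict):
            problems.append(f"{step_id}: step should be an object")
            continue
        index = int(step_id[1:])
        op = str(step.get("OPERATION", "")).strip().lower()
        if op not in ALLOWED_OPERATIONS:
            problems.append(f"{step_id}: operation {op!r} is outside the allowed set")
        for key in ("ARG1", "ARG2"):
            arg = step.get(key)
            if isinstance(arg, str) and arg.startswith("#"):
                ref = arg[1:]
                # A reference must point to an existing, earlier step.
                if not ref.isdecimal() or int(ref) >= index or arg not in program:
                    problems.append(f"{step_id}: {key} refers to {arg}, not an earlier step")
            else:
                try:
                    float(arg)
                except (TypeError, ValueError):
                    problems.append(f"{step_id}: {key} value {arg!r} is not a number")
    return problems
```

A program with the single step `{"OPERATION": "log", "ARG1": "#1", "ARG2": "x"}` yields three problems, one for each check. The direct method has no comparable intermediate artifact to inspect.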
