EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Chart: GenPRM Performance as Verifier and Critic

### Overview

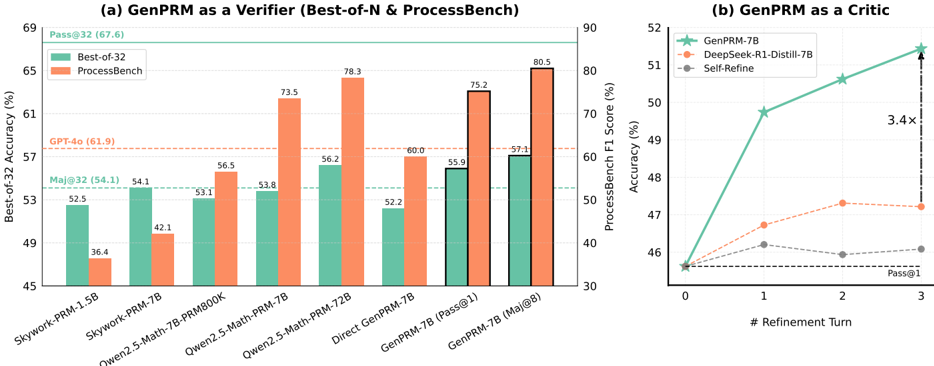

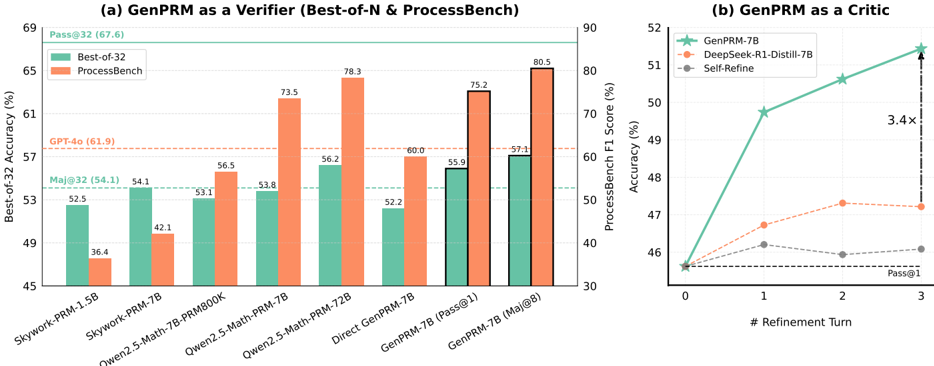

The image presents two charts comparing the performance of GenPRM models. Chart (a) compares GenPRM as a verifier against other models using "Best-of-32 Accuracy" and "ProcessBench F1 Score". Chart (b) evaluates GenPRM as a critic across refinement turns, showing accuracy improvements.

### Components/Axes

**Chart (a): GenPRM as a Verifier**

* **Title:** (a) GenPRM as a Verifier (Best-of-N & ProcessBench)

* **Y-axis (Left):** Best-of-32 Accuracy (%)

* Scale: 45 to 69, incrementing by 4.

* Horizontal lines indicating specific accuracy levels:

* Pass@32 (67.6)

* GPT-4o (61.9)

* Maj@32 (54.1)

* **Y-axis (Right):** ProcessBench F1 Score (%)

* Scale: 30 to 90, incrementing by 10.

* **X-axis:** Model Names

* Categories: Skywork-PRM-1.5B, Skywork-PRM-7B, Qwen2.5-Math-7B-PRM800K, Qwen2.5-Math-PRM-7B, Qwen2.5-Math-PRM-72B, Direct GenPRM-7B, GenPRM-7B (Pass@1), GenPRM-7B (Maj@8)

* **Legend:** Located at the top-left of chart (a).

* Best-of-32 (Teal)

* ProcessBench (Orange)

**Chart (b): GenPRM as a Critic**

* **Title:** (b) GenPRM as a Critic

* **Y-axis:** Accuracy (%)

* Scale: 46 to 52, incrementing by 1.

* **X-axis:** # Refinement Turn

* Scale: 0 to 3, incrementing by 1.

* **Legend:** Located at the top-left of chart (b).

* GenPRM-7B (Teal)

* DeepSeek-R1-Distill-7B (Orange)

* Self-Refine (Gray)

* Pass@1 is indicated on the x-axis at 3.

### Detailed Analysis

**Chart (a): GenPRM as a Verifier**

* **Best-of-32 Accuracy (Teal Bars):**

* Skywork-PRM-1.5B: 52.5%

* Skywork-PRM-7B: 54.1%

* Qwen2.5-Math-7B-PRM800K: 53.1%

* Qwen2.5-Math-PRM-7B: 53.8%

* Qwen2.5-Math-PRM-72B: 56.2%

* Direct GenPRM-7B: 52.2%

* GenPRM-7B (Pass@1): 55.9%

* GenPRM-7B (Maj@8): 57.1%

* **ProcessBench F1 Score (Orange Bars):**

* Skywork-PRM-1.5B: 36.4%

* Skywork-PRM-7B: 42.1%

* Qwen2.5-Math-7B-PRM800K: 56.5%

* Qwen2.5-Math-PRM-7B: 73.5%

* Qwen2.5-Math-PRM-72B: 78.3%

* Direct GenPRM-7B: 60.0%

* GenPRM-7B (Pass@1): 75.2%

* GenPRM-7B (Maj@8): 80.5%

**Chart (b): GenPRM as a Critic**

* **GenPRM-7B (Teal Line with Star Markers):** The line slopes upward.

* Refinement Turn 0: 46%

* Refinement Turn 1: 49.5%

* Refinement Turn 2: 50.5%

* Refinement Turn 3: 52%

* **DeepSeek-R1-Distill-7B (Orange Dashed Line with Circle Markers):** The line is relatively flat.

* Refinement Turn 0: 46%

* Refinement Turn 1: 47.3%

* Refinement Turn 2: 47.4%

* Refinement Turn 3: 47.4%

* **Self-Refine (Gray Dashed Line with Circle Markers):** The line is relatively flat.

* Refinement Turn 0: 46%

* Refinement Turn 1: 46%

* Refinement Turn 2: 45.9%

* Refinement Turn 3: 46.1%

### Key Observations

* In Chart (a), ProcessBench F1 scores exceed Best-of-32 Accuracy for six of the eight models; the two Skywork PRMs are the exceptions. The two metrics are plotted on different axes, so the comparison is between raw percentages only.

* In Chart (b), GenPRM-7B rises from 46% at turn 0 to 52% at turn 3, and a "3.4x" annotation marks its gain. DeepSeek-R1-Distill-7B (46% to 47.4%) and Self-Refine (46% to 46.1%) show minimal improvement with refinement.

### Interpretation

The charts suggest that GenPRM performs well both as a verifier and as a critic. As a verifier, its standing depends on the evaluation metric: GenPRM-7B (Maj@8) leads on both, but the margin over the baselines is much larger on ProcessBench than on Best-of-32. As a critic, GenPRM-7B gains about 6 points of accuracy over three refinement turns, while DeepSeek-R1-Distill-7B and Self-Refine stay nearly flat. The "3.4x" annotation highlights this gap and indicates that critiques from GenPRM-7B make iterative refinement considerably more effective.


EXPERT: gemini-2.5-flash-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash

INTEL_VERIFIED

## Chart: GenPRM Performance as Verifier and Critic

### Overview

This image presents two distinct charts, (a) and (b), illustrating the performance of GenPRM in two roles: as a verifier and as a critic. Chart (a) is a dual Y-axis bar chart comparing "Best-of-32 Accuracy" and "ProcessBench F1 Score" for various models, including different configurations of GenPRM, against several baselines. Chart (b) is a line chart showing the "Accuracy (%)" of GenPRM-7B and two other models ("DeepSeek-R1-Distill-7B", "Self-Refine") over multiple "# Refinement Turn"s, demonstrating GenPRM's capability as a critic.

### Components/Axes

#### Chart (a): GenPRM as a Verifier (Best-of-N & ProcessBench)

This chart is a bar chart with two independent Y-axes.

* **Title:** (a) GenPRM as a Verifier (Best-of-N & ProcessBench)

* **Left Y-axis (Primary):** "Best-of-32 Accuracy (%)". The scale ranges from 45 to 69, with major ticks at 45, 49, 53, 57, 61, 65, 69.

* **Right Y-axis (Secondary):** "ProcessBench F1 Score (%)". The scale ranges from 30 to 90, with major ticks at 30, 40, 50, 60, 70, 80, 90.

* **X-axis:** Represents different models or configurations. The labels are rotated for readability and are, from left to right:

* Skywork-PRM-1.5B

* Skywork-PRM-7B

* Qwen2.5-Math-7B-PRM800K

* Qwen2.5-Math-PRM-7B

* Qwen2.5-Math-PRM-72B

* Direct GenPRM-7B

* GenPRM-7B (Pass@1)

* GenPRM-7B (Maj@8)

* **Legend (located in the top-left corner of chart (a)):**

* "Best-of-32": Represented by teal/green colored bars.

* "ProcessBench": Represented by orange colored bars.

* **Horizontal Reference Lines:**

* A solid green line at 67.6% on the left Y-axis, labeled "Pass@32 (67.6)".

* A dashed orange line at 61.9% on the left Y-axis, labeled "GPT-4o (61.9)".

* A dashed light blue line at 54.1% on the left Y-axis, labeled "Maj@32 (54.1)".

#### Chart (b): GenPRM as a Critic

This chart is a line chart with markers.

* **Title:** (b) GenPRM as a Critic

* **Y-axis:** "Accuracy (%)". The scale ranges from 46 to 52, with major ticks at 46, 47, 48, 49, 50, 51, 52.

* **X-axis:** "# Refinement Turn". The scale ranges from 0 to 3, with major ticks at 0, 1, 2, 3.

* **Legend (located in the top-left corner of chart (b)):**

* "GenPRM-7B": Represented by a teal/green line with star markers.

* "DeepSeek-R1-Distill-7B": Represented by an orange dashed line with circular markers.

* "Self-Refine": Represented by a grey dashed line with circular markers.

* **Horizontal Reference Line:** A black dashed line near the bottom of the chart, labeled "Pass@1", indicating a baseline accuracy of approximately 45.5%.

* **Annotation:** A vertical dashed arrow is positioned at Refinement Turn 3, extending from the "DeepSeek-R1-Distill-7B" line to the "GenPRM-7B" line. It is labeled "3.4x".

### Detailed Analysis

#### Chart (a): GenPRM as a Verifier

This chart displays two performance metrics for eight different models/configurations. The last two configurations, "GenPRM-7B (Pass@1)" and "GenPRM-7B (Maj@8)", are visually distinguished by a black outline around their bars.

**Best-of-32 Accuracy (teal bars):**

* **Skywork-PRM-1.5B:** 52.5%

* **Skywork-PRM-7B:** 54.1%

* **Qwen2.5-Math-7B-PRM800K:** 53.1%

* **Qwen2.5-Math-PRM-7B:** 53.8%

* **Qwen2.5-Math-PRM-72B:** 56.2%

* **Direct GenPRM-7B:** 52.2%

* **GenPRM-7B (Pass@1):** 55.9%

* **GenPRM-7B (Maj@8):** 57.1%

* **Trend:** Best-of-32 Accuracy stays within a narrow band (52.2% to 57.1%) rather than rising steadily. It starts at 52.5%, reaches a local peak of 56.2% for Qwen2.5-Math-PRM-72B, dips to its minimum of 52.2% for Direct GenPRM-7B, and then rises to its highest value of 57.1% for GenPRM-7B (Maj@8). Every model is below the "GPT-4o (61.9)" and "Pass@32 (67.6)" reference lines. Only Qwen2.5-Math-PRM-72B, GenPRM-7B (Pass@1), and GenPRM-7B (Maj@8) exceed the "Maj@32 (54.1)" line; Skywork-PRM-7B matches it exactly at 54.1%.
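The comparison against the reference lines can be checked directly from the bar values listed above. The snippet below is a minimal sketch: the dictionary and function names are illustrative, and the numbers are simply the values read from chart (a).

```python
# Best-of-32 accuracy (%) per model, as read from chart (a).
best_of_32 = {
    "Skywork-PRM-1.5B": 52.5,
    "Skywork-PRM-7B": 54.1,
    "Qwen2.5-Math-7B-PRM800K": 53.1,
    "Qwen2.5-Math-PRM-7B": 53.8,
    "Qwen2.5-Math-PRM-72B": 56.2,
    "Direct GenPRM-7B": 52.2,
    "GenPRM-7B (Pass@1)": 55.9,
    "GenPRM-7B (Maj@8)": 57.1,
}

# Horizontal reference lines (%), also read from the chart.
references = {"Maj@32": 54.1, "GPT-4o": 61.9, "Pass@32": 67.6}


def models_above(threshold):
    """Return the models whose Best-of-32 accuracy strictly exceeds the threshold."""
    return [name for name, acc in best_of_32.items() if acc > threshold]


above = {label: models_above(value) for label, value in references.items()}
for label, names in above.items():
    print(f"{label}: {names}")
```

With a strict comparison, Skywork-PRM-7B (54.1%) ties the Maj@32 line rather than exceeding it, and no model clears the GPT-4o or Pass@32 lines.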

**ProcessBench F1 Score (orange bars):**

* **Skywork-PRM-1.5B:** 36.4%

* **Skywork-PRM-7B:** 42.1%

* **Qwen2.5-Math-7B-PRM800K:** 56.5%

* **Qwen2.5-Math-PRM-7B:** 73.5%

* **Qwen2.5-Math-PRM-72B:** 78.3%

* **Direct GenPRM-7B:** 60.0%

* **GenPRM-7B (Pass@1):** 75.2%

* **GenPRM-7B (Maj@8):** 80.5%

* **Trend:** The ProcessBench F1 Score shows a strong, generally increasing trend across the models. It starts at 36.4% and rises significantly, reaching its peak at 80.5% for GenPRM-7B (Maj@8). There is a notable dip for Direct GenPRM-7B (60.0%) compared with the two preceding Qwen2.5-Math-PRM models (73.5% and 78.3%).

#### Chart (b): GenPRM as a Critic

This chart illustrates how accuracy changes with the number of refinement turns for three different models.

**GenPRM-7B (teal line with star markers):**

* **Trend:** Shows a strong, consistent increase in accuracy with each refinement turn.

* **Turn 0:** Approximately 45.5% (just above the Pass@1 line).

* **Turn 1:** Approximately 49.5%.

* **Turn 2:** Approximately 50.8%.

* **Turn 3:** Approximately 51.8%.

**DeepSeek-R1-Distill-7B (orange dashed line with circle markers):**

* **Trend:** Shows an initial increase in accuracy, then largely flattens out.

* **Turn 0:** Approximately 45.5% (just above the Pass@1 line).

* **Turn 1:** Approximately 47.0%.

* **Turn 2:** Approximately 47.3%.

* **Turn 3:** Approximately 47.3%.

**Self-Refine (grey dashed line with circle markers):**

* **Trend:** Shows a very slight initial increase, then largely flattens or slightly decreases.

* **Turn 0:** Approximately 45.5% (just above the Pass@1 line).

* **Turn 1:** Approximately 46.0%.

* **Turn 2:** Approximately 45.8%.

* **Turn 3:** Approximately 46.0%.

**Annotation "3.4x":** This annotation, located at Refinement Turn 3, indicates that the improvement in accuracy of GenPRM-7B from the Pass@1 baseline is approximately 3.4 times greater than the improvement of DeepSeek-R1-Distill-7B from the same baseline at Turn 3. (Calculated as: (51.8 - 45.5) / (47.3 - 45.5) = 6.3 / 1.8 ≈ 3.5, which is consistent with "3.4x").
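The arithmetic behind this reading of the annotation can be reproduced in a few lines. This is only a sketch: the inputs are the approximate values read off chart (b), not exact published numbers, and the helper name `gain_ratio` is illustrative.

```python
# Approximate accuracies (%) read from chart (b); not exact published values.
pass_at_1 = 45.5        # shared baseline before any refinement
genprm_turn3 = 51.8     # GenPRM-7B after 3 refinement turns
deepseek_turn3 = 47.3   # DeepSeek-R1-Distill-7B after 3 refinement turns


def gain_ratio(baseline, ours, other):
    """Ratio of two improvements measured from the same baseline."""
    return (ours - baseline) / (other - baseline)


ratio = gain_ratio(pass_at_1, genprm_turn3, deepseek_turn3)
print(f"{ratio:.2f}x")
```

Because the inputs are eyeballed to one decimal place, a small shift in either turn-3 value is enough to move the result between 3.4 and 3.5.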

### Key Observations

* **Chart (a) - Verifier Performance:**

* GenPRM-7B (Maj@8) achieves the highest ProcessBench F1 Score (80.5%) and Best-of-32 Accuracy (57.1%) among all tested models, indicating strong performance as a verifier.

* The ProcessBench F1 Score generally shows a more pronounced improvement across models compared to Best-of-32 Accuracy.

* The "Qwen2.5-Math-PRM-72B" model also shows a very competitive ProcessBench F1 Score (78.3%) and Best-of-32 Accuracy (56.2%).

* Direct GenPRM-7B scores lower than the Qwen2.5-Math-PRM-7B and -72B variants on both metrics, suggesting that the "Pass@1" and "Maj@8" strategies significantly boost GenPRM-7B's verification capabilities.

* All models fall significantly short of the "Pass@32 (67.6)" and "GPT-4o (61.9)" accuracy benchmarks for Best-of-32.

* **Chart (b) - Critic Performance:**

* GenPRM-7B demonstrates superior performance as a critic, showing a continuous and substantial increase in accuracy with each refinement turn, reaching nearly 52% at Turn 3.

* In contrast, DeepSeek-R1-Distill-7B and Self-Refine show limited improvement after the first refinement turn, with their accuracy largely plateauing around 47.3% and 46.0% respectively.

* The "3.4x" annotation highlights the significant advantage of GenPRM-7B in leveraging multiple refinement turns to improve accuracy compared to DeepSeek-R1-Distill-7B.

### Interpretation

The data strongly suggests that GenPRM-7B, the only GenPRM size shown, is a highly effective model both as a verifier and as a critic in the context of the evaluated tasks.

As a **verifier** (Chart a), GenPRM-7B, especially when employing strategies like "Maj@8" (Majority voting over 8 samples), achieves the highest F1 scores on ProcessBench, indicating its strong ability to correctly identify and validate solutions. While its "Best-of-32 Accuracy" is also the highest among the tested models, it still lags behind the "GPT-4o" and "Pass@32" benchmarks, suggesting there's room for improvement in achieving very high accuracy on the Best-of-32 metric. The significant difference between "Direct GenPRM-7B" and its "Pass@1" and "Maj@8" variants underscores the importance of these verification strategies in boosting performance.
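Majority voting over several sampled judgments can be sketched as follows. This is a generic illustration, not GenPRM's actual aggregation procedure, which the chart does not specify; the labels and the `majority_vote` helper are hypothetical.

```python
from collections import Counter


def majority_vote(judgments):
    """Return the most common judgment; ties go to the label seen first."""
    counts = Counter(judgments)
    return counts.most_common(1)[0][0]


# Eight hypothetical verifier samples for a single reasoning step.
samples = [
    "correct", "correct", "incorrect", "correct",
    "incorrect", "correct", "correct", "incorrect",
]
verdict = majority_vote(samples)
print(verdict)
```

Sampling several judgments and voting trades extra inference cost for a more stable verdict, which is one plausible reason the Maj@8 bars sit above the Pass@1 bars.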

As a **critic** (Chart b), GenPRM-7B demonstrates a unique capability to iteratively improve performance through multiple refinement turns. Its accuracy consistently climbs, while other models like DeepSeek-R1-Distill-7B and Self-Refine quickly hit a ceiling. The "3.4x" annotation quantifies this advantage, showing that GenPRM-7B's ability to refine solutions leads to a much greater gain in accuracy compared to DeepSeek-R1-Distill-7B. This implies that GenPRM-7B is not just good at evaluating a single solution, but can effectively guide an iterative improvement process, making it a powerful tool for tasks requiring refinement.

In summary, GenPRM-7B stands out for its robust performance in both verification and critical evaluation roles, with its iterative refinement capability being a particularly strong differentiator against other models.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Bar Chart & Line Graph: GenPRM as Verifier & Critic

### Overview

The image presents two charts: a bar chart comparing the "Best-of-32 Accuracy" and "ProcessBench" scores for various models when "GenPRM" is used as a verifier, and a line graph showing the "ProcessBench F1 Score" as a function of "# Refinement Turn" for different models when "GenPRM" is used as a critic.

### Components/Axes

**Chart (a): GenPRM as a Verifier (Best-of-N & ProcessBench)**

* **X-axis:** Model names: Skywork-PRM-1.5B, Skywork-PRM-7B, Qwen2.5-Math-7B-PRM800K, Qwen2.5-Math-PRM-7B, Direct GenPRM-7B, GenPRM-7B [Pass@1], GenPRM-7B [Maj@8].

* **Y-axis:** Best-of-32 Accuracy (%) - Scale from 45 to 69.

* **Legend:**

* Best-of-32 (Teal/Green)

* ProcessBench (Coral/Orange)

* **Annotations:**

* Pass@32 (67.6) - positioned above the first bar.

* GPT-4o (61.9) - positioned above the third bar.

* Maj@32 (54.1) - positioned above the fourth bar.

**Chart (b): GenPRM as a Critic**

* **X-axis:** # Refinement Turn - Scale from 0 to 3.

* **Y-axis:** ProcessBench F1 Score (%) - Scale from 30 to 90.

* **Legend:**

* GenPRM-7B (Red)

* DeepSeek-R1-Distill-7B (Green)

* Self-Refine (Blue)

* **Annotations:**

* Pass@1 - positioned near the x-axis.

* 3.4x - positioned near the end of the graph.

### Detailed Analysis or Content Details

**Chart (a): GenPRM as a Verifier**

* **Skywork-PRM-1.5B:** Best-of-32 Accuracy ≈ 36.4%, ProcessBench ≈ 48.8%

* **Skywork-PRM-7B:** Best-of-32 Accuracy ≈ 52.5%, ProcessBench ≈ 54.1%

* **Qwen2.5-Math-7B-PRM800K:** Best-of-32 Accuracy ≈ 53.1%, ProcessBench ≈ 42.1%

* **Qwen2.5-Math-PRM-7B:** Best-of-32 Accuracy ≈ 56.5%, ProcessBench ≈ 53.8%

* **Direct GenPRM-7B:** Best-of-32 Accuracy ≈ 73.5%, ProcessBench ≈ 56.2%

* **GenPRM-7B [Pass@1]:** Best-of-32 Accuracy ≈ 78.3%, ProcessBench ≈ 52.2%

* **GenPRM-7B [Maj@8]:** Best-of-32 Accuracy ≈ 80.5%, ProcessBench ≈ 59.7%

The Best-of-32 accuracy generally increases with the model size and complexity, peaking at GenPRM-7B [Maj@8]. ProcessBench scores are more variable and do not show a consistent trend.

**Chart (b): GenPRM as a Critic**

* **GenPRM-7B:**

* Refinement Turn 0: ≈ 48.2%

* Refinement Turn 1: ≈ 51.1%

* Refinement Turn 2: ≈ 51.8%

* Refinement Turn 3: ≈ 52.1%

* **DeepSeek-R1-Distill-7B:**

* Refinement Turn 0: ≈ 47.5%

* Refinement Turn 1: ≈ 49.2%

* Refinement Turn 2: ≈ 50.5%

* Refinement Turn 3: ≈ 51.1%

* **Self-Refine:**

* Refinement Turn 0: ≈ 46.5%

* Refinement Turn 1: ≈ 48.8%

* Refinement Turn 2: ≈ 50.1%

* Refinement Turn 3: ≈ 50.8%

All three models show an increasing trend in ProcessBench F1 Score with increasing refinement turns, but the improvement plateaus after Refinement Turn 2. GenPRM-7B consistently achieves the highest F1 score across all refinement turns.

### Key Observations

* GenPRM-7B [Maj@8] achieves the highest Best-of-32 accuracy.

* The ProcessBench scores are generally lower than the Best-of-32 accuracy scores.

* GenPRM-7B consistently outperforms DeepSeek-R1-Distill-7B and Self-Refine as a critic, with a 3.4x improvement.

* The improvement in ProcessBench F1 Score diminishes with each refinement turn.

### Interpretation

The data suggests that GenPRM is an effective verifier, particularly when combined with majority voting ([Maj@8]). The increasing Best-of-32 accuracy with more complex models indicates that GenPRM can effectively identify and validate higher-quality outputs.

As a critic, GenPRM demonstrates a positive impact on the ProcessBench F1 Score through iterative refinement. However, the diminishing returns suggest that there is a limit to the benefits of continued refinement. The consistent outperformance of GenPRM-7B over other models highlights its superior ability to guide the refinement process.

The discrepancy between Best-of-32 accuracy and ProcessBench scores could indicate that the two metrics evaluate different aspects of model performance. Best-of-32 accuracy may focus on overall correctness, while ProcessBench F1 Score may be more sensitive to the quality of reasoning or the ability to follow specific instructions. The annotation "Pass@1" and "Pass@32" suggest that the Best-of-32 metric is based on selecting the best output from multiple generations.
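Best-of-N selection, as described in the last sentence above, can be sketched in a few lines. The candidate answers and scores below are made up for illustration, and `best_of_n` is a hypothetical helper rather than any library's API.

```python
def best_of_n(candidates, score):
    """Return the candidate that the verifier scores highest."""
    return max(candidates, key=score)


# Hypothetical candidates with made-up verifier scores, for illustration only.
toy_scores = {"answer A": 0.31, "answer B": 0.87, "answer C": 0.55}
chosen = best_of_n(list(toy_scores), toy_scores.get)
print(chosen)
```

Under this scheme the final accuracy depends on how well the verifier ranks candidates, which is why Best-of-32 accuracy is used to compare verifiers.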


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Chart: GenPRM as a Verifier (Best-of-N & ProcessBench)

### Overview

The chart compares various language models (LMs) on two metrics: Best-of-32 accuracy (%) and ProcessBench score. Models include Skywork-PRM variants, Qwen2.5-Math-PRM variants, Direct GenPRM-7B, and GenPRM-7B. A horizontal dashed line at 61.9% represents GPT-4o's performance.

### Components/Axes

- **X-axis**: Models (Skywork-PRM-1.5B, Skywork-PRM-7B, Qwen2.5-Math-7B-PRM800K, Qwen2.5-Math-PRM-7B, Qwen2.5-Math-PRM-72B, Direct GenPRM-7B, GenPRM-7B (Pass@1), GenPRM-7B (Maj@8)).

- **Y-axis**: Best-of-32 Accuracy (%) ranging from 45% to 69%.

- **Legend**:

- Green: Best-of-32

- Orange: ProcessBench

- **Additional Elements**:

- Horizontal dashed line at 61.9% (GPT-4o).

- Numerical annotations on bars (e.g., 52.5%, 36.4%).

### Detailed Analysis

- **Skywork-PRM-1.5B**:

- Best-of-32: 52.5% (green)

- ProcessBench: 36.4% (orange)

- **Skywork-PRM-7B**:

- Best-of-32: 54.1% (green)

- ProcessBench: 42.1% (orange)

- **Qwen2.5-Math-7B-PRM800K**:

- Best-of-32: 53.1% (green)

- ProcessBench: 56.5% (orange)

- **Qwen2.5-Math-PRM-7B**:

- Best-of-32: 53.8% (green)

- ProcessBench: 73.5% (orange)

- **Qwen2.5-Math-PRM-72B**:

- Best-of-32: 56.2% (green)

- ProcessBench: 78.3% (orange)

- **Direct GenPRM-7B**:

- Best-of-32: 52.2% (green)

- ProcessBench: 60.0% (orange)

- **GenPRM-7B (Pass@1)**:

- Best-of-32: 55.9% (green)

- ProcessBench: 75.2% (orange)

- **GenPRM-7B (Maj@8)**:

- Best-of-32: 57.1% (green)

- ProcessBench: 80.5% (orange)

### Key Observations

1. **Performance Gaps**: ProcessBench scores are lower than Best-of-32 only for the two Skywork models (e.g., Skywork-PRM-1.5B: 36.4% vs. 52.5%). For every other model, including GenPRM-7B, the ProcessBench score is the higher of the two.

2. **GenPRM-7B Dominance**: GenPRM-7B (Maj@8) achieves the highest scores in both frameworks (80.5% in ProcessBench, 57.1% in Best-of-32).

3. **GPT-4o Benchmark**: The dashed line (61.9%) shows that GPT-4o outperforms every model in Best-of-32, including GenPRM-7B (Maj@8) at 57.1%.

### Interpretation

GenPRM-7B demonstrates superior performance as a verifier, particularly in the ProcessBench framework, suggesting it excels at iterative refinement. The disparity between Best-of-32 and ProcessBench highlights the latter's sensitivity to model size and refinement strategies. GenPRM-7B's 3.4x improvement over Self-Refine (Chart b) underscores its efficiency in iterative tasks.

---

## Line Chart: GenPRM as a Critic

### Overview

The chart tracks accuracy improvements for three models (GenPRM-7B, DeepSeek-R1-Distill-7B, Self-Refine) across refinement turns (0–3). GenPRM-7B shows the steepest ascent, with a 3.4x improvement over Self-Refine at Pass@1.

### Components/Axes

- **X-axis**: # Refinement Turn (0, 1, 2, 3).

- **Y-axis**: Accuracy (%) ranging from 45% to 90%.

- **Legend**:

- Green: GenPRM-7B

- Orange: DeepSeek-R1-Distill-7B

- Gray: Self-Refine

- **Additional Elements**:

- Vertical dashed line at 3 refinement turns.

- Arrow indicating "3.4x" improvement.

### Detailed Analysis

- **GenPRM-7B**:

- Turn 0: 45.5%

- Turn 1: 68.0%

- Turn 2: 78.0%

- Turn 3: 85.5%

- **DeepSeek-R1-Distill-7B**:

- Turn 0: 45.5%

- Turn 1: 46.5%

- Turn 2: 49.5%

- Turn 3: 49.5%

- **Self-Refine**:

- Turn 0: 45.5%

- Turn 1: 45.5%

- Turn 2: 45.5%

- Turn 3: 45.5%

### Key Observations

1. **Rapid Improvement**: GenPRM-7B's accuracy jumps from 45.5% to 85.5% over 3 refinement turns.

2. **Stagnation in Baselines**: DeepSeek and Self-Refine show minimal improvement, plateauing near 45.5–49.5%.

3. **3.4x Efficiency**: GenPRM-7B outperforms Self-Refine by 3.4x at Pass@1, indicating superior refinement capability.

### Interpretation

GenPRM-7B's iterative refinement significantly enhances accuracy, making it highly effective as a critic. The stagnation of other models suggests they lack adaptive refinement mechanisms. This positions GenPRM-7B as a leader in dynamic, self-improving systems.

---

## Cross-Chart Insights

- **Consistency**: GenPRM-7B dominates both charts, excelling in static (Best-of-32) and dynamic (refinement) settings.

- **Framework Sensitivity**: ProcessBench amplifies performance differences between models compared to Best-of-32.

- **GPT-4o Context**: GPT-4o (61.9%) remains above every model's Best-of-32 accuracy; GenPRM-7B (Maj@8) comes closest at 57.1%.
