## Diagram Type: LLM-based Retrieval and Reasoning Pipeline

### Overview

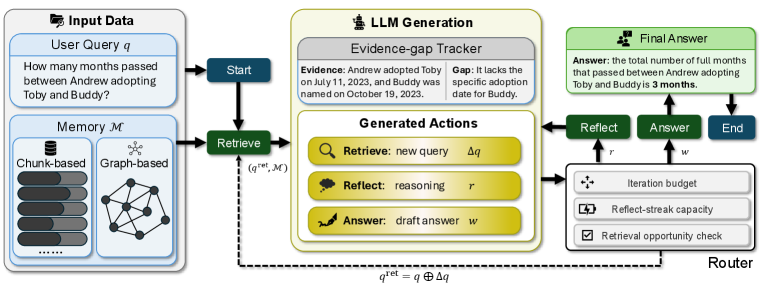

This technical diagram illustrates an iterative workflow for a Large Language Model (LLM) system designed to answer complex queries by retrieving information from memory, identifying knowledge gaps, and reasoning through steps. The process is governed by a "Router" that manages the iteration budget and decides whether to continue retrieving information or finalize an answer.

### Components/Axes

The diagram is organized into four primary functional regions, each listed below with its position in the figure:

1. **Input Data (Top-Left):**

    * **User Query $q$:** A text box containing the example question: *"How many months passed between Andrew adopting Toby and Buddy?"*

    * **Memory $\mathcal{M}$:** A container showing two types of data storage:

        * **Chunk-based:** Represented by a database icon and a stack of five horizontal bars (progress-style indicators) of varying lengths.

        * **Graph-based:** Represented by a network graph icon with nodes and connecting edges.

2. **LLM Generation (Center):**

    * **Evidence-gap Tracker:** A grey-headed box that splits information into:

        * **Evidence:** *"Andrew adopted Toby on July 11, 2023, and Buddy was named on October 19, 2023."*

        * **Gap:** *"It lacks the specific adoption date for Buddy."*

    * **Generated Actions:** A white box containing three potential outputs:

        * **Retrieve:** new query $\Delta q$ (Magnifying glass icon)

        * **Reflect:** reasoning $r$ (Thought bubble icon)

        * **Answer:** draft answer $w$ (Pen icon)

3. **Router (Bottom-Right):**

    * A control unit that manages the flow based on three internal checks:

        * **Iteration budget** (Four-way arrow icon)

        * **Reflect-streak capacity** (Battery/recharge icon)

        * **Retrieval opportunity check** (Checkbox icon)

    * **Control Buttons:** Three dark green/blue buttons sit above the router: **Reflect**, **Answer**, and **End**.

4. **Final Answer (Top-Right):**

    * A light green box containing the terminal output: *"Answer: the total number of full months that passed between Andrew adopting Toby and Buddy is 3 months."*
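
The Evidence-gap Tracker and the three generated actions described above can be modeled as plain data structures. The following is a minimal sketch, not the system's actual implementation: the names `ActionType`, `Action`, `EvidenceGapTracker`, and `has_open_gaps` are hypothetical, and the follow-up query string is an invented illustration of what a $\Delta q$ might look like. Only the evidence and gap sentences are taken from the figure.

```python
from dataclasses import dataclass, field
from enum import Enum


class ActionType(Enum):
    """The three outputs listed in the Generated Actions box."""
    RETRIEVE = "retrieve"  # carries a new query, delta_q
    REFLECT = "reflect"    # carries a reasoning step, r
    ANSWER = "answer"      # carries a draft answer, w


@dataclass
class Action:
    kind: ActionType
    content: str


@dataclass
class EvidenceGapTracker:
    """Keeps what is known (evidence) apart from what is missing (gaps)."""
    evidence: list[str] = field(default_factory=list)
    gaps: list[str] = field(default_factory=list)

    def has_open_gaps(self) -> bool:
        return bool(self.gaps)


# Evidence and gap text quoted from the figure.
tracker = EvidenceGapTracker(
    evidence=["Andrew adopted Toby on July 11, 2023, and Buddy was named on October 19, 2023."],
    gaps=["It lacks the specific adoption date for Buddy."],
)

# Hypothetical follow-up query; the figure does not show the actual delta_q text.
next_action = Action(ActionType.RETRIEVE, "When did Andrew adopt Buddy?")
```

Keeping evidence and gaps in separate fields mirrors the split shown in the tracker box and makes "is anything still missing?" a cheap check.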

---

### Content Details

#### Process Flow and Logic

* **Initialization:** The process begins at the **Start** button (dark blue), which triggers the **Retrieve** action (dark green).

* **Retrieval Phase:** The system pulls from **Memory $\mathcal{M}$** (both chunk and graph-based). The input to the LLM is denoted as $(q^{ret}, \mathcal{M})$.

* **Generation Phase:** The LLM analyzes the retrieved data. In the provided example, it identifies that it knows Toby's adoption date but only Buddy's naming date, marking the adoption date for Buddy as a "Gap."

* **Action Selection:** The LLM generates potential actions: a refined query ($\Delta q$), reasoning ($r$), or a draft answer ($w$).

* **Routing & Feedback Loop:**

    * The **Router** evaluates the generated actions against its constraints (budget, streak, opportunities).

    * **Feedback Loop (Dashed Line):** If more information is needed, a dashed line carries a refined query $q^{ret} = q \oplus \Delta q$ back to the **Retrieve** step.

* **Finalization:** If the reasoning is sufficient, the flow moves through the **Reflect** ($r$) or **Answer** ($w$) paths to the **Final Answer** box.

* **Termination:** Once the **Final Answer** is produced, the process moves to the **End** state.
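
The flow above can be sketched as a small control loop. This is a sketch under stated assumptions rather than the pipeline's real code: `run_pipeline`, `compose`, `toy_retrieve`, and `toy_generate` are hypothetical names, $\oplus$ is assumed to be plain string concatenation, only the iteration budget is modeled (the other router checks are left out to keep the example short), and the toy memory contents are placeholders that do not come from the figure.

```python
from typing import Callable, List, Tuple

Action = Tuple[str, str]  # (kind, content); kind is "retrieve", "reflect", or "answer"


def compose(q: str, delta_q: str) -> str:
    """Assumed reading of q_ret = q ⊕ Δq: plain string concatenation."""
    return f"{q} {delta_q}".strip()


def run_pipeline(
    q: str,
    retrieve: Callable[[str], List[str]],
    generate: Callable[[str, List[str]], Action],
    iteration_budget: int = 4,
) -> str:
    context = retrieve(q)  # Start -> Retrieve, with q_ret = q on the first pass
    for _ in range(iteration_budget):
        kind, content = generate(q, context)
        if kind == "answer":  # draft answer w is accepted as the final answer
            return content
        if kind == "retrieve":  # dashed feedback loop with the refined query
            new_items = retrieve(compose(q, content))
            context = context + [c for c in new_items if c not in context]
        else:  # reflect: keep the reasoning step r as additional context
            context = context + [content]
    return "insufficient evidence"  # budget exhausted without a draft answer


# Toy stand-ins for memory and the LLM (placeholders, not from the figure).
def toy_retrieve(query: str) -> List[str]:
    memory = {"alpha": "fact about alpha", "beta": "fact about beta"}
    return [text for key, text in memory.items() if key in query.lower()]


def toy_generate(q: str, context: List[str]) -> Action:
    if "fact about beta" not in context:
        return ("retrieve", "beta")  # gap detected: request a follow-up retrieval
    return ("answer", f"answer built from {len(context)} facts")


result = run_pipeline("compare alpha", toy_retrieve, toy_generate)
print(result)  # answer built from 2 facts
```

The first pass retrieves only the "alpha" fact, the toy generator flags the missing "beta" fact, and the second retrieval with the composed query fills the gap before an answer is returned.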

---

### Key Observations

* **Iterative Refinement:** The formula $q^{ret} = q \oplus \Delta q$ indicates that the system doesn't just search once; it appends or modifies the original query based on discovered gaps to perform targeted follow-up retrievals.

* **Hybrid Memory:** The system utilizes both unstructured (Chunk-based) and structured (Graph-based) memory, suggesting a RAG (Retrieval-Augmented Generation) architecture that can handle both semantic search and relational data.

* **Guardrails:** The "Router" contains specific logic to prevent infinite loops ("Reflect-streak capacity") and manage computational costs ("Iteration budget").
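
The guardrail checks noted above can be illustrated with a small routing function. The figure names the three checks but does not specify their logic, so everything here is an assumed policy: `RouterState`, `route`, the default limits, and in particular the reading of "retrieval opportunity" as a count of remaining retrievals are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RouterState:
    iterations_used: int = 0
    reflect_streak: int = 0   # consecutive Reflect actions so far
    retrievals_left: int = 0  # assumed meaning of "retrieval opportunity"


def route(
    proposed: str,
    state: RouterState,
    iteration_budget: int = 6,
    reflect_capacity: int = 2,
) -> str:
    """Return the action the router lets through: 'retrieve', 'reflect', or 'answer'."""
    # Iteration budget: once it is spent, force a final answer.
    if state.iterations_used >= iteration_budget:
        return "answer"
    # Reflect-streak capacity: stop reflecting repeatedly without new evidence.
    if proposed == "reflect" and state.reflect_streak >= reflect_capacity:
        return "retrieve" if state.retrievals_left > 0 else "answer"
    # Retrieval opportunity check: no retrievals available, so answer instead.
    if proposed == "retrieve" and state.retrievals_left <= 0:
        return "answer"
    return proposed


print(route("reflect", RouterState(reflect_streak=2, retrievals_left=1)))  # retrieve
```

Under this assumed policy every path eventually ends in `"answer"`, which is the property that rules out an unbounded loop.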

---

### Interpretation

The diagram depicts a **Self-Correction/Reasoning Loop** in an AI agent. Rather than producing a hallucinated or incomplete answer when data is missing (the "Gap" regarding Buddy's adoption date), the system is designed to recognize what it *doesn't* know.

The "Evidence-gap Tracker" acts as a logical bridge: it forces the model to state its premises explicitly before concluding. The final answer of "3 months" matches the span from July 11 to October 19, 2023, which covers three full months plus eight days. This suggests that through the iterative retrieval loop ($\Delta q$), the system either found the missing adoption date or determined that the naming date was an acceptable proxy, allowing it to complete the reasoning chain. The architecture prioritizes accuracy and transparency over simple one-shot generation.
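
The "3 months" figure can be checked with a short date calculation. This is a minimal sketch, assuming that October 19, 2023 is the end date used by the system (the figure only confirms it as Buddy's naming date) and that "full months" means whole calendar months elapsed. The helper name `full_months_between` is hypothetical.

```python
from datetime import date


def full_months_between(start: date, end: date) -> int:
    """Count whole calendar months from start to end (assumes start <= end)."""
    months = (end.year - start.year) * 12 + (end.month - start.month)
    if end.day < start.day:  # the last month is not yet complete
        months -= 1
    return months


print(full_months_between(date(2023, 7, 11), date(2023, 10, 19)))  # prints 3
```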