
## Diagram: LLM Reasoning Process Flow

### Overview

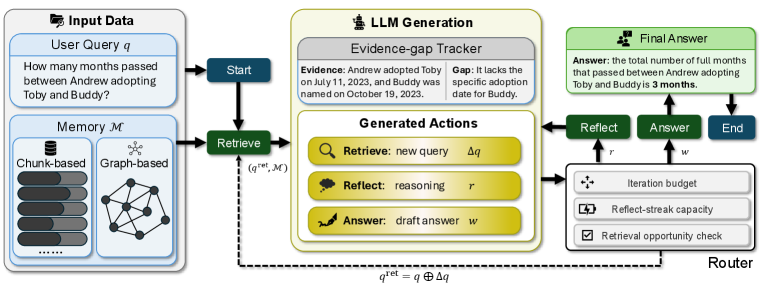

The diagram illustrates the process flow of a Large Language Model (LLM) reasoning system. It shows how a user query interacts with a memory module, an LLM generation component, and a router to arrive at a final answer, and it highlights the iterative nature of the process, which cycles through retrieval, reflection, and answering steps.

### Components

The diagram is segmented into four main areas: "Input Data", "LLM Generation", "Final Answer", and "Router". Within these areas are several components:

* **Input Data:** Contains a "User Query" box and a "Memory 𝓜" section, which is further divided into "Chunk-based" (represented by stacked cylinders) and "Graph-based" (represented by a network of nodes and edges).

* **LLM Generation:** Includes an "Evidence-gap Tracker" with "Evidence" and "Gap" sections, and a "Generated Actions" section with three actions: "Retrieve", "Reflect", and "Answer".

* **Final Answer:** Displays the "Answer" and associated steps: "Reflect", "Answer", and "End".

* **Router:** Contains three parameters: "Iteration budget", "Reflect-streak capacity", and "Retrieval opportunity check".

* **Flow Arrows:** Indicate the direction of information flow between components.

* **Text Labels:** Numerous text labels describe the function of each component and the actions performed.

* **Equation:** `q^ret = q ⊕ Δq` is present at the bottom of the diagram.

### Detailed Analysis

The diagram illustrates the following process:

1. **Input:** A "User Query" is presented: "How many months passed between Andrew adopting Toby and Buddy?".

2. **Memory Retrieval:** The query initiates a "Retrieve" action (green arrow) from the LLM Generation to the "Memory 𝓜". The memory is represented by two structures: "Chunk-based" and "Graph-based".

3. **LLM Generation:** The retrieved information (`q^ret`, 𝓜) is fed into the LLM Generation component.

4. **Evidence-Gap Tracking:** The LLM processes the information, tracking "Evidence" ("Andrew adopted Toby on July 11, 2023, and Buddy was named on October 19, 2023.") and identifying a "Gap" ("It lacks the specific adoption date for Buddy.").

5. **Generated Actions:** Based on the evidence and gap, the LLM generates three actions:

   * "Retrieve": represented by a magnifying glass icon, suggesting a new query (Δq).

   * "Reflect": represented by a brain icon, indicating reasoning (r).

   * "Answer": represented by a document icon, indicating a draft answer (w).

6. **Router:** The generated actions are routed based on parameters like "Iteration budget", "Reflect-streak capacity", and "Retrieval opportunity check".

7. **Iteration:** The "Reflect" and "Answer" actions loop back into the LLM Generation, creating an iterative process.

8. **Final Answer:** After sufficient iterations, the process reaches the "Final Answer" stage, providing the answer: "The total number of full months that passed between Andrew adopting Toby and Buddy is 3 months."

9. **End:** The process concludes with an "End" step.
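
The answer in step 8 can be checked with a short date calculation. This is a minimal sketch, not part of the diagram: the helper `full_months_between` is a hypothetical name, and it assumes that October 19, 2023 (the date the evidence associates with Buddy) is the date the final answer uses for Buddy's adoption.

```python
from datetime import date


def full_months_between(start: date, end: date) -> int:
    """Count whole calendar months elapsed from start to end (start <= end)."""
    months = (end.year - start.year) * 12 + (end.month - start.month)
    # If the end day-of-month is earlier than the start day-of-month,
    # the last month has not fully elapsed yet.
    if end.day < start.day:
        months -= 1
    return months


toby_adopted = date(2023, 7, 11)
buddy_date = date(2023, 10, 19)  # assumption: treated as Buddy's adoption date

print(full_months_between(toby_adopted, buddy_date))  # 3
```

Under that assumption the result agrees with the "3 months" shown in the Final Answer box.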

The equation `q^ret = q ⊕ Δq` suggests that the retrieval query (`q^ret`) combines the original query (q) with the new query (Δq) generated by the "Retrieve" action.
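
One possible reading of the ⊕ operator is plain text concatenation. The diagram does not define ⊕, so the sketch below is an assumption, and `compose_retrieval_query` is a hypothetical name used only for illustration.

```python
def compose_retrieval_query(q: str, delta_q: str) -> str:
    """Hypothetical reading of q^ret = q ⊕ Δq: append Δq to the original query."""
    delta_q = delta_q.strip()
    # With no follow-up query, the retrieval query is just the original query.
    if not delta_q:
        return q
    return f"{q} {delta_q}"


q = "How many months passed between Andrew adopting Toby and Buddy?"
delta_q = "When did Andrew adopt Buddy?"  # illustrative follow-up, not from the diagram
q_ret = compose_retrieval_query(q, delta_q)
print(q_ret)
```

Other choices for ⊕, such as combining query embeddings, would fit the same notation. Concatenation is simply the easiest to show.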

### Key Observations

* The diagram emphasizes the iterative nature of the LLM reasoning process.

* The "Evidence-gap Tracker" highlights the importance of identifying missing information.

* The "Router" component suggests a mechanism for controlling the iterative process.

* The diagram provides a high-level overview of the process without delving into the specific algorithms or techniques used.

* The diagram is visually clean and uses icons to represent different actions.

### Interpretation

The diagram illustrates an LLM reasoning system that goes beyond single-pass question answering. The process is one of iterative refinement: the LLM seeks out missing information, reasons about the available evidence, and drafts an answer. The "Router" component suggests a control mechanism that prevents infinite loops and inefficient iterations, while the "Evidence-gap Tracker" indicates a focus on identifying and addressing what the available information does not yet cover.

The equation `q^ret = q ⊕ Δq` formalizes the idea that retrieval is not a one-shot lookup: each retrieval query augments the original query with a new query that reflects what has been learned so far, which suggests a dynamic, adaptive approach to knowledge retrieval. The diagram is a conceptual model, and implementation details may vary, but it lays out the key components and processes involved in this style of LLM reasoning.
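
The router's role as a loop control can be sketched as follows. The diagram names the three controls (iteration budget, reflect-streak capacity, retrieval opportunity check) but not their rules, so every threshold and fallback below is an assumption, and all names (`Action`, `RouterState`, `route`) are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    RETRIEVE = "retrieve"
    REFLECT = "reflect"
    ANSWER = "answer"


@dataclass
class RouterState:
    iteration: int = 0       # steps routed so far
    reflect_streak: int = 0  # consecutive "Reflect" actions executed


def route(proposed: Action, state: RouterState, *, max_iterations: int = 5,
          max_reflect_streak: int = 2, can_retrieve: bool = True) -> Action:
    """Return the action to execute, overriding the proposal when a limit is hit."""
    state.iteration += 1
    # Iteration budget (assumed rule): once spent, force an answer so the loop ends.
    if state.iteration >= max_iterations:
        return Action.ANSWER
    if proposed is Action.REFLECT:
        # Reflect-streak capacity (assumed rule): stop reflecting after too many
        # consecutive reflections and fall back to retrieval or answering.
        if state.reflect_streak >= max_reflect_streak:
            state.reflect_streak = 0
            return Action.RETRIEVE if can_retrieve else Action.ANSWER
        state.reflect_streak += 1
        return Action.REFLECT
    state.reflect_streak = 0
    # Retrieval opportunity check (assumed rule): answer if retrieval is unavailable.
    if proposed is Action.RETRIEVE and not can_retrieve:
        return Action.ANSWER
    return proposed


state = RouterState()
for proposal in [Action.REFLECT, Action.REFLECT, Action.REFLECT,
                 Action.RETRIEVE, Action.RETRIEVE]:
    print(proposal.value, "->", route(proposal, state).value)
```

In this sketch the third consecutive "Reflect" is redirected to "Retrieve", and the fifth step is forced to "Answer" because the iteration budget is exhausted, which is what guarantees termination.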