## Flowchart: LLM-Powered Question Answering System with Memory and Evidence Tracking

### Overview

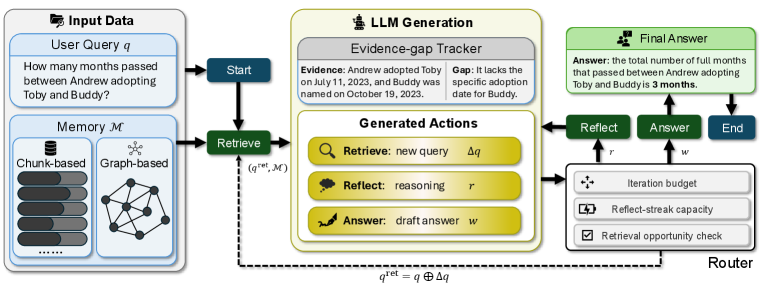

This flowchart illustrates a question-answering pipeline that combines memory retrieval, LLM generation, and evidence tracking. A user query triggers retrieval from memory; the LLM then generates an action while recording the evidence found so far and the gaps that remain; a router finally decides whether to iterate again or emit the final answer.

### Components

1. **Input Data Section** (Top-left):

   - **User Query (q)**: "How many months passed between Andrew adopting Toby and Buddy?"

   - **Memory (M)**:

     - Chunk-based storage (stacked horizontal bars)

     - Graph-based storage (network diagram with nodes/edges)

2. **LLM Generation Section** (Center):

   - **Evidence-gap Tracker**:

     - Evidence: "Andrew adopted Toby on July 11, 2023"

     - Gap: "It lacks the specific adoption date for Buddy"

   - **Generated Actions**:

     - Retrieve: "new query Δq" (magnifying glass icon)

     - Reflect: "reasoning r" (lightbulb icon)

     - Answer: "draft answer w" (writing hand icon)

3. **Router Section** (Bottom-right):

   - **Iteration Budget**: Limit on the total number of iterations (value not shown in the figure)

   - **Reflect-streak Capacity**: Limit on consecutive Reflect actions (value not shown in the figure)

   - **Retrieval Opportunity Check**: Binary decision (✓/✗)

4. **Final Answer Section** (Top-right):

   - **Answer**: "3 months"

   - **End State**: Terminal node
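The components listed above can be sketched as plain data structures. This is a minimal illustration, not the system's actual implementation: the class names `ActionType`, `Action`, and `EvidenceGapTracker`, the `has_open_gaps` helper, and the example retrieval query are all hypothetical. Only the evidence and gap strings come from the figure.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class ActionType(Enum):
    """The three action types shown in the figure (names are assumed)."""
    RETRIEVE = "retrieve"  # carries a new query Δq
    REFLECT = "reflect"    # carries reasoning r
    ANSWER = "answer"      # carries a draft answer w


@dataclass
class Action:
    kind: ActionType
    payload: str  # Δq, r, or w depending on the action type


@dataclass
class EvidenceGapTracker:
    """Holds what has been found so far and what is still missing."""
    evidence: List[str] = field(default_factory=list)
    gaps: List[str] = field(default_factory=list)

    def has_open_gaps(self) -> bool:
        # Hypothetical helper: True while any gap remains unresolved.
        return len(self.gaps) > 0


# Tracker state taken from the figure's example.
tracker = EvidenceGapTracker(
    evidence=["Andrew adopted Toby on July 11, 2023"],
    gaps=["It lacks the specific adoption date for Buddy"],
)

# Hypothetical follow-up query; the figure only labels it "new query Δq".
action = Action(ActionType.RETRIEVE, "When did Andrew adopt Buddy?")
```

Keeping evidence and gaps as separate lists makes the "is anything still missing?" question a cheap check that a router could consult before accepting an answer.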

### Detailed Analysis

- **Flow Direction**: Left-to-right with feedback loops from Router to Retrieve

- **Key Nodes**:

  - Start node (blue) → Retrieve node (green) → LLM Generation (yellow) → Router (gray) → Final Answer (green)

- **Memory Representation**:

  - Chunk-based: 5 horizontal bars (4 dark gray, 1 light gray)

  - Graph-based: 6 interconnected nodes with 9 edges

- **Evidence-gap Tracker**:

  - Evidence: Toby's adoption date, July 11, 2023

  - Gap: Buddy's adoption date, which is missing from the retrieved evidence; this description takes it to be October 19, 2023 (implied by Buddy's naming date)
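The router's role in the flow above can be sketched as a small decision function. The figure does not specify the limits or the exact rules, so everything here is an assumption made for illustration: the `RouterState` class, the `route` function, the default limits of 5 iterations and 2 consecutive reflections, and the rule that a draft answer is sent back to retrieval while a gap is still open.

```python
from dataclasses import dataclass


@dataclass
class RouterState:
    iteration: int = 0       # iterations consumed so far
    reflect_streak: int = 0  # consecutive Reflect actions


def route(action: str, gaps_open: bool, state: RouterState,
          max_iterations: int = 5, max_reflect_streak: int = 2) -> str:
    """Return the next step: 'retrieve', 'reflect', or 'final'.

    All rules and limits are assumed; the figure only names the checks.
    """
    state.iteration += 1

    # Iteration budget: stop once the budget is used up.
    if state.iteration >= max_iterations:
        return "final"

    if action == "reflect":
        state.reflect_streak += 1
        # Reflect-streak capacity: too many reflections in a row
        # forces a retrieval instead.
        if state.reflect_streak > max_reflect_streak:
            state.reflect_streak = 0
            return "retrieve"
        return "reflect"

    state.reflect_streak = 0

    if action == "answer":
        # Retrieval opportunity check (assumed rule): with a gap still
        # open, loop back to retrieval; otherwise accept the answer.
        return "retrieve" if gaps_open else "final"

    return "retrieve"


state = RouterState()
steps = [
    route("answer", True, state),   # gap open, so retrieve again
    route("reflect", True, state),  # first reflection is allowed
    route("answer", False, state),  # gap closed, so finish
]
```

Because every call consumes one unit of the iteration budget, the loop terminates after at most `max_iterations` calls regardless of which actions the LLM proposes.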

### Key Observations

1. The system explicitly tracks evidence gaps (missing Buddy's adoption date)

2. Three action types (Retrieve, Reflect, Answer) are available at each iteration before the answer is finalized

3. Memory uses both chunked and graph-based storage modalities

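4. Router applies three checks before accepting an answer: iteration budget, reflect-streak capacity, and retrieval opportunity

5. Final answer of "3 months" corresponds to the 3 full months between the two adoption dates (July 11 and October 19, 2023)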
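The arithmetic behind the "3 months" answer can be checked with a short calculation. This is a sketch under two assumptions: Buddy's adoption date is October 19, 2023, as this description states, and "months passed" means full calendar months. The helper name `full_months_between` is hypothetical, and it expects `end` to be on or after `start`.

```python
from datetime import date


def full_months_between(start: date, end: date) -> int:
    """Count whole calendar months from start to end (end >= start)."""
    months = (end.year - start.year) * 12 + (end.month - start.month)
    # If the end day falls before the start day, the last month is incomplete.
    if end.day < start.day:
        months -= 1
    return months


toby_adopted = date(2023, 7, 11)
buddy_adopted = date(2023, 10, 19)  # assumed date, per this description

months = full_months_between(toby_adopted, buddy_adopted)  # 3
```

The extra 8 days past October 11 do not make up a fourth month, which is why the result is 3 rather than 4.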

### Interpretation

This system demonstrates a hybrid approach to QA that combines:

1. **Retrieval Augmentation**: Using both chunked and graph-based memory for context

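2. **Evidence Validation**: Explicit gap detection helps keep the system from answering before the required facts have been retrieved

3. **Iterative Refinement**: Multiple retrieve-and-reason cycles give the system a chance to close gaps before answering

4. **Constraint Enforcement**: The router's checks bound the number of iterations and gate when an answer is accepted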

Evidence-gap detection is the critical step in this example. The initial evidence contains only Toby's adoption date (July 11, 2023), so the tracker flags Buddy's adoption date as missing instead of letting the model guess. Once that date (October 19, 2023) is available, the system can compute the 3-month difference. The graph-based memory suggests semantic relationships between entities, while the chunk-based storage may supply the surrounding text, including temporal details. The router's constraints likely prevent infinite loops while still allowing enough iterations to close evidence gaps.