## Line Charts: Entropy and Attention Analysis During Generation

### Overview

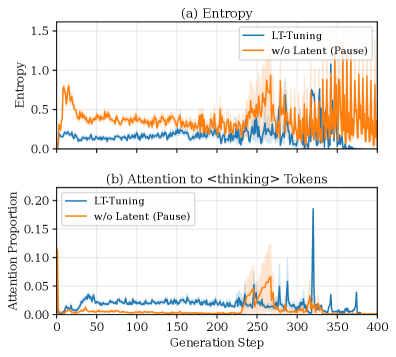

The image contains two vertically stacked line charts, labeled (a) and (b), that compare two models, "LT-Tuning" and "w/o Latent (Pause)", across 400 generation steps. Chart (a) tracks entropy, and chart (b) tracks the proportion of attention directed to `<thinking>` tokens. Together they contrast the models' stability and focus during text generation.

### Components/Axes

* **Chart (a) - Top Chart:**

    * **Title:** `(a) Entropy`

    * **Y-axis Label:** `Entropy`

    * **Y-axis Scale:** Linear, ranging from 0.0 to 1.5, with major ticks at 0.0, 0.5, 1.0, and 1.5.

    * **X-axis (Shared):** `Generation Step`, ranging from 0 to 400, with major ticks every 50 steps.

    * **Legend:** Positioned in the top-right corner of the plot area.

        * Blue line: `LT-Tuning`

        * Orange line: `w/o Latent (Pause)`

* **Chart (b) - Bottom Chart:**

    * **Title:** `(b) Attention to <thinking> Tokens`

    * **Y-axis Label:** `Attention Proportion`

    * **Y-axis Scale:** Linear, ranging from 0.00 to 0.20, with major ticks at 0.00, 0.05, 0.10, 0.15, and 0.20.

    * **X-axis (Shared):** `Generation Step`, ranging from 0 to 400.

    * **Legend:** Positioned in the top-right corner of the plot area, identical to chart (a).

        * Blue line: `LT-Tuning`

        * Orange line: `w/o Latent (Pause)`

### Detailed Analysis

**Chart (a): Entropy**

* **LT-Tuning (Blue Line):** The line starts near 0.0. It exhibits a small, sharp peak to approximately 0.25 around step 25. Following this, it fluctuates at a low level, generally between 0.0 and 0.2, with a slight downward trend, approaching 0.0 by step 400. The line is relatively smooth with minor noise.

* **w/o Latent (Pause) (Orange Line):** This line starts higher, around 0.5. It shows an immediate, sharp peak to approximately 0.8 within the first 10-20 steps. It then declines and fluctuates around 0.3-0.4 until approximately step 250. After step 250, the line becomes highly volatile, with large, rapid oscillations between ~0.1 and ~1.2 that continue until step 400. The shaded area (likely representing variance or a confidence interval) is much wider for this series, especially after step 250.
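The figure does not say how the `Entropy` axis in chart (a) is computed. A common choice, assumed here, is the Shannon entropy of the model's next-token distribution at each generation step. The sketch below illustrates that assumption only. The function names, the use of nats, and the logits input format are hypothetical and not taken from the source.

```python
import math

def softmax(logits):
    """Convert raw scores into a probability distribution (numerically stable)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def token_entropy(probs):
    """Shannon entropy in nats; zero-probability entries contribute nothing."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)

def entropy_trace(step_logits):
    """Return one entropy value per generation step.

    step_logits is assumed to be a list with one list of vocabulary
    logits per step (a hypothetical input format).
    """
    return [token_entropy(softmax(logits)) for logits in step_logits]
```

Under this reading, a value near 0.0 means the model places almost all probability on one token, while larger values mean the probability is spread across several candidates.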

**Chart (b): Attention to `<thinking>` Tokens**

* **LT-Tuning (Blue Line):** The attention proportion starts near 0.00. It shows a small, broad rise to about 0.03-0.04 between steps 25-75. It then remains very low, near 0.00-0.01, until a dramatic, singular spike occurs at approximately step 325, reaching a peak of about 0.18. After this spike, it returns to near 0.00.

* **w/o Latent (Pause) (Orange Line):** This line also starts near 0.00. It remains flat until approximately step 225, where it begins a gradual rise. It forms a broader, multi-peaked region of elevated attention between steps 250-275, with a maximum peak of about 0.06. After step 275, it declines back to near 0.00. The shaded variance region is most prominent during its peak period.
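The `Attention Proportion` in chart (b) is likewise not defined in the figure. One plausible reading, assumed here, is the share of a step's attention mass that falls on the positions of `<thinking>` tokens. The helper below is a minimal sketch of that reading. Its name, the input format, and any averaging over heads or layers are assumptions rather than details from the source.

```python
def attention_proportion(attn_row, thinking_positions):
    """Fraction of one step's attention mass that lands on the given positions.

    attn_row: attention weights from the current step to every earlier
        position, assumed to be already averaged over heads and layers.
    thinking_positions: indices of the <thinking> tokens (hypothetical input).
    """
    total = sum(attn_row)
    if total == 0:
        return 0.0  # no attention mass at all; avoid dividing by zero
    return sum(attn_row[i] for i in thinking_positions) / total
```

For example, a row `[0.1, 0.2, 0.3, 0.4]` with `<thinking>` tokens at positions 1 and 2 gives a proportion of about 0.5.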

### Key Observations

1. **Divergent Behavior Post-Step 250:** The most significant pattern is the divergence between the two models after generation step 250: the "w/o Latent (Pause)" model's entropy becomes highly unstable, while the "LT-Tuning" model's entropy remains low and stable.

2. **Attention Spike vs. Hump:** The "LT-Tuning" model exhibits a single, sharp, high-magnitude attention spike late in the process (around step 325, peaking near 0.18). In contrast, the "w/o Latent (Pause)" model shows a lower, broader "hump" of attention earlier (steps 250-275, peaking near 0.06).

3. **Initial Transient:** Both models show an initial transient phase in the first ~50 steps, with the "w/o Latent (Pause)" model showing a much larger initial entropy spike.

4. **Correlation of Instability and Diffuse Attention:** The onset of high entropy volatility in the "w/o Latent (Pause)" model (around step 250) coincides with its hump of elevated but diffuse attention to `<thinking>` tokens (steps 250-275). The volatility then persists through step 400, even after the attention returns to near 0.00.
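The observations above are visual. If the underlying per-step values were available, two simple statistics could make them measurable: a rolling standard deviation for volatility, and a width-at-half-maximum count to separate a sharp spike from a broad hump. The sketch below uses hypothetical helper names, and the window size is a free parameter, not something read from the figure.

```python
from statistics import pstdev

def rolling_std(values, window):
    """Population standard deviation over each full trailing window."""
    if window < 1:
        raise ValueError("window must be at least 1")
    return [
        pstdev(values[i - window + 1 : i + 1])
        for i in range(window - 1, len(values))
    ]

def half_max_width(values):
    """Count the steps whose value is at least half of the series maximum.

    A narrow spike gives a small count; a broad hump gives a larger one.
    """
    peak = max(values)
    return sum(1 for v in values if v >= peak / 2)
```

A stable entropy curve would give rolling values near zero throughout, while a curve that turns volatile partway through would show the rolling value rising from that point onward.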

### Interpretation

The data suggests a fundamental difference in how the two models manage their internal state during generation. The "LT-Tuning" method appears to promote stability (low, stable entropy) and punctuated, decisive focus (a single sharp attention spike). This could indicate a model that processes "thinking" in a concentrated, efficient burst.

Conversely, the "w/o Latent (Pause)" model becomes unstable in the second half of generation (volatile entropy after step 250) and shows a lower, broader hump of attention rather than a single spike. This pattern might reflect a model that struggles to maintain a coherent internal state, leading to erratic confidence (entropy) and a scattered, less efficient allocation of attention to its reasoning process. The late, sharp spike in the LT-Tuning model's attention, which follows a long period of low entropy, could signify a critical decision point or the culmination of a latent reasoning process that the "w/o Latent (Pause)" model does not replicate. Taken together, the charts suggest that LT-Tuning leads to more controlled and potentially more reliable generation dynamics.