## Diagram: Feedforward Neural Network Architecture

### Overview

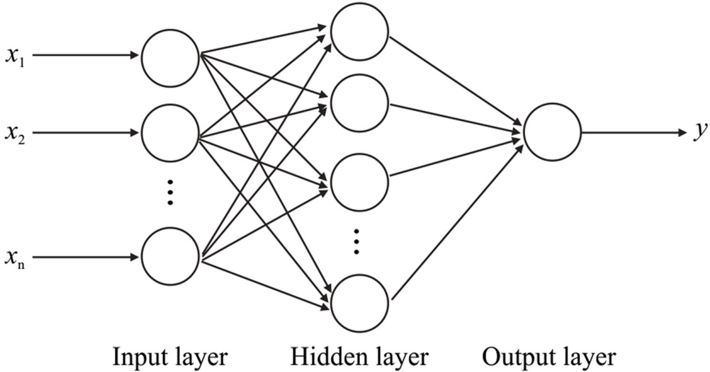

The image is a black-and-white schematic diagram illustrating the architecture of a simple feedforward neural network (also known as a multilayer perceptron). It depicts the flow of data from input nodes, through a single hidden layer, to a single output node. The diagram is designed to show the network's structure and connectivity pattern.

### Components

The diagram is organized into three distinct vertical columns, each representing a layer of the network. The layers are labeled with text placed directly beneath them.

1. **Input Layer (Left Column):**

* **Label:** "Input layer" (text centered below the column).

* **Components:** Three circular nodes are explicitly drawn. The top node is labeled with the mathematical variable **`x₁`** to its left. The middle node is labeled **`x₂`**. The bottom node is labeled **`xₙ`**.

* **Ellipsis:** Between the `x₂` and `xₙ` nodes, a vertical ellipsis (three dots) is drawn, indicating that there are an arbitrary number of additional input nodes not shown.

* **Function:** This layer receives the raw input features.

2. **Hidden Layer (Center Column):**

* **Label:** "Hidden layer" (text centered below the column).

* **Components:** Four circular nodes are explicitly drawn in a vertical column. No individual labels are assigned to these nodes.

* **Ellipsis:** Between the third and fourth visible nodes, a vertical ellipsis is drawn, indicating that the hidden layer can contain an arbitrary number of neurons.

* **Function:** This layer performs intermediate computations and feature transformations.

3. **Output Layer (Right Column):**

* **Label:** "Output layer" (text centered below the column).

* **Components:** A single circular node.

* **Output Label:** An arrow points from this node to the right, terminating at the mathematical variable **`y`**.

* **Function:** This layer produces the final prediction or output of the network.

4. **Connections (Arrows):**

* **Pattern:** The diagram shows a **fully connected** (dense) architecture.

* **Input to Hidden:** Every node in the Input layer has a directed arrow pointing to every node in the Hidden layer. This creates a dense web of connections between the first two columns.

* **Hidden to Output:** Every node in the Hidden layer has a directed arrow pointing to the single node in the Output layer.

* **Direction:** All arrows point from left to right, indicating the forward flow of data through the network (the forward pass).
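
The connectivity described above can be sketched as a small forward pass. This is a minimal illustration under assumptions the diagram does not show: the names `forward`, `W1`, `b1`, `w2`, and `b2` are hypothetical, the `tanh` activation is an arbitrary choice, and bias terms are included even though the diagram omits them.

```python
import math
import random


def forward(x, W1, b1, w2, b2, activation=math.tanh):
    """Forward pass through an n -> m -> 1 fully connected network.

    x  : list of n input values (x1 ... xn in the diagram)
    W1 : m rows of n weights, one row per hidden neuron (assumed layout)
    b1 : m hidden biases (not drawn in the diagram)
    w2 : m weights from the hidden layer to the single output node
    b2 : output bias (not drawn in the diagram)
    """
    # Hidden layer: every hidden neuron takes a weighted sum of ALL inputs,
    # which is what "fully connected" means for the first two columns.
    hidden = [
        activation(sum(w * xi for w, xi in zip(row, x)) + b)
        for row, b in zip(W1, b1)
    ]
    # Output layer: a single weighted sum over ALL hidden activations.
    return sum(w * h for w, h in zip(w2, hidden)) + b2


# Hypothetical sizes: n = 3 inputs and m = 4 hidden neurons.
random.seed(0)
n, m = 3, 4
W1 = [[random.uniform(-1, 1) for _ in range(n)] for _ in range(m)]
b1 = [0.0] * m
w2 = [random.uniform(-1, 1) for _ in range(m)]
b2 = 0.0

y = forward([0.5, -1.0, 2.0], W1, b1, w2, b2)
print(y)
```

Each entry of `W1` corresponds to one arrow between the input and hidden columns, and each entry of `w2` to one arrow into the output node.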

### Detailed Analysis

* **Spatial Layout:** The components are arranged linearly from left to right, following the standard convention for depicting data flow in neural networks. The labels are positioned clearly beneath their respective columns.

* **Node Representation:** All neurons (nodes) are represented as identical, empty circles. The diagram does not depict biases, activation functions, or specific weights on the connections.

* **Variable Notation:** The inputs are denoted using standard mathematical subscript notation (`x₁`, `x₂`, `xₙ`), and the output is denoted as `y`. This is a common convention in machine learning literature.

* **Scalability Indication:** The vertical ellipses (`⋮`) in both the input and hidden layers are a critical detail. They explicitly communicate that the diagram is a generic template and that the actual network can have a variable number of input features (`n`) and hidden neurons.

### Key Observations

1. **Single Hidden Layer:** The network architecture consists of exactly one hidden layer, classifying it as a "shallow" neural network as opposed to a "deep" network with multiple hidden layers.

2. **Full Connectivity:** The dense web of arrows between layers confirms that every neuron in one layer is connected to every neuron in the next layer, which is the defining characteristic of a dense or fully connected layer.

3. **Dimensionality:** The input layer has `n` nodes (where `n` is variable), the hidden layer has an unspecified number of nodes (call it `m`), and the output layer has 1 node. A single output node is consistent with a scalar regression task or binary classification (where the output `y` could be a probability).

4. **No Biases Shown:** The diagram is a high-level architectural view and omits the bias terms that are typically associated with each neuron in the hidden and output layers.
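
The dimensionality and bias observations can be made concrete by counting learnable parameters. The helper below is a hypothetical sketch (the name `count_parameters` and its arguments are not from the diagram); it assumes one weight per arrow between layers and, optionally, one bias per hidden and output neuron.

```python
def count_parameters(n_inputs, n_hidden, n_outputs=1, with_bias=True):
    """Count learnable parameters of an n -> m -> k fully connected network."""
    # One weight per arrow: n*m arrows into the hidden layer,
    # m*k arrows into the output layer.
    weights = n_inputs * n_hidden + n_hidden * n_outputs
    # One bias per hidden and output neuron (omitted from the diagram).
    biases = (n_hidden + n_outputs) if with_bias else 0
    return weights + biases


# Using only the nodes explicitly drawn (3 inputs, 4 hidden, 1 output):
print(count_parameters(3, 4, with_bias=False))  # weights only -> 16
print(count_parameters(3, 4))                   # weights plus biases -> 21
```

With the ellipses taken into account, the same formula gives `n*m + m` weights and `m + 1` biases for arbitrary `n` and `m`.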

### Interpretation

This diagram serves as a fundamental visual abstraction of a neural network's structure. Its primary purpose is pedagogical and conceptual.

* **What it Demonstrates:** It visually explains the core concept of a feedforward neural network: input data (`x₁...xₙ`) is propagated forward through a series of transformations (the hidden layer) to produce an output (`y`). The hidden layer acts as a learned representation of the input data, extracting intermediate features that are useful for making the final prediction.

* **Relationship Between Elements:** Each arrow between two nodes represents one learnable weight, whose value is adjusted during training (the final arrow from the output node to `y` only labels the result). The diagram implies that `y` depends on every input through the hidden layer; that dependence is non-linear only if the hidden nodes apply a non-linear activation function, which the diagram does not show.

* **Notable Implications:**

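* **Universal Approximation:** A network with this architecture (one hidden layer with sufficiently many neurons and a suitable non-linear activation function) can approximate any continuous function on a compact subset of the input space to arbitrary accuracy, a result known as the Universal Approximation Theorem. The theorem guarantees that such a network exists; it does not say how many neurons are needed or that training will find the right weights.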

* **Simplicity vs. Depth:** While powerful, this shallow architecture may struggle with very complex patterns in high-dimensional data (like images or language) compared to deep networks, which can learn hierarchical representations.

* **Template for Complexity:** The structure shown here is a foundational building block. More complex architectures (convolutional, recurrent, transformer) add specialized layers and connection patterns on top of this basic premise of layered, differentiable computation.
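
The function computed by the pictured network can also be written out explicitly. The notation below is an assumption introduced for illustration and does not appear in the diagram: `m` is the number of hidden neurons, $\varphi$ is the hidden activation function, $w_{ji}$ and $b_j$ are the weights and bias of hidden neuron $j$, and $v_j$ and $c$ are the output weights and bias.

$$
y = c + \sum_{j=1}^{m} v_j \, \varphi\!\left( \sum_{i=1}^{n} w_{ji} \, x_i + b_j \right)
$$

Each arrow into hidden node $j$ corresponds to one $w_{ji}$, and each arrow into the output node corresponds to one $v_j$. Universal approximation results concern sums of this form as $m$ grows.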