## Line Graph: Loss vs. FLOPs (EFLOPs) for Vanilla Pythia-70M and PonderingPythia-70M

### Overview

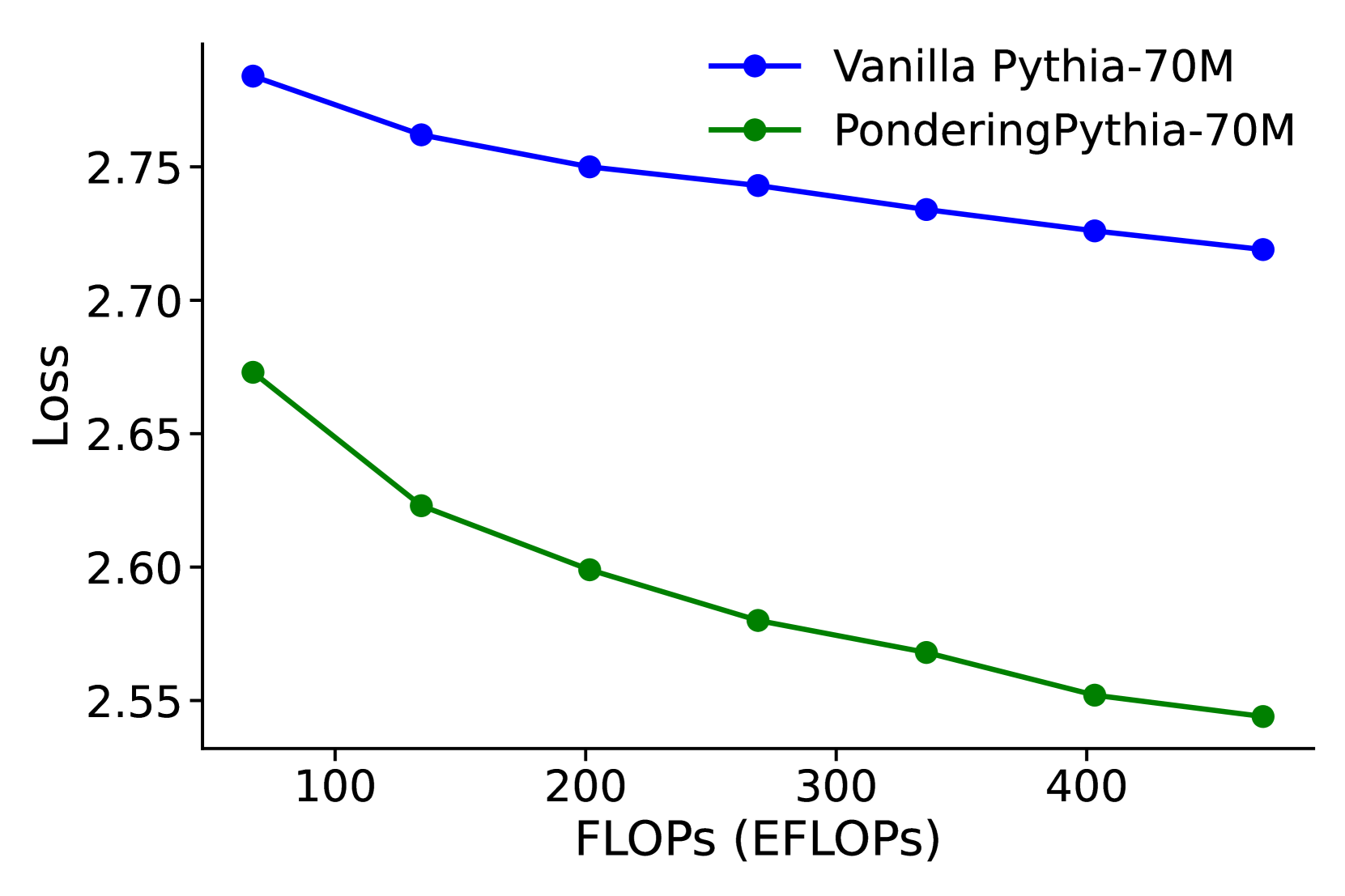

The image is a line graph comparing the **Loss** (y-axis) of two language models, **Vanilla Pythia-70M** (blue line) and **PonderingPythia-70M** (green line), across varying computational costs (**FLOPs**, x-axis). For both models, loss decreases as FLOPs increase, and PonderingPythia-70M reaches a lower loss than the Vanilla version at every plotted compute budget.

---

### Components/Axes

- **X-axis**: **FLOPs (EFLOPs)**
  - Range: 100 to 400 EFLOPs (increments of 50).
  - Label: "FLOPs (EFLOPs)" in bold black text.

- **Y-axis**: **Loss**
  - Range: 2.55 to 2.8 (increments of 0.05).
  - Label: "Loss" in bold black text.

- **Legend**:
  - Position: Top-right corner.
  - Entries:
    - **Blue circle**: "Vanilla Pythia-70M"
    - **Green circle**: "PonderingPythia-70M"

---

### Detailed Analysis

#### Vanilla Pythia-70M (Blue Line)

- **Trend**: Loss falls steadily as FLOPs increase, then levels off at ~2.72 between 350 and 400 EFLOPs.

- **Data Points**:
  - 100 EFLOPs: ~2.85 (above the top labeled y-axis tick of 2.8)
  - 150 EFLOPs: ~2.78
  - 200 EFLOPs: ~2.75
  - 250 EFLOPs: ~2.74
  - 300 EFLOPs: ~2.73
  - 350 EFLOPs: ~2.72
  - 400 EFLOPs: ~2.72

#### PonderingPythia-70M (Green Line)

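- **Trend**: Loss decreases monotonically and stays below Vanilla Pythia-70M at every plotted budget; its total drop from 100 to 400 EFLOPs (~0.12) is close to Vanilla's (~0.13).

- **Data Points**:
  - 100 EFLOPs: ~2.67
  - 150 EFLOPs: ~2.63
  - 200 EFLOPs: ~2.60
  - 250 EFLOPs: ~2.58
  - 300 EFLOPs: ~2.57
  - 350 EFLOPs: ~2.56
  - 400 EFLOPs: ~2.55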
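The readings above can be tabulated to check the gap between the two curves at each budget. This is a minimal sketch: the numbers are the approximate values eyeballed from the plot (not exact data from the source), and the helper names `loss_gaps` and `is_non_increasing` are hypothetical.

```python
# Approximate readings eyeballed from the plot (assumption: ~0.01 precision).
FLOPS = [100, 150, 200, 250, 300, 350, 400]  # EFLOPs
VANILLA = [2.85, 2.78, 2.75, 2.74, 2.73, 2.72, 2.72]
PONDERING = [2.67, 2.63, 2.60, 2.58, 2.57, 2.56, 2.55]


def is_non_increasing(values):
    """Hypothetical helper: True if each value is <= the one before it."""
    return all(b <= a for a, b in zip(values, values[1:]))


def loss_gaps(baseline, candidate):
    """Hypothetical helper: per-budget gap (baseline - candidate).

    Rounded to 2 decimals to hide floating-point noise from the subtraction.
    """
    return [round(b - c, 2) for b, c in zip(baseline, candidate)]


gaps = loss_gaps(VANILLA, PONDERING)
for flops, gap in zip(FLOPS, gaps):
    print(f"{flops:>3} EFLOPs: Vanilla - Pondering = {gap:.2f}")
print(f"gap range: {min(gaps):.2f} to {max(gaps):.2f}")
print("both curves non-increasing:",
      is_non_increasing(VANILLA) and is_non_increasing(PONDERING))
```

On these readings the gap stays between ~0.15 and ~0.18 across the whole plotted range.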

---

### Key Observations

1. **Performance Gap**: PonderingPythia-70M achieves **~0.15–0.18 lower loss** than Vanilla Pythia-70M at every plotted FLOP level, with the largest gap (~0.18) at 100 EFLOPs.

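2. **Efficiency**: The gap does not close as compute grows. Both curves fall by a similar total amount between 100 and 400 EFLOPs (~0.13 for Vanilla, ~0.12 for PonderingPythia-70M), so PonderingPythia-70M's advantage is a roughly constant offset rather than a steeper slope.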

3. **Diminishing Returns**: Both models show smaller loss improvements at higher FLOP levels (e.g., Vanilla's loss drops by only ~0.02 between 250 and 400 EFLOPs, versus ~0.07 between 100 and 150 EFLOPs).
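To make the slope and diminishing-returns comparisons concrete, the sketch below computes the loss drop over each 50-EFLOP interval and over selected ranges. It uses the same approximate plot readings, so the results are only as precise as those estimates; `interval_drops` and `drop_between` are illustrative helpers, not part of any library.

```python
# Approximate readings eyeballed from the plot (assumption: ~0.01 precision).
FLOPS = [100, 150, 200, 250, 300, 350, 400]  # EFLOPs
VANILLA = [2.85, 2.78, 2.75, 2.74, 2.73, 2.72, 2.72]
PONDERING = [2.67, 2.63, 2.60, 2.58, 2.57, 2.56, 2.55]


def interval_drops(values):
    """Hypothetical helper: loss reduction over each consecutive interval."""
    return [round(a - b, 2) for a, b in zip(values, values[1:])]


def drop_between(flops, values, start, end):
    """Hypothetical helper: loss reduction from budget `start` to budget `end`."""
    return round(values[flops.index(start)] - values[flops.index(end)], 2)


print("Vanilla per-interval drops:  ", interval_drops(VANILLA))
print("Pondering per-interval drops:", interval_drops(PONDERING))
for name, losses in (("Vanilla", VANILLA), ("Pondering", PONDERING)):
    total = drop_between(FLOPS, losses, 100, 400)
    late = drop_between(FLOPS, losses, 250, 400)
    print(f"{name}: 100->400 drop {total:.2f}, 250->400 drop {late:.2f}")
```

On these readings, Vanilla's per-interval drops shrink from ~0.07 to ~0.00 and PonderingPythia-70M's from ~0.04 to ~0.01, which is the diminishing-returns pattern described above.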

---

### Interpretation

The graph indicates that **PonderingPythia-70M** is more compute-efficient than the Vanilla version: it reaches lower loss at every plotted budget, and at 100 EFLOPs (~2.67) it already beats Vanilla's best reading at 400 EFLOPs (~2.72). This suggests that architectural or training changes in PonderingPythia-70M improve performance per FLOP. The flattening of both curves at higher FLOPs implies that, at this model size, additional compute yields progressively smaller loss reductions.
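The fewer-FLOPs claim can be checked with a simple iso-loss comparison: take Vanilla's best reading as a target and find the smallest plotted budget at which each model reaches it. This is a rough sketch under two assumptions: the eyeballed readings are accurate to about 0.01, and the x-axis FLOPs are directly comparable between the two models. It checks only the plotted budgets (no interpolation), and `min_flops_to_reach` is a hypothetical helper.

```python
# Approximate readings eyeballed from the plot (assumption: ~0.01 precision).
FLOPS = [100, 150, 200, 250, 300, 350, 400]  # EFLOPs
VANILLA = [2.85, 2.78, 2.75, 2.74, 2.73, 2.72, 2.72]
PONDERING = [2.67, 2.63, 2.60, 2.58, 2.57, 2.56, 2.55]


def min_flops_to_reach(flops, losses, target):
    """Hypothetical helper: smallest plotted budget with loss <= target, else None.

    Only the plotted budgets are checked; there is no interpolation between points.
    """
    for budget, loss in zip(flops, losses):
        if loss <= target:
            return budget
    return None


target = min(VANILLA)  # Vanilla's best reading (~2.72)
vanilla_budget = min_flops_to_reach(FLOPS, VANILLA, target)
pondering_budget = min_flops_to_reach(FLOPS, PONDERING, target)
print(f"Target loss: {target}")
print(f"Vanilla first reaches it at {vanilla_budget} EFLOPs")
print(f"PonderingPythia-70M reaches it by {pondering_budget} EFLOPs")
# Assumes x-axis FLOPs are comparable across the two models.
print(f"Compute ratio: {vanilla_budget / pondering_budget:.1f}x")
```

With these readings, Vanilla first reaches ~2.72 at 350 EFLOPs while PonderingPythia-70M is already below it at 100 EFLOPs. Because 100 EFLOPs is the smallest plotted budget, the ~3.5x ratio is likely a lower bound within this chart.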

The consistent advantage of PonderingPythia-70M across all FLOP levels suggests it may be preferable when the goal is the lowest loss under a fixed compute budget.