## Heatmap: Neural Network Parameter Importance Across Layers

### Overview

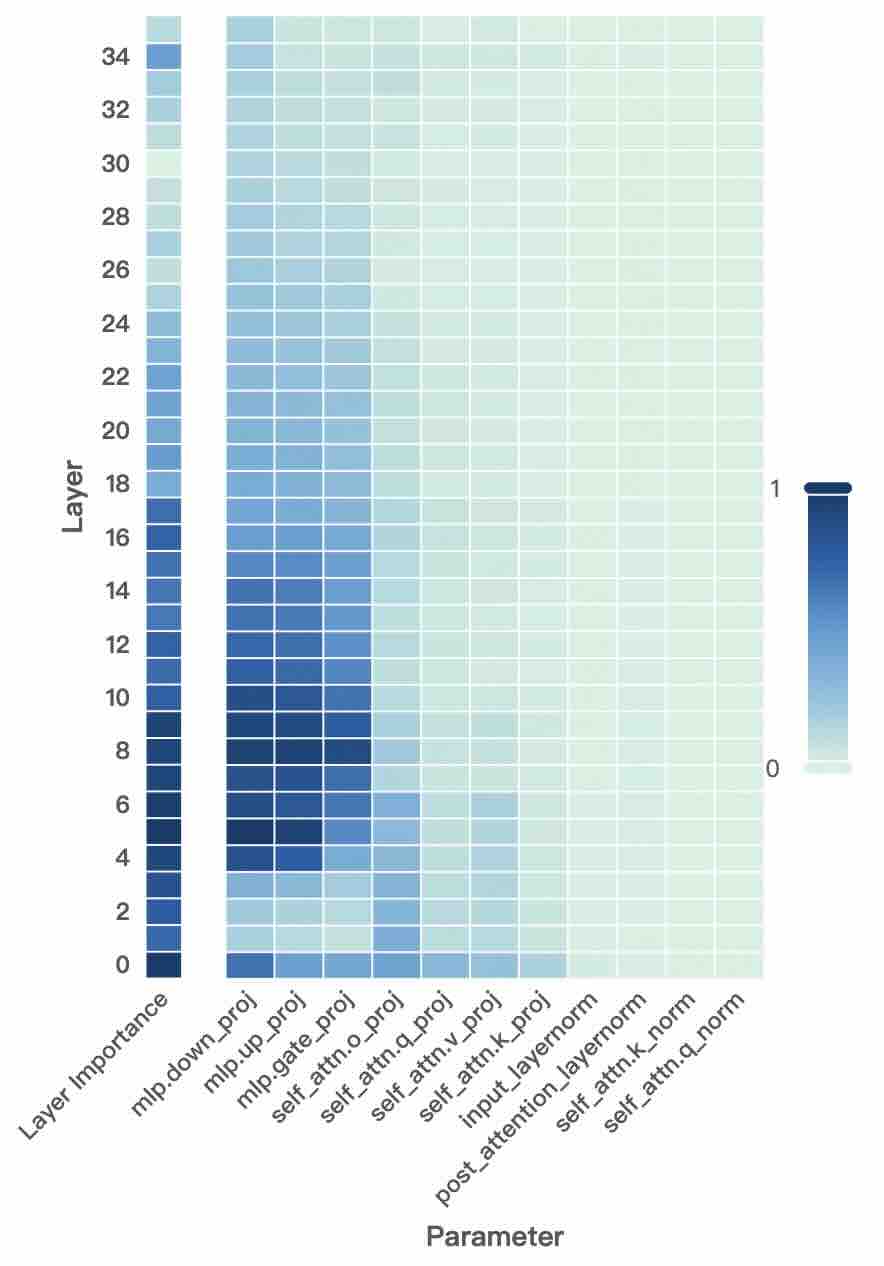

The image is a heatmap of relative parameter importance across the layers of a neural network. The parameter names on the x-axis (for example `mlp.gate_proj` and `self_attn.q_proj`) point to a transformer-based model. Color encodes the importance value: darker blue indicates higher importance (closer to 1), and lighter greenish-blue indicates lower importance (closer to 0).

### Components/Axes

* **Y-Axis (Vertical):** Labeled **"Layer"**. It represents the depth of the network, with layer numbers increasing from bottom to top. The axis is marked with even numbers: 0, 2, 4, 6, 8, 10, 12, 14, 16, 18, 20, 22, 24, 26, 28, 30, 32, 34.

* **X-Axis (Horizontal):** Labeled **"Parameter"**. It lists specific components or weight matrices within each layer. The labels are rotated approximately 45 degrees for readability. From left to right, the parameters are:

    1. `Layer Importance` (This appears to be a summary column for the entire layer).

    2. `mlp.down_proj`

    3. `mlp.up_proj`

    4. `mlp.gate_proj`

    5. `self_attn.o_proj`

    6. `self_attn.q_proj`

    7. `self_attn.v_proj`

    8. `self_attn.k_proj`

    9. `input_layernorm`

    10. `post_attention_layernorm`

    11. `self_attn.k_norm`

    12. `self_attn.q_norm`

* **Color Scale/Legend:** Positioned on the right side of the chart. It is a vertical bar showing a gradient from a very light greenish-blue at the bottom (labeled **0**) to a dark blue at the top (labeled **1**). This scale maps color intensity to an importance value between 0 and 1.
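The 0-to-1 color scale implies that raw importance scores were rescaled before plotting. The figure does not say how, so the following is only a minimal sketch assuming a single global min-max rescale over the whole grid; per-column or per-row normalization would be equally plausible. The function name `min_max_normalize` and the sample values are hypothetical.

```python
def min_max_normalize(scores):
    """Rescale a 2-D grid (list of rows) of raw scores to the range [0, 1]."""
    flat = [v for row in scores for v in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:
        # A constant grid carries no contrast; map every cell to 0.
        return [[0.0 for _ in row] for row in scores]
    return [[(v - lo) / (hi - lo) for v in row] for row in scores]


# Hypothetical raw scores (not read from the figure):
# rows are layers, columns are parameters.
raw = [
    [4.0, 2.0, 0.5],
    [3.0, 1.0, 0.0],
]
normalized = min_max_normalize(raw)
```

With a global rescale, the largest raw score anywhere in the grid maps to 1 and the smallest to 0, so columns with uniformly small raw scores stay uniformly light.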

### Detailed Analysis

The heatmap is a grid where each cell's color corresponds to the importance of a specific parameter at a specific layer.

* **`Layer Importance` Column (Far Left):** This column shows a strong vertical trend. Importance is highest (darkest blue) in the lowest layers (0-8), remains moderately high through the middle layers (10-20), and then gradually decreases (becomes lighter) in the highest layers (22-34). Layer 0 is the darkest cell in this column.

* **MLP Parameters (`mlp.down_proj`, `mlp.up_proj`, `mlp.gate_proj`):** These three columns show a very similar pattern. They exhibit high importance (dark blue) in the lower to middle layers, roughly from layer 4 to layer 18. The intensity peaks around layers 8-14. Importance drops off significantly in the higher layers (above 20), becoming very light.

* **Self-Attention Output & Query Projections (`self_attn.o_proj`, `self_attn.q_proj`):** These columns show moderate importance concentrated in the lower-middle layers. The darkest cells appear between layers 4 and 12, with `self_attn.q_proj` showing slightly higher intensity than `self_attn.o_proj` in that range. They fade to low importance in higher layers.

* **Self-Attention Value & Key Projections (`self_attn.v_proj`, `self_attn.k_proj`):** These parameters show lower overall importance than the previous groups. There is a faint band of slightly higher importance (light blue) in the lower layers (approximately 0-10), but it is much less pronounced than the bands in the MLP and `o_proj`/`q_proj` columns. For most layers the cells are very light (near 0).

* **Normalization Layers (`input_layernorm`, `post_attention_layernorm`, `self_attn.k_norm`, `self_attn.q_norm`):** These four rightmost columns are uniformly very light greenish-blue across all layers, indicating consistently low importance values (near 0) throughout the network. There is no significant variation by layer.

### Key Observations

1. **Layer-Depth Gradient:** There is a clear overall trend where parameters in the lower and middle layers of the network are deemed more important than those in the highest layers.

2. **Parameter-Type Hierarchy:** A distinct hierarchy of importance exists among parameter types:

    * **High Importance:** MLP projection layers (`down_proj`, `up_proj`, `gate_proj`).

    * **Moderate Importance:** Self-attention output and query projections (`o_proj`, `q_proj`).

    * **Low Importance:** Self-attention value and key projections (`v_proj`, `k_proj`).

    * **Very Low Importance:** All normalization layers.

3. **Concentration of Importance:** The most critical parameters (darkest blues) are not evenly distributed but are concentrated in a "band" spanning the lower-middle layers (approximately layers 4 through 18).

4. **Uniformity of Norm Layers:** The normalization parameters show almost no variation in importance across the entire depth of the network, suggesting they play a consistently minor role according to this metric.
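A hierarchy like the one in observation 2 could be checked numerically by averaging each column over all layers and sorting the results. The sketch below assumes the grid is available as a list of rows (one per layer); the helper name `rank_parameters` and every number in `grid` are illustrative placeholders, not values read from the figure.

```python
from statistics import mean


def rank_parameters(grid, names):
    """Return (name, mean importance) pairs sorted from most to least important."""
    columns = list(zip(*grid))  # transpose: one tuple per parameter column
    averages = [(name, mean(col)) for name, col in zip(names, columns)]
    return sorted(averages, key=lambda pair: pair[1], reverse=True)


names = ["mlp.down_proj", "self_attn.q_proj", "self_attn.k_proj", "input_layernorm"]
# Placeholder importance values: rows are layers, columns follow `names`.
grid = [
    [0.9, 0.5, 0.2, 0.0],
    [0.7, 0.6, 0.1, 0.0],
    [0.2, 0.1, 0.0, 0.0],
]
ranking = rank_parameters(grid, names)
for name, avg in ranking:
    print(f"{name}: {avg:.2f}")
```

Averaging over layers hides the depth-dependent band described in observation 3, so a per-layer view is still needed alongside this ranking.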

### Interpretation

This heatmap likely visualizes the output of a parameter-pruning or importance-scoring analysis (e.g., movement pruning, Taylor expansion, or gradient-based saliency) applied to a trained transformer model. If so, it suggests the following about the model's functional anatomy:

* **Core Computational Pathways:** The high importance of MLP projections, especially in mid-layers, indicates these components are crucial for the model's core feature transformation and processing capabilities. The network relies heavily on these non-linear transformations.

* **Selective Attention Mechanism:** Within the attention mechanism, the query (`q_proj`) and output (`o_proj`) projections are more vital than the key (`k_proj`) and value (`v_proj`) projections. This could imply that the model's ability to *form* queries and *integrate* attention results is more critical than the precise representation of keys and values for this particular task or metric.

* **Depth-Dependent Processing:** The concentration of importance in lower-middle layers aligns with theories that early-to-mid layers in deep networks are responsible for building rich, abstract representations, while the very highest layers may perform more task-specific, fine-grained adjustments that are less sensitive to individual parameter perturbation.

* **Normalization as a Stable Foundation:** The uniformly low importance of normalization layers does not mean they are unimportant for model function or training stability. Instead, it suggests that their specific parameter values are highly robust or redundant; small changes to them have minimal impact on the model's output according to this importance measure. They provide a stable, but not highly tunable, foundation.

**Notable Anomaly:** The `Layer Importance` summary column does not simply track the individual MLP columns. It is darkest at layer 0, whereas the `mlp.*` columns peak around layers 8-14. It also decays more smoothly, staying somewhat darker in the top layers where the `mlp.*` columns drop off sharply. This could indicate that the layer as a whole retains some functional significance even where specific MLP weights become less critical, possibly because the summary reflects residual connections or other components not broken out in this chart.
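The figure does not state which scoring method produced these values. As an illustration of one candidate named at the start of this section, Taylor expansion, the sketch below scores each tensor by the sum of |w · ∂L/∂w| over its elements, a common first-order estimate of how much the loss would change if a weight were removed. The function name `taylor_importance` and the weight and gradient values are hypothetical; in practice the gradients would come from an autograd framework run over calibration data.

```python
def taylor_importance(weights, grads):
    """First-order Taylor score for one tensor: sum of |w * dL/dw|."""
    return sum(abs(w * g) for w, g in zip(weights, grads))


# Hypothetical flattened (weights, gradients) pairs, keyed by (layer, parameter).
tensors = {
    (0, "mlp.down_proj"): ([0.5, -1.0], [0.2, 0.4]),
    (0, "input_layernorm"): ([1.0, 1.0], [0.01, -0.01]),
}
scores = {key: taylor_importance(w, g) for key, (w, g) in tensors.items()}
```

Under this kind of score, a tensor whose gradients are tiny is rated as unimportant even if the model cannot function without it, which is consistent with the caveat about normalization layers above.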