## Line Chart: Accuracy vs. Attack Ratio for Different Federated Learning Algorithms

### Overview

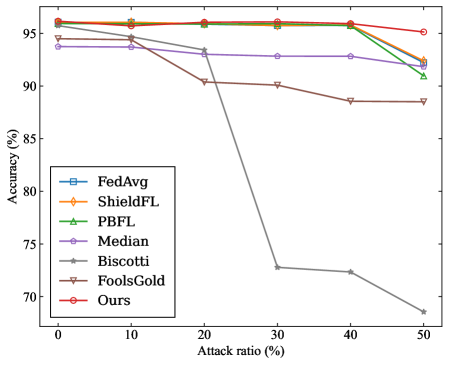

The image is a line chart comparing the accuracy of different federated learning algorithms under varying attack ratios. The x-axis shows the attack ratio (%), and the y-axis shows accuracy (%). Seven algorithms are compared: FedAvg, ShieldFL, PBFL, Median, Biscotti, FoolsGold, and "Ours". The chart shows how each algorithm's accuracy changes as the attack ratio increases.

### Components/Axes

* **X-axis:** Attack ratio (%), with markers at 0, 10, 20, 30, 40, and 50.

* **Y-axis:** Accuracy (%), with tick labels at 70, 75, 80, 85, 90, and 95. Some plotted values (about 96% at the top and about 69% at the bottom) fall slightly outside the labeled ticks.

* **Legend:** Located on the left side of the chart, listing the algorithms and their corresponding line colors and markers:

  * FedAvg (blue, square marker)
  * ShieldFL (orange, diamond marker)
  * PBFL (green, triangle marker)
  * Median (purple, circle marker)
  * Biscotti (gray, star marker)
  * FoolsGold (brown, inverted triangle marker)
  * Ours (red, circle marker)

### Detailed Analysis

* **FedAvg (blue, square marker):** The accuracy starts at approximately 94% at 0% attack ratio. It remains relatively stable, decreasing slightly to around 92% at 50% attack ratio.

* **ShieldFL (orange, diamond marker):** The accuracy starts at approximately 96% at 0% attack ratio. It remains relatively stable, decreasing slightly to around 92% at 50% attack ratio.

* **PBFL (green, triangle marker):** The accuracy starts at approximately 96% at 0% attack ratio. It remains relatively stable, decreasing slightly to around 91% at 50% attack ratio.

* **Median (purple, circle marker):** The accuracy starts at approximately 94% at 0% attack ratio. It decreases slightly to around 93% at 20% attack ratio, and then decreases slightly again to around 92% at 50% attack ratio.

* **Biscotti (gray, star marker):** The accuracy starts at approximately 96% at 0% attack ratio. It decreases sharply to approximately 73% at 30% attack ratio, and then decreases further to approximately 69% at 50% attack ratio.

* **FoolsGold (brown, inverted triangle marker):** The accuracy starts at approximately 96% at 0% attack ratio. It decreases slightly to approximately 89% at 50% attack ratio.

* **Ours (red, circle marker):** The accuracy starts at approximately 96% at 0% attack ratio. It remains relatively stable, decreasing slightly to around 95% at 40% attack ratio, and then declines to around 92% at 50% attack ratio.
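To compare robustness numerically, the sketch below tabulates the approximate readings listed above and computes each algorithm's accuracy drop between 0% and 50% attack ratio. The values were estimated by eye from the chart, so each is uncertain by roughly a point. The names `readings` and `accuracy_drop` are illustrative only.

```python
# Approximate accuracy readings (%) transcribed from the Detailed Analysis.
# Keys are attack ratios (%). Only ratios explicitly mentioned in the text are included.
readings = {
    "FedAvg":    {0: 94, 50: 92},
    "ShieldFL":  {0: 96, 50: 92},
    "PBFL":      {0: 96, 50: 91},
    "Median":    {0: 94, 20: 93, 50: 92},
    "Biscotti":  {0: 96, 30: 73, 50: 69},
    "FoolsGold": {0: 96, 50: 89},
    "Ours":      {0: 96, 40: 95, 50: 92},
}


def accuracy_drop(points: dict) -> int:
    """Accuracy lost between 0% and 50% attack ratio, in percentage points."""
    return points[0] - points[50]


drops = {name: accuracy_drop(points) for name, points in readings.items()}

# Print algorithms from most to least robust (smallest drop first).
for name, drop in sorted(drops.items(), key=lambda kv: kv[1]):
    start, end = readings[name][0], readings[name][50]
    print(f"{name:<10} {start}% -> {end}%  (drop {drop} pts)")
```

With these readings, FedAvg and Median each lose about 2 points and ShieldFL and "Ours" about 4. PBFL loses about 5, FoolsGold about 7, and Biscotti about 27.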

### Key Observations

* Biscotti's accuracy is significantly more affected by the attack ratio compared to other algorithms.

* FedAvg, ShieldFL, PBFL, Median, FoolsGold, and "Ours" algorithms maintain relatively high accuracy even with increasing attack ratios.

* The "Ours" algorithm appears to perform well, staying at or near 95% accuracy up to a 40% attack ratio and remaining around 92% at 50%.

### Interpretation

The chart compares the robustness of different federated learning algorithms against attacks. Biscotti is highly susceptible: its accuracy falls from about 96% to about 69% as the attack ratio rises from 0% to 50%. In contrast, FedAvg, ShieldFL, PBFL, Median, FoolsGold, and "Ours" are more resilient, each staying at or above roughly 89% even at a 50% attack ratio. "Ours" holds about 95% up to a 40% attack ratio, close to its accuracy without attacks, before declining to about 92% at 50%, in line with FedAvg, ShieldFL, and Median. Measured as the total drop from 0% to 50%, however, "Ours" (about 4 points) does not show the smallest decrease: FedAvg and Median each lose only about 2 points, although they also start lower, at about 94%. The data suggest that the choice of aggregation algorithm significantly affects the security and reliability of federated learning systems in adversarial environments.