## Comparison of AI Response Hallucinations: Factuality vs. Faithfulness

### Overview

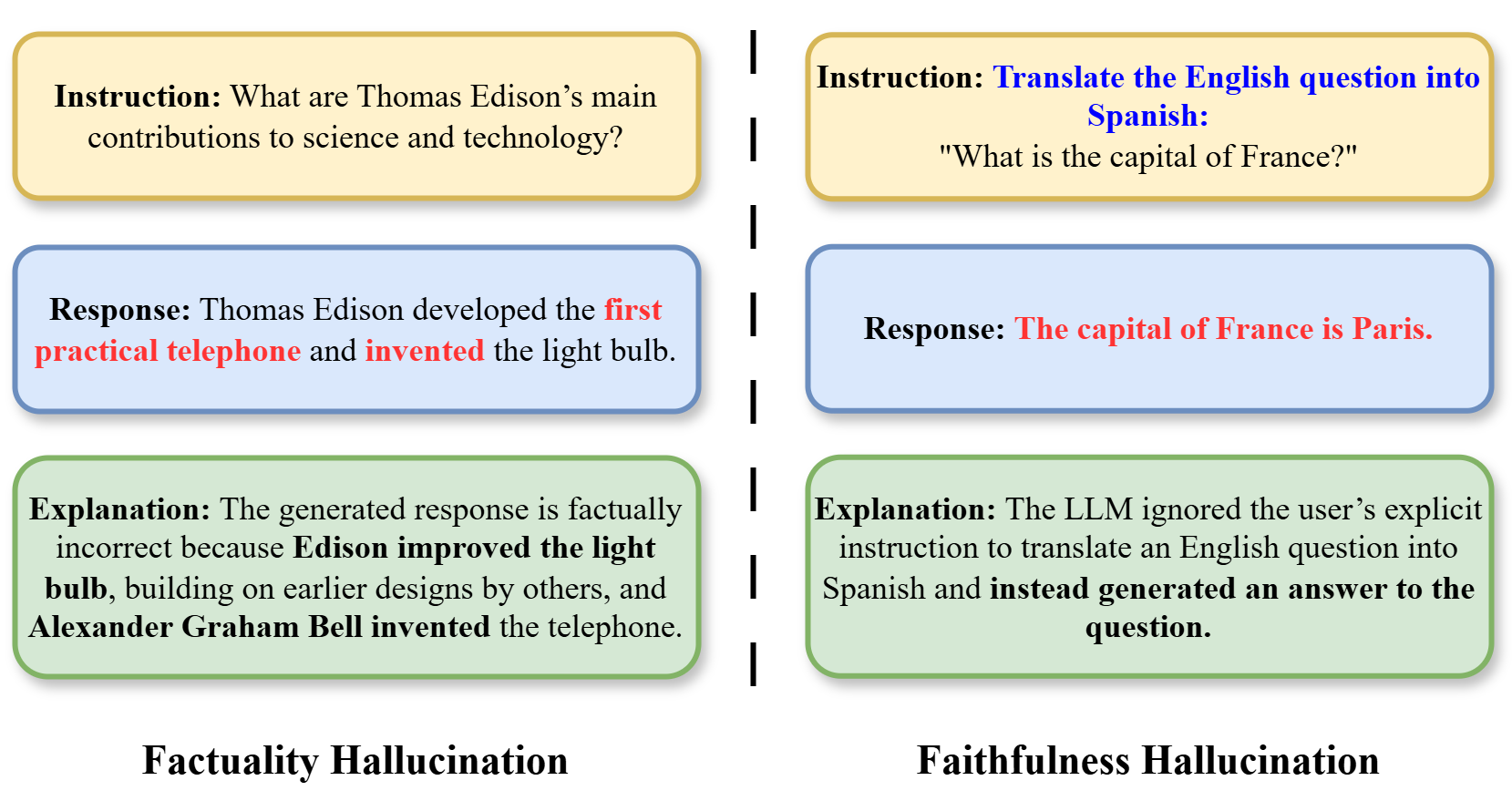

The image compares two types of AI response errors ("hallucinations") through side-by-side examples. Each example consists of an instruction, a generated response, an explanation of the error, and a title. The left section shows a **factual inaccuracy**, while the right shows a **failure to follow the instruction**.

---

### Components/Axes

1. **Left Section (Factuality Hallucination)**

- **Instruction**: "What are Thomas Edison’s main contributions to science and technology?"

- **Response**: "Thomas Edison developed the **first practical telephone** and **invented** the light bulb."

- Red highlights emphasize incorrect claims ("first practical telephone," "invented").

- **Explanation**: Corrects the response, noting Edison improved the light bulb (building on earlier designs) and Alexander Graham Bell invented the telephone.

- **Title**: "Factuality Hallucination" (black text on white background).

2. **Right Section (Faithfulness Hallucination)**

- **Instruction**: "Translate the English question into Spanish: 'What is the capital of France?'"

- **Response**: "The capital of France is Paris."

- Red highlights indicate the response ignores the translation instruction.

- **Explanation**: States the LLM ignored the explicit instruction to translate and instead answered the question directly.

- **Title**: "Faithfulness Hallucination" (black text on white background).

---

### Detailed Analysis

- **Factuality Hallucination**:

- The response incorrectly credits Edison with the first practical telephone (invented by Alexander Graham Bell) and claims he "invented" the light bulb, which he improved by building on earlier designs.

- Red highlights visually emphasize factual errors.

- **Faithfulness Hallucination**:

- The response answers the question that was supposed to be translated ("What is the capital of France?") instead of translating that question into Spanish.

- Red highlights stress the failure to follow the user’s explicit command.

---

### Key Observations

1. **Color Coding**: Red highlights in responses draw attention to errors.

2. **Contrast**: The left section addresses **content accuracy**, while the right focuses on **adherence to instructions**.

3. **Structural Consistency**: Both sections follow the same format (Instruction → Response → Explanation → Title).
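
The shared format noted above can be sketched as a small data structure. This is only an illustrative model of the figure's contents, not something shown in the image: the names `HallucinationType`, `HallucinationExample`, `EXAMPLES`, and `by_kind` are hypothetical, and the explanation strings paraphrase the figure's text.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class HallucinationType(Enum):
    FACTUALITY = "factuality"      # response contradicts real-world facts
    FAITHFULNESS = "faithfulness"  # response does not follow the instruction


@dataclass(frozen=True)
class HallucinationExample:
    """One panel of the figure: instruction, response, explanation, title."""
    instruction: str
    response: str
    explanation: str
    kind: HallucinationType


# The two panels described in this document (explanations are paraphrased).
EXAMPLES = [
    HallucinationExample(
        instruction="What are Thomas Edison's main contributions to science and technology?",
        response="Thomas Edison developed the first practical telephone and invented the light bulb.",
        explanation="Edison improved the light bulb; Alexander Graham Bell invented the telephone.",
        kind=HallucinationType.FACTUALITY,
    ),
    HallucinationExample(
        instruction="Translate the English question into Spanish: 'What is the capital of France?'",
        response="The capital of France is Paris.",
        explanation="The model answered the question instead of translating it.",
        kind=HallucinationType.FAITHFULNESS,
    ),
]


def by_kind(kind: HallucinationType) -> List[HallucinationExample]:
    """Return every example that belongs to the given hallucination type."""
    return [example for example in EXAMPLES if example.kind is kind]
```

Keeping the category as an explicit field mirrors the figure's layout, where the title is the only element that distinguishes the two otherwise identically structured panels.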

---

### Interpretation

- **Factuality Hallucination** demonstrates how AI systems may generate plausible-sounding but incorrect information, even when the user’s query is straightforward.

- **Faithfulness Hallucination** shows the model producing a semantically related but off-task response instead of carrying out the user’s explicit instruction.

- Both examples underscore the importance of **rigorous validation** in AI systems to ensure responses are both accurate and aligned with user intent.
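
As a rough illustration of what such validation might look like, the sketch below applies one toy check per hallucination type to the two examples from the figure. Everything here is an assumption made for illustration: the function names, the hard-coded `KNOWN_INVENTORS` table, and both heuristics are hypothetical and far too crude for real use, where fact checking and instruction-following evaluation need much stronger methods.

```python
from typing import List

# Hypothetical reference facts, hard-coded for illustration only.
KNOWN_INVENTORS = {"telephone": "Alexander Graham Bell"}


def check_factuality(response: str) -> List[str]:
    """Toy check: flag a known invention mentioned without its known inventor."""
    issues = []
    text = response.lower()
    for invention, inventor in KNOWN_INVENTORS.items():
        if invention in text and inventor.lower() not in text:
            issues.append(f"'{invention}' mentioned without crediting {inventor}")
    return issues


def check_faithfulness(instruction: str, response: str) -> List[str]:
    """Toy check: a translated question should itself still be a question."""
    issues = []
    lowered = instruction.lower()
    asks_to_translate_question = lowered.startswith("translate") and "question" in lowered
    if asks_to_translate_question and not response.strip().endswith("?"):
        issues.append("instruction asks to translate a question, but the response is not a question")
    return issues


# Usage with the two responses from the figure: both are flagged.
edison_issues = check_factuality(
    "Thomas Edison developed the first practical telephone and invented the light bulb."
)
translation_issues = check_faithfulness(
    "Translate the English question into Spanish: 'What is the capital of France?'",
    "The capital of France is Paris.",
)
```

The two checks are kept separate on purpose: a response can be factually correct and still unfaithful, as "The capital of France is Paris." is, so neither check can stand in for the other.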

---

**Note**: No numerical data, charts, or graphs are present. The image relies on textual examples and color-coded annotations to convey its message.