## Scatter Plot Series: SelfCheckGPT Method Scores vs. Human Scores

### Overview

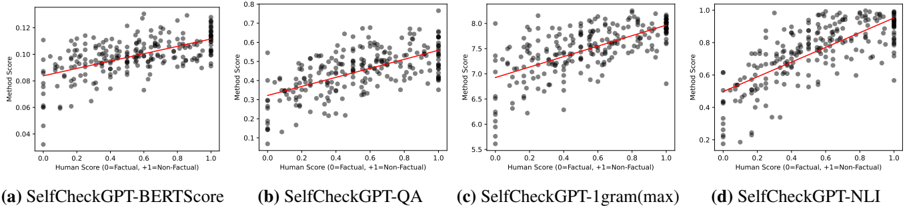

The image displays a series of four scatter plots arranged horizontally, labeled (a) through (d). Each plot compares the score of a different "SelfCheckGPT" method (y-axis) against a "Human Score" (x-axis) for the same set of data points. All plots include a red linear regression line indicating the general trend. The overall purpose is to visualize the correlation between various automated factuality-checking methods and human judgment.

### Components/Axes

* **Layout:** Four distinct scatter plots in a 1x4 horizontal grid.

* **Common X-Axis (All Plots):**

    * **Label:** `Human Score (0=Factual, 1=Non-Factual)`

    * **Scale:** Linear, ranging from 0.0 to 1.0.

    * **Tick Marks:** 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

* **Common Y-Axis Label (All Plots):** `Method Score`

* **Plot-Specific Y-Axis Scales & Titles:**

    * **Plot (a):** Title: `(a) SelfCheckGPT-BERTScore`. Y-axis scale: ~0.04 to 0.12. Ticks: 0.04, 0.06, 0.08, 0.10, 0.12.

    * **Plot (b):** Title: `(b) SelfCheckGPT-QA`. Y-axis scale: ~0.1 to 0.8. Ticks: 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8.

    * **Plot (c):** Title: `(c) SelfCheckGPT-1gram(max)`. Y-axis scale: ~5.5 to 8.0. Ticks: 5.5, 6.0, 6.5, 7.0, 7.5, 8.0.

    * **Plot (d):** Title: `(d) SelfCheckGPT-NLI`. Y-axis scale: ~0.2 to 1.0. Ticks: 0.2, 0.4, 0.6, 0.8, 1.0.

* **Data Representation:**

    * **Points:** Gray, semi-transparent circles, representing individual data samples.

    * **Trend Line:** A solid red line in each plot, representing the linear regression fit.

* **Legend:** There is no separate legend box. The method names are provided as subplot titles below each graph.
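The red trend lines are described as linear regression fits. Assuming they are ordinary least-squares (OLS) fits of method score on human score (the figure itself does not state the fitting method), the line for one panel could be computed as in the sketch below. The helper `fit_line` and the toy data are hypothetical; on Python 3.10+, `statistics.linear_regression` returns the same slope and intercept.

```python
from statistics import fmean
from typing import Sequence, Tuple


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit of ys on xs; returns (slope, intercept)."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences of at least 2 points")
    x_bar, y_bar = fmean(xs), fmean(ys)
    # Sum of squared x deviations and sum of x-y cross-deviations.
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    if sxx == 0:
        raise ValueError("x values must not all be identical")
    slope = sxy / sxx
    # The OLS line always passes through the point of means (x_bar, y_bar).
    intercept = y_bar - slope * x_bar
    return slope, intercept


# Hypothetical toy data: human scores (x) and method scores (y).
human = [0.0, 0.0, 0.5, 1.0, 1.0]
method = [0.3, 0.5, 0.4, 0.6, 0.7]
slope, intercept = fit_line(human, method)
# Fitted value at Human Score = 0 is the intercept; at 1 it is intercept + slope.
print(f"y(0) = {intercept:.3f}, y(1) = {intercept + slope:.3f}")
```

The two printed values correspond to the way the trend endpoints are reported per panel (method score at Human Score = 0 and at Human Score = 1).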

### Detailed Analysis

* **Plot (a) SelfCheckGPT-BERTScore:**

    * **Trend:** The red line shows a clear positive slope. As the Human Score increases from 0 (Factual) to 1 (Non-Factual), the BERTScore method score increases from approximately 0.07 to 0.11.

    * **Data Distribution:** Points are widely scattered. For factual content (Human Score ~0), method scores range from ~0.04 to ~0.10. For non-factual content (Human Score ~1), scores cluster between ~0.09 and ~0.12, with some outliers below.

* **Plot (b) SelfCheckGPT-QA:**

    * **Trend:** Strong positive slope. The method score rises from ~0.3 at Human Score=0 to ~0.6 at Human Score=1.

    * **Data Distribution:** Significant spread. At the factual end (0.0), scores vary from ~0.1 to ~0.5. At the non-factual end (1.0), scores are more concentrated between ~0.5 and ~0.7.

* **Plot (c) SelfCheckGPT-1gram(max):**

    * **Trend:** Positive slope. The score increases from ~6.8 at Human Score=0 to ~7.7 at Human Score=1.

    * **Data Distribution:** Points are densely packed along the trend line. The range at Human Score=0 is roughly 6.0 to 7.5, and at Human Score=1, it's roughly 7.2 to 8.0.

* **Plot (d) SelfCheckGPT-NLI:**

    * **Trend:** Clear positive slope. The score increases from ~0.5 at Human Score=0 to ~0.9 at Human Score=1. Relative to its y-axis range (0.2 to 1.0), this is among the steepest rises of the four panels.

    * **Data Distribution:** Very wide vertical spread, especially for mid-range human scores. At Human Score=0, scores span from ~0.2 to ~0.8. At Human Score=1, scores are mostly between ~0.7 and ~1.0.
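Because each panel uses its own y-axis units, the trend endpoints above cannot be compared as raw slopes. As a rough sketch, the approximate endpoints and y-axis ranges read off the figure can be turned into a raw slope and an axis-relative slope (rise divided by the visible y-axis span). The `PANELS` table and helper names are hypothetical, and since axis limits are a plotting choice, this is only a crude normalization; standardizing the raw scores would require the underlying data.

```python
# Approximate trend-line endpoints (method score at Human Score = 0 and 1)
# and y-axis tick ranges, as read off each panel in the description above.
PANELS = {
    "BERTScore": {"y0": 0.07, "y1": 0.11, "axis": (0.04, 0.12)},
    "QA": {"y0": 0.3, "y1": 0.6, "axis": (0.1, 0.8)},
    "1gram(max)": {"y0": 6.8, "y1": 7.7, "axis": (5.5, 8.0)},
    "NLI": {"y0": 0.5, "y1": 0.9, "axis": (0.2, 1.0)},
}


def raw_slope(panel: dict) -> float:
    """Rise of the trend line over a human-score run of 1.0, in method units."""
    return panel["y1"] - panel["y0"]


def axis_relative_slope(panel: dict) -> float:
    """Rise expressed as a fraction of the panel's visible y-axis span."""
    lo, hi = panel["axis"]
    return raw_slope(panel) / (hi - lo)


for name, panel in PANELS.items():
    print(f"{name:>10}: raw slope {raw_slope(panel):.2f}, "
          f"axis-relative slope {axis_relative_slope(panel):.2f}")
```

With these approximate readings, `1gram(max)` has the largest raw rise (~0.9) only because its scores live on a larger scale, while BERTScore and NLI each rise by about half of their visible axis span.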

### Key Observations

1. **Universal Positive Correlation:** All four methods show a positive correlation with human judgment. Higher method scores are associated with content humans label as non-factual (1.0).

2. **Varying Correlation Strength:** The tightness of the data points around the regression line varies. `SelfCheckGPT-1gram(max)` (c) appears to have the tightest fit (points closest to the line), while `SelfCheckGPT-NLI` (d) shows the largest variance in method scores for a given human score.

3. **Differing Score Ranges:** The absolute scale of the "Method Score" is entirely different for each technique, indicating that they measure different underlying quantities or use different normalization schemes. As a result, raw slopes cannot be compared directly across panels.

4. **Clustering at Extremes:** In several plots (notably b and d), there is a noticeable clustering of data points at the extreme human scores of 0.0 and 1.0, with fewer points in the ambiguous middle range (0.3-0.7).
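The tightness comparison in observation 2 is visual. Assuming the raw per-item scores were available, two common ways to quantify it are the Pearson correlation coefficient, which is unit-free and therefore comparable across panels, and the residual standard deviation around the least-squares line, which stays in each method's own units. The helper names and toy data below are hypothetical; on Python 3.10+, `statistics.correlation` computes the same Pearson coefficient.

```python
from math import sqrt
from statistics import fmean
from typing import Sequence


def _check(xs: Sequence[float], ys: Sequence[float], minimum: int) -> None:
    if len(xs) != len(ys) or len(xs) < minimum:
        raise ValueError(f"need equal-length sequences of at least {minimum} points")


def pearson_r(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation: unit-free, so comparable across panels."""
    _check(xs, ys, 2)
    x_bar, y_bar = fmean(xs), fmean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    syy = sum((y - y_bar) ** 2 for y in ys)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant variable")
    return sxy / sqrt(sxx * syy)


def residual_sd(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Spread of points around the OLS line, in the method's own units."""
    _check(xs, ys, 3)
    x_bar, y_bar = fmean(xs), fmean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be identical")
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sxx
    intercept = y_bar - slope * x_bar
    sse = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))
    # n - 2 degrees of freedom, because the fit estimated two parameters.
    return sqrt(sse / (len(xs) - 2))


human = [0.0, 0.0, 0.5, 1.0, 1.0]
method = [0.3, 0.5, 0.4, 0.6, 0.7]
rescaled = [10 * m + 5 for m in method]  # same ordering, different units
print(pearson_r(human, method), pearson_r(human, rescaled))      # identical
print(residual_sd(human, method), residual_sd(human, rescaled))  # differ 10x
```

The rescaled series illustrates observation 3: changing a method's units leaves the correlation unchanged but multiplies the residual spread, so only the former can rank panels with different score ranges.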

### Interpretation

The data suggests that all four SelfCheckGPT variants are useful proxies for human factuality assessment, as evidenced by the consistent positive correlation: each method assigns higher scores, on average, to content that humans judged non-factual.

The differences in scatter and slope suggest varying characteristics:

* **SelfCheckGPT-1gram(max)** appears to be the most **precise and consistent** predictor, with scores tightly following the human judgment trend.

* **SelfCheckGPT-NLI** shows a **large separation** between factual and non-factual content: its trend line rises from ~0.5 to ~0.9, about half of its visible y-axis range. This suggests strong **discriminative power**, although slope alone does not measure how well individual items are ranked. Its high variance also suggests it may be less reliable for individual predictions or may be sensitive to factors other than factuality.

* **SelfCheckGPT-BERTScore** and **SelfCheckGPT-QA** show moderate correlation and spread, positioning them as potentially balanced approaches.
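Slope reflects how far the average score moves, not how well individual items are separated. A threshold-free way to quantify discriminative power is ROC AUC: the probability that a randomly chosen non-factual item receives a higher method score than a randomly chosen factual one. The sketch below assumes that human scores are binarized at 0.5 (the figure's axis is continuous, so this threshold is an assumption) and uses a simple O(n*m) pairwise count; `roc_auc` is a hypothetical helper name.

```python
from typing import Sequence


def roc_auc(human_scores: Sequence[float], method_scores: Sequence[float],
            threshold: float = 0.5) -> float:
    """ROC AUC via pairwise comparison (Mann-Whitney form); ties count 1/2.

    Items with human score >= threshold are treated as non-factual (positive).
    """
    if len(human_scores) != len(method_scores):
        raise ValueError("score sequences must have equal length")
    pos = [m for h, m in zip(human_scores, method_scores) if h >= threshold]
    neg = [m for h, m in zip(human_scores, method_scores) if h < threshold]
    if not pos or not neg:
        raise ValueError("need at least one item on each side of the threshold")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


human = [0.0, 0.0, 0.5, 1.0, 1.0]
method = [0.3, 0.5, 0.4, 0.6, 0.7]
print(roc_auc(human, method))
print(roc_auc(human, [10 * m + 5 for m in method]))  # same: only order matters
```

Because AUC depends only on the ordering of the scores, it is unaffected by the different score scales across panels, which makes it a fairer basis than slope for comparing the four methods.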

The clustering at the extremes of the human score (0 and 1) might indicate that the dataset used for evaluation contains many clear-cut examples of factual and non-factual text, with fewer ambiguous cases. This could influence the perceived performance of the methods, since a correlation computed mostly over clear-cut examples may overstate how well a method handles borderline cases. The primary takeaway is that automated metrics can align with human judgment on factuality, but their behavior (precision vs. discriminative power) differs significantly based on the underlying technique (BERTScore similarity, QA consistency, n-gram likelihood, NLI).
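The clustering claim in observation 4 can be checked directly by counting how many items fall in the ambiguous human-score band of 0.3 to 0.7, and by isolating that band so each method can also be evaluated on borderline cases alone. A minimal sketch, with hypothetical helper names and toy data:

```python
from typing import List, Sequence, Tuple


def band_fractions(human_scores: Sequence[float], low: float = 0.3,
                   high: float = 0.7) -> Tuple[float, float, float]:
    """Fractions of items below `low`, inside [low, high], and above `high`."""
    n = len(human_scores)
    if n == 0:
        raise ValueError("no scores given")
    below = sum(1 for h in human_scores if h < low)
    above = sum(1 for h in human_scores if h > high)
    middle = n - below - above
    return below / n, middle / n, above / n


def ambiguous_subset(human_scores: Sequence[float],
                     method_scores: Sequence[float],
                     low: float = 0.3,
                     high: float = 0.7) -> List[Tuple[float, float]]:
    """(human, method) pairs whose human score lies in the band [low, high]."""
    return [(h, m) for h, m in zip(human_scores, method_scores)
            if low <= h <= high]


human = [0.0, 0.0, 0.5, 1.0, 1.0]
method = [0.3, 0.5, 0.4, 0.6, 0.7]
print(band_fractions(human))
print(ambiguous_subset(human, method))
```

If the middle fraction is small, metrics reported on the full set are dominated by clear-cut cases; evaluating the ambiguous subset separately is one way to see whether a method's agreement with humans holds up on harder examples.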