TECHNICAL ASSET FINGERPRINT

5d15c1716a8a15d6cedeea71

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

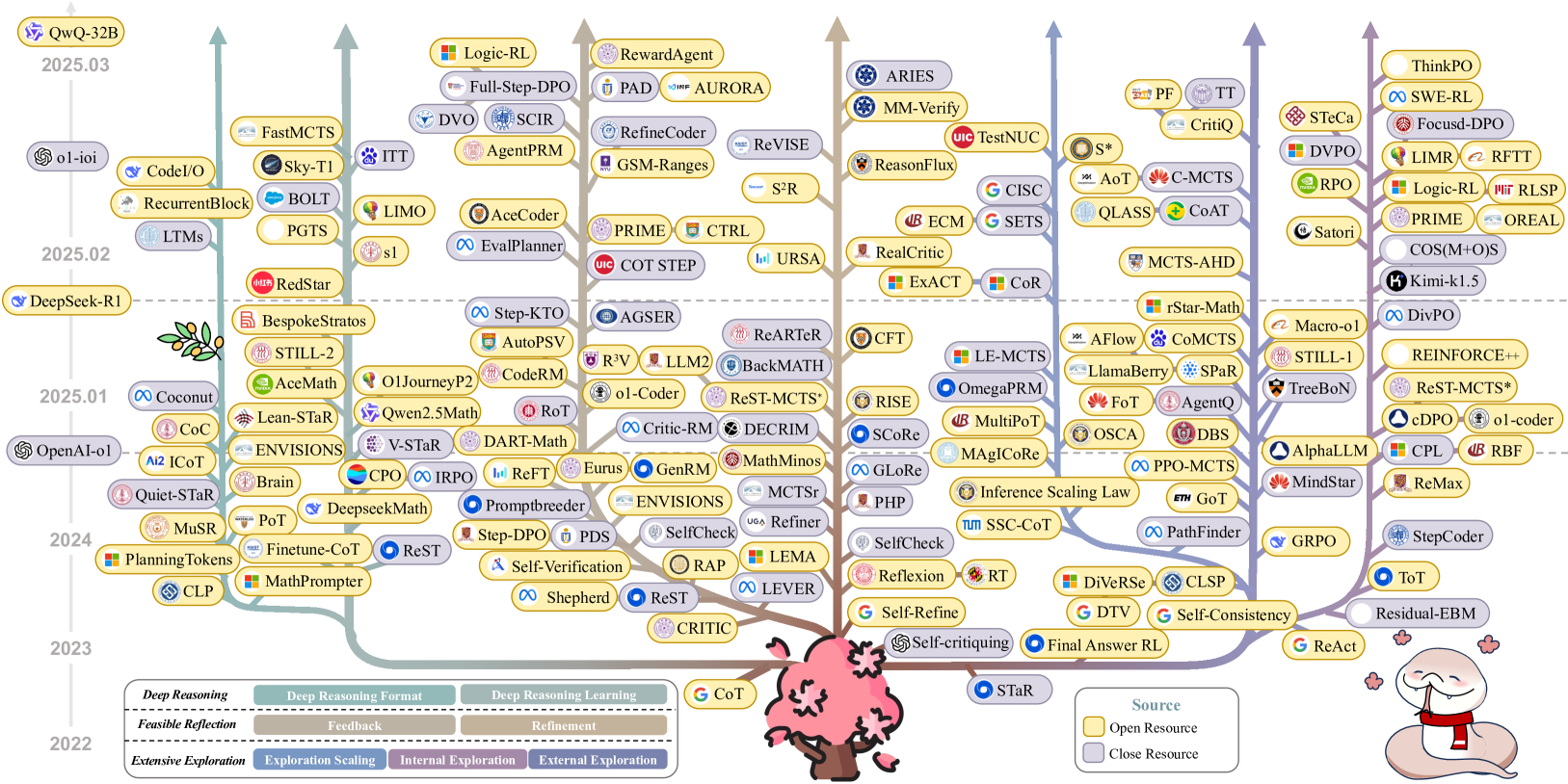

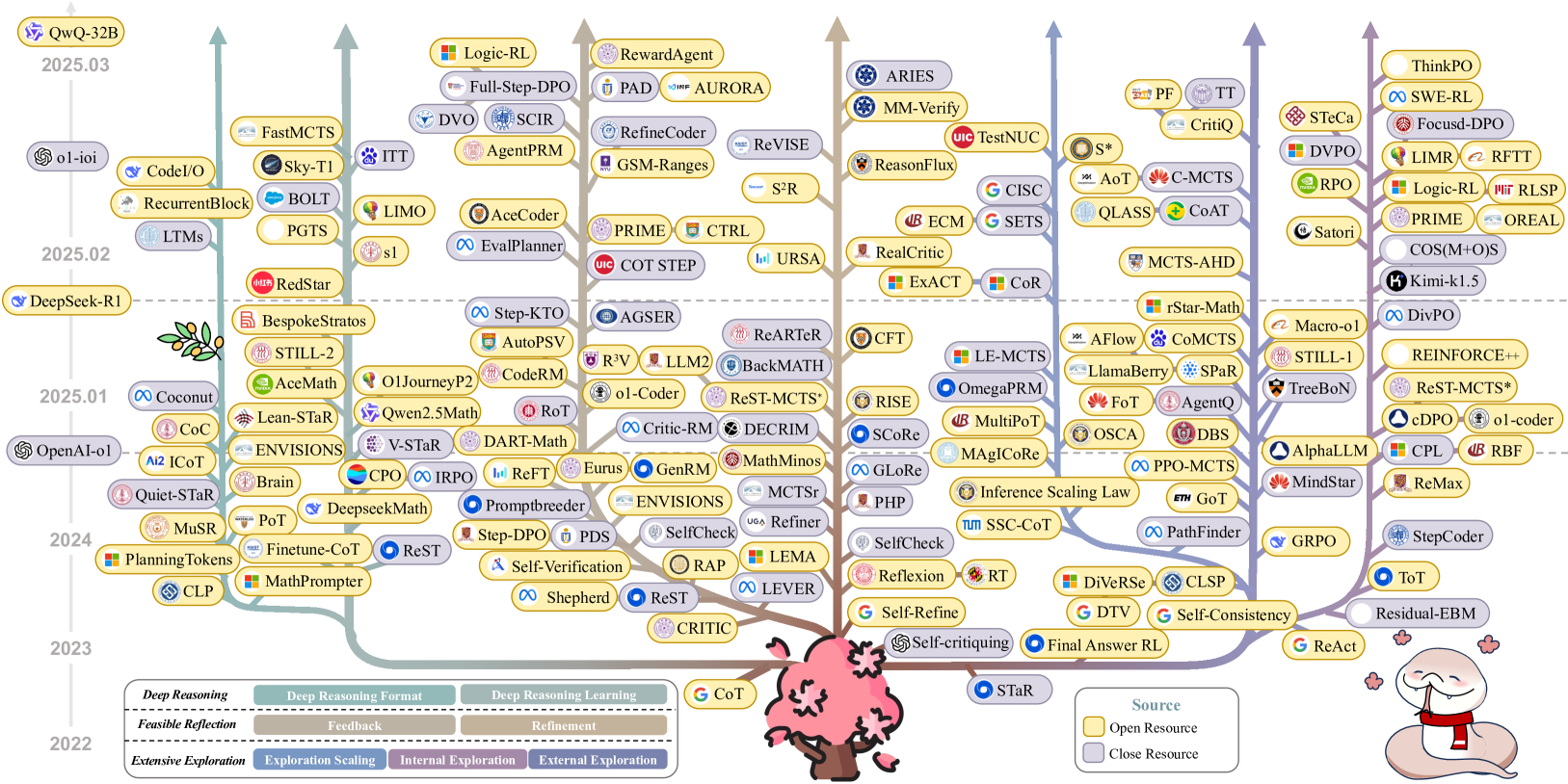

## Diagram: AI Research Project Timeline

### Overview

The image is a diagram illustrating a timeline of AI research projects, categorized by their reasoning approach (Deep Reasoning, Feasible Reflection, Extensive Exploration). The projects are visually connected by lines, resembling a tree structure, with the timeline spanning from 2022 to 2025.03. The diagram uses color-coding to distinguish between open-source and closed-source projects.

### Components/Axes

* **Timeline:** Vertical axis with markers for 2022, 2023, 2024, 2025.01, 2025.02, and 2025.03.

* **Categories:**
  * Deep Reasoning (Deep Reasoning Format, Deep Reasoning Learning)
  * Feasible Reflection (Feedback, Refinement)
  * Extensive Exploration (Exploration Scaling, Internal Exploration, External Exploration)

* **Nodes:** Represent individual AI research projects, labeled with their names (e.g., QwQ-32B, OpenAI-o1, Logic-RL).

* **Connections:** Lines connecting the nodes, indicating relationships or dependencies between projects.

* **Legend (bottom-right):**
  * Yellow: Open Resource
  * Blue: Close Resource

### Detailed Analysis

**Timeline Breakdown:**

* **2025.03:**
  * QwQ-32B

* **2025.02:**
  * DeepSeek-R1
  * LTMs
  * RecurrentBlock
  * CodeI/O
  * o1-ioi
  * FastMCTS
  * Sky-T1
  * BOLT
  * PGTS
  * ITT
  * LIMO
  * s1
  * RedStar (Chinese characters present; translation: "Red Star")

* **2025.01:**
  * OpenAI-o1
  * Coconut
  * CoC
  * Ai2 ICOT
  * Quiet-STaR
  * Lean-STaR
  * Qwen2.5Math
  * V-STaR
  * DART-Math
  * O1JourneyP2
  * Bespoke Stratos
  * STILL-2
  * AceMath

* **2024:**
  * MuSR
  * Planning Tokens
  * CLP
  * Brain
  * CPO
  * IRPO
  * ReFT
  * Eurus
  * GenRM
  * DeepseekMath
  * Promptbreeder
  * ENVISIONS
  * PoT
  * Finetune-CoT
  * ReST
  * MathPrompter

* **2023:**
  * Shepherd
  * ReST
  * CRITIC

* **2022:**
  * CoT (with a Google "G" icon)
  * STaR
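To put a number on the concentration noted under Key Observations, the breakdown above can be tallied per timeline band. This is a minimal Python sketch, assuming the labels exactly as transcribed (with the "G" before CoT treated as an organization icon rather than part of the name); `TIMELINE`, `counts_per_band`, and `names_in_multiple_bands` are illustrative names, not part of the diagram.

```python
from collections import Counter

# Node labels per timeline band, transcribed from the breakdown above.
# Assumption: the "G" shown before CoT is an organization icon, not part of the name.
TIMELINE = {
    "2025.03": ["QwQ-32B"],
    "2025.02": ["DeepSeek-R1", "LTMs", "RecurrentBlock", "CodeI/O", "o1-ioi",
                "FastMCTS", "Sky-T1", "BOLT", "PGTS", "ITT", "LIMO", "s1", "RedStar"],
    "2025.01": ["OpenAI-o1", "Coconut", "CoC", "Ai2 ICOT", "Quiet-STaR", "Lean-STaR",
                "Qwen2.5Math", "V-STaR", "DART-Math", "O1JourneyP2", "Bespoke Stratos",
                "STILL-2", "AceMath"],
    "2024": ["MuSR", "Planning Tokens", "CLP", "Brain", "CPO", "IRPO", "ReFT", "Eurus",
             "GenRM", "DeepseekMath", "Promptbreeder", "ENVISIONS", "PoT",
             "Finetune-CoT", "ReST", "MathPrompter"],
    "2023": ["Shepherd", "ReST", "CRITIC"],
    "2022": ["CoT", "STaR"],
}


def counts_per_band(timeline):
    """Number of listed labels in each timeline band."""
    return {band: len(names) for band, names in timeline.items()}


def names_in_multiple_bands(timeline):
    """Labels that the transcription places in more than one band, sorted."""
    seen = Counter(name for names in timeline.values() for name in set(names))
    return sorted(name for name, n in seen.items() if n > 1)


if __name__ == "__main__":
    for band, n in counts_per_band(TIMELINE).items():
        print(f"{band}: {n}")
    print("listed in several bands:", names_in_multiple_bands(TIMELINE))
```

Run as written, it reports 16 entries for 2024 and 13 each for 2025.01 and 2025.02, so 43 of the 48 listed entries fall in 2024 or later, and ReST is the only label placed in two bands (2023 and 2024). The tally reflects this transcription, not an exhaustive count of the diagram's nodes.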

**Project Categories and Connections:**

* **Deep Reasoning:** Projects like "QwQ-32B" and "OpenAI-o1" are positioned on the left side of the diagram.

* **Deep Reasoning Format:** Projects like "LIMO" and "Critic-RM" are positioned in the center of the diagram.

* **Deep Reasoning Learning:** Projects like "ThinkPO" and "ReAct" are positioned on the right side of the diagram.

**Source Type:**

* Open Resource: Projects with a yellow background (e.g., Logic-RL, Full-Step-DPO, RewardAgent)

* Close Resource: Projects with a blue background (e.g., DeepSeek-R1, OpenAI-o1, ITT)

### Key Observations

* The diagram shows a progression of AI research projects over time, with a concentration of projects around 2024 and 2025.

* The "tree" structure suggests a branching or evolutionary relationship between the projects.

* The color-coding provides information about the accessibility of the projects (open vs. closed source).

### Interpretation

The diagram provides a visual representation of the AI research landscape, highlighting the different approaches to deep reasoning and the relationships between various projects. The timeline indicates the relative recency of different projects, while the color-coding offers insights into the openness and accessibility of the research. The diagram suggests a growing interest and activity in AI research, with a diverse range of projects exploring different aspects of deep reasoning. The branching structure implies that later projects build upon or are inspired by earlier ones.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Scatter Plot: Large Language Model (LLM) Performance Over Time

### Overview

This image presents a scatter plot visualizing the performance of various Large Language Models (LLMs) over time, from 2022 to 2025.03. The x-axis represents a performance score, and the y-axis represents the date. Each point on the plot represents a specific LLM, and the color of the point appears to indicate a grouping or category. The plot is densely populated, suggesting a rapid increase in the number of LLMs and their performance over the observed period.

### Components/Axes

* **X-axis:** "Performance Score" - Scale is not explicitly labeled, but ranges approximately from 0 to 100.

* **Y-axis:** "Date" - Labeled with years 2022, 2023, 2024, 2025.01, 2025.02, 2025.03.

* **Legend:** Located at the bottom of the image, containing a large number of LLM names, each associated with a unique color. The legend is divided into sections based on the LLM's origin or framework (e.g., OpenAI, DeepSeek-R1, QwQ-32B).

* **Title:** "Flexible Data Scientist Scaling Coalition" at the bottom-left.

* **Source:** "Source: OpenCompass" at the bottom-right.

### Detailed Analysis or Content Details

The plot contains a multitude of LLMs. Due to the density of the plot, precise numerical values are difficult to extract. However, the following observations can be made based on the visual trends and legend:

**2022:**

* A small number of LLMs are present, with performance scores generally below ~30.

* Notable LLMs: MuStar, Planning Falcon, Brain.

**2023:**

* A significant increase in the number of LLMs.

* Performance scores generally range from ~30 to ~60.

* Notable LLMs: OpenAI-4, Llama2, DeepSeek-Coder, Vicuna.

**2024:**

* Further increase in the number of LLMs.

* Performance scores generally range from ~40 to ~80.

* Notable LLMs: DeepSeek-Math, WizardLM, Qwen1.5.

**2025.01:**

* Continued increase in LLM count.

* Performance scores generally range from ~50 to ~90.

* Notable LLMs: Coconut, Lean-STaR, STILL-2.

**2025.02:**

* The highest density of LLMs on the plot.

* Performance scores generally range from ~60 to ~95.

* Notable LLMs: FastMCTS, Sky-T1, RedStar.

**2025.03:**

* The most recent data point, with a continued high density of LLMs.

* Performance scores range from ~60 to ~98.

* Notable LLMs: Logic-RL, RewardAgent, ARIES.

**Color-Coded Groups (based on legend):**

* **QwQ-32B (Purple):** LLMs in this group show a consistent upward trend in performance, reaching scores above 90 in 2025.03.

* **o1-ioi (Green):** LLMs in this group show a similar upward trend, with performance scores increasing from around 40 in 2023 to above 80 in 2025.03.

* **DeepSeek-R1 (Orange):** LLMs in this group demonstrate a strong performance increase, with scores ranging from 50 to 90+ over the period.

* **OpenAI-4 (Blue):** LLMs in this group show a relatively stable performance, with scores around 70-80.

* **Other Groups (various colors):** A wide range of other LLMs are present, with varying performance levels and trends.

### Key Observations

* **Rapid Growth:** The number of LLMs on the plot rises sharply over time, although the plot alone does not establish an exponential rate.

* **Performance Improvement:** LLM performance is consistently improving, with higher scores observed in more recent years.

* **Competition:** The density of the plot suggests intense competition among LLM developers.

* **Grouping:** The color-coding of LLMs suggests different development groups or frameworks.

* **Outliers:** Some LLMs consistently outperform others within their respective groups.
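The "Performance Improvement" observation can be checked against the description's own approximate ranges. The Python sketch below assumes the per-period bounds stated above, with "below ~30" for 2022 encoded as the range 0 to 30; because those bounds are visual estimates, the check only tests their internal consistency. `RANGES`, `non_decreasing`, and `check_trend` are illustrative names.

```python
# Approximate (low, high) performance-score bounds per period, taken from the
# estimates above. Assumption: 2022 "below ~30" is encoded as (0, 30).
RANGES = {
    "2022": (0, 30),
    "2023": (30, 60),
    "2024": (40, 80),
    "2025.01": (50, 90),
    "2025.02": (60, 95),
    "2025.03": (60, 98),
}


def non_decreasing(values):
    """True if every value is <= the one after it."""
    return all(a <= b for a, b in zip(values, values[1:]))


def check_trend(ranges):
    """Return (lows non-decreasing, highs non-decreasing, period pairs where the low bound stalls)."""
    periods = list(ranges)  # dicts keep insertion order in Python 3.7+
    lows = [ranges[p][0] for p in periods]
    highs = [ranges[p][1] for p in periods]
    stalls = [
        (earlier, later)
        for earlier, later in zip(periods, periods[1:])
        if ranges[earlier][0] == ranges[later][0]
    ]
    return non_decreasing(lows), non_decreasing(highs), stalls


if __name__ == "__main__":
    print(check_trend(RANGES))
```

Both bounds rise monotonically, which supports the reading of steady improvement, but the lower bound stays at ~60 from 2025.02 to 2025.03, so the gain in the final step comes only from the top of the range.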

### Interpretation

The data suggests a rapidly evolving landscape in the field of Large Language Models. The rapid growth in the number of LLMs, coupled with consistent performance improvements, indicates significant investment and innovation in this area. The color-coding of LLMs provides insights into the different players and their respective strategies. The plot highlights the competitive nature of the field, with numerous LLMs vying for top performance. The "Flexible Data Scientist Scaling Coalition" title and the "OpenCompass" source line suggest that the data focuses on models useful for data science tasks and is tracked by an open-source initiative. The increasing performance scores over time demonstrate the effectiveness of ongoing research and development efforts. The plot serves as a snapshot of the current state of LLM technology, indicating that LLMs are becoming increasingly powerful and versatile, with applications across a wide range of domains.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Diagram: Taxonomy of AI Reasoning Methods and Models (2022-2025)

### Overview

This image is a complex, tree-like taxonomy diagram illustrating the evolution and categorization of AI reasoning methods, models, and research directions from 2022 to March 2025. It organizes numerous named techniques and models into vertical streams, with time progressing from bottom (2022) to top (2025.03). The diagram uses color-coding, icons, and connecting lines to show relationships and lineage.

### Components/Axes

* **Vertical Timeline (Left Axis):** Years and specific months are marked: 2022, 2023, 2024, 2025.01, 2025.02, 2025.03.

* **Horizontal Categories (Bottom Legend):** The diagram's branches are categorized into three primary research directions, each with sub-categories:
  * **Deep Reasoning** (Teal/Green palette)
    * Deep Reasoning Format
    * Deep Reasoning Learning
  * **Feasible Reflection** (Brown/Tan palette)
    * Feedback
    * Refinement
  * **Extensive Exploration** (Blue/Purple palette)
    * Exploration Scaling
    * Internal Exploration
    * External Exploration

* **Source Legend (Bottom Right):** A box defines the meaning of node border colors:
  * **Yellow Border:** Open Resource
  * **Grey Border:** Close Resource

* **Node Elements:** Each node is a rounded rectangle containing a model/method name (e.g., "CoT", "STaR", "o1-coder"). Many nodes have a small icon to their left, indicating the associated organization or framework (e.g., Google's "G", OpenAI's spiral, Anthropic's "A", various university logos).

* **Connectors:** Lines of varying colors (matching the category palettes) connect nodes, indicating relationships, inspiration, or evolutionary paths. The lines converge at a central pink tree-like structure at the bottom, symbolizing a common root or foundation.
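The three-level categorization and the border legend map naturally onto a small lookup structure. A minimal Python sketch, assuming the three directions and seven sub-categories listed in the bottom legend and the yellow/grey border legend described above; `TAXONOMY`, `SOURCE_LEGEND`, `direction_of`, and `resource_type` are illustrative names, not part of the diagram.

```python
# Top-level research directions mapped to their sub-categories, following the
# bottom legend described above.
TAXONOMY = {
    "Deep Reasoning": ["Deep Reasoning Format", "Deep Reasoning Learning"],
    "Feasible Reflection": ["Feedback", "Refinement"],
    "Extensive Exploration": [
        "Exploration Scaling",
        "Internal Exploration",
        "External Exploration",
    ],
}

# Node border colour -> resource type, per the source legend.
SOURCE_LEGEND = {"yellow": "Open Resource", "grey": "Close Resource"}


def direction_of(subcategory):
    """Return the top-level direction containing `subcategory`, or None if unknown."""
    for direction, subcategories in TAXONOMY.items():
        if subcategory in subcategories:
            return direction
    return None


def resource_type(border_colour):
    """Map a node's border colour to its legend entry (case-insensitive)."""
    return SOURCE_LEGEND.get(border_colour.lower(), "unknown")


if __name__ == "__main__":
    print(direction_of("Refinement"))
    print(resource_type("Yellow"))
```

Keeping sub-categories as plain lists under each direction mirrors the legend's two-level layout; a node would then carry one sub-category label and one border colour, from which both its research direction and its resource type follow.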

### Detailed Analysis

The diagram is densely populated. Below is a structured extraction of the labeled nodes, organized by their vertical timeline position and approximate horizontal category stream.

**2022 (Bottom)**

* **Root/Foundation:** `CoT` (Chain-of-Thought), `STaR` (Self-Taught Reasoner).

**2023**

* **Left (Deep Reasoning):** `PlanningTokens`, `CLP`, `Finetune-CoT`, `MathPrompter`, `ReST`.

* **Center-Left (Feasible Reflection):** `CRITIC`, `Shepherd`, `Self-Verification`, `PDS`, `Step-DPO`, `Promptbreeder`, `ReFT`, `IRPO`, `CPO`, `DeepseekMath`, `PoT` (Program of Thoughts), `Brain`, `ENVISIONS`, `Lean-STaR`, `CoC`, `Quiet-STaR`, `MuSR`.

* **Center-Right (Extensive Exploration):** `Self-Refine`, `Self-critiquing`, `Final Answer RL`, `G ReAct`, `DTV`, `DiVeRSe`, `CLSP`, `RT`, `Reflexion`, `SelfCheck`, `PHP`, `GLoRe`, `SCoRe`, `RISE`, `CFT`, `ReARTe`, `BackMATH`, `ReST-MCTS*`, `o1-Coder`, `DECRIM`, `MathMinos`, `MCTSr`, `Refiner`, `LEMA`, `LEVER`, `RAP`, `GenRM`, `Eurus`, `DART-Math`, `V-STaR`, `Qwen2.5Math`, `O1JourneyP2`, `s1`, `PGTS`, `LIMO`, `ITT`, `Sky-T1`, `BOLT`, `RecurrentBlock`, `CodeI/O`, `LTMs`.

* **Right (Extensive Exploration - continued):** `PathFinder`, `GRPO`, `StepCoder`, `ToT` (Tree-of-Thoughts), `Residual-EBM`, `ReMax`, `RBF`, `CPL`, `AlphaLLM`, `MindStar`, `GoT` (Graph-of-Thoughts), `Inference Scaling Law`, `SSC-CoT`, `PPO-MCTS`, `DBS`, `OSCA`, `FoT`, `AgentQ`, `MAGIcoRe`, `MultiPoT`, `OmegaPRM`, `LE-MCTS`, `CoMCTS`, `AFlow`, `LlamaBerry`, `SPaR`, `rStar-Math`, `MCTS-AHD`, `QLASS`, `CoAT`, `CISC`, `SETS`, `ECM`, `ReasonFlux`, `TestNUC`, `MM-Verify`, `ARIES`, `ReVISE`, `S²R`, `URSA`, `CTRL`, `PRIME`, `COT STEP`, `EvalPlanner`, `AceCoder`, `AGSER`, `Step-KTO`, `AutoPSV`, `CodeRM`, `R³V`, `LLM2`, `RoT`, `BespokeStratos`, `RedStar`, `STILL-2`, `AceMath`, `Coconut`, `OpenAI-o1`, `DeepSeek-R1`.

**2024**

* **Left (Deep Reasoning):** `PlanningTokens`, `CLP`.

* **Center/Right Streams:** Many models from 2023 continue or have derivatives. Notable new entries around the 2024 mark include: `Logic-RL`, `Full-Step-DPO`, `DVO`, `SCIR`, `AgentPRM`, `RefineCoder`, `GSM-Ranges`, `PAD`, `AURORA`, `RewardAgent`, `RLSP`, `OREAL`, `Satori`, `COS(M+O)S`, `Kimi-k1.5`, `DivPO`, `STILL-1`, `Marco-o1`, `REINFORCE++`, `cDPO`, `RBF`.

**2025.01**

* **Left:** `Coconut`, `OpenAI-o1`.

* **Center/Right:** `DeepSeek-R1` (positioned between 2025.01 and 2025.02).

**2025.02**

* **Left:** `o1-ioi`, `LTMs`.

* **Center/Right:** `RedStar`, `BespokeStratos`, `STILL-2`, `AceMath`, `ENVISIONS`.

**2025.03 (Top)**

* **Left:** `QwQ-32B`.

* **Center/Right:** `Logic-RL`, `Full-Step-DPO`, `DVO`, `SCIR`, `AgentPRM`, `RefineCoder`, `GSM-Ranges`, `PAD`, `AURORA`, `RewardAgent`, `ARIES`, `MM-Verify`, `TestNUC`, `ReasonFlux`, `CISC`, `SETS`, `ECM`, `RealCritic`, `ExACT`, `CoR`, `S*`, `AoT`, `C-MCTS`, `QLASS`, `CoAT`, `PF`, `TT`, `CriticQ`, `STeCa`, `DVPO`, `LIMR`, `RFTT`, `RLSP`, `PRIME`, `OREAL`, `Satori`, `COS(M+O)S`, `Kimi-k1.5`, `ThinkPO`, `SWE-RL`, `Focused-DPO`.

### Key Observations

1. **Temporal Clustering:** There is a significant density of new methods and models appearing in late 2024 and early 2025, indicating a period of rapid advancement and diversification in AI reasoning research.

2. **Categorical Overlap:** Many models, especially those related to Monte Carlo Tree Search (MCTS) variants (e.g., `ReST-MCTS*`, `CoMCTS`, `PPO-MCTS`), appear in the "Extensive Exploration" category but have connections to other streams.

3. **Prominent Organizations:** Icons for Google (G), OpenAI, Anthropic (A), and various academic institutions (e.g., CMU, Stanford, Tsinghua) are frequently attached to nodes, showing the key players in this field.

4. **Evolutionary Lines:** The connecting lines show clear evolutionary paths. For example, foundational methods like `CoT` and `STaR` at the root branch into numerous specialized techniques over time.

5. **Resource Type Distribution:** Both open-source (yellow border) and closed-source (grey border) models are intermixed throughout the taxonomy, suggesting parallel development in both domains.

### Interpretation

This diagram serves as a **conceptual map of the "reasoning" sub-field within large language model (LLM) research**. It visually argues that progress is not linear but occurs along multiple, semi-parallel tracks:

1. **Deep Reasoning:** Focuses on improving the model's internal reasoning format and learning processes (e.g., specialized training for math, code).

2. **Feasible Reflection:** Centers on mechanisms for models to critique, verify, and refine their own outputs using feedback loops.

3. **Extensive Exploration:** Emphasizes search and exploration strategies (like tree search, MCTS) to navigate large solution spaces, often leveraging external tools or verifiers.

The **central pink tree** is a powerful metaphor, suggesting that the diverse "branches" of modern reasoning techniques all grow from a common trunk of early foundational work (`CoT`, `STaR`). The explosion of branches in 2024-2025 highlights the field's shift from proving the viability of reasoning in LLMs to aggressively scaling and specializing these capabilities. The intermingling of open and closed resources indicates a vibrant, competitive ecosystem where ideas likely flow between academic and industrial labs. The diagram is less a strict hierarchy and more a **phylogenetic chart**, showing ancestry, divergence, and the complex ecosystem of ideas driving AI reasoning forward.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Flowchart: Evolution of AI Models and Resource Types (2022-2025.03)

### Overview

The image depicts a vertical timeline (2022-2025.03) with branching pathways representing AI model development. Key elements include:

- A central vertical axis labeled with years (2022-2025.03)

- Colored boxes (yellow/gray) connected by arrows, representing AI models

- A legend at the bottom explaining color coding

- Decorative elements: a pink tree with cherry blossoms and a cartoon snake

### Components/Axes

1. **Timeline Axis** (Left side):

- Vertical axis labeled with years: 2022, 2023, 2024, 2025.01, 2025.02, 2025.03

- Dotted horizontal lines separate years

2. **Model Boxes**:

- Yellow boxes: Open Resource (per legend)

- Gray boxes: Close Resource (per legend)

- Examples: "DeepSeek-R1" (2022), "OpenAI-o1" (2022), "Qwen2.5Math" (2025.01)

3. **Legend** (Bottom center):

- Yellow: Open Resource

- Gray: Close Resource

4. **Decorative Elements**:

- Pink tree with cherry blossoms (bottom center)

- Cartoon snake with red scarf (bottom right)

### Detailed Analysis

**Timeline Progression**:

- **2022**:

- Open Resource: DeepSeek-R1, CoT, MathPrompter

- Close Resource: OpenAI-o1, Quiet-STaR

- **2023**:

- Open Resource: PlanningTokens, MuSR, Step-DPO

- Close Resource: ReST, SelfCheck

- **2024**:

- Open Resource: FastMCTS, Sky-T1, LIMO

- Close Resource: ReVISE, AgentPRM

- **2025.01**:

- Open Resource: Qwen2.5Math, DART-Math

- Close Resource: ReST-MCTS*, AgentQ

- **2025.02**:

- Open Resource: Logic-RL, Full-Step-DPO

- Close Resource: RewardAgent, AURORA

- **2025.03**:

- Open Resource: ARIES, MM-Verify

- Close Resource: ThinkPO, SWE-RL

**Arrows and Relationships**:

- Arrows connect models across years, suggesting evolutionary pathways

- Some models branch into multiple successors (e.g., "ReST" → "ReST-MCTS*")

- Cross-year connections indicate iterative development
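The arrows can be modelled as a directed graph, which makes "branches into multiple successors" and "pathways converging" concrete: a node with several outgoing edges branches, and a node with several incoming edges is a convergence point. A minimal Python sketch, assuming edges are (predecessor, successor) pairs; the only edge taken from the text is ReST to ReST-MCTS*, and the `hyp-*` nodes are hypothetical placeholders, not models from the diagram. `build_graph`, `descendants`, and `parents` are illustrative names.

```python
from collections import defaultdict, deque


def build_graph(edges):
    """Adjacency list mapping each predecessor to its successors."""
    graph = defaultdict(list)
    for parent, child in edges:
        graph[parent].append(child)
    return graph


def descendants(graph, root):
    """All nodes reachable from `root` via breadth-first search, excluding `root`."""
    seen = set()
    queue = deque([root])
    while queue:
        node = queue.popleft()
        for child in graph.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    seen.discard(root)  # guard against cycles leading back to the root
    return seen


def parents(edges, node):
    """Direct predecessors of `node`; more than one means lineages converge."""
    return sorted({parent for parent, child in edges if child == node})


if __name__ == "__main__":
    # The one edge named in the text above.
    documented = [("ReST", "ReST-MCTS*")]
    print(descendants(build_graph(documented), "ReST"))
    # Hypothetical placeholder edges, purely to show branching and convergence.
    hypothetical = [("hyp-A", "hyp-B"), ("hyp-A", "hyp-C"),
                    ("hyp-B", "hyp-D"), ("hyp-C", "hyp-D")]
    print(sorted(descendants(build_graph(hypothetical), "hyp-A")))
    print(parents(hypothetical, "hyp-D"))
```

In the placeholder graph, `hyp-A` branches into two successors and `hyp-D` has two parents, which is the pattern the flowchart's branching and converging arrows describe.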

### Key Observations

1. **Resource Type Distribution**:

- 2022: 60% Open Resource (3/5 listed examples)

- 2025.03: 50% Open Resource (2/4 listed examples)

- Trend: A slight decline in the Open Resource share, though each figure rests on only four or five listed examples

2. **Model Complexity**:

- Later years show longer model names (e.g., "ReST-MCTS*")

- 2025 models include specialized variants (e.g., "ReST-MCTS*")

3. **Decorative Symbolism**:

- Cherry blossoms may symbolize growth/innovation

- Snake with red scarf could represent "hidden" or "dangerous" aspects of AI
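The percentages under Resource Type Distribution are simple ratios of the examples listed per period, and extending the same arithmetic to all six periods shows what drives the trend. A minimal Python sketch, assuming the per-period lists in the Detailed Analysis are the full sample (with names normalised to OpenAI-o1, LIMO, AURORA, and ARIES); `EXAMPLES` and `open_share` are illustrative names.

```python
# Open and Close Resource examples per period, as listed in the Detailed Analysis.
# Assumption: these short lists are the whole sample being compared.
EXAMPLES = {
    "2022": (["DeepSeek-R1", "CoT", "MathPrompter"], ["OpenAI-o1", "Quiet-STaR"]),
    "2023": (["PlanningTokens", "MuSR", "Step-DPO"], ["ReST", "SelfCheck"]),
    "2024": (["FastMCTS", "Sky-T1", "LIMO"], ["ReVISE", "AgentPRM"]),
    "2025.01": (["Qwen2.5Math", "DART-Math"], ["ReST-MCTS*", "AgentQ"]),
    "2025.02": (["Logic-RL", "Full-Step-DPO"], ["RewardAgent", "AURORA"]),
    "2025.03": (["ARIES", "MM-Verify"], ["ThinkPO", "SWE-RL"]),
}


def open_share(open_names, close_names):
    """Fraction of listed examples marked Open Resource (0.0 if none are listed)."""
    total = len(open_names) + len(close_names)
    return len(open_names) / total if total else 0.0


if __name__ == "__main__":
    for period, (open_names, close_names) in EXAMPLES.items():
        total = len(open_names) + len(close_names)
        share = open_share(open_names, close_names)
        print(f"{period}: {share:.0%} open ({len(open_names)}/{total})")
```

Every period with five listed examples comes out at 60% and every period with four at 50%, so the apparent decline tracks how many examples were listed rather than anything measured across the whole diagram.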

### Interpretation

The flowchart illustrates the evolution of AI models from 2022 to 2025, showing:

1. **Resource Shift**: While Open Resource models made up the majority of the early examples (3 of 5 in 2022), later years show parity with Close Resource models (2 of 4 in 2025.03)

2. **Specialization Trend**: Later models (2025) show more specialized variants (e.g., "ReST-MCTS*")

3. **Iterative Development**: Arrows suggest models build on predecessors, with some pathways converging (e.g., multiple models leading to "ReST-MCTS*")

4. **Symbolic Elements**: The cherry blossoms and snake may represent the dual nature of AI progress - beautiful innovation with potential risks

The data suggests a maturing AI ecosystem where resource types (Open vs. Close) become more balanced over time, with increasing specialization in later years. The decorative elements add narrative depth, hinting at both the aesthetic and perilous aspects of AI development.
