
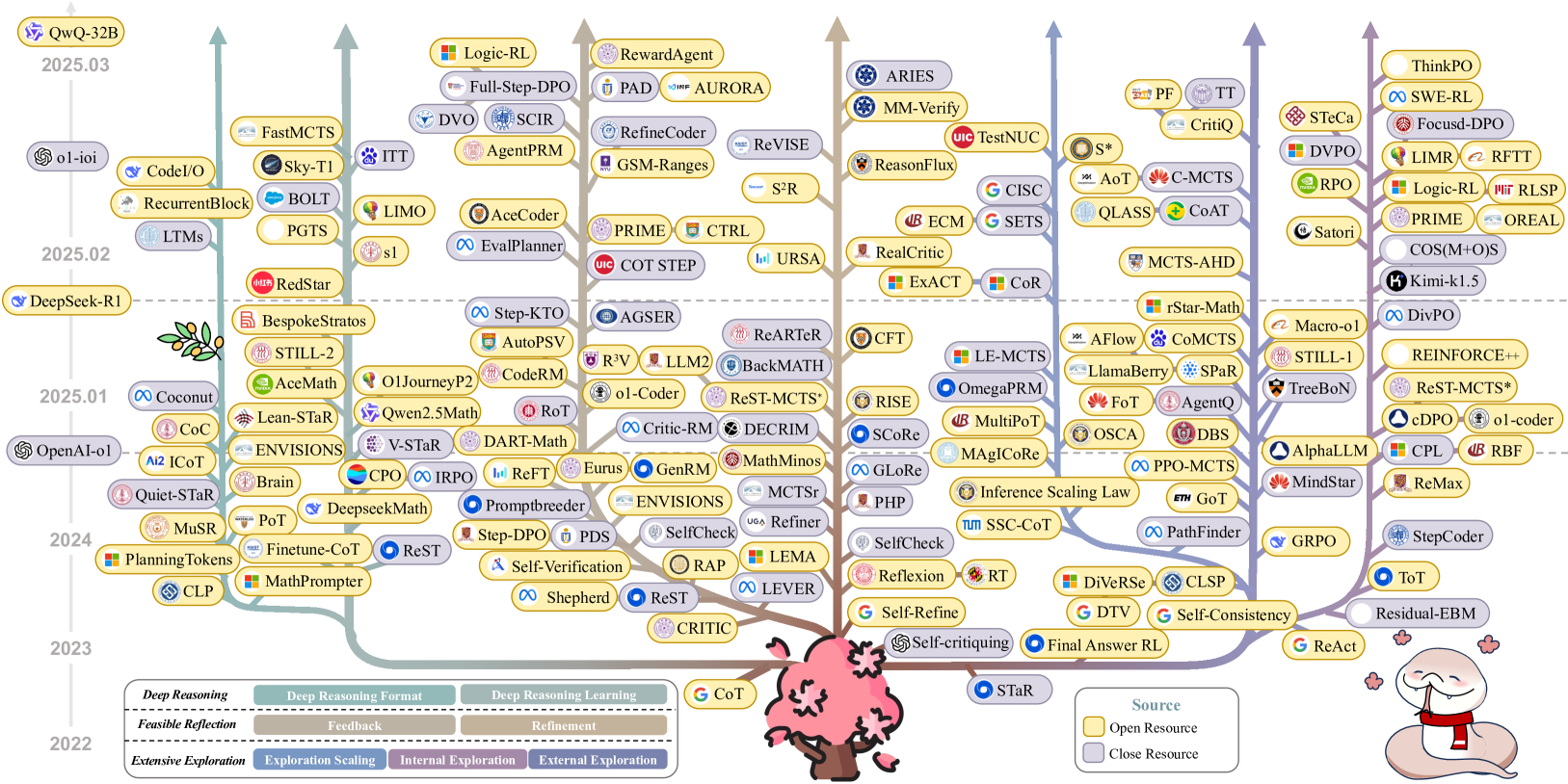

## Scatter Plot: Large Language Model (LLM) Performance Over Time

### Overview

This image presents a scatter plot visualizing the performance of various Large Language Models (LLMs) over time, from 2022 to 2025.03. The x-axis represents a performance score, and the y-axis represents the date. Each point on the plot represents a specific LLM, and the color of the point appears to indicate a grouping or category. The plot is densely populated, suggesting a rapid increase in the number of LLMs and their performance over the observed period.

### Components/Axes

* **X-axis:** "Performance Score" - Scale is not explicitly labeled, but ranges approximately from 0 to 100.

* **Y-axis:** "Date" - Labeled with the years 2022, 2023, and 2024, followed by 2025.01, 2025.02, and 2025.03 (apparently months of 2025).

* **Legend:** Located at the bottom of the image, containing a large number of LLM names, each associated with a unique color. The legend is divided into sections based on the LLM's origin or framework (e.g., OpenAI, DeepSeek-R1, QwQ-32B).

* **Title:** "Flexible Data Scientist Scaling Coalition" at the bottom-left.

* **Source:** "Source: OpenCompass" at the bottom-right.

### Detailed Analysis

The plot contains a multitude of LLMs. Due to the density of the plot, precise numerical values are difficult to extract. However, the following observations can be made based on the visual trends and legend:

**2022:**

* A small number of LLMs are present, with performance scores generally below ~30.

* Notable LLMs: MuStar, Planning Falcon, Brain.

**2023:**

* A significant increase in the number of LLMs.

* Performance scores generally range from ~30 to ~60.

* Notable LLMs: OpenAI-4, Llama2, DeepSeek-Coder, Vicuna.

**2024:**

* Further increase in the number of LLMs.

* Performance scores generally range from ~40 to ~80.

* Notable LLMs: DeepSeek-Math, WizardLM, Qwen1.5.

**2025.01:**

* Continued increase in LLM count.

* Performance scores generally range from ~50 to ~90.

* Notable LLMs: Coconut, Lean-Star, Still-2.

**2025.02:**

* The highest density of LLMs on the plot.

* Performance scores generally range from ~60 to ~95.

* Notable LLMs: FastMCTS, Sky-T1, RedStar.

**2025.03:**

* The most recent period on the plot, with a continued high density of LLMs.

* Performance scores range from ~60 to ~98.

* Notable LLMs: Logic-RL, RewardAgent, Aries.
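The upward shift in the per-period score ranges above can be made concrete with a little arithmetic. The following is a minimal sketch, assuming the approximate ranges quoted in the lists above; the names `APPROX_RANGES`, `midpoints`, and `is_non_decreasing` are hypothetical helpers introduced for illustration, and the midpoint is only a crude summary of each visual range, not a statistic taken from the plot.

```python
# Approximate (low, high) score ranges per period, copied from the lists above.
# 2022 is omitted because the text gives only an upper bound (~30) for it.
APPROX_RANGES = {
    "2023": (30, 60),
    "2024": (40, 80),
    "2025.01": (50, 90),
    "2025.02": (60, 95),
    "2025.03": (60, 98),
}


def midpoints(ranges):
    """Return the midpoint of each (low, high) range, keyed by period."""
    return {period: (low + high) / 2 for period, (low, high) in ranges.items()}


def is_non_decreasing(values):
    """Return True if each value is at least as large as the one before it."""
    values = list(values)
    return all(a <= b for a, b in zip(values, values[1:]))


if __name__ == "__main__":
    mids = midpoints(APPROX_RANGES)
    for period, mid in mids.items():
        print(f"{period}: ~{mid}")
    print("midpoints non-decreasing:", is_non_decreasing(mids.values()))
```

Because the ranges are eyeballed from a dense plot, the result supports only a qualitative reading: the midpoints rise from period to period, with the increase flattening across the 2025 labels.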

**Color-Coded Groups (based on legend):**

* **QwQ-32B (Purple):** LLMs in this group show a consistent upward trend in performance, reaching scores above 90 in 2025.03.

* **ol-ioI (Green):** LLMs in this group show a similar upward trend, with performance scores increasing from around 40 in 2023 to above 80 in 2025.03.

* **DeepSeek-R1 (Orange):** LLMs in this group demonstrate a strong performance increase, with scores ranging from 50 to 90+ over the period.

* **OpenAI-4 (Blue):** LLMs in this group show relatively stable performance, with scores around 70-80.

* **Other Groups (various colors):** A wide range of other LLMs are present, with varying performance levels and trends.

### Key Observations

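* **Rapid Growth:** The number of plotted LLMs rises sharply from one period to the next. The growth looks exponential, but exact counts cannot be read from the plot, so this is a visual impression rather than a measured rate.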

* **Performance Improvement:** LLM performance is consistently improving, with higher scores observed in more recent years.

* **Competition:** The density of the plot suggests intense competition among LLM developers.

* **Grouping:** The color-coding of LLMs suggests different development groups or frameworks.

* **Outliers:** Some LLMs consistently outperform others within their respective groups.

### Interpretation

The plot suggests a rapidly evolving landscape in the field of Large Language Models. The growing number of models, together with the upward shift in score ranges from below ~30 in 2022 to roughly 60 to 98 by 2025.03, points to sustained investment and research progress. The color-coding groups models by what appear to be development groups or frameworks, and the density of points in the 2025 periods suggests many models competing for top performance. The "Flexible Data Scientist Scaling Coalition" label and the "OpenCompass" source attribution may indicate that the data focuses on models useful for data science tasks and is tracked by an open-source initiative, although the image itself does not confirm either reading. Because precise values cannot be extracted from the plot, these trends are qualitative: they describe the state of LLM technology over the observed period rather than measured rates of improvement.