## Diagram: Question Answering Approaches

### Overview

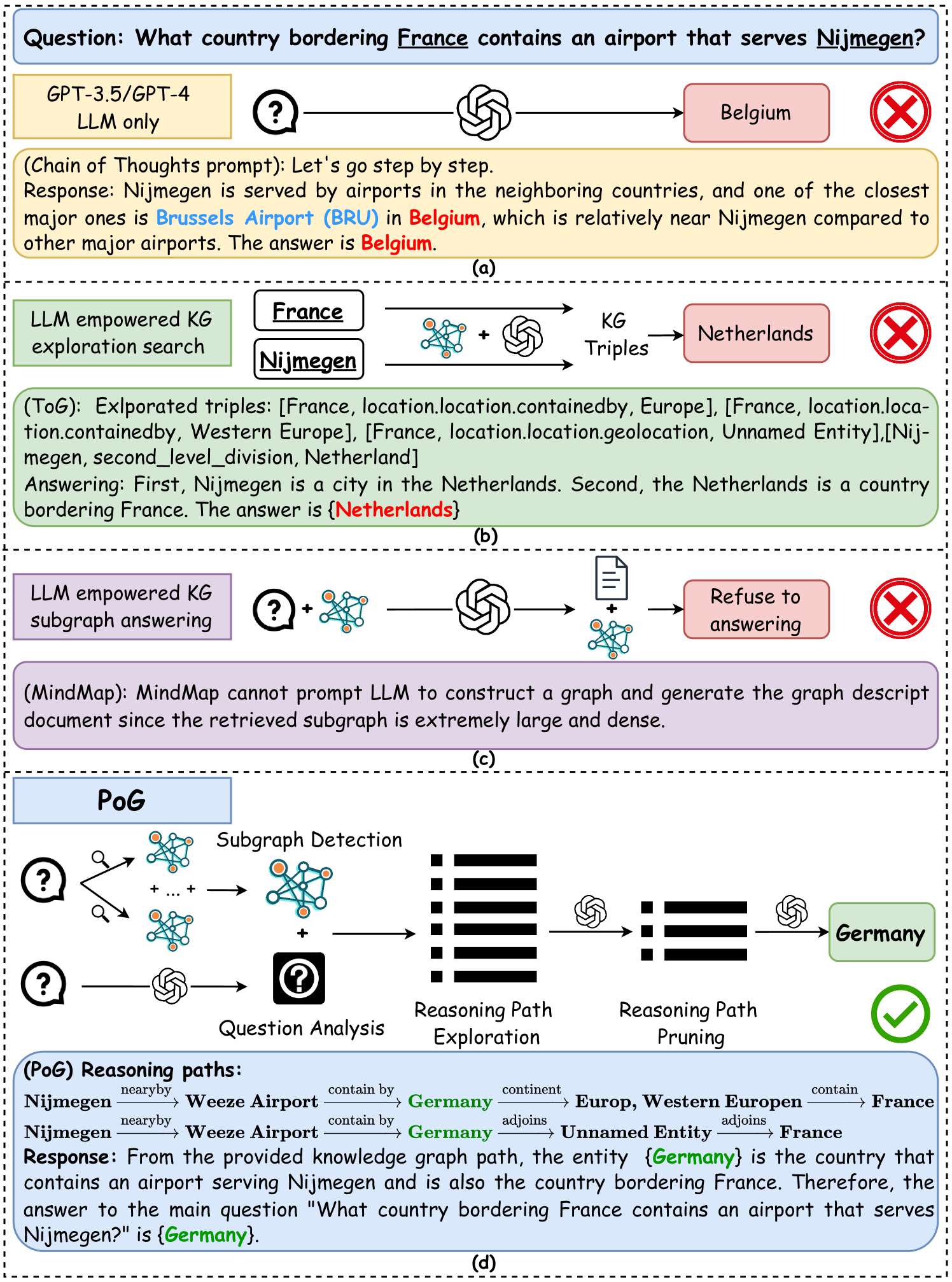

The image compares four approaches to answering the question "What country bordering France contains an airport that serves Nijmegen?": GPT-3.5/GPT-4 LLM only, LLM empowered KG exploration search, LLM empowered KG subgraph answering, and PoG (Proof of Graph) reasoning paths. For each method, the image shows its process, its final answer, and whether that answer is correct.

### Components/Axes

The image is divided into four sections, labeled (a), (b), (c), and (d). Each section represents a different approach to answering the question.

* **Section (a): GPT-3.5/GPT-4 LLM only**

  * Input: A question mark icon connected to a transformer icon (representing the LLM).

  * Output: "Belgium" (incorrect), marked with a red "X" icon.

  * Intermediate steps: A text box containing the chain-of-thought reasoning.

* **Section (b): LLM empowered KG exploration search**

  * Input: "France" and "Nijmegen" boxes, a question mark icon, a knowledge graph icon, and a transformer icon.

  * Output: "Netherlands" (incorrect), marked with a red "X" icon.

  * Intermediate steps: A text box containing the explored triples and the answering process.

* **Section (c): LLM empowered KG subgraph answering**

  * Input: A question mark icon, a knowledge graph icon, and a transformer icon.

  * Output: "Refuse to answering" (no answer given), marked with a red "X" icon.

  * Intermediate steps: A text box stating that MindMap cannot prompt the LLM to construct a graph.

* **Section (d): PoG (Proof of Graph) reasoning paths**

  * Input: A question mark icon, a knowledge graph icon, and a transformer icon.

  * Output: "Germany" (correct), marked with a green checkmark icon.

  * Intermediate steps: A diagram of the reasoning path exploration and pruning process, along with a text box containing the reasoning paths.

### Detailed Analysis or Content Details

**Section (a): GPT-3.5/GPT-4 LLM only**

* **Question:** What country bordering France contains an airport that serves Nijmegen?

* **Model:** GPT-3.5/GPT-4 LLM only

* **Process:** Chain-of-Thought prompting. The model reasons that Nijmegen is served by airports in neighboring countries and picks Brussels Airport (BRU) in Belgium as one of the closest.

* **Answer:** Belgium (Incorrect)

**Section (b): LLM empowered KG exploration search**

* **Input:** France, Nijmegen, KG Triples

* **Process:** Explores triples related to France and Nijmegen.

  * Triples: `[France, location.location.containedby, Europe]`, `[France, location.location.containedby, Western Europe]`, `[France, location.location.geolocation, Unnamed Entity]`, `[Nijmegen, second_level_division, Netherland]`

* **Reasoning:** The model concludes that Nijmegen is a city in the Netherlands and that the Netherlands borders France. None of the explored triples mentions an airport or links the Netherlands to France, so the answer is not grounded in the retrieved knowledge.

* **Answer:** Netherlands (Incorrect)
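The gap in section (b) can be checked mechanically. The sketch below is a minimal illustration, assuming the explored knowledge consists only of the four triples listed above, stored as plain Python tuples; the `EXPLORED` list and the helper names are hypothetical and not part of the method shown in the figure. It shows that no explored triple mentions an airport and that the one-hop neighbors of Nijmegen and of France share no entity, so nothing in the retrieved evidence connects an airport serving Nijmegen to a country bordering France.

```python
# Toy triple store: only the four triples explored in section (b),
# copied as they appear in the figure (including the "Netherland" spelling).
EXPLORED = [
    ("France", "location.location.containedby", "Europe"),
    ("France", "location.location.containedby", "Western Europe"),
    ("France", "location.location.geolocation", "Unnamed Entity"),
    ("Nijmegen", "second_level_division", "Netherland"),
]


def objects_of(subject, triples):
    """Return (relation, object) pairs for every triple with this subject."""
    return [(rel, obj) for subj, rel, obj in triples if subj == subject]


def mentions_airport(triples):
    """Naive check: does any subject or object name contain 'Airport'?"""
    return any("Airport" in subj or "Airport" in obj for subj, _, obj in triples)


print(objects_of("Nijmegen", EXPLORED))  # [('second_level_division', 'Netherland')]
print(mentions_airport(EXPLORED))  # False

# Entities one hop from Nijmegen vs. one hop from France: no overlap, so the
# explored triples never connect the two.
shared = {obj for _, obj in objects_of("Nijmegen", EXPLORED)} & {
    obj for _, obj in objects_of("France", EXPLORED)
}
print(shared)  # set()
```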

**Section (c): LLM empowered KG subgraph answering**

* **Process:** Attempts to construct a graph and generate a graph description document.

* **Result:** Refuses to answer because the retrieved subgraph is extremely large and dense.

**Section (d): PoG (Proof of Graph) reasoning paths**

* **Process:**

  * Subgraph Detection: Multiple knowledge graph icons are combined.

  * Question Analysis: A question mark icon is combined with a transformer icon.

  * Reasoning Path Exploration: A list icon is processed by a transformer icon.

  * Reasoning Path Pruning: A list icon is processed by a transformer icon.

* **Reasoning Paths:**

  * Nijmegen → nearby → Weeze Airport → contained by → Germany → continent → {Europe, Western Europe} → contains → France

  * Nijmegen → nearby → Weeze Airport → contained by → Germany → adjoins → Unnamed Entity → adjoins → France

* **Response:** From the provided knowledge graph path, the entity {Germany} is the country that contains an airport serving Nijmegen and is also the country bordering France. Therefore, the answer to the main question "What country bordering France contains an airport that serves Nijmegen?" is {Germany}.

* **Answer:** Germany (Correct)
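The exploration and pruning steps of section (d) can be sketched on a toy graph. This is a minimal sketch under stated assumptions, not PoG's actual implementation: the hypothetical `EDGES` list contains only the edges of the two reasoning paths above (relation names paraphrase the figure), exploration is a plain breadth-first enumeration of simple paths from Nijmegen to France, and pruning is a hand-written rule standing in for the LLM-driven pruning step shown in the figure.

```python
from collections import deque

# Hypothetical toy KG: only the edges from the two PoG reasoning paths in
# section (d). A real KG would contain vastly more triples.
EDGES = [
    ("Nijmegen", "nearby", "Weeze Airport"),
    ("Weeze Airport", "contained by", "Germany"),
    ("Germany", "continent", "Europe"),
    ("Germany", "continent", "Western Europe"),
    ("Europe", "contains", "France"),
    ("Western Europe", "contains", "France"),
    ("Germany", "adjoins", "Unnamed Entity"),
    ("Unnamed Entity", "adjoins", "France"),
]


def explore_paths(start, goal, edges, max_hops=4):
    """Breadth-first enumeration of simple paths from start to goal.

    Each path alternates entities and relations: [e0, r1, e1, r2, e2, ...].
    Paths that revisit an entity are skipped; search stops at max_hops edges.
    """
    adjacency = {}
    for subj, rel, obj in edges:
        adjacency.setdefault(subj, []).append((rel, obj))

    found = []
    queue = deque([[start]])
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            found.append(path)
            continue
        if (len(path) - 1) // 2 >= max_hops:  # number of edges so far
            continue
        for rel, obj in adjacency.get(node, []):
            if obj not in path[::2]:  # entities sit at even indices
                queue.append(path + [rel, obj])
    return found


def prune(paths):
    """Rule-based stand-in for LLM pruning (an assumption, not PoG's method).

    Keeps a path only if an airport is 'contained by' some entity on it, and
    returns (candidate_country, path) pairs.
    """
    kept = []
    for path in paths:
        entities, relations = path[::2], path[1::2]
        # relations[i] links entities[i] to entities[i + 1]
        for i, rel in enumerate(relations):
            if rel == "contained by" and "Airport" in entities[i]:
                kept.append((entities[i + 1], path))
                break
    return kept


paths = explore_paths("Nijmegen", "France", EDGES)
for p in paths:
    print(" -> ".join(p))
answers = {country for country, _ in prune(paths)}
print(answers)  # {'Germany'}
```

On this toy graph the search returns three four-hop paths: two through the continent nodes and one through `Unnamed Entity`. Only the `adjoins` path actually establishes that Germany borders France; the continent paths show only that both countries lie in (Western) Europe. The `max_hops` cap keeps enumeration bounded, which matters on a real KG where the number of candidate paths grows quickly with depth.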

### Key Observations

* The GPT-3.5/GPT-4 LLM only approach and the LLM empowered KG exploration search both give incorrect answers (Belgium and the Netherlands, respectively).

* The LLM empowered KG subgraph answering approach gives no answer because the retrieved subgraph is too large and dense.

* The PoG approach gives the correct answer, Germany, by exploring and pruning reasoning paths over the knowledge graph.

### Interpretation

The image illustrates how these approaches differ on a multi-hop question that requires reasoning over a knowledge graph. The LLM-only approach produces fluent chain-of-thought text but, without access to structured knowledge, names an airport in the wrong country. The KG exploration search adds knowledge graph triples, but the triples it explores never reach an airport that serves Nijmegen, so it answers with the Netherlands, the country Nijmegen itself is in. The KG subgraph answering approach exposes the difficulty of handling large, dense subgraphs: it refuses to answer. PoG, which explores candidate reasoning paths and prunes them before answering, is the only approach in this example to reach the correct answer, Germany, via Weeze Airport. This example suggests that pairing LLMs with explicit, pruned reasoning paths over a knowledge graph can make multi-hop question answering more accurate and easier to verify.