
## Diagram: LLM Reasoning Process for Question Answering

### Overview

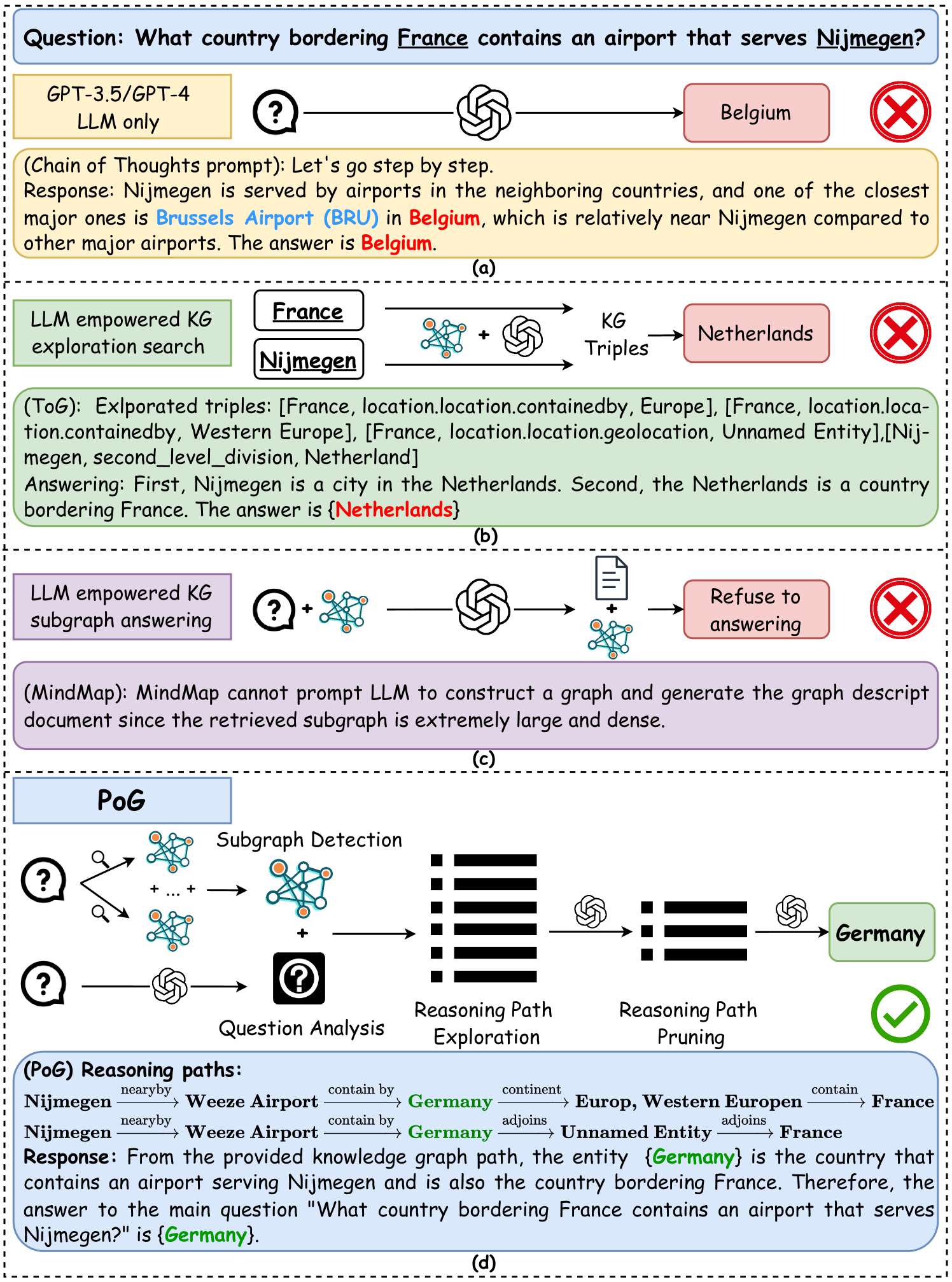

This diagram illustrates a multi-step reasoning process employed by a Large Language Model (LLM) – specifically GPT-3.5/GPT-4 – to answer a complex question: "What country bordering France contains an airport that serves Nijmegen?". The diagram is divided into four sections (a, b, c, d) representing different stages of the reasoning process, from initial response generation to final answer selection. Each stage utilizes different techniques, including chain-of-thought prompting, knowledge graph (KG) exploration, and subgraph answering.

### Components

The diagram consists of several key components:

* **Question Box:** Top-left corner, containing the initial question.

* **LLM Blocks:** Representing the LLM's processing steps.

* **Knowledge Graph (KG) Visualizations:** Showing entities and relationships.

* **Flow Arrows:** Indicating the direction of information flow.

* **Answer Boxes:** Highlighting the LLM's responses at each stage.

* **Checkmarks/Crosses:** Indicating success or failure of a reasoning step.

* **Stage Labels:** (a), (b), (c), (d) denoting the different stages.

* **Sub-component Labels:** "Question Analysis", "Reasoning Path Exploration", "Reasoning Path Pruning".

### Detailed Analysis

**Stage (a): GPT-3.5/GPT-4 LLM only**

* **Question:** "What country bordering France contains an airport that serves Nijmegen?"

* **LLM Response:** "Nijmegen is served by airports in the neighboring countries, and one of the closest major ones is Brussels Airport (BRU) in Belgium, which is relatively near Nijmegen compared to other major airports. The answer is Belgium."

* **Output:** Belgium.

**Stage (b): LLM empowered KG exploration search**

* **Entities:** France, Nijmegen.

* **KG Triples (ToG):** “[France, location.location.containedby, Europe]”, “[France, location.location.geolocation, Unnamed Entity]”, “[Nijmegen, second_level_division, Netherlands]”.

* **LLM Response:** "First, Nijmegen is a city in the Netherlands. Second, the Netherlands is a country bordering France. The answer is {Netherlands}."

* **Output:** Netherlands.
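The gap between the retrieved triples and the stated answer can be checked mechanically. The following is a minimal sketch, assuming the three triples transcribed above are everything the model was given; the helper names `build_index`, `neighbors`, and `supports_link` are hypothetical and are not part of ToG.

```python
from collections import defaultdict

# Triples transcribed from panel (b); "Unnamed Entity" is the figure's own label.
TRIPLES = [
    ("France", "location.location.containedby", "Europe"),
    ("France", "location.location.geolocation", "Unnamed Entity"),
    ("Nijmegen", "second_level_division", "Netherlands"),
]


def build_index(triples):
    """Map each head entity to its outgoing (relation, tail) pairs."""
    index = defaultdict(list)
    for head, relation, tail in triples:
        index[head].append((relation, tail))
    return index


def neighbors(index, entity):
    """Return the tail entities reachable from `entity` in one hop."""
    return [tail for _, tail in index.get(entity, [])]


def supports_link(index, a, b):
    """True if some triple directly connects a and b, in either direction."""
    return b in neighbors(index, a) or a in neighbors(index, b)


index = build_index(TRIPLES)
print(neighbors(index, "Nijmegen"))                   # one-hop facts about Nijmegen
print(supports_link(index, "France", "Netherlands"))  # is the border claim backed by a triple?
```

Under these assumptions, the only fact about Nijmegen is its containment in the Netherlands. No triple links the Netherlands to France or mentions an airport, so the bordering step in the response comes from the model rather than from the retrieved graph.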

**Stage (c): LLM empowered KG subgraph answering**

* **Text:** "(MindMap): MindMap cannot prompt LLM to construct a large and generate the graph descript document since the retrieved subgraph is extremely large and dense."

* **Output:** Refuses to answer.
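The diagram does not show how the refusal is triggered. One plausible way to model it is a size budget on the serialized subgraph. The sketch below is an illustration only: the word-count estimate, the `max_words` threshold, and both function names are assumptions, not MindMap's actual mechanism.

```python
def estimate_prompt_words(triples):
    """Rough size of a subgraph once serialized as text (whitespace-separated words)."""
    return sum(len(" ".join(triple).split()) for triple in triples)


def describe_or_refuse(triples, max_words=50):
    """Return "describe" if the subgraph fits the assumed budget, else "refuse"."""
    if estimate_prompt_words(triples) > max_words:
        return "refuse"
    return "describe"


# A tiny subgraph fits; a long synthetic chain of 100 triples does not.
small = [("Nijmegen", "second_level_division", "Netherlands")]
dense = [(f"e{i}", "related_to", f"e{i + 1}") for i in range(100)]

print(describe_or_refuse(small))
print(describe_or_refuse(dense))
```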

**Stage (d): PoG (Paths-over-Graph)**

* **Reasoning Paths:**

  * Nijmegen → nearby → Weeze Airport → contain by → Germany

  * Weeze Airport → contain by → Germany

  * Germany → adjoins → Unnamed Entity → adjoins → France

* **LLM Response:** "From the provided knowledge graph path, the entity {Germany} is the country that contains an airport serving Nijmegen and also the country bordering France. Therefore, the answer to the main question 'What country bordering France contains an airport that serves Nijmegen?' is {Germany}."

* **Output:** Germany.
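The reasoning paths in panel (d) can be reproduced with a small graph search. This is a minimal sketch, assuming the edges are directed exactly as drawn and using plain breadth-first search; it is not the PoG algorithm itself, and `find_path`, `answer_from_path`, and `max_hops` are hypothetical names introduced for illustration.

```python
from collections import deque

# Edges transcribed from the reasoning paths in panel (d), treated as directed.
EDGES = [
    ("Nijmegen", "nearby", "Weeze Airport"),
    ("Weeze Airport", "contain by", "Germany"),
    ("Germany", "adjoins", "Unnamed Entity"),
    ("Unnamed Entity", "adjoins", "France"),
]


def find_path(edges, start, goal, max_hops=4):
    """Breadth-first search returning [entity, relation, entity, ...] or None."""
    adjacency = {}
    for head, relation, tail in edges:
        adjacency.setdefault(head, []).append((relation, tail))

    queue = deque([(start, [start])])
    visited = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        hops_so_far = (len(path) - 1) // 2
        if hops_so_far >= max_hops:
            continue  # do not expand beyond the hop budget
        for relation, tail in adjacency.get(node, []):
            if tail not in visited:
                visited.add(tail)
                queue.append((tail, path + [relation, tail]))
    return None


def answer_from_path(path):
    """Pick the entity that is reached via "contain by" and left via "adjoins"."""
    for i in range(1, len(path) - 2, 2):
        if path[i] == "contain by" and path[i + 2] == "adjoins":
            return path[i + 1]
    return None


path = find_path(EDGES, "Nijmegen", "France")
print(" → ".join(path))
print(answer_from_path(path))
```

The selected entity satisfies both constraints of the question at once: it contains the airport near Nijmegen and it connects onward to France, which is the property the LLM response in this panel relies on.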

### Key Observations

* The LLM alone gives an incorrect answer (Belgium), reasoning from the proximity of Brussels Airport rather than from any retrieved facts.

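* KG exploration leads to a second answer (Netherlands), which is also incorrect: the retrieved triples place Nijmegen in the Netherlands, but none of them mentions an airport or supports the claim that the Netherlands borders France.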

* Subgraph answering fails due to the complexity of the retrieved graph.

* The final stage, PoG (Paths-over-Graph), identifies the correct answer (Germany) by tracing explicit paths in the knowledge graph from Nijmegen through Weeze Airport to Germany, and from Germany to France.

* The diagram highlights the importance of structured knowledge representation (KG) and reasoning paths for accurate question answering.

* The diagram demonstrates the iterative nature of the LLM's reasoning process, with multiple attempts and refinements.

### Interpretation

The diagram illustrates the challenges and advancements in LLM-based question answering. The initial response demonstrates the LLM's ability to leverage general knowledge but also its susceptibility to errors. The subsequent stages showcase the benefits of integrating external knowledge sources (KG) and employing structured reasoning techniques (PoG). The failure of subgraph answering underscores the limitations of current LLMs in handling complex knowledge graphs. The final success with PoG suggests a promising direction for improving the accuracy and reliability of LLM-based question answering systems. The diagram is a visual representation of the LLM's "thought process," revealing the steps taken to arrive at a solution and the potential pitfalls encountered along the way. The use of checkmarks and crosses provides a clear indication of the success or failure of each reasoning step, allowing for a detailed analysis of the LLM's performance.