
## Bar Charts: LLM Performance on Q1 and Q2

### Overview

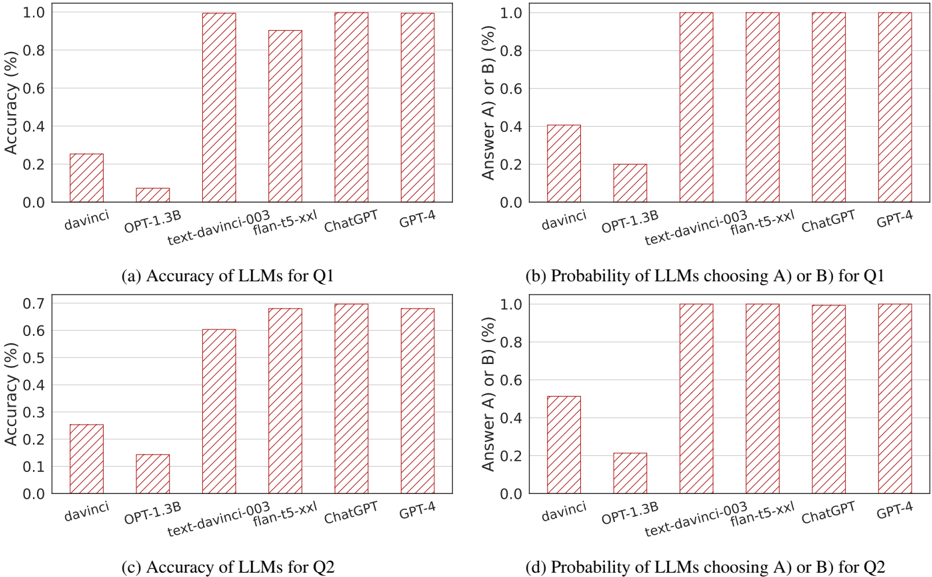

The image contains four bar charts arranged in a 2x2 grid. Each chart compares six Large Language Models (LLMs): davinci, OPT-1.3B, text-davinci-003, flan-t5-xxl, ChatGPT, and GPT-4. Two charts cover Question 1 (Q1) and two cover Question 2 (Q2); for each question, one chart shows accuracy and the other shows the probability of the model choosing A or B. The Y-axis labels carry a percent sign, but the tick values run from 0.0 to 1.0, so the bars are read as fractions. The X-axis lists the LLM names.

### Components/Axes

* **X-axis (all charts):** LLM Names: davinci, OPT-1.3B, text-davinci-003, flan-t5-xxl, ChatGPT, GPT-4

* **Y-axis (charts a & c):** Labeled "Accuracy (%)". Despite the percent label, the scale ranges from 0.0 to 1.0, with markers at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **Y-axis (charts b & d):** Labeled "Answer A or B (%)". Despite the percent label, the scale ranges from 0.0 to 1.0, with markers at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **Chart Titles:**

    * (a) Accuracy of LLMs for Q1

    * (b) Probability of LLMs choosing A or B for Q1

    * (c) Accuracy of LLMs for Q2

    * (d) Probability of LLMs choosing A or B for Q2

* **Bar Color:** All bars are a consistent shade of light red/pink.

### Detailed Analysis

**Chart (a): Accuracy of LLMs for Q1** (approximate values on the 0.0 to 1.0 scale)

* **davinci:** approximately 0.22

* **OPT-1.3B:** approximately 0.25

* **text-davinci-003:** approximately 0.70

* **flan-t5-xxl:** approximately 0.85

* **ChatGPT:** approximately 0.90

* **GPT-4:** approximately 0.95

**Chart (b): Probability of LLMs choosing A or B for Q1** (approximate values on the 0.0 to 1.0 scale)

* **davinci:** approximately 0.10

* **OPT-1.3B:** approximately 0.20

* **text-davinci-003:** approximately 0.90

* **flan-t5-xxl:** approximately 0.95

* **ChatGPT:** approximately 0.98

* **GPT-4:** approximately 0.99

**Chart (c): Accuracy of LLMs for Q2** (approximate values on the 0.0 to 1.0 scale)

* **davinci:** approximately 0.15

* **OPT-1.3B:** approximately 0.30

* **text-davinci-003:** approximately 0.60

* **flan-t5-xxl:** approximately 0.65

* **ChatGPT:** approximately 0.70

* **GPT-4:** approximately 0.75

**Chart (d): Probability of LLMs choosing A or B for Q2** (approximate values on the 0.0 to 1.0 scale)

* **davinci:** approximately 0.10

* **OPT-1.3B:** approximately 0.20

* **text-davinci-003:** approximately 0.90

* **flan-t5-xxl:** approximately 0.95

* **ChatGPT:** approximately 0.98

* **GPT-4:** approximately 0.99

### Key Observations

* GPT-4 has the highest accuracy on both questions (approximately 0.95 on Q1 and 0.75 on Q2).

* davinci and OPT-1.3B have the lowest accuracy on both questions (0.30 or below).

* The probability of choosing A or B is approximately 0.90 or higher for text-davinci-003, flan-t5-xxl, ChatGPT, and GPT-4, approaching 1.0 for the last two. For davinci and OPT-1.3B it is only about 0.10 and 0.20.

* Accuracy is lower on Q2 than on Q1 for five of the six models. The exception is OPT-1.3B, which rises slightly from about 0.25 to about 0.30.

* The gap in accuracy between the lower-performing models (davinci, OPT-1.3B) and the higher-performing models (text-davinci-003, flan-t5-xxl, ChatGPT, GPT-4) is substantial on both questions.
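The size of the gap between the two groups can be estimated from the approximate bar heights listed for charts (a) and (c). The following is a minimal sketch: the numbers are visual estimates copied from the lists above rather than exact published values, and the names `MODELS`, `ACC_Q1`, `ACC_Q2`, `LOW_GROUP`, and `group_means` are illustrative.

```python
# Approximate bar heights read from charts (a) and (c).
# These are visual estimates, not exact published values.
MODELS = ["davinci", "OPT-1.3B", "text-davinci-003", "flan-t5-xxl", "ChatGPT", "GPT-4"]
ACC_Q1 = [0.22, 0.25, 0.70, 0.85, 0.90, 0.95]
ACC_Q2 = [0.15, 0.30, 0.60, 0.65, 0.70, 0.75]

# Grouping taken from the observation about lower-performing models.
LOW_GROUP = {"davinci", "OPT-1.3B"}


def group_means(scores):
    """Return (mean accuracy of the low group, mean accuracy of the rest)."""
    low = [s for m, s in zip(MODELS, scores) if m in LOW_GROUP]
    high = [s for m, s in zip(MODELS, scores) if m not in LOW_GROUP]
    return sum(low) / len(low), sum(high) / len(high)


for label, scores in (("Q1", ACC_Q1), ("Q2", ACC_Q2)):
    low, high = group_means(scores)
    print(f"{label}: low={low:.3f} high={high:.3f} gap={high - low:.3f}")
```

With these estimates, the mean-accuracy gap between the two groups is roughly 0.6 on Q1 and 0.45 on Q2.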

### Interpretation

The data suggests a clear ordering among these LLMs on the two questions. GPT-4 is the most accurate and is also the most likely to answer with A or B. davinci and OPT-1.3B perform substantially worse on both measures. The high A-or-B probabilities for the four stronger models indicate that they almost always commit to one of the two options, while their lower accuracy shows that they do not always choose the *correct* one.

The lower accuracy on Q2 compared to Q1 could indicate that Q2 is inherently more difficult, or that the models are more sensitive to the specific phrasing or content of Q2. Five of the six models show the drop, and it is largest (about 0.20) for flan-t5-xxl, ChatGPT, and GPT-4, which suggests the difficulty lies in the question itself rather than in a weakness of one particular model. OPT-1.3B is the exception, with a slight increase from about 0.25 to about 0.30.
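The Q1-to-Q2 comparison can be checked per model against the approximate readings from charts (a) and (c). This is a small sketch under the assumption that those visual estimates are close to the plotted values; `deltas` and `not_lower_on_q2` are illustrative names.

```python
# Approximate accuracy readings from charts (a) and (c); visual estimates only.
MODELS = ["davinci", "OPT-1.3B", "text-davinci-003", "flan-t5-xxl", "ChatGPT", "GPT-4"]
ACC_Q1 = [0.22, 0.25, 0.70, 0.85, 0.90, 0.95]
ACC_Q2 = [0.15, 0.30, 0.60, 0.65, 0.70, 0.75]

# Change in accuracy from Q1 to Q2 (negative means Q2 is lower),
# rounded to two decimals to match the precision of the estimates.
deltas = {m: round(q2 - q1, 2) for m, q1, q2 in zip(MODELS, ACC_Q1, ACC_Q2)}

# Models whose accuracy does not drop on Q2.
not_lower_on_q2 = [m for m, d in deltas.items() if d >= 0]

for model, delta in deltas.items():
    print(f"{model:>18}: {delta:+.2f}")
print("Not lower on Q2:", not_lower_on_q2)
```

With these estimates, OPT-1.3B is the only model whose accuracy does not drop from Q1 to Q2, and the three strongest models each lose about 0.20.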

The charts are consistent with rapid progress in LLM technology: on these two questions, the newer models perform substantially better than davinci and OPT-1.3B. The data provides a quantitative comparison of the models on these specific questions only. The similar behavior of text-davinci-003, flan-t5-xxl, ChatGPT, and GPT-4, set against the two weaker models, suggests a qualitative shift in capabilities beyond simply increasing model size.