
## Diagram: Deep Learning Task and Side-Channel Attack Flow

### Overview

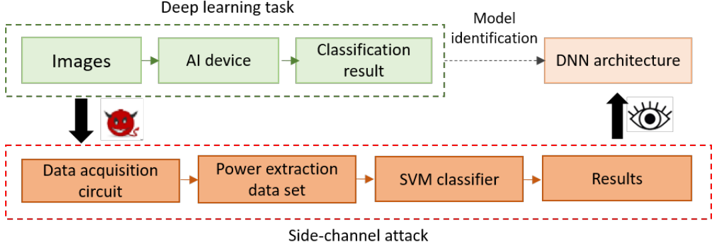

The image is a technical flowchart diagram illustrating a two-part process: a legitimate "Deep learning task" and a malicious "Side-channel attack" that targets it. The diagram shows how an attacker can use physical side-channel information (power consumption) to infer the architecture of a Deep Neural Network (DNN) being used for image classification.

### Components/Axes

The diagram is divided into two main sections, visually separated by dashed boxes and color-coding.

**1. Top Section: Deep Learning Task (Green Dashed Box)**

* **Title:** "Deep learning task" (centered above the box).

* **Components (Green Boxes, left to right):**

    * `Images`

    * `AI device`

    * `Classification result`

* **Flow:** Solid black arrows connect the components in sequence: `Images` → `AI device` → `Classification result`.

* **Output:** A dotted black arrow labeled `Model identification` points from the `Classification result` box to an orange box outside the dashed line labeled `DNN architecture`.

**2. Bottom Section: Side-channel Attack (Red Dashed Box)**

* **Title:** "Side-channel attack" (centered below the box).

* **Components (Orange Boxes, left to right):**

    * `Data acquisition circuit`

    * `Power extraction data set`

    * `SVM classifier`

    * `Results`

* **Flow:** Solid black arrows connect the components in sequence: `Data acquisition circuit` → `Power extraction data set` → `SVM classifier` → `Results`.

**3. Inter-Section Connections & Symbols**

* **Attack Vector:** A thick, solid black arrow points downward from the `Images` box in the top section to the `Data acquisition circuit` box in the bottom section. Next to this arrow is a red devil emoji (😈), symbolizing a malicious attack.

* **Inference Path:** A thick, solid black arrow points upward from the `Results` box in the bottom section to the `DNN architecture` box. Next to this arrow is an eye emoji (👁️), symbolizing observation or inference.

### Detailed Analysis

The diagram relates a standard AI inference workflow to a side-channel exploit that observes it while it runs.

* **Legitimate Process:** An AI system processes input `Images` on an `AI device` to produce a `Classification result`. This process inherently uses a specific `DNN architecture`.

* **Attack Process:** An attacker uses a `Data acquisition circuit` to measure physical signals from the AI device while it processes images. The `Power extraction data set` label implies that these signals are power consumption. The raw measurements are processed into the `Power extraction data set`, which is fed into a Support Vector Machine (`SVM classifier`) that learns to distinguish patterns in the data. The output `Results` are used to infer or identify the target `DNN architecture`.

* **Spatial Grounding:** The attack pipeline sits directly below the legitimate pipeline. The `Data acquisition circuit` is below `Images`, and the final `Results` feed back up to the `DNN architecture` box, so the attack path starts at the legitimate workflow and ends at the inferred architecture.
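
The attack process described above can be sketched in Python. This is a minimal illustration under stated assumptions, not the method used by the diagram's authors: the power traces are synthetic, the two architecture names (`small_cnn`, `deep_cnn`) with their layer counts and power levels are hypothetical, the two features (trace duration and mean power) are chosen only for illustration, and a small hand-written linear SVM stands in for whatever implementation the `SVM classifier` box refers to.

```python
import random
import statistics

# Hypothetical architectures: (number of layers, active power level per layer).
ARCHS = {"small_cnn": (4, 1.0), "deep_cnn": (8, 1.5)}
LABEL = {"small_cnn": -1, "deep_cnn": 1}

def simulate_trace(arch, rng):
    """Synthetic power trace: one active burst per layer, then a short idle gap."""
    n_layers, level = ARCHS[arch]
    trace = []
    for _ in range(n_layers):
        trace += [level + rng.gauss(0, 0.05) for _ in range(20)]  # layer executing
        trace += [0.1 + rng.gauss(0, 0.05) for _ in range(5)]     # idle between layers
    return trace

def extract_features(trace):
    """Reduce a raw trace to two illustrative features: [duration, mean power]."""
    return [float(len(trace)), statistics.fmean(trace)]

def fit_scaler(X):
    """Per-feature mean and standard deviation, used to standardize features."""
    cols = list(zip(*X))
    means = [statistics.fmean(c) for c in cols]
    stds = [statistics.pstdev(c) or 1.0 for c in cols]
    return means, stds

def scale(x, means, stds):
    return [(v - m) / s for v, m, s in zip(x, means, stds)]

def train_linear_svm(X, y, epochs=50, lr=0.1, lam=0.01, seed=0):
    """Linear SVM trained by stochastic sub-gradient descent on the hinge loss."""
    rng = random.Random(seed)
    w, b = [0.0] * len(X[0]), 0.0
    order = list(range(len(X)))
    for _ in range(epochs):
        rng.shuffle(order)
        for i in order:
            margin = y[i] * (sum(wj * xj for wj, xj in zip(w, X[i])) + b)
            w = [wj * (1 - lr * lam) for wj in w]  # L2 regularization shrinkage
            if margin < 1:                          # sample violates the margin
                w = [wj + lr * y[i] * xj for wj, xj in zip(w, X[i])]
                b += lr * y[i]
    return w, b

def predict(x, w, b):
    score = sum(wj * xj for wj, xj in zip(w, x)) + b
    return "deep_cnn" if score >= 0 else "small_cnn"

def run_attack(seed=42, n_train=20, n_test=10):
    """Acquire traces, extract features, train the SVM, and score held-out traces."""
    rng = random.Random(seed)
    train = [(extract_features(simulate_trace(a, rng)), a)
             for a in ARCHS for _ in range(n_train)]
    means, stds = fit_scaler([f for f, _ in train])
    w, b = train_linear_svm([scale(f, means, stds) for f, _ in train],
                            [LABEL[a] for _, a in train])
    test = [(extract_features(simulate_trace(a, rng)), a)
            for a in ARCHS for _ in range(n_test)]
    correct = sum(predict(scale(f, means, stds), w, b) == a for f, a in test)
    return correct / len(test)
```

Calling `run_attack()` returns the fraction of held-out synthetic traces whose architecture label was predicted correctly. The stages map onto the diagram's boxes: `simulate_trace` plays the role of the `Data acquisition circuit`, `extract_features` builds the `Power extraction data set`, and `train_linear_svm` with `predict` stands in for the `SVM classifier` and its `Results`.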

### Key Observations

1. **Attack Target:** Although the attack arrow is drawn from the `Images` box, the attack does not target the input images or the final classification result directly. Instead, it observes the physical implementation (the `AI device`) to extract information about the model's structure (`DNN architecture`).

2. **Methodology:** The attack uses a classic side-channel analysis pipeline: data acquisition → feature extraction (power traces) → machine learning classification (SVM) → result interpretation.

3. **Information Flow:** The diagram shows two parallel flows: the intended data flow (images to classification) and the adversarial information flow (power traces to architecture identification). The devil and eye emojis clearly mark the malicious intent and the observational goal, respectively.

4. **Component Isolation:** The use of separate dashed boxes (green for legitimate, red for attack) and distinct box colors (green vs. orange) effectively isolates the two systems for clear analysis.

### Interpretation

This diagram illustrates a significant security vulnerability in hardware implementations of deep learning models. It demonstrates that the physical side effects of computation (like power draw) can leak sensitive information about the software model being executed.

* **What it means:** Even if the model's weights and other parameters are kept secret, its architectural "fingerprint" can be stolen through careful measurement and analysis. This is a form of model extraction or reverse engineering.

* **Why it matters:** Such attacks can compromise intellectual property, enable adversarial attacks tailored to a specific architecture, or undermine security in sensitive applications where model secrecy is required.

* **Underlying Principle:** The diagram embodies the core principle of side-channel attacks: exploiting the gap between a system's abstract logical function (running a DNN) and its physical implementation (consuming power in a pattern correlated with that function). The SVM classifier acts as the tool that bridges this gap, learning the mapping from power patterns to architectural features.
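
The underlying principle can be made concrete with a toy leakage model. The sketch below rests on a deliberately simplified and hypothetical assumption that is not taken from the diagram: each layer draws a fixed "active" power level for a number of samples equal to its operation count, with idle gaps between layers. Under that assumption, thresholding the trace recovers both the number of layers and their relative sizes. Real measurements are noisy and far less regular, which is why a learned classifier is used in practice.

```python
def toy_power_trace(layer_ops, idle=0.1, active=1.0, gap=3):
    """Hypothetical leakage model: each layer draws `active` power for
    `ops` samples, separated by `gap` idle samples."""
    trace = [idle] * gap
    for ops in layer_ops:
        trace += [active] * ops + [idle] * gap
    return trace

def burst_lengths(trace, threshold=0.5):
    """Return the length of each contiguous run of samples above the threshold."""
    lengths, run = [], 0
    for sample in trace:
        if sample > threshold:
            run += 1
        elif run:
            lengths.append(run)
            run = 0
    if run:  # trace ended while a burst was still in progress
        lengths.append(run)
    return lengths

secret_layers = [8, 16, 4]                       # unknown to the observer
observed = burst_lengths(toy_power_trace(secret_layers))
print(len(observed), observed)                   # prints: 3 [8, 16, 4]
```

The observer never sees `secret_layers`, only the trace, yet the burst count and burst lengths reveal the layer structure. This is the gap between logical function and physical implementation that the attack exploits.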