## Diagram: Model Input/Output Flowchart with Reasoning Examples

### Overview

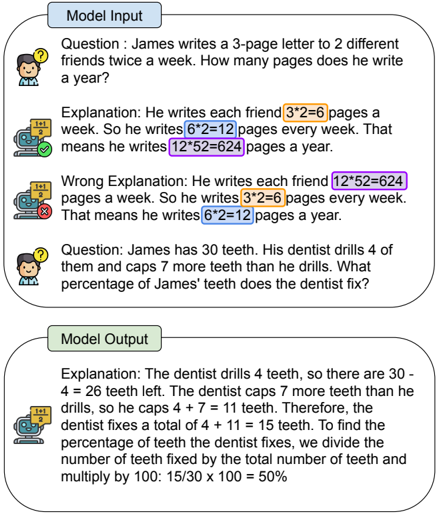

The image is a flowchart-style diagram illustrating a two-stage process: "Model Input" and "Model Output." It demonstrates how a language model processes word problems, showing examples of both correct and incorrect reasoning paths. The diagram uses text blocks, icons, and color-coded highlights to trace the flow of logic.

### Components/Axes

The diagram is vertically divided into two main rounded rectangular containers:

1. **Top Container (Light Blue Header):** Labeled "Model Input."

2. **Bottom Container (Light Green Header):** Labeled "Model Output."

Within these containers, the flow is organized into distinct text blocks, each accompanied by an icon on the left:

* **Question Icon:** A person with a question mark.

* **Correct Explanation Icon:** A computer with a green checkmark.

* **Wrong Explanation Icon:** A computer with a red "X".

* **Model Output Icon:** A computer with a green checkmark.

Text is presented in a standard sans-serif font. Key numbers and operations within the explanations are highlighted with colored backgrounds (orange, purple, blue).

### Detailed Analysis

#### **Model Input Section (Top Container)**

This section contains four sequential text blocks.

1. **First Question Block (Top):**

* **Icon:** Person with question mark.

* **Text:** "Question : James writes a 3-page letter to 2 different friends twice a week. How many pages does he write a year?"

2. **Correct Explanation Block (Middle):**

* **Icon:** Computer with green checkmark.

* **Text:** "Explanation: He writes each friend `3*2=6` pages a week. So he writes `6*2=12` pages every week. That means he writes `12*52=624` pages a year."

* **Highlights:** `3*2=6` (orange), `6*2=12` (blue), `12*52=624` (purple).

3. **Wrong Explanation Block (Bottom):**

* **Icon:** Computer with red "X".

* **Text:** "Wrong Explanation: He writes each friend `12*52=624` pages a week. So he writes `3*2=6` pages a year. That means he writes `6*2=12` pages a year."

* **Highlights:** `12*52=624` (purple), `3*2=6` (orange), `6*2=12` (blue).

4. **Second Question Block (Very Bottom of Input Section):**

* **Icon:** Person with question mark.

* **Text:** "Question : James has 30 teeth. His dentist drills 4 of them and caps 7 more teeth than he drills. What percentage of James's teeth does the dentist fix?"
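
The three highlighted equations in the correct explanation (block 2 above) can be re-derived with the units made explicit. This is a minimal sketch: the variable names are illustrative and not part of the diagram, and the 52 weeks per year figure is taken from the explanation text itself.

```python
# Quantities stated in the first question.
PAGES_PER_LETTER = 3
LETTERS_PER_FRIEND_PER_WEEK = 2   # "twice a week"
FRIENDS = 2
WEEKS_PER_YEAR = 52               # value used in the diagram's explanation

# Orange highlight: 3*2=6 pages per friend per week.
pages_per_friend_per_week = PAGES_PER_LETTER * LETTERS_PER_FRIEND_PER_WEEK

# Blue highlight: 6*2=12 pages per week across both friends.
pages_per_week = pages_per_friend_per_week * FRIENDS

# Purple highlight: 12*52=624 pages per year.
pages_per_year = pages_per_week * WEEKS_PER_YEAR

print(pages_per_friend_per_week, pages_per_week, pages_per_year)
```

Naming each intermediate value after its unit makes it easy to see why the wrong explanation fails: it pairs `12*52=624` with "pages a week" and `3*2=6` with "pages a year."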

#### **Model Output Section (Bottom Container)**

This section contains a single, detailed text block responding to the second question.

1. **Model Explanation Block:**

* **Icon:** Computer with green checkmark.

* **Text:** "Explanation: The dentist drills 4 teeth, so there are 30 - 4 = 26 teeth left. The dentist caps 7 more teeth than he drills, so he caps 4 + 7 = 11 teeth. Therefore, the dentist fixes a total of 4 + 11 = 15 teeth. To find the percentage of teeth the dentist fixes, we divide the number of teeth fixed by the total number of teeth and multiply by 100: 15/30 x 100 = 50%"
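
The arithmetic in the model's explanation can be reproduced step by step. The sketch below uses illustrative variable names that do not appear in the diagram; the numbers come from the second question and the quoted output.

```python
# Quantities stated in the second question.
total_teeth = 30
drilled = 4

# "so there are 30 - 4 = 26 teeth left" (stated in the output).
teeth_left_after_drilling = total_teeth - drilled

# "caps 7 more teeth than he drills": 4 + 7 = 11.
capped = drilled + 7

# Total teeth fixed: 4 + 11 = 15.
fixed = drilled + capped

# Percentage fixed: 15/30 x 100.
percentage_fixed = fixed / total_teeth * 100

print(teeth_left_after_drilling, capped, fixed, percentage_fixed)
```

Note that `teeth_left_after_drilling` is computed but never feeds into `percentage_fixed`, which mirrors the quoted explanation.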

### Key Observations

1. **Reasoning Contrast:** The "Model Input" section explicitly contrasts a correct and an incorrect solution path for the same problem. Each equation in the "Wrong Explanation" is arithmetically valid, but the equations are attached to the wrong sentences, producing nonsensical claims (e.g., writing each friend 624 pages a week, or 6 pages a year).

2. **Highlighting System:** The colored highlights (orange for `3*2`, blue for `6*2`, purple for `12*52`) are used consistently across the correct and incorrect explanations to track how the same numerical components are misused in the flawed logic.

3. **Model Output Structure:** The model's output for the second problem follows a clear, step-by-step arithmetic breakdown, explicitly stating each intermediate calculation (`30 - 4`, `4 + 7`, `4 + 11`) before arriving at the final percentage. The first step (`30 - 4 = 26`) is stated but not used in the final calculation, which depends only on `15/30 x 100`.

4. **Visual Flow:** The diagram uses a top-to-bottom flow within each container, with icons providing immediate visual cues about the nature of each text block (question, correct answer, wrong answer).
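
The relationship between the two explanations described in observations 1 and 2 can be checked mechanically. The following is a rough sketch, assuming the explanation texts as transcribed above; the helper names `equations` and `is_valid` are hypothetical. It extracts the highlighted equations from each text and compares their contents and order.

```python
import re

correct = ("He writes each friend 3*2=6 pages a week. "
           "So he writes 6*2=12 pages every week. "
           "That means he writes 12*52=624 pages a year.")
wrong = ("He writes each friend 12*52=624 pages a week. "
         "So he writes 3*2=6 pages a year. "
         "That means he writes 6*2=12 pages a year.")

# Matches equations of the form a*b=c, as highlighted in the diagram.
EQUATION = re.compile(r"(\d+)\*(\d+)=(\d+)")

def equations(text):
    """Return the equations in order of appearance as (a, b, c) integer tuples."""
    return [tuple(int(n) for n in match) for match in EQUATION.findall(text)]

def is_valid(equation):
    """Check that a*b really equals c."""
    a, b, c = equation
    return a * b == c

correct_eqs = equations(correct)
wrong_eqs = equations(wrong)

same_equations = sorted(correct_eqs) == sorted(wrong_eqs)  # same set of steps
same_order = correct_eqs == wrong_eqs                      # same sequence
all_valid = all(is_valid(eq) for eq in correct_eqs + wrong_eqs)

print(same_equations, same_order, all_valid)
```

Under these assumptions, both explanations contain the same three valid equations and differ only in where each one appears, which is what the consistent color highlighting makes visible.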

### Interpretation

This diagram serves as a pedagogical or diagnostic tool for understanding AI model reasoning. It demonstrates:

* **The Importance of Logical Sequence:** The core difference between the correct and wrong explanations isn't the numbers used, but the *order and relationship* between operations. The wrong explanation moves the final step (`12*52=624`) into the first sentence, where it is presented as the number of pages written to each friend per week.

* **Transparency in Reasoning:** The model's output is designed to be interpretable, showing its work step-by-step. This allows users to verify the logic, not just the final answer.

* **Error Analysis Framework:** By placing a flawed reasoning path next to a correct one, the diagram illustrates failure modes such as attaching a calculation to the wrong quantity or losing track of units (pages per week vs. pages per year).

* **Peircean Investigation:** The diagram invites abductive reasoning: working back from the evidence (the highlighted equations and where they are placed) to the likely nature of the error. Because the wrong explanation sits in the "Model Input" container, it reads as a supplied demonstration rather than something the model produced. Its equations are manipulated as symbols without being grounded in the problem's real-world context. By contrast, the output for the second problem ties each step to the narrative ("drills 4 teeth," "caps 7 more teeth than he drills") before performing the calculation.