## Diagram: Actor-Environment Interaction Loop

### Overview

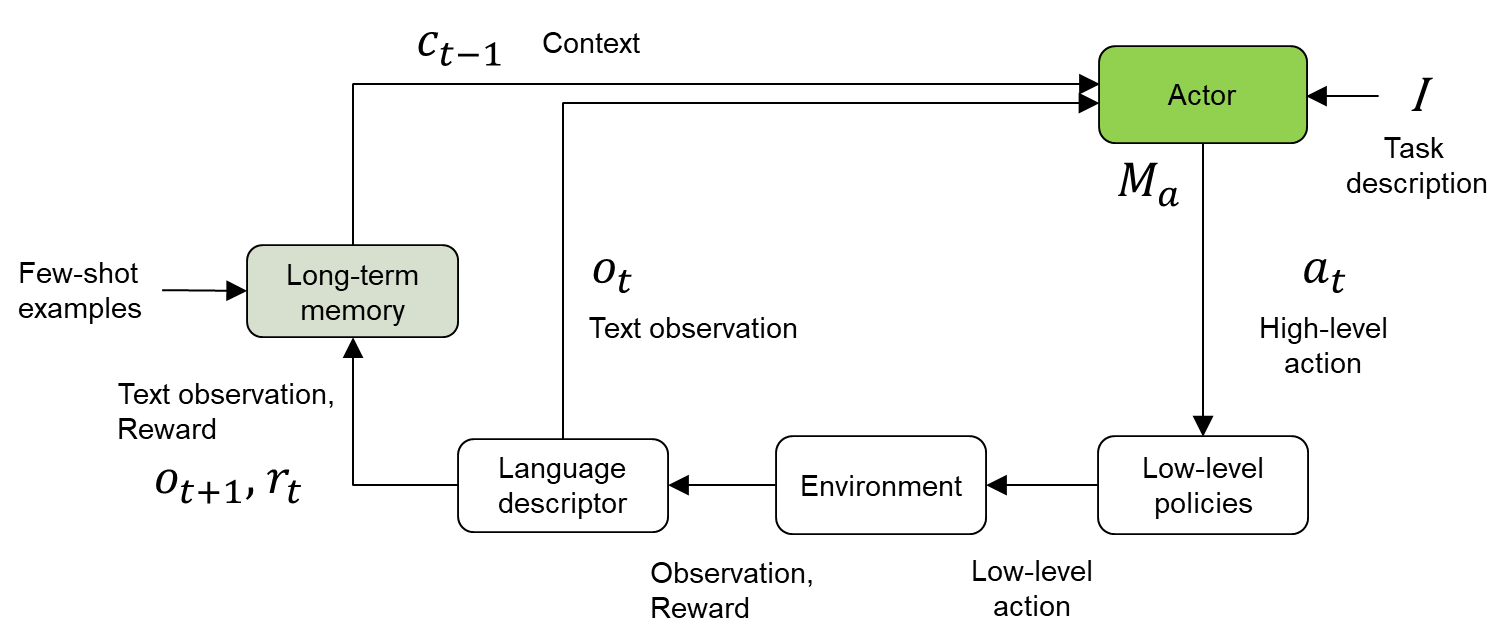

The image is a diagram illustrating an actor-environment interaction loop, incorporating elements of long-term memory, language descriptors, and low-level policies. The diagram depicts the flow of information and actions between the actor and the environment, with context and task descriptions influencing the actor's behavior.

### Components/Axes

* **Actor:** A green rounded rectangle labeled "Actor" at the top-right. It receives input from "Context" (c<sub>t-1</sub>), "Task description" (I), and "Long-term memory". It outputs "High-level action" (a<sub>t</sub>) and "M<sub>a</sub>".

* **Long-term memory:** A green rounded rectangle on the left, receiving "Few-shot examples" as input and providing context (c<sub>t-1</sub>) to the "Actor". It also receives "Text observation, Reward" (o<sub>t+1</sub>, r<sub>t</sub>).

* **Language descriptor:** A white rounded rectangle at the bottom-left, receiving "Text observation" (o<sub>t</sub>) and outputting to the "Environment".

* **Environment:** A white rounded rectangle at the bottom-center, receiving input from the "Language descriptor" ("Low-level action") and outputting "Observation, Reward" to the "Language descriptor".

* **Low-level policies:** A white rounded rectangle at the bottom-right, receiving "High-level action" (a<sub>t</sub>) from the "Actor" and outputting "Low-level action" to the "Environment".

* **Arrows:** Arrows indicate the flow of information and actions between the components.

### Detailed Analysis

* **Actor:** The "Actor" receives three inputs:

* "Context" (c<sub>t-1</sub>) from the "Long-term memory".

* "Task description" (I).

* "M<sub>a</sub>" from the "Long-term memory".

The "Actor" outputs "High-level action" (a<sub>t</sub>) to the "Low-level policies".

* **Long-term memory:** The "Long-term memory" receives "Few-shot examples" and "Text observation, Reward" (o<sub>t+1</sub>, r<sub>t</sub>). It outputs "Context" (c<sub>t-1</sub>) to the "Actor".

* **Language descriptor:** The "Language descriptor" receives "Text observation" (o<sub>t</sub>) and outputs to the "Environment".

* **Environment:** The "Environment" receives input from the "Language descriptor" ("Low-level action") and outputs "Observation, Reward" to the "Language descriptor".

* **Low-level policies:** The "Low-level policies" receives "High-level action" (a<sub>t</sub>) from the "Actor" and outputs "Low-level action" to the "Environment".

### Key Observations

* The diagram illustrates a closed-loop system where the "Actor" interacts with the "Environment" through "Low-level policies" and "Language descriptor".

* The "Long-term memory" provides context to the "Actor" based on "Few-shot examples" and past experiences ("Text observation, Reward").

* The "Language descriptor" translates "Text observation" into a format understandable by the "Environment".

### Interpretation

The diagram represents a reinforcement learning framework where an "Actor" learns to perform tasks in an "Environment". The "Actor" uses "Long-term memory" to store and retrieve relevant information, allowing it to adapt to new situations based on "Few-shot examples". The "Language descriptor" enables the system to process textual observations from the "Environment". The "Low-level policies" translate high-level actions from the "Actor" into concrete actions that can be executed in the "Environment". The loop represents the continuous interaction between the "Actor" and the "Environment", where the "Actor" learns from its experiences and improves its performance over time.