## Diagram: Reinforcement Learning with Language Models

### Overview

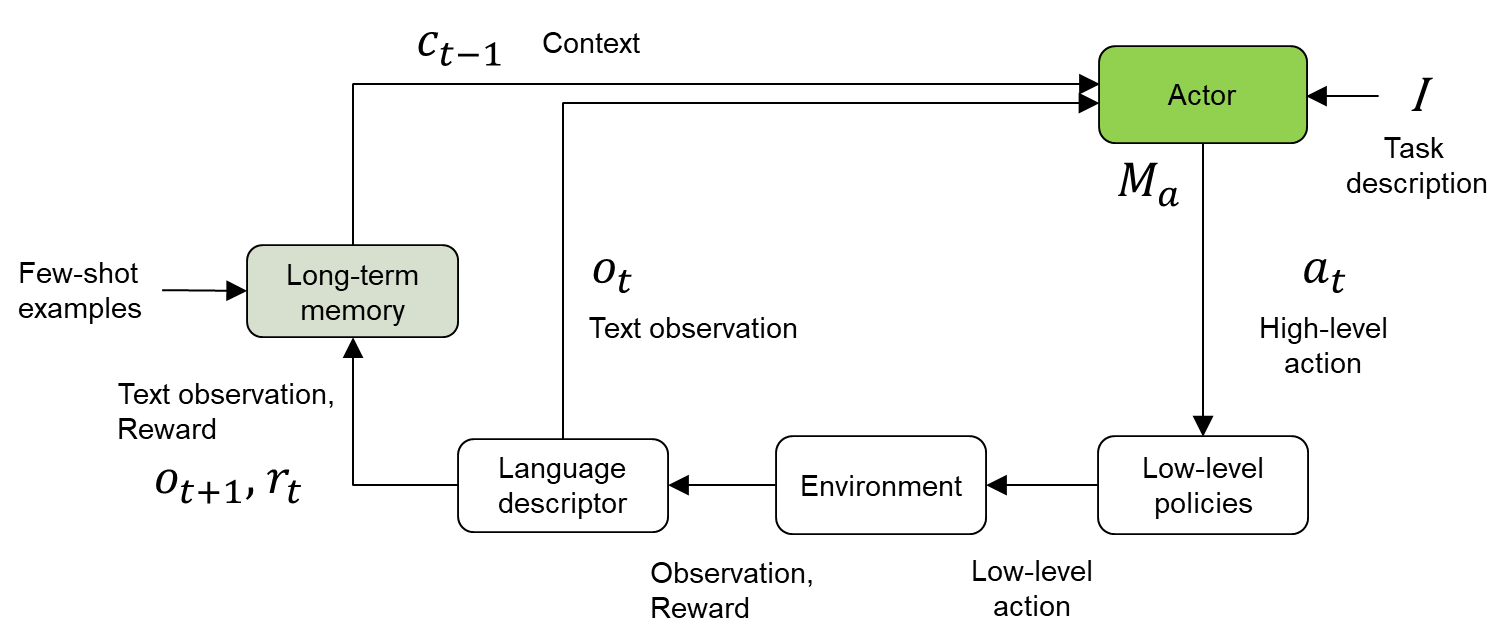

This diagram illustrates a reinforcement learning framework built around a language model. It depicts the interaction between an "Actor" (a language model) and an "Environment," mediated by textual observations and rewards. It also includes a long-term memory and a language descriptor, which converts the Environment's observations into text for the Actor.

### Components

The diagram consists of the following components:

* **Actor:** A green rectangular box labeled "Actor" and "M<sub>a</sub>".

* **Environment:** A grey rectangular box labeled "Environment".

* **Long-term memory:** A light-green rectangular box labeled "Long-term memory".

* **Language descriptor:** A light-blue rectangular box labeled "Language descriptor".

* **Low-level policies:** A dark-grey rectangular box labeled "Low-level policies".

* **Context (c<sub>t-1</sub>):** Text label indicating the context input to the Actor.

* **Task description (I):** Text label indicating the task input to the Actor.

* **Text observation (O<sub>t</sub>):** Text label indicating the observation from the Environment to the Actor.

* **High-level action (a<sub>t</sub>):** Text label indicating the action from the Actor to the Environment.

* **Text observation, Reward (O<sub>t+1</sub>, r<sub>t</sub>):** Text label indicating the observation and reward from the Environment to the Long-term memory.

* **Observation, Reward:** Text label indicating the observation and reward from the Environment to the Language descriptor.

* **Few-shot examples:** Text label indicating the input to the Long-term memory.

* **Low-level action:** Text label indicating the action from the Low-level policies to the Environment.

Arrows indicate the flow of information between these components.

### Detailed Analysis / Content Details

The diagram shows a cyclical process:

1. The **Actor** receives **Context (c<sub>t-1</sub>)** and **Task description (I)** as inputs.

2. The **Actor** generates a **High-level action (a<sub>t</sub>)**.

3. The **High-level action (a<sub>t</sub>)** is sent to the **Environment**.

4. The **Environment** produces an **Observation** and **Reward**.

5. The **Observation** and **Reward** are sent to both the **Language descriptor** and the **Long-term memory**.

6. The **Long-term memory** receives **Few-shot examples** as input.

7. The **Language descriptor** sends a **Text observation (O<sub>t</sub>)** to the **Actor**, which uses it as an input alongside the context and task description.

8. The **Environment** sends a **Text observation, Reward (O<sub>t+1</sub>, r<sub>t</sub>)** to the **Long-term memory**.

9. The **Environment** receives a **Low-level action** from the **Low-level policies**.

The diagram does not contain numerical data or specific values. It is a conceptual representation of a system.
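To make the loop concrete, here is a minimal runnable sketch in Python. Every name in it (`language_descriptor`, `actor`, `low_level_policy`, `LongTermMemory`, `run_episode`) is hypothetical, and the toy task (walking along a number line to a goal position) is an assumption chosen only so the loop runs. The rule-based `actor` stands in for the language model M<sub>a</sub>. The sketch also assumes that the context c<sub>t-1</sub> is built from the long-term memory, which the diagram does not state explicitly.

```python
from dataclasses import dataclass, field

# All names and the toy task below are hypothetical; they illustrate the diagram's
# information flow and are not taken from the original system.
GOAL = 3  # toy task: walk along a number line from position 0 to position 3


def language_descriptor(position: int) -> str:
    """Language descriptor: render the raw environment state as a text observation."""
    return f"You are at position {position}. The goal is at position {GOAL}."


def actor(task: str, context: str, observation: str) -> str:
    """Stand-in for the language model M_a.

    A real Actor would prompt an LM with the task I, context c_{t-1}, and text
    observation O_t. This rule-based stub only parses O_t so the loop runs offline.
    """
    position = int(observation.split("position ")[1].split(".")[0])
    return "move right" if position < GOAL else "stop"


def low_level_policy(high_level_action: str) -> list[int]:
    """Low-level policies: expand a high-level action into primitive moves."""
    return {"move right": [+1], "move left": [-1]}.get(high_level_action, [])


@dataclass
class LongTermMemory:
    few_shot_examples: list[str]
    records: list[tuple[str, float]] = field(default_factory=list)

    def add(self, observation: str, reward: float) -> None:
        """Store one (O_{t+1}, r_t) pair."""
        self.records.append((observation, reward))

    def context(self, k: int = 2) -> str:
        """Assumed construction of c_{t-1}: few-shot examples plus the k latest records."""
        recent = [f"{obs} (reward={rew})" for obs, rew in self.records[-k:]]
        return "\n".join(self.few_shot_examples + recent)


def run_episode(task: str, max_steps: int = 10) -> tuple[int, float, LongTermMemory]:
    memory = LongTermMemory(few_shot_examples=["Example: at 1 with goal 2 -> move right"])
    position, total_reward = 0, 0.0
    observation = language_descriptor(position)  # O_t
    for _ in range(max_steps):
        action = actor(task, memory.context(), observation)  # high-level action a_t
        if action == "stop":
            break
        for move in low_level_policy(action):  # low-level actions sent to the environment
            position += move
        reward = 1.0 if position == GOAL else 0.0  # r_t
        observation = language_descriptor(position)  # O_{t+1} becomes the next O_t
        memory.add(observation, reward)
        total_reward += reward
    return position, total_reward, memory


final_position, total_reward, memory = run_episode("Reach the goal position.")
print(final_position, total_reward, len(memory.records))  # prints: 3 1.0 3
```

Routing each high-level action through `low_level_policy` before it changes the environment state mirrors the hierarchy in the diagram; in this toy version each high-level action expands to a single primitive move.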

### Key Observations

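The diagram emphasizes the role of language on both sides of the loop: the Environment's output reaches the Actor only after the Language descriptor has converted it into a text observation (O<sub>t</sub>), and the Actor, itself a language model, generates the high-level actions (a<sub>t</sub>). The Long-term memory, which is seeded with few-shot examples and updated with (O<sub>t+1</sub>, r<sub>t</sub>) pairs, suggests the design targets long-horizon tasks in which information must persist across many steps. The separation of high-level and low-level actions indicates a hierarchical reinforcement learning approach: the Actor selects high-level actions, while the Low-level policies issue the low-level actions that the Environment executes.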
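The hierarchical split can be sketched as follows. In this assumed gridworld, a high-level action such as `"go to door"` names a landmark, and a hypothetical low-level policy (`low_level_step`) issues one primitive move per call until the landmark is reached. The landmarks, grid, and step limit are all illustrative and do not come from the diagram.

```python
# Hypothetical landmarks that high-level actions can name; coordinates are illustrative.
LANDMARKS: dict[str, tuple[int, int]] = {"door": (3, 0), "table": (1, 2)}


def low_level_step(position: tuple[int, int], target: tuple[int, int]) -> tuple[int, int]:
    """One primitive action: move one cell toward the target, x axis first."""
    x, y = position
    tx, ty = target
    if x != tx:
        return (x + (1 if tx > x else -1), y)
    if y != ty:
        return (x, y + (1 if ty > y else -1))
    return position  # already at the target


def execute_high_level_action(
    position: tuple[int, int], high_level_action: str, max_primitive_steps: int = 20
) -> tuple[tuple[int, int], list[tuple[int, int]]]:
    """Run the low-level policy for one high-level action of the form 'go to <landmark>'.

    Returns the final position and the position reached after each primitive step.
    """
    target = LANDMARKS[high_level_action.removeprefix("go to ")]
    trajectory = []
    for _ in range(max_primitive_steps):
        if position == target:
            break  # the high-level action has terminated
        position = low_level_step(position, target)
        trajectory.append(position)
    return position, trajectory


position, steps = execute_high_level_action((0, 0), "go to door")
print(position, len(steps))  # one high-level action expands into three low-level actions
```

The Actor only has to choose among a few landmark-level actions, while `low_level_step` handles cell-by-cell control; this is the kind of division of labor the diagram's high-level/low-level split suggests.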

### Interpretation

This diagram represents a reinforcement learning architecture built around a language model. The "Actor" acts as the policy, generating high-level actions from the context (c<sub>t-1</sub>), the task description (I), and the current text observation (O<sub>t</sub>). The "Environment," which may be simulated or physical, returns observations and rewards. The "Language descriptor" likely translates the environment's state into a textual representation that the Actor can process. The "Long-term memory" lets the agent store past experiences and draw on them later, which may help it generalize and learn from limited data. The "Low-level policies" likely translate high-level actions into concrete control signals for the Environment.
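One way to picture the Language descriptor and the Long-term memory working together is the sketch below. The hypothetical `describe` function renders a structured state as text, and the hypothetical `LongTermMemory` class stores few-shot examples and (O<sub>t+1</sub>, r<sub>t</sub>) records, retrieving the most relevant entries by word-overlap (Jaccard) similarity to form a context string. The retrieval rule is a simple stand-in chosen for illustration; the diagram does not specify how memory is queried, and an embedding-based retriever would be a common alternative.

```python
import re
from dataclasses import dataclass, field


def describe(state: dict[str, str]) -> str:
    """Hypothetical Language descriptor: render a structured state as one sentence."""
    return "; ".join(f"{name} is {value}" for name, value in sorted(state.items())) + "."


def _words(text: str) -> set[str]:
    """Lowercase alphabetic tokens used for the overlap score."""
    return set(re.findall(r"[a-z]+", text.lower()))


@dataclass
class LongTermMemory:
    entries: list[str] = field(default_factory=list)

    def add_few_shot(self, examples: list[str]) -> None:
        """Seed the memory with few-shot examples."""
        self.entries.extend(examples)

    def store(self, observation: str, reward: float) -> None:
        """Record an (O_{t+1}, r_t) pair as a single text entry."""
        self.entries.append(f"{observation} reward={reward}")

    def retrieve(self, query: str, k: int = 2) -> list[str]:
        """Return the k entries with the highest Jaccard word overlap with the query."""
        q = _words(query)

        def score(entry: str) -> float:
            e = _words(entry)
            union = q | e
            return len(q & e) / len(union) if union else 0.0

        # sorted() is stable even with reverse=True, so ties keep insertion order.
        return sorted(self.entries, key=score, reverse=True)[:k]


def build_context(memory: LongTermMemory, observation: str, k: int = 2) -> str:
    """Assemble a context string (playing the role of c_{t-1}) from retrieved entries."""
    return "\n".join(memory.retrieve(observation, k))


memory = LongTermMemory()
memory.add_few_shot(["Example: the light is off. Action: press switch."])
memory.store(describe({"door": "locked", "key": "missing"}), 0.0)
memory.store(describe({"light": "off"}), 1.0)
print(build_context(memory, describe({"light": "on"})))
```

Keeping both the few-shot examples and the stored experiences as plain text means the retrieved context can be placed directly into the Actor's prompt.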

The diagram suggests a system designed for tasks that require reasoning, planning, and adaptation. Using language as the interface between the agent and the environment is a key feature: it may let the agent draw on prior knowledge encoded in the language model and follow natural-language task descriptions. The hierarchical structure may also make exploration more efficient, since the Actor reasons over a smaller set of high-level actions while the Low-level policies handle fine-grained control.