## Flowchart: Hierarchical Reinforcement Learning System Architecture

### Overview

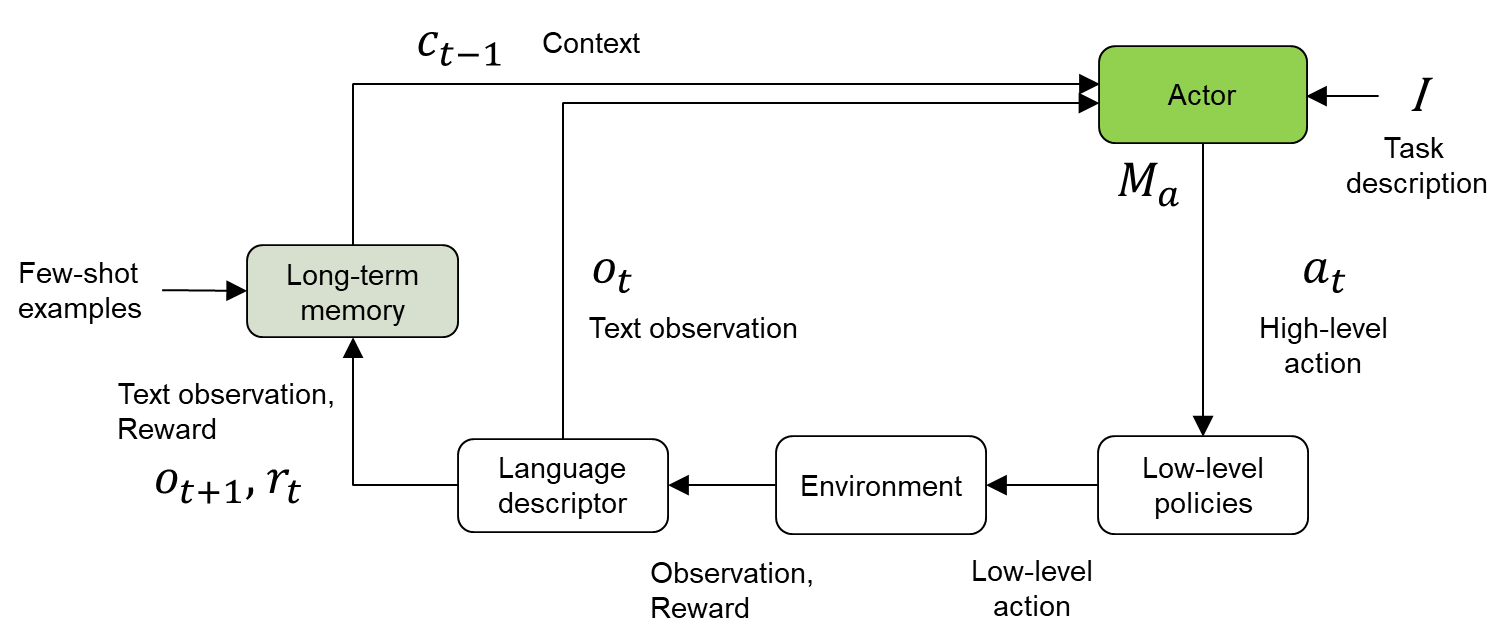

The diagram illustrates a hierarchical reinforcement learning system where context, memory, and environmental interactions drive decision-making. Key components include long-term memory, language processing, environmental feedback, and policy execution layers. The system emphasizes integration of high-level task descriptions with low-level action execution through an "Actor" component.

### Components/Axes

1. **Input Streams**:

- `C_{t-1}`: Context (previous state)

- Few-shot examples: Training data for memory initialization

2. **Memory System**:

- Long-term memory: Stores contextual knowledge and past experiences

3. **Processing Modules**:

- Language descriptor: Converts observations into structured text

- Environment: Simulates real-world interactions

- Low-level policies: Translates high-level actions into executable steps

4. **Control Flow**:

- Actor: Central decision-maker integrating task descriptions and memory

- Feedback loops: Between environment observations and memory updates

### Detailed Analysis

- **Context Flow**:

- `C_{t-1}` (context) and few-shot examples → Long-term memory

- Long-term memory + Text observation (`O_t`) → Language descriptor

- **Environment Interaction**:

- Language descriptor output → Environment

- Environment provides: Observation (`O_{t+1}`), Reward (`R_t`)

- **Policy Execution**:

- Low-level policies → Actor (high-level action `A_t`)

- Actor receives: Task description (`I`), Memory (`M_a`)

- **Temporal Dynamics**:

- Time steps denoted by subscripts (`t`, `t+1`)

- Memory (`M_a`) persists across iterations

### Key Observations

1. **Hierarchical Structure**:

- Clear separation between high-level task description (`I`) and low-level policy execution

2. **Memory Integration**:

- Long-term memory acts as persistent knowledge base influencing all decisions

3. **Feedback Loops**:

- Environment observations (`O_{t+1}`) and rewards (`R_t`) continuously update the system

4. **Actor-Critic Architecture**:

- Actor handles high-level decisions while low-level policies manage execution details

### Interpretation

This architecture demonstrates a sophisticated RL system designed for complex tasks requiring:

1. **Contextual Awareness**: Through persistent memory (`M_a`) and historical context (`C_{t-1}`)

2. **Language Grounding**: Via the language descriptor module converting raw observations into structured text

3. **Multi-timescale Learning**: Combining immediate rewards (`R_t`) with long-term memory retention

4. **Modular Design**: Separation of concern between task description, policy execution, and environmental interaction

The system appears optimized for tasks requiring both strategic planning (high-level actions) and precise execution (low-level policies), with continuous learning through environmental feedback. The bidirectional flow between environment and memory suggests adaptive capabilities that could handle non-stationary environments or evolving task requirements.