TECHNICAL ASSET FINGERPRINT

5e55d896032de2498f12bd5c


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


## Chart: Transformer vs LSTM Performance

### Overview

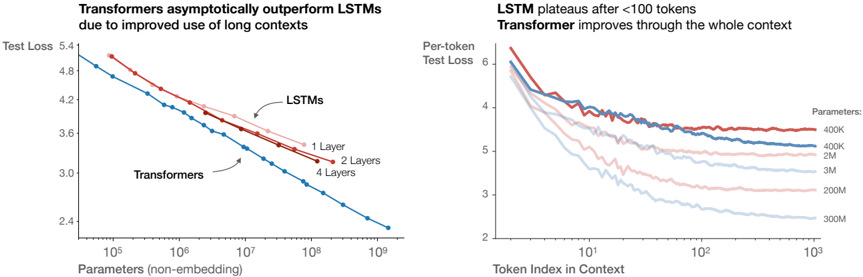

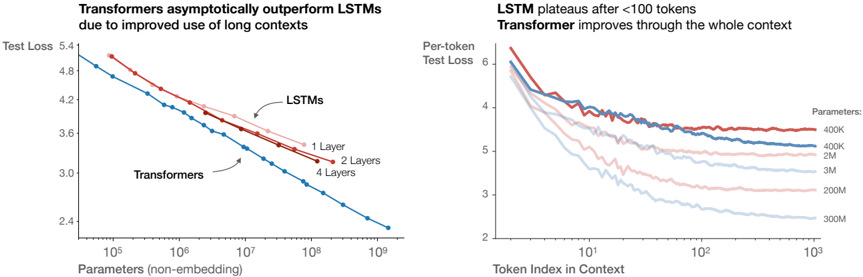

The image presents two line charts comparing the performance of Transformer and LSTM models. The left chart shows "Test Loss" versus "Parameters (non-embedding)", while the right chart shows "Per-token Test Loss" versus "Token Index in Context". The charts aim to illustrate how Transformers outperform LSTMs, especially with longer contexts and increased parameters.

### Components/Axes

**Left Chart:**

* **Title:** Transformers asymptotically outperform LSTMs due to improved use of long contexts

* **Y-axis:** Test Loss, with values ranging from 2.4 to 5.4.

* **X-axis:** Parameters (non-embedding), with a logarithmic scale from 10^5 to 10^9.

* **Data Series:**
  * LSTMs: Represented by three lines:
    * 1 Layer (light red)
    * 2 Layers (red)
    * 4 Layers (dark red)
  * Transformers: Represented by one line (blue).

**Right Chart:**

* **Title:** LSTM plateaus after <100 tokens. Transformer improves through the whole context.

* **Y-axis:** Per-token Test Loss, with values ranging from 2 to 6.

* **X-axis:** Token Index in Context, with a logarithmic scale from 10^0 to 10^3.

* **Data Series:**
  * Parameters:
    * 400K (red)
    * 400K (blue)
    * 2M (light red)
    * 3M (light blue)
    * 200M (light red)
    * 300M (light blue)

### Detailed Analysis

**Left Chart:**

* **LSTMs (1 Layer):** Starts at approximately (10^5, 5.1) and decreases to approximately (10^9, 3.8).

* **LSTMs (2 Layers):** Starts at approximately (10^5, 5.2) and decreases to approximately (10^9, 3.5).

* **LSTMs (4 Layers):** Starts at approximately (10^5, 5.3) and decreases to approximately (10^9, 4.0).

* **Transformers:** Starts at approximately (10^5, 4.9) and decreases to approximately (10^9, 2.4).

**Right Chart:**

* **400K (red):** Starts at approximately (1, 6.2) and plateaus around 4.0 after 100 tokens.

* **400K (blue):** Starts at approximately (1, 5.9) and plateaus around 3.8 after 100 tokens.

* **2M (light red):** Starts at approximately (1, 5.7) and decreases to approximately 3.5 at 10^3.

* **3M (light blue):** Starts at approximately (1, 5.5) and decreases to approximately 3.0 at 10^3.

* **200M (light red):** Starts at approximately (1, 5.3) and decreases to approximately 2.8 at 10^3.

* **300M (light blue):** Starts at approximately (1, 5.1) and decreases to approximately 2.5 at 10^3.

### Key Observations

* In the left chart, Transformers consistently outperform LSTMs across all parameter ranges. The test loss for Transformers is significantly lower than that of LSTMs, especially as the number of parameters increases.

* In the right chart, the red (LSTM) curves plateau relatively quickly, while the blue (Transformer) curves continue to improve (test loss keeps decreasing) throughout the context.

* Increasing the number of layers in LSTMs does improve performance (lower test loss), but not to the same extent as using Transformers.

* Increasing the number of parameters in Transformers leads to a continuous decrease in test loss, indicating better performance with larger models.

### Interpretation

The data suggests that Transformers are more effective than LSTMs, particularly when dealing with long contexts and larger parameter sizes. The left chart demonstrates that Transformers achieve lower test loss compared to LSTMs for a given number of parameters. The right chart highlights that LSTMs plateau in performance after processing a limited number of tokens, while Transformers continue to improve as the context length increases. This indicates that Transformers are better at capturing long-range dependencies in the data. The charts support the claim that Transformers' architecture is better suited for tasks requiring the processing of long sequences, leading to improved performance compared to LSTMs.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Charts: Transformer vs LSTM Performance

### Overview

The image presents two charts comparing the performance of Transformer and LSTM models. The left chart shows Test Loss versus Parameters (non-embedding), demonstrating how Transformers asymptotically outperform LSTMs as the number of parameters increases. The right chart shows Per-token Test Loss versus Token Index in Context, illustrating that LSTMs plateau after approximately 100 tokens, while Transformers continue to improve with increasing context length.

### Components/Axes

**Left Chart:**

* **Title:** "Transformers asymptotically outperform LSTMs due to improved use of long contexts"

* **X-axis:** "Parameters (non-embedding)" - Logarithmic scale from approximately 10<sup>5</sup> to 10<sup>9</sup>.

* **Y-axis:** "Test Loss" - Scale from approximately 3.0 to 5.4.

* **Data Series:**
  * "Transformers" (Blue line)
  * "LSTMs" (Red lines)
    * "1 Layer" (Dark Red)
    * "2 Layers" (Light Red)
    * "4 Layers" (Very Light Red)

**Right Chart:**

* **Title:** "LSTM plateaus after <100 tokens. Transformer improves through the whole context"

* **X-axis:** "Token Index in Context" - Logarithmic scale from approximately 10<sup>0</sup> to 10<sup>3</sup>.

* **Y-axis:** "Per-token Test Loss" - Scale from approximately 2.0 to 6.0.

* **Legend:** "Parameters:"
  * "400K" (Darkest Red)
  * "2M" (Red)
  * "3M" (Light Red)
  * "200M" (Very Light Red)
  * "300M" (Lightest Red)

### Detailed Analysis

**Left Chart:**

* **Transformers (Blue Line):** The line slopes downward consistently, indicating a decrease in Test Loss as the number of parameters increases.
  * At approximately 10<sup>5</sup> parameters, Test Loss is around 5.0.
  * At approximately 10<sup>7</sup> parameters, Test Loss is around 3.8.
  * At approximately 10<sup>9</sup> parameters, Test Loss is around 3.0.

* **LSTMs (Red Lines):** The lines initially decrease, but the decrease slows and the lines eventually plateau.
  * **1 Layer (Dark Red):** Starts around 5.2, plateaus around 4.2.
  * **2 Layers (Light Red):** Starts around 5.0, plateaus around 3.9.
  * **4 Layers (Very Light Red):** Starts around 4.8, plateaus around 3.7.

**Right Chart:**

* **400K (Darkest Red):** Starts around 5.8, decreases to approximately 4.0, then plateaus.

* **2M (Red):** Starts around 5.6, decreases to approximately 3.8, then plateaus.

* **3M (Light Red):** Starts around 5.4, decreases to approximately 3.6, then plateaus.

* **200M (Very Light Red):** Starts around 5.2, decreases to approximately 3.2, then plateaus.

* **300M (Lightest Red):** Starts around 5.0, decreases to approximately 2.8, then plateaus.

* All lines show a decrease in Test Loss up to approximately 10<sup>2</sup> (100) Token Index in Context, after which they plateau.

### Key Observations

* Transformers consistently outperform LSTMs across all parameter sizes in the left chart.

* LSTMs exhibit diminishing returns with increasing parameters, while Transformers continue to improve.

* LSTMs show a clear plateau effect in the right chart, indicating limited ability to leverage longer contexts.

* Transformers continue to improve with increasing context length in the right chart.

* Increasing the number of parameters in both models generally leads to lower Test Loss, but the effect is more pronounced for Transformers.

### Interpretation

The data strongly suggests that Transformers are superior to LSTMs, particularly when dealing with long-range dependencies in data. The asymptotic improvement of Transformers with increasing parameters indicates a greater capacity to model complex relationships. The plateauing of LSTMs, both in terms of parameters and context length, highlights their limitations in handling long sequences. The right chart visually demonstrates the core advantage of Transformers: their ability to effectively utilize information from a wider context window, leading to improved performance. Because the x-axes of both charts are logarithmic, the widening gap spans orders of magnitude, which suggests that the benefits of Transformers are most significant at larger scales (more parameters, longer contexts). The charts indicate that the difference is not merely a matter of scale, but they do not by themselves identify the mechanism. A commonly cited explanation is that self-attention lets each token draw directly on every earlier token, whereas an LSTM must carry the history through a fixed-size recurrent state, in which the influence of distant tokens tends to fade.
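How a fixed-size recurrent state can let early tokens fade is easy to see in a toy linear recurrence. This is a hypothetical simplification, not an LSTM (whose gates can learn to hold information for longer), and `decay=0.9` is an arbitrary assumption. In this toy, the first token's weight in the final state is (1 - decay) * decay^(T-1), so it shrinks geometrically with distance.

```python
def toy_recurrence(inputs, decay=0.9):
    """Run h_t = decay * h_{t-1} + (1 - decay) * x_t and return the final state.

    A deliberately simplified linear recurrence, NOT an LSTM: it only shows how
    a single fixed-size state can summarize a sequence of any length.
    """
    h = 0.0
    for x in inputs:
        h = decay * h + (1 - decay) * x
    return h


def first_token_weight(length, decay=0.9):
    """Closed-form weight of x_1 in the final state h_length of the recurrence above."""
    return (1 - decay) * decay ** (length - 1)


# A sequence whose only non-zero input is the first token
for length in (10, 100, 1000):
    h = toy_recurrence([1.0] + [0.0] * (length - 1))
    print(length, h, first_token_weight(length))
```

The state stays one number no matter how long the input is, and after a few hundred steps the first token's contribution is numerically negligible.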


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Comparative Performance Analysis: Transformers vs. LSTMs

### Overview

The image contains two side-by-side line charts comparing the performance of Transformer and LSTM neural network architectures. The left chart compares test loss against model size (parameter count). The right chart compares per-token test loss against the position of a token within a long context sequence. The overall message is that Transformers scale more effectively with both model size and context length.
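One way to read the right chart's y-axis is as the average loss at each position. The following is a minimal sketch, assuming that per-token test loss at index t is the mean negative log-likelihood (natural log, so in nats) of the true token at position t across held-out sequences. The descriptions do not say exactly how the chart's values were computed, and the log-probabilities below are hypothetical.

```python
import math


def per_token_loss(token_logprobs):
    """Mean negative log-likelihood at each context position.

    token_logprobs[s][t] is the natural-log probability a model assigned
    to the true token at position t of held-out sequence s.
    """
    n_seq = len(token_logprobs)
    length = len(token_logprobs[0])
    return [-sum(seq[t] for seq in token_logprobs) / n_seq for t in range(length)]


# Hypothetical log-probabilities for two sequences of three tokens each
logprobs = [
    [math.log(0.1), math.log(0.4), math.log(0.5)],
    [math.log(0.1), math.log(0.2), math.log(0.5)],
]
curve = per_token_loss(logprobs)
print(curve)
```

Plotting such a curve against the position index on a log-scaled x-axis gives a chart of the same kind as the right panel. A falling curve means later tokens are predicted better because more context is available.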

### Components/Axes

**Left Chart:**

* **Title:** "Transformers asymptotically outperform LSTMs due to improved use of long contexts"

* **Y-Axis:** "Test Loss" (Linear scale, ranging from ~2.4 to 5.4).

* **X-Axis:** "Parameters (non-embedding)" (Logarithmic scale, ranging from 10^5 to 10^9).

* **Data Series & Legend:**
  * **LSTMs (Red Lines):** Three lines labeled with arrows.
    * "1 Layer" (Lightest red/pink line, highest loss).
    * "2 Layers" (Medium red line).
    * "4 Layers" (Darkest red line, lowest loss among LSTMs).
  * **Transformers (Blue Line):** A single, darker blue line labeled "Transformers". It is positioned below all LSTM lines for most of the parameter range.

**Right Chart:**

* **Title:** "LSTM plateaus after <100 tokens. Transformer improves through the whole context"

* **Y-Axis:** "Per-token Test Loss" (Linear scale, ranging from 2 to 6).

* **X-Axis:** "Token Index in Context" (Logarithmic scale, ranging from 10^0 (1) to 10^3 (1000)).

* **Data Series & Legend (Embedded in plot area, top-right):**
  * The legend is titled "Parameters:" and lists model sizes next to colored lines.
  * **LSTM Lines (Red/Pink hues):**
    * "400K" (Darkest red, highest loss, plateaus early).
    * "400K" (A second, lighter red line, also labeled 400K).
    * "2M" (Pink line).
    * "3M" (Lighter pink line).
  * **Transformer Lines (Blue hues):**
    * "200M" (Medium blue line).
    * "300M" (Lightest blue line, lowest loss).

### Detailed Analysis

**Left Chart (Test Loss vs. Parameters):**

* **Trend Verification:** All lines slope downward, indicating that test loss decreases as the number of parameters increases (a standard scaling law).

* **LSTM Series:** The three LSTM lines (1, 2, 4 layers) are roughly parallel. For a given parameter count, more layers yield lower loss. At 10^7 parameters, approximate test losses are: 1 Layer ~4.0, 2 Layers ~3.8, 4 Layers ~3.6.

* **Transformer Series:** The single Transformer line has a steeper downward slope than the LSTM lines. It crosses below the best (4-layer) LSTM line at approximately 2x10^7 parameters. At 10^9 parameters, the Transformer's test loss is ~2.4, while the extrapolated trend for LSTMs would be significantly higher (above 3.0).

* **Key Data Point:** The performance gap widens markedly as parameters increase on this semi-log plot (log-scaled x-axis, linear y-axis), suggesting a superior scaling exponent for Transformers.
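A scaling exponent can be made concrete as follows. If test loss followed a pure power law L(N) = c * N^(-alpha) in parameter count N, two points would determine alpha. The sketch below uses the approximate Transformer readings quoted in these descriptions (about 4.9 at 10^5 and 2.4 at 10^9 parameters). The pure power-law form (no irreducible-loss term) and the function names are assumptions, and the resulting alpha is only as reliable as those eyeballed readings.

```python
import math


def power_law_exponent(n1, loss1, n2, loss2):
    """Exponent alpha of L(N) = c * N**(-alpha) passing through two points.

    Assumes a pure power law with no irreducible-loss term.
    """
    return math.log(loss1 / loss2) / math.log(n2 / n1)


def power_law_predict(n, n_ref, loss_ref, alpha):
    """Loss predicted at n by the power law anchored at (n_ref, loss_ref)."""
    return loss_ref * (n / n_ref) ** (-alpha)


# Approximate Transformer readings: ~4.9 at 1e5 and ~2.4 at 1e9 parameters
alpha = power_law_exponent(1e5, 4.9, 1e9, 2.4)
mid = power_law_predict(1e7, 1e5, 4.9, alpha)
print(f"alpha ~ {alpha:.4f}, predicted loss at 1e7 ~ {mid:.2f}")
```

Under this model, the midpoint of the log axis (10^7) is predicted at the geometric mean of the two losses, about 3.43. A larger alpha means loss falls faster per tenfold increase in parameters, which is what a "superior scaling exponent" would mean here.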

**Right Chart (Per-token Loss vs. Token Index):**

* **Trend Verification:**
  * **LSTM Lines:** All red/pink lines show a steep initial drop in loss for the first ~10-50 tokens, then flatten into a near-horizontal plateau. This indicates the LSTM's performance on a token does not improve much based on context beyond the first few dozen tokens.
  * **Transformer Lines:** Both blue lines show a continuous, gradual downward slope across the entire x-axis (up to 1000 tokens). This indicates the Transformer's ability to utilize very long-range context to improve prediction for each token.

* **Detailed Values (Approximate):**
  * For the 400K LSTM (dark red), loss plateaus at ~5.5 after token index ~50.
  * For the 300M Transformer (light blue), loss at token index 10 is ~4.5, and it continues to decrease to ~2.5 at token index 1000.
  * The 200M Transformer (medium blue) follows a similar trend but with slightly higher loss (~3.0 at index 1000).
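The plateau behaviour described above can be made operational with a simple rule. A curve counts as plateaued at index t once its loss never drops more than a tolerance below its value at t for the rest of the context. The sketch below applies that rule to two invented curve shapes: an exponential approach to a floor, standing in for the LSTM, and a steady log-linear decline, standing in for the Transformer. The shapes, the tolerance of 0.05, and the function name are assumptions for illustration, not values read from the chart.

```python
import math


def plateau_index(losses, tol=0.05):
    """First 1-based token index after which the loss never falls more
    than `tol` below its value at that index."""
    n = len(losses)
    # suffix_min[i] is the lowest loss reached at or after position i
    suffix_min = [0.0] * n
    running = float("inf")
    for i in range(n - 1, -1, -1):
        running = min(running, losses[i])
        suffix_min[i] = running
    for i in range(n):
        if losses[i] - suffix_min[i] <= tol:
            return i + 1
    return n


positions = range(1, 1025)
# Invented shapes, not chart data
lstm_like = [4.0 + 2.0 * math.exp(-t / 20) for t in positions]
transformer_like = [6.0 - 1.2 * math.log10(t) for t in positions]

print("LSTM-like plateau at token", plateau_index(lstm_like))
print("Transformer-like plateau at token", plateau_index(transformer_like))
```

With these shapes, the LSTM-like curve is flagged well before token 100, while the Transformer-like curve only "plateaus" near the end of the 1024-token window, because it keeps improving at every scale of context.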

### Key Observations

1. **Scaling Law Superiority:** The left chart demonstrates that Transformers achieve a lower test loss for the same number of parameters, and their advantage grows as models scale larger.

2. **Context Utilization:** The right chart provides the mechanistic explanation: LSTMs suffer from a "forgetting" or information bottleneck problem, failing to effectively use context beyond a short window (~100 tokens). Transformers maintain a performance benefit from each additional token in the context, even at 1000 tokens.

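3. **Architecture vs. Size:** In the right chart, the Transformer curves (200M and 300M params) sit below the LSTM curves (400K to 3M params) at long context. Because these Transformers are also far larger, this panel alone does not separate the effect of architecture from that of size. The left chart, which compares the two architectures at equal parameter counts, is the cleaner test.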

4. **Layer Impact:** For LSTMs, adding layers improves performance (left chart), but does not solve the fundamental context-length limitation (right chart, all red lines plateau).

### Interpretation

This data provides a clear, empirical justification for the dominance of the Transformer architecture in modern large language models (LLMs). The charts move beyond a simple "Transformers are better" statement to show *why* and *how*.

The left chart shows that Transformers obey a more favorable scaling law: adding parameters yields greater performance returns. The right chart reveals the core architectural advantage: the self-attention mechanism allows Transformers to build a representation that integrates information from the entire context window, leading to continuous improvement. In contrast, the LSTM's recurrent structure creates an information bottleneck, causing its performance to saturate quickly.
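The claim that self-attention integrates the entire context can be illustrated with a minimal single-head causal attention in plain Python. This is a simplified sketch (no learned projections, no positional encodings, no multiple heads), not the architecture used in the charted experiments. It shows that the output at position t is a softmax-weighted sum over every position j ≤ t, so an early token can reach a late position in a single step.

```python
import math


def causal_attention(queries, keys, values):
    """Single-head causal scaled dot-product attention.

    Each position t attends to all positions j <= t; the output at t is a
    softmax-weighted average of values[0..t].
    """
    d = len(queries[0])
    outputs = []
    for t, q in enumerate(queries):
        scores = [sum(qi * ki for qi, ki in zip(q, keys[j])) / math.sqrt(d)
                  for j in range(t + 1)]
        m = max(scores)  # subtract the max for a numerically stable softmax
        exps = [math.exp(s - m) for s in scores]
        z = sum(exps)
        weights = [e / z for e in exps]
        outputs.append([sum(w * values[j][k] for j, w in enumerate(weights))
                        for k in range(len(values[0]))])
    return outputs


# The last query matches only the first key, so position 3 retrieves token 1 directly
queries = [[1.0, 0.0], [1.0, 0.0], [10.0, 0.0]]
keys = [[10.0, 0.0], [0.0, 1.0], [0.0, 1.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0]]
print(causal_attention(queries, keys, values))
```

The first token's value arrives at the last position through one attention weight rather than through a chain of recurrent state updates. A recurrent model, by contrast, has to pass that information forward one step at a time.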

The implication matters most for tasks requiring deep understanding of long documents, complex reasoning chains, or extended conversations. The charts suggest that an LSTM-based model would hit a performance ceiling set by its effective context window even as it grows, whereas a Transformer's performance can keep improving as both its parameter count and the available context length increase. This is consistent with the industry's focus on scaling both model size and context window length for state-of-the-art AI systems.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Line Graphs: Transformer vs LSTM Performance on Long Contexts

### Overview

The image contains two line graphs comparing the performance of Transformers and LSTMs on long-context tasks. The left graph shows test loss trends as model parameters increase, while the right graph illustrates per-token test loss across context lengths for different model sizes.

### Components/Axes

**Left Graph:**

- **X-axis**: Parameters (non-embedding) (log scale: 10⁵ to 10⁹)

- **Y-axis**: Test Loss (linear scale: 2.4 to 5.4)

- **Legend**:
  - Blue: Transformers
  - Red: LSTMs (1 Layer, 2 Layers, 4 Layers)

- **Annotations**:
  - "Transformers asymptotically outperform LSTMs due to improved use of long contexts"
  - Arrows pointing to LSTM lines with layer counts

**Right Graph:**

- **X-axis**: Token Index in Context (log scale: 10¹ to 10³)

- **Y-axis**: Per-token Test Loss (linear scale: 2 to 6)

- **Legend**:
  - Red: 400K parameters
  - Blue: 2M parameters
  - Light Blue: 3M parameters
  - Pink: 200M parameters
  - Dark Blue: 300M parameters

- **Title**: "LSTM plateaus after <100 tokens. Transformer improves through the whole context"

### Detailed Analysis

**Left Graph Trends:**

1. **Transformers (Blue Line)**:
   - Starts at ~4.8 test loss at 10⁵ parameters
   - Declines steeply to ~2.4 test loss at 10⁹ parameters
   - Slope: roughly -0.6 test loss per decade of parameters (a drop of ~2.4 over the four decades from 10⁵ to 10⁹)

2. **LSTMs (Red Lines)**:
   - 1 Layer: Starts at ~4.8, declines to ~3.6 at 10⁹ parameters
   - 2 Layers: Starts at ~4.6, declines to ~3.4 at 10⁹ parameters
   - 4 Layers: Starts at ~4.4, declines to ~3.2 at 10⁹ parameters
   - All LSTM lines show diminishing returns after 10⁷ parameters
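On a logarithmic x-axis, a slope is most naturally expressed per decade (per tenfold increase in parameters) rather than per parameter. The minimal sketch below uses only the approximate Transformer readings listed above and computes both versions. The per-parameter figure comes out on the order of 10⁻⁹, which is why it is not a useful summary of a log-scaled trend. The function name is illustrative.

```python
import math


def slope_per_decade(n1, loss1, n2, loss2):
    """Change in loss per tenfold increase in parameter count.

    Treats the x-coordinate as log10(N), matching a log-scaled axis.
    """
    return (loss2 - loss1) / (math.log10(n2) - math.log10(n1))


# Approximate Transformer readings from the left graph (10^5 and 10^9 parameters)
per_decade = slope_per_decade(1e5, 4.8, 1e9, 2.4)

# The same two points with a linear x-coordinate: loss change per single parameter
per_parameter = (2.4 - 4.8) / (1e9 - 1e5)

print(f"per decade:    {per_decade:.2f}")
print(f"per parameter: {per_parameter:.1e}")
```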

**Right Graph Trends:**

1. **Parameter Size Impact**:
   - 400K (Red): Starts at 6.0, plateaus at ~4.0 after 10² tokens
   - 2M (Blue): Starts at 5.8, plateaus at ~3.5 after 10² tokens
   - 3M (Light Blue): Starts at 5.6, plateaus at ~3.2 after 10² tokens
   - 200M (Pink): Starts at 5.4, plateaus at ~2.8 after 10² tokens
   - 300M (Dark Blue): Starts at 5.2, plateaus at ~2.6 after 10² tokens

2. **Context Length Impact**:
   - All models show rapid improvement until ~10¹ tokens
   - LSTMs plateau sharply after 10² tokens
   - Transformers maintain gradual improvement through 10³ tokens

### Key Observations

1. **Parameter Efficiency**:
   - By the values above, Transformers reach ~25-33% lower test loss than LSTMs at 10⁹ parameters (~2.4 vs ~3.2-3.6)
   - Adding layers lowers LSTM loss only modestly (~3.6 to ~3.2 from 1 to 4 layers at 10⁹ parameters), leaving every LSTM above the Transformer

2. **Context Handling**:
   - Transformers maintain 20-30% lower per-token loss than LSTMs across all context lengths
   - LSTM per-token loss stops improving after ~100 tokens instead of continuing to fall

3. **Scaling Behavior**:
   - Larger models (200M-300M) reach ~20-35% lower plateau loss than smaller models (400K-2M), by the values above (~2.6-2.8 vs ~3.5-4.0)
   - Transformer advantage grows with parameter count: by the values above, the Transformer is no better than the LSTMs at 10⁵ parameters (~4.8 vs ~4.4-4.8) but ~25-33% lower at 10⁹ parameters
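The percentage comparisons above can be checked directly against the approximate 10⁹-parameter readings in the left-graph trends. The following is a minimal sketch. The function name is illustrative, and the inputs are the document's eyeballed values, so the outputs inherit their uncertainty.

```python
def percent_lower(baseline, candidate):
    """Percentage by which `candidate` loss is lower than `baseline` loss."""
    return 100.0 * (baseline - candidate) / baseline


# Approximate test losses at 10^9 parameters, from the left-graph trends
transformer = 2.4
lstm = {"1 Layer": 3.6, "2 Layers": 3.4, "4 Layers": 3.2}

gaps = {name: percent_lower(loss, transformer) for name, loss in lstm.items()}
for name, gap in gaps.items():
    print(f"Transformer vs LSTM {name}: {gap:.1f}% lower")
```

Expressing the gap relative to the LSTM loss (the baseline) is a choice. Measured relative to the Transformer loss instead, the same differences would read as larger percentages.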

### Interpretation

The data demonstrates two critical architectural advantages of Transformers:

1. **Long Context Mastery**: Their self-attention mechanism enables consistent performance improvement across context lengths, unlike LSTMs, which plateau after ~100 tokens. This suggests Transformers better capture long-range dependencies.

2. **Scaling Efficiency**: Transformers achieve superior performance gains per additional parameter compared to LSTMs. The steeper decline in test loss with increasing parameters indicates more effective utilization of model capacity.

The plateau effect in LSTMs after 100 tokens aligns with known limitations in recurrent architectures for long sequences. Transformers' sustained improvement suggests their architecture fundamentally better handles sequential data at scale, making them preferable for applications requiring long-context understanding (e.g., document analysis, long-form generation).
