
EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


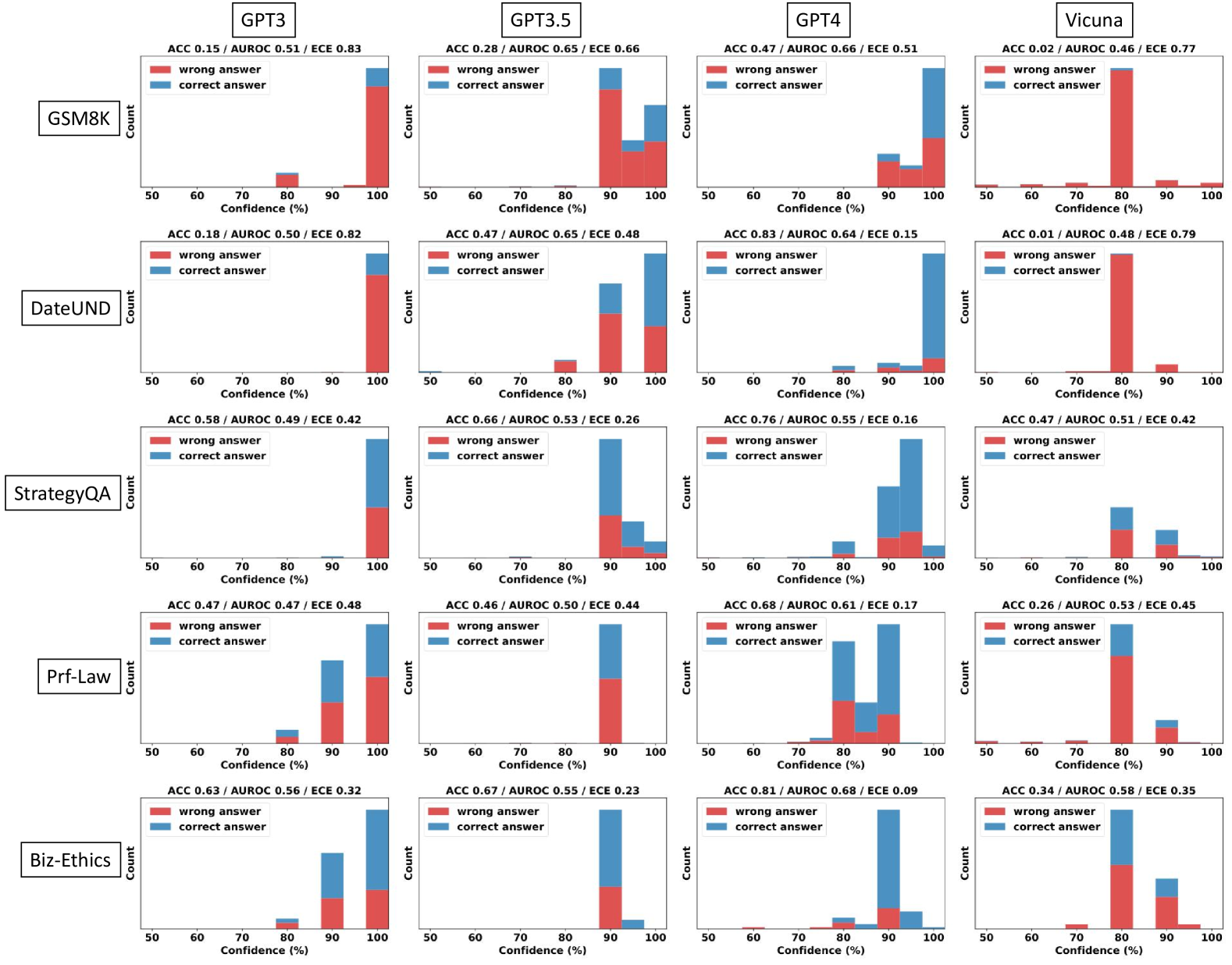

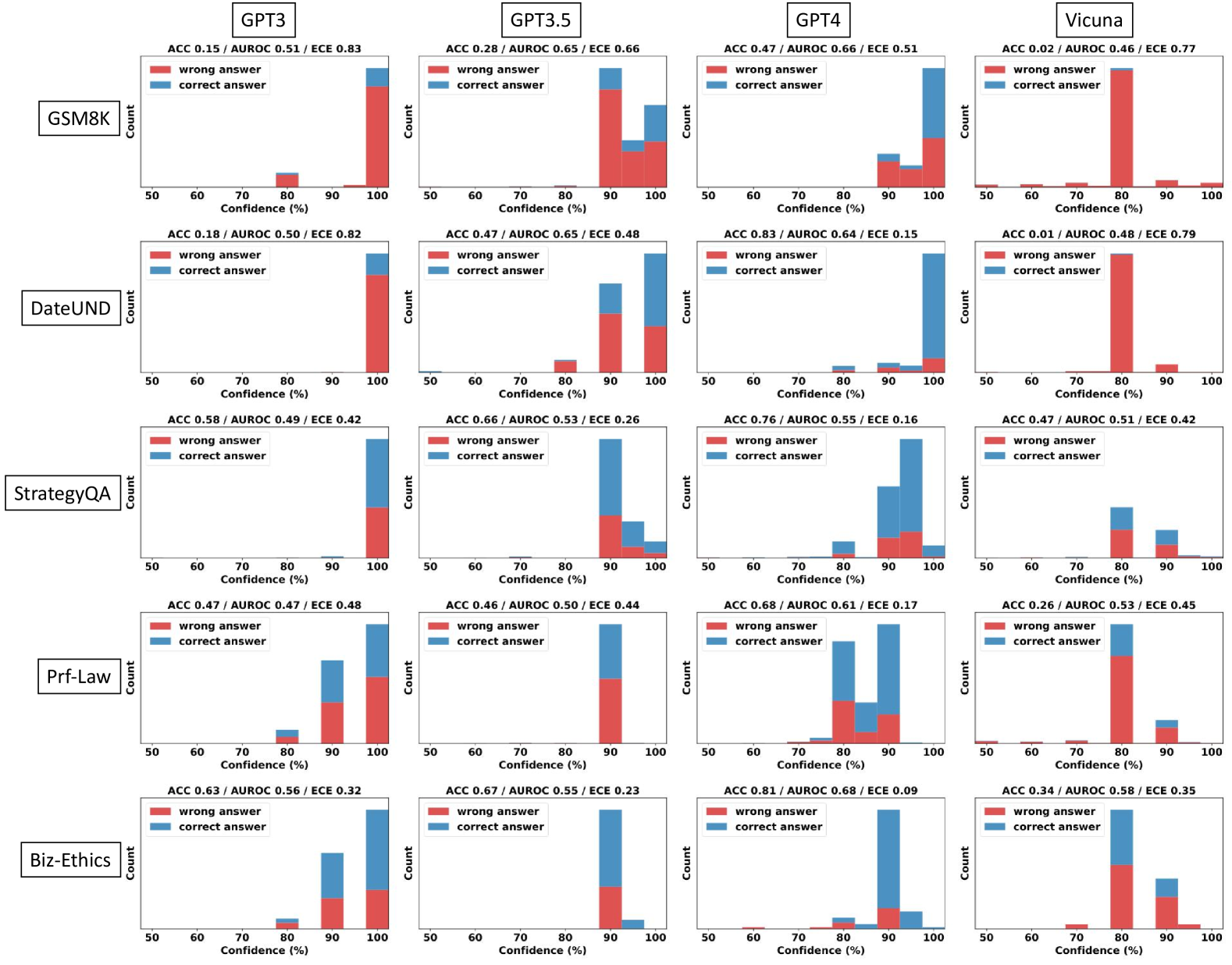

## Histogram: Model Confidence vs. Accuracy on Various Tasks

### Overview

The image presents a grid of histograms comparing the confidence levels of four language models (GPT3, GPT3.5, GPT4, and Vicuna) on five tasks (GSM8K, DateUND, StrategyQA, Prf-Law, and Biz-Ethics). Each histogram shows the distribution of confidence scores for correct and incorrect answers separately, so calibration can be compared across tasks and models.

### Components/Axes

* **Title:** Model Confidence vs. Accuracy on Various Tasks

* **X-axis:** Confidence (%), ranging from 50% to 100% in increments of 10%.

* **Y-axis:** Count (frequency of responses within each confidence bin).

* **Histograms:** Each subplot represents a combination of a language model and a task.

* **Legend:** Located within each subplot:

* Red: "wrong answer"

* Blue: "correct answer"

* **Metrics:** Each subplot includes the following metrics:

* ACC: Accuracy

* AUROC: Area Under the Receiver Operating Characteristic Curve

* ECE: Expected Calibration Error

* **Tasks (row labels):** GSM8K, DateUND, StrategyQA, Prf-Law, Biz-Ethics

* **Models (column labels):** GPT3, GPT3.5, GPT4, Vicuna
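To make the ECE metric listed above concrete, here is a minimal sketch of the standard binned Expected Calibration Error. The source does not say how the figure's ECE values were computed, so the bin count (`n_bins=10`) and the function name are assumptions, and the sample data is hypothetical.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Binned ECE: weighted mean of |accuracy - mean confidence| per bin.

    confidences: floats in [0, 1]; correct: booleans of the same length.
    n_bins is an assumption; the figure does not state its binning.
    """
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have equal length")
    n = len(confidences)
    if n == 0:
        return 0.0

    # Assign every prediction to an equal-width confidence bin.
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the top bin
        bins[idx].append((conf, ok))

    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / n) * abs(accuracy - avg_conf)
    return ece


# Hypothetical data: 20 answers, all at 100% confidence, only 3 correct.
toy_conf = [1.0] * 20
toy_correct = [True] * 3 + [False] * 17
print(round(expected_calibration_error(toy_conf, toy_correct), 2))
```

When every answer sits in one high-confidence bin, ECE collapses to the gap between that confidence and the accuracy, which is why low-accuracy, high-confidence panels show ECE values near 0.8.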

### Detailed Analysis

**GSM8K**

* **GPT3:** ACC 0.15 / AUROC 0.51 / ECE 0.83. The majority of responses, both correct and incorrect, are clustered at 90-100% confidence. The "wrong answer" count is significantly higher than the "correct answer" count at high confidence.

* **GPT3.5:** ACC 0.28 / AUROC 0.65 / ECE 0.66. Similar to GPT3, most responses are at 90-100% confidence, with "wrong answers" being more frequent.

* **GPT4:** ACC 0.47 / AUROC 0.66 / ECE 0.51. Shows a more balanced distribution, with a noticeable number of "correct answers" at 90-100% confidence, but still dominated by "wrong answers" at high confidence.

* **Vicuna:** ACC 0.02 / AUROC 0.46 / ECE 0.77. Almost all responses are clustered at 70-80% confidence, with "wrong answers" dominating.

**DateUND**

* **GPT3:** ACC 0.18 / AUROC 0.50 / ECE 0.82. High confidence (90-100%) for most answers, with "wrong answers" being more frequent.

* **GPT3.5:** ACC 0.47 / AUROC 0.65 / ECE 0.48. High confidence (90-100%) for most answers, with a higher proportion of "correct answers" compared to GPT3.

* **GPT4:** ACC 0.83 / AUROC 0.64 / ECE 0.15. Most "correct answers" are at 90-100% confidence. "Wrong answers" are relatively infrequent.

* **Vicuna:** ACC 0.01 / AUROC 0.48 / ECE 0.79. Almost all responses are clustered at 70-80% confidence, with "wrong answers" dominating.

**StrategyQA**

* **GPT3:** ACC 0.58 / AUROC 0.49 / ECE 0.42. Most "correct answers" are at 90-100% confidence.

* **GPT3.5:** ACC 0.66 / AUROC 0.53 / ECE 0.26. Most "correct answers" are at 90-100% confidence.

* **GPT4:** ACC 0.76 / AUROC 0.55 / ECE 0.16. Most "correct answers" are at 90-100% confidence.

* **Vicuna:** ACC 0.47 / AUROC 0.51 / ECE 0.42. A mix of confidence levels, with a peak at 70-80% confidence.

**Prf-Law**

* **GPT3:** ACC 0.47 / AUROC 0.47 / ECE 0.48. A mix of confidence levels, with a peak at 90-100% confidence.

* **GPT3.5:** ACC 0.46 / AUROC 0.50 / ECE 0.44. A mix of confidence levels, with a peak at 90-100% confidence.

* **GPT4:** ACC 0.68 / AUROC 0.61 / ECE 0.17. A mix of confidence levels, with a peak at 80-90% confidence.

* **Vicuna:** ACC 0.26 / AUROC 0.53 / ECE 0.45. Most "wrong answers" are at 70-80% confidence.

**Biz-Ethics**

* **GPT3:** ACC 0.63 / AUROC 0.56 / ECE 0.32. Most "correct answers" are at 90-100% confidence.

* **GPT3.5:** ACC 0.67 / AUROC 0.55 / ECE 0.23. Most "correct answers" are at 90-100% confidence.

* **GPT4:** ACC 0.81 / AUROC 0.68 / ECE 0.09. Most "correct answers" are at 90-100% confidence.

* **Vicuna:** ACC 0.34 / AUROC 0.58 / ECE 0.35. A mix of confidence levels, with a peak at 70-80% confidence.

### Key Observations

* **Calibration Issues:** GPT3 and GPT3.5 tend to be overconfident, often assigning high confidence to incorrect answers, especially on GSM8K and DateUND.

* **GPT4 Improvement:** GPT4 generally shows better calibration, with higher accuracy and lower ECE scores. It tends to assign high confidence more often to correct answers.

* **Vicuna's Fixed Confidence:** Vicuna clusters its responses around 70-80% confidence regardless of the task or the correctness of the answer. With accuracy between 0.01 and 0.47, that level is overconfident rather than underconfident, and the narrow spread leaves its confidence nearly uninformative (AUROC 0.46-0.58).

* **Task Difficulty:** GSM8K and DateUND are the hardest tasks for GPT3, GPT3.5, and Vicuna (accuracy 0.01-0.47). GPT4 is the exception on DateUND, where it reaches its highest accuracy (0.83).

* **AUROC Discrepancies:** AUROC does not track accuracy. GPT4 on DateUND pairs ACC 0.83 with AUROC 0.64, so a model can answer correctly most of the time while its confidence scores only weakly separate correct from incorrect answers.
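AUROC here treats the confidence score as a classifier for "was this answer correct?". A minimal pure-Python sketch of that computation, using the pairwise (Mann-Whitney) definition, shows why it is independent of accuracy. The function name and the toy data are assumptions, not taken from the source.

```python
def auroc(confidences, correct):
    """Probability that a random correct answer has higher confidence
    than a random wrong answer (ties count as one half)."""
    pos = [c for c, ok in zip(confidences, correct) if ok]
    neg = [c for c, ok in zip(confidences, correct) if not ok]
    if not pos or not neg:
        raise ValueError("need at least one correct and one wrong answer")

    wins = 0.0
    for p in pos:
        for q in neg:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical model A: 90% accurate, but every answer has the same confidence.
print(auroc([0.9] * 10, [True] * 9 + [False]))

# Hypothetical model B: 50% accurate, confidence separates the classes perfectly.
print(auroc([0.9, 0.9, 0.6, 0.6], [True, True, False, False]))
```

Model A is accurate but its constant confidence cannot discriminate (AUROC 0.5), while model B is less accurate yet perfectly discriminating (AUROC 1.0).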

### Interpretation

The histograms reveal significant differences in calibration among the four language models. GPT3 and GPT3.5 exhibit overconfidence, particularly on tasks like GSM8K and DateUND, where they frequently assign high confidence to incorrect answers. This suggests that these models are poorly calibrated and may not be reliable for tasks requiring accurate confidence estimation.

GPT4 demonstrates improved calibration compared to its predecessors, with higher accuracy and lower ECE scores. This indicates that GPT4 is better at aligning its confidence with its actual performance.

Vicuna clusters its responses around 70-80% confidence regardless of the task or the correctness of the answer. Given its accuracy of 0.01 to 0.47, this is overconfidence as well, and the near-constant confidence carries little information about correctness. Vicuna may benefit from calibration techniques to improve its confidence estimation.

Task difficulty also plays a role: GSM8K and DateUND yield the lowest accuracy for GPT3, GPT3.5, and Vicuna, while GPT4 scores 0.47 on GSM8K but 0.83 on DateUND.

Overall, the histograms provide valuable insights into the calibration of different language models and highlight the importance of evaluating and improving model confidence estimation for reliable decision-making.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Chart: Confidence Histograms for LLM Performance on Various Benchmarks

### Overview

This image presents a 5x4 grid of histograms (five benchmark rows by four model columns). Each histogram shows the distribution of confidence scores for one Large Language Model (GPT3, GPT3.5, GPT4, or Vicuna) on one of five benchmarks: GSM8K, DateUND, StrategyQA, Prf-Law, and Lambada. Each histogram displays the count of predictions falling within specific confidence ranges (50-60%, 60-70%, 70-80%, 80-90%, 90-100%), with two series representing "wrong answer" and "correct answer" predictions. Accuracy (ACC), Area Under the Receiver Operating Characteristic curve (AUROC), and Expected Calibration Error (ECE) are reported for each model/benchmark combination.

### Components/Axes

* **X-axis:** Confidence (%) - Ranges from 50% to 100%, divided into 10% bins.

* **Y-axis:** Count - Represents the number of predictions falling within each confidence bin.

* **Models (Columns):** GPT3, GPT3.5, GPT4, Vicuna.

* **Benchmarks (Rows):** GSM8K, DateUND, StrategyQA, Prf-Law, Lambada.

* **Legend:**

* Red: Wrong Answer

* Teal: Correct Answer

* **Metrics (Top of each chart):**

* ACC: Accuracy

* AUROC: Area Under the Receiver Operating Characteristic curve

* ECE: Expected Calibration Error
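The axes described above amount to counting answers per 10% confidence bin, separately for correct and wrong answers. A minimal sketch of that tally follows; the function names and sample values are hypothetical, and the series labels reuse the legend text.

```python
from collections import Counter


def bin_label(conf_pct):
    """Map a confidence percentage in [50, 100] to its 10%-wide bin label."""
    if not 50 <= conf_pct <= 100:
        raise ValueError("confidence must lie between 50 and 100")
    low = min(int(conf_pct // 10) * 10, 90)  # 100% belongs to the 90-100% bin
    return f"{low}-{low + 10}%"


def histogram_counts(conf_pcts, correct):
    """Return per-bin counts for the two series shown in each subplot."""
    counts = {"correct answer": Counter(), "wrong answer": Counter()}
    for conf, ok in zip(conf_pcts, correct):
        series = "correct answer" if ok else "wrong answer"
        counts[series][bin_label(conf)] += 1
    return counts


# Hypothetical sample: five answers with their confidence and correctness.
sample = histogram_counts([95, 100, 72, 78, 85], [True, False, False, False, True])
print(dict(sample["wrong answer"]))
```

Plotting the two counters side by side per bin would reproduce the layout of a single subplot.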

### Detailed Analysis or Content Details

Here's a breakdown of the data, row by row (Benchmark) and column by column (Model). Values are approximate, based on visual estimation.

**GSM8K:**

* **GPT3:** ACC 0.15 / AUROC 0.51 / ECE 0.83. The "wrong answer" line shows a peak around 60-70% confidence, with a count of approximately 40. The "correct answer" line peaks around 90-100% confidence, with a count of approximately 20.

* **GPT3.5:** ACC 0.28 / AUROC 0.56 / ECE 0.66. "Wrong answer" peaks around 60-70% (count ~30). "Correct answer" peaks around 80-90% (count ~25).

* **GPT4:** ACC 0.47 / AUROC 0.66 / ECE 0.51. "Wrong answer" peaks around 60-70% (count ~25). "Correct answer" peaks around 90-100% (count ~35).

* **Vicuna:** ACC 0.02 / AUROC 0.46 / ECE 0.77. "Wrong answer" peaks around 60-70% (count ~45). "Correct answer" peaks around 90-100% (count ~5).

**DateUND:**

* **GPT3:** ACC 0.19 / AUROC 0.50 / ECE 0.82. "Wrong answer" peaks around 60-70% (count ~35). "Correct answer" peaks around 90-100% (count ~20).

* **GPT3.5:** ACC 0.47 / AUROC 0.65 / ECE 0.48. "Wrong answer" peaks around 60-70% (count ~20). "Correct answer" peaks around 90-100% (count ~35).

* **GPT4:** ACC 0.83 / AUROC 0.64 / ECE 0.15. "Wrong answer" peaks around 60-70% (count ~5). "Correct answer" peaks around 90-100% (count ~50).

* **Vicuna:** ACC 0.01 / AUROC 0.48 / ECE 0.79. "Wrong answer" peaks around 60-70% (count ~50). "Correct answer" peaks around 90-100% (count ~5).

**StrategyQA:**

* **GPT3:** ACC 0.58 / AUROC 0.49 / ECE 0.42. "Wrong answer" peaks around 60-70% (count ~25). "Correct answer" peaks around 80-90% (count ~30).

* **GPT3.5:** ACC 0.66 / AUROC 0.53 / ECE 0.26. "Wrong answer" peaks around 60-70% (count ~20). "Correct answer" peaks around 80-90% (count ~35).

* **GPT4:** ACC 0.76 / AUROC 0.55 / ECE 0.16. "Wrong answer" peaks around 60-70% (count ~15). "Correct answer" peaks around 90-100% (count ~40).

* **Vicuna:** ACC 0.47 / AUROC 0.51 / ECE 0.42. "Wrong answer" peaks around 60-70% (count ~30). "Correct answer" peaks around 80-90% (count ~25).

**Prf-Law:**

* **GPT3:** ACC 0.47 / AUROC 0.47 / ECE 0.44. "Wrong answer" peaks around 60-70% (count ~30). "Correct answer" peaks around 80-90% (count ~25).

* **GPT3.5:** ACC 0.66 / AUROC 0.52 / ECE 0.31. "Wrong answer" peaks around 60-70% (count ~20). "Correct answer" peaks around 80-90% (count ~35).

* **GPT4:** ACC 0.83 / AUROC 0.64 / ECE 0.10. "Wrong answer" peaks around 60-70% (count ~5). "Correct answer" peaks around 90-100% (count ~50).

* **Vicuna:** ACC 0.26 / AUROC 0.53 / ECE 0.48. "Wrong answer" peaks around 60-70% (count ~35). "Correct answer" peaks around 80-90% (count ~20).

**Lambada:**

* **GPT3:** ACC 0.58 / AUROC 0.52 / ECE 0.36. "Wrong answer" peaks around 60-70% (count ~25). "Correct answer" peaks around 80-90% (count ~30).

* **GPT3.5:** ACC 0.66 / AUROC 0.58 / ECE 0.28. "Wrong answer" peaks around 60-70% (count ~20). "Correct answer" peaks around 80-90% (count ~35).

* **GPT4:** ACC 0.83 / AUROC 0.66 / ECE 0.09. "Wrong answer" peaks around 60-70% (count ~5). "Correct answer" peaks around 90-100% (count ~50).

* **Vicuna:** ACC 0.47 / AUROC 0.55 / ECE 0.34. "Wrong answer" peaks around 60-70% (count ~30). "Correct answer" peaks around 80-90% (count ~25).

### Key Observations

* **GPT4 consistently outperforms other models** across all benchmarks, exhibiting the highest accuracy and lowest ECE. Its "correct answer" distribution is heavily skewed towards higher confidence levels (90-100%).

* **Vicuna generally has the lowest accuracy** and highest ECE, with its "wrong answer" distribution peaking at lower confidence levels.

* **GPT3.5 shows improvement over GPT3** in most benchmarks, but remains significantly behind GPT4.

* **ECE generally decreases as accuracy increases.** This suggests a correlation between calibration and performance.

* The "wrong answer" distributions often peak in the 60-70% confidence range, so incorrect answers are still reported with more than 50% confidence even though this is the lower end of the plotted scale.

### Interpretation

The data shows a clear hierarchy among these LLMs. GPT4 is more accurate and better calibrated than the other models, suggesting a superior ability both to solve the tasks and to estimate its own uncertainty. Vicuna's consistently low performance highlights how hard it is to achieve strong results with smaller or differently trained models. Incorrect answers are reported with 60-70% confidence across all models, which is a critical issue because users may be misled by the models' outputs. The ECE metric quantifies this miscalibration.

ECE falls as accuracy rises, so better task performance tends to accompany better calibration in this data. GPT4's consistent results across all benchmarks suggest a robust general-purpose capability, while the varying results of the other models indicate strengths and weaknesses that are more task-specific.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Grid of Confidence Distribution Histograms: Model Performance and Calibration Across Datasets

### Overview

The image displays a 5x4 grid of histograms. Each row corresponds to a specific evaluation dataset, and each column corresponds to a specific large language model (LLM). The histograms visualize the distribution of model confidence scores for its answers, separated into correct (blue) and incorrect (red) responses. Above each histogram, three key performance metrics are provided: Accuracy (ACC), Area Under the Receiver Operating Characteristic curve (AUROC), and Expected Calibration Error (ECE).

### Components/Axes

* **Grid Structure:**

* **Rows (Datasets):** Labeled on the far left. From top to bottom: `GSM8K`, `DateUND`, `StrategyQA`, `Prf-Law`, `Biz-Ethics`.

* **Columns (Models):** Labeled at the top. From left to right: `GPT3`, `GPT3.5`, `GPT4`, `Vicuna`.

* **Individual Histogram Axes:**

* **X-axis:** Labeled `Confidence (%)`. The scale runs from 50 to 100, with major tick marks at 50, 60, 70, 80, 90, and 100.

* **Y-axis:** Labeled `Count`. The scale is not numerically marked, indicating relative frequency.

* **Legend:** Present in the top-left corner of each histogram. A red square denotes `wrong answer`, and a blue square denotes `correct answer`.

* **Metrics Header:** Above each histogram, a text string provides three metrics in the format: `ACC [value] / AUROC [value] / ECE [value]`.
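The metrics header format described above is regular enough to parse mechanically. The following is a minimal sketch using Python's `re` module; the pattern and the function name `parse_metrics` are assumptions for illustration, based only on the `ACC [value] / AUROC [value] / ECE [value]` layout.

```python
import re

# Matches headers such as "ACC 0.15 / AUROC 0.51 / ECE 0.83".
HEADER_RE = re.compile(
    r"ACC\s+(?P<acc>\d*\.?\d+)\s*/\s*"
    r"AUROC\s+(?P<auroc>\d*\.?\d+)\s*/\s*"
    r"ECE\s+(?P<ece>\d*\.?\d+)"
)


def parse_metrics(header):
    """Extract the three metrics from a subplot header as floats."""
    match = HEADER_RE.search(header)
    if match is None:
        raise ValueError(f"unrecognized metrics header: {header!r}")
    return {name: float(value) for name, value in match.groupdict().items()}


print(parse_metrics("ACC 0.15 / AUROC 0.51 / ECE 0.83"))
```

Parsing the headers into numbers makes it straightforward to compare the extracted values across rows and columns instead of reading them off by eye.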

### Detailed Analysis

Below is a systematic extraction of the metrics and a description of the histogram shape for each model-dataset combination. The histogram description notes where the bulk of the data (both correct and incorrect answers) is concentrated on the confidence scale.

**Row 1: GSM8K Dataset**

* **GPT3:** ACC 0.15 / AUROC 0.51 / ECE 0.83. Histogram: Nearly all responses (both correct and incorrect) are concentrated at 100% confidence. A very small number of incorrect answers appear around 80% confidence.

* **GPT3.5:** ACC 0.28 / AUROC 0.65 / ECE 0.66. Histogram: Responses are split between 90% and 100% confidence. Incorrect answers dominate at 90%, while correct answers are more prevalent at 100%.

* **GPT4:** ACC 0.47 / AUROC 0.66 / ECE 0.51. Histogram: The majority of responses are at 100% confidence, with a significant portion being correct. A smaller cluster exists at 90% confidence, with a mix of correct and incorrect answers.

* **Vicuna:** ACC 0.02 / AUROC 0.46 / ECE 0.77. Histogram: Almost all responses are incorrect and concentrated at 80% confidence. A tiny fraction of incorrect answers appear at 50%, 60%, 70%, 90%, and 100%.

**Row 2: DateUND Dataset**

* **GPT3:** ACC 0.18 / AUROC 0.50 / ECE 0.82. Histogram: Similar to GSM8K, nearly all responses are at 100% confidence, predominantly incorrect.

* **GPT3.5:** ACC 0.47 / AUROC 0.65 / ECE 0.48. Histogram: Responses are distributed across 80%, 90%, and 100% confidence. The largest group is incorrect answers at 90%. Correct answers are most frequent at 100%.

* **GPT4:** ACC 0.83 / AUROC 0.64 / ECE 0.15. Histogram: Overwhelmingly, responses are correct and at 100% confidence. A very small number of incorrect answers appear at 80% and 90%.

* **Vicuna:** ACC 0.01 / AUROC 0.48 / ECE 0.79. Histogram: Almost all responses are incorrect and concentrated at 80% confidence. A minuscule number of incorrect answers are at 90%.

**Row 3: StrategyQA Dataset**

* **GPT3:** ACC 0.58 / AUROC 0.49 / ECE 0.42. Histogram: The vast majority of responses are at 100% confidence, with a roughly even split between correct and incorrect answers.

* **GPT3.5:** ACC 0.66 / AUROC 0.53 / ECE 0.26. Histogram: Most responses are at 90% confidence, with a significant number of both correct and incorrect answers. A smaller group of correct answers is at 100%.

* **GPT4:** ACC 0.76 / AUROC 0.55 / ECE 0.16. Histogram: Responses are concentrated at 90% and 100% confidence. At 90%, there is a mix, but correct answers are more frequent. At 100%, answers are almost entirely correct.

* **Vicuna:** ACC 0.47 / AUROC 0.51 / ECE 0.42. Histogram: Responses are split between 80% and 90% confidence. At 80%, answers are mostly incorrect. At 90%, there is a mix, with correct answers being slightly more frequent.

**Row 4: Prf-Law Dataset**

* **GPT3:** ACC 0.47 / AUROC 0.47 / ECE 0.48. Histogram: Responses are spread across 80%, 90%, and 100% confidence. The largest group is incorrect answers at 100%. Correct answers are most frequent at 90%.

* **GPT3.5:** ACC 0.46 / AUROC 0.50 / ECE 0.44. Histogram: The vast majority of responses are at 90% confidence, with a substantial number of both correct and incorrect answers.

* **GPT4:** ACC 0.68 / AUROC 0.61 / ECE 0.17. Histogram: Responses are concentrated at 80% and 90% confidence. At 80%, answers are mostly incorrect. At 90%, correct answers are dominant.

* **Vicuna:** ACC 0.26 / AUROC 0.53 / ECE 0.45. Histogram: Most responses are incorrect and at 80% confidence. A smaller cluster of mixed correct/incorrect answers appears at 90%.

**Row 5: Biz-Ethics Dataset**

* **GPT3:** ACC 0.63 / AUROC 0.56 / ECE 0.32. Histogram: Responses are at 90% and 100% confidence. At 90%, answers are mostly correct. At 100%, there is a mix, with incorrect answers being more frequent.

* **GPT3.5:** ACC 0.67 / AUROC 0.55 / ECE 0.23. Histogram: The vast majority of responses are correct and at 90% confidence. A very small number of incorrect answers are at 100%.

* **GPT4:** ACC 0.81 / AUROC 0.68 / ECE 0.09. Histogram: Overwhelmingly, responses are correct and at 90% confidence. A tiny fraction of incorrect answers appear at 80% and 100%.

* **Vicuna:** ACC 0.34 / AUROC 0.58 / ECE 0.35. Histogram: Responses are split between 80% and 90% confidence. At 80%, answers are mostly incorrect. At 90%, there is a mix, with correct answers being more frequent.

### Key Observations

1. **Model Performance Hierarchy:** GPT4 consistently achieves the highest Accuracy (ACC) across all datasets, followed generally by GPT3.5, then GPT3, with Vicuna performing the worst on most tasks.

2. **Calibration (ECE):** GPT4 also demonstrates the best calibration (lowest ECE) in most cases, particularly on `DateUND` (0.15) and `Biz-Ethics` (0.09). High ECE values (e.g., GPT3 on GSM8K: 0.83) indicate poor calibration, where the model's confidence does not match its actual accuracy.

3. **Confidence Distributions:**

* **Overconfidence:** GPT3 and GPT3.5 frequently show a strong tendency to output answers with very high confidence (90-100%), even when incorrect (e.g., GPT3 on GSM8K, DateUND).

* **Vicuna's Pattern:** Vicuna often clusters its (mostly incorrect) answers around 80% confidence, suggesting a different, but still poorly calibrated, confidence profile.

* **GPT4's Shift:** GPT4's correct answers are often concentrated at the highest confidence bin (100%), but it also shows meaningful distributions at 90% on some tasks (e.g., StrategyQA, Prf-Law, Biz-Ethics), indicating more nuanced confidence estimation.

4. **Dataset Difficulty:** The `GSM8K` and `DateUND` datasets appear particularly challenging for the weaker models (GPT3, Vicuna), which score between 0.01 and 0.18 on them.
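The performance hierarchy in observation 1 can be checked against the ACC values listed in the Detailed Analysis. This sketch averages accuracy per model and sorts the result. Using an unweighted mean over the five datasets is an assumption, since the datasets may differ in size.

```python
# ACC values as extracted above, in row order:
# GSM8K, DateUND, StrategyQA, Prf-Law, Biz-Ethics.
ACC = {
    "GPT3":   [0.15, 0.18, 0.58, 0.47, 0.63],
    "GPT3.5": [0.28, 0.47, 0.66, 0.46, 0.67],
    "GPT4":   [0.47, 0.83, 0.76, 0.68, 0.81],
    "Vicuna": [0.02, 0.01, 0.47, 0.26, 0.34],
}


def mean(values):
    return sum(values) / len(values)


def rank_models(acc_by_model):
    """Order models from highest to lowest mean accuracy (unweighted)."""
    return sorted(acc_by_model, key=lambda m: mean(acc_by_model[m]), reverse=True)


for model in rank_models(ACC):
    print(f"{model}: mean ACC {mean(ACC[model]):.2f}")
```

The resulting order is GPT4, GPT3.5, GPT3, Vicuna, which matches the hierarchy stated in the observation.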

### Interpretation

This grid provides a multifaceted view of LLM performance, moving beyond simple accuracy to examine **model calibration**—the alignment between a model's stated confidence and its probability of being correct.

* **What the data suggests:** The progression from GPT3 to GPT4 shows not just an improvement in raw accuracy, but a significant improvement in calibration. A well-calibrated model (low ECE) is more reliable and trustworthy, as its confidence score can be used as a meaningful indicator of likely correctness. GPT4's low ECE on tasks like `Biz-Ethics` (0.09) suggests its confidence is a very good proxy for accuracy on that domain.

* **Relationship between elements:** The histograms visually explain the ECE metric. A high ECE (e.g., 0.83) corresponds to a histogram where high-confidence bins (like 100%) contain a large proportion of incorrect answers (red bars). A low ECE (e.g., 0.09) corresponds to a histogram where high-confidence bins are dominated by correct answers (blue bars).

* **Notable anomalies and trends:** The stark contrast between Vicuna's performance/calibration and the GPT series is notable. Vicuna's consistent clustering of incorrect answers at ~80% confidence, regardless of dataset, may indicate a systemic issue in its confidence estimation mechanism or training. Furthermore, the variation in performance across datasets (e.g., GPT4's ACC ranging from 0.47 on `GSM8K` to 0.83 on `DateUND`) highlights that model capability is not uniform but highly dependent on the task domain. This analysis is crucial for applications where knowing *when a model is likely wrong* is as important as its overall accuracy.
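The link between histogram shape and ECE can be worked through by hand. Assuming the figure uses the standard binned definition (the source does not state this), with $B_m$ the set of answers in confidence bin $m$ and $n$ the total number of answers:

$$
\mathrm{ECE} = \sum_{m=1}^{M} \frac{|B_m|}{n}\,\bigl|\mathrm{acc}(B_m) - \mathrm{conf}(B_m)\bigr|
$$

When nearly all answers fall in a single bin, the sum reduces to one term, $\mathrm{ECE} \approx |\mathrm{ACC} - \mathrm{conf}|$. Using the histogram readings above:

$$
\text{GPT3 on GSM8K: } |0.15 - 1.00| = 0.85 \quad (\text{reported } 0.83)
$$

$$
\text{Vicuna on DateUND: } |0.01 - 0.80| = 0.79 \quad (\text{reported } 0.79)
$$

The small difference in the first case is consistent with the few responses that fall outside the dominant bin.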


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Bar Chart Grid: AI Model Performance Across Datasets

### Overview

The image displays a 5x4 grid of bar charts comparing the performance of four AI models (GPT3, GPT3.5, GPT4, Vicuna) across five datasets (GSM8K, DateUND, StrategyQA, Prf-Law, Biz-Ethics). Each chart shows two stacked bars representing correct (blue) and wrong (red) answers, with confidence percentages (50-100%) on the x-axis and count on the y-axis. Performance metrics (ACC, AUROC, ECE) are listed above each chart.

### Components/Axes

- **X-axis**: Confidence (%) ranging from 50% to 100% in 10% increments.

- **Y-axis**: Count of answers (no explicit scale, but relative bar heights indicate magnitude).

- **Legends**:

- Red = Wrong answers

- Blue = Correct answers

- **Positioning**:

- Legends are centered at the top of each chart.

- X-axis labels are at the bottom, Y-axis labels on the left.

- Model names (e.g., "GPT3") label the columns; dataset names (e.g., "GSM8K") label the rows, consistent with the 5x4 layout of five datasets by four models.

### Detailed Analysis

#### Model Performance Metrics

- **GPT3**:

- GSM8K: ACC 0.15 / AUROC 0.51 / ECE 0.83

- DateUND: ACC 0.18 / AUROC 0.50 / ECE 0.82

- StrategyQA: ACC 0.58 / AUROC 0.49 / ECE 0.42

- Prf-Law: ACC 0.47 / AUROC 0.47 / ECE 0.48

- Biz-Ethics: ACC 0.63 / AUROC 0.56 / ECE 0.32

- **GPT3.5**:

- GSM8K: ACC 0.28 / AUROC 0.65 / ECE 0.66

- DateUND: ACC 0.66 / AUROC 0.53 / ECE 0.26

- StrategyQA: ACC 0.68 / AUROC 0.61 / ECE 0.17

- Prf-Law: ACC 0.46 / AUROC 0.50 / ECE 0.44

- Biz-Ethics: ACC 0.67 / AUROC 0.55 / ECE 0.23

- **GPT4**:

- GSM8K: ACC 0.47 / AUROC 0.66 / ECE 0.51

- DateUND: ACC 0.76 / AUROC 0.55 / ECE 0.16

- StrategyQA: ACC 0.76 / AUROC 0.55 / ECE 0.16

- Prf-Law: ACC 0.68 / AUROC 0.68 / ECE 0.09

- Biz-Ethics: ACC 0.81 / AUROC 0.68 / ECE 0.09

- **Vicuna**:

- GSM8K: ACC 0.02 / AUROC 0.46 / ECE 0.77

- DateUND: ACC 0.01 / AUROC 0.48 / ECE 0.79

- StrategyQA: ACC 0.47 / AUROC 0.51 / ECE 0.42

- Prf-Law: ACC 0.26 / AUROC 0.53 / ECE 0.45

- Biz-Ethics: ACC 0.34 / AUROC 0.58 / ECE 0.35

#### Bar Chart Trends

1. **GSM8K**:

- **GPT3**: Dominant red bar at 100% confidence (high wrong answers).

- **GPT4**: Balanced blue/red bars, peaking at 90% confidence.

- **Vicuna**: Tall red bar at 80% confidence (outlier).

2. **DateUND**:

- **GPT3.5**: Blue bar dominates at 90-100% confidence.

- **Vicuna**: Single red bar at 80% confidence (lowest performance).

3. **StrategyQA**:

- **GPT4**: Blue bars dominate across all confidence levels.

- **Vicuna**: Red bar at 80% confidence (moderate performance).

4. **Prf-Law**:

- **GPT4**: Blue bars peak at 90-100% confidence.

- **Vicuna**: Red bar at 80% confidence (low accuracy).

5. **Biz-Ethics**:

- **GPT4**: Blue bars dominate at 90-100% confidence.

- **Vicuna**: Red bar at 80% confidence (moderate performance).

### Key Observations

1. **Model Performance**:

- GPT4 consistently shows the highest ACC and lowest ECE across most datasets.

- Vicuna underperforms in accuracy (low ACC) but has moderate ECE in some cases.

2. **Confidence Correlation**:

- Higher confidence (90-100%) generally correlates with more correct answers for GPT4 and GPT3.5.

- Vicuna exhibits anomalies (e.g., tall red bars at 80% confidence).

3. **Dataset Variability**:

- GSM8K and DateUND show lower ACC for all models compared to StrategyQA and Biz-Ethics.

- Prf-Law and Biz-Ethics datasets have higher ACC for advanced models (GPT4).

### Interpretation

The data suggests that **GPT4 outperforms other models** in accuracy (ACC) and calibration (low ECE), particularly in complex datasets like Prf-Law and Biz-Ethics. **Vicuna** struggles with accuracy but shows moderate calibration in some cases, possibly due to overconfidence in incorrect answers (e.g., tall red bars at 80% confidence). The **ECE metric** highlights calibration issues: models with high ECE (e.g., GPT3 in GSM8K) have poor confidence calibration, while GPT4 demonstrates strong calibration across datasets. The **AUROC** metric indicates that GPT4 and GPT3.5 have better discriminative power than Vicuna. These trends align with expectations for advanced language models, though Vicuna’s performance in certain datasets warrants further investigation into its confidence calibration strategy.
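Whether a model is over- or underconfident can be read from the sign of the gap between its mean confidence and its accuracy. The sketch below is illustrative only: the function names and the `tolerance` threshold are assumptions, and the 80% confidence for Vicuna is taken from the bar position described above rather than from a reported mean.

```python
def confidence_gap(mean_confidence, accuracy):
    """Positive gap: confidence exceeds accuracy (overconfident)."""
    return mean_confidence - accuracy


def describe(gap, tolerance=0.05):
    """Label a gap; the tolerance is a hypothetical threshold."""
    if gap > tolerance:
        return "overconfident"
    if gap < -tolerance:
        return "underconfident"
    return "roughly calibrated"


# Vicuna on GSM8K: answers concentrated at 80% confidence, ACC 0.02.
gap = confidence_gap(0.80, 0.02)
print(f"gap = {gap:.2f} -> {describe(gap)}")
```

A gap of this size is close to the reported ECE of 0.77 for that panel, which is what one would expect when almost all answers share one confidence level.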
