## Diagram: Agent-Environment Interaction with Self-Reflection

### Overview

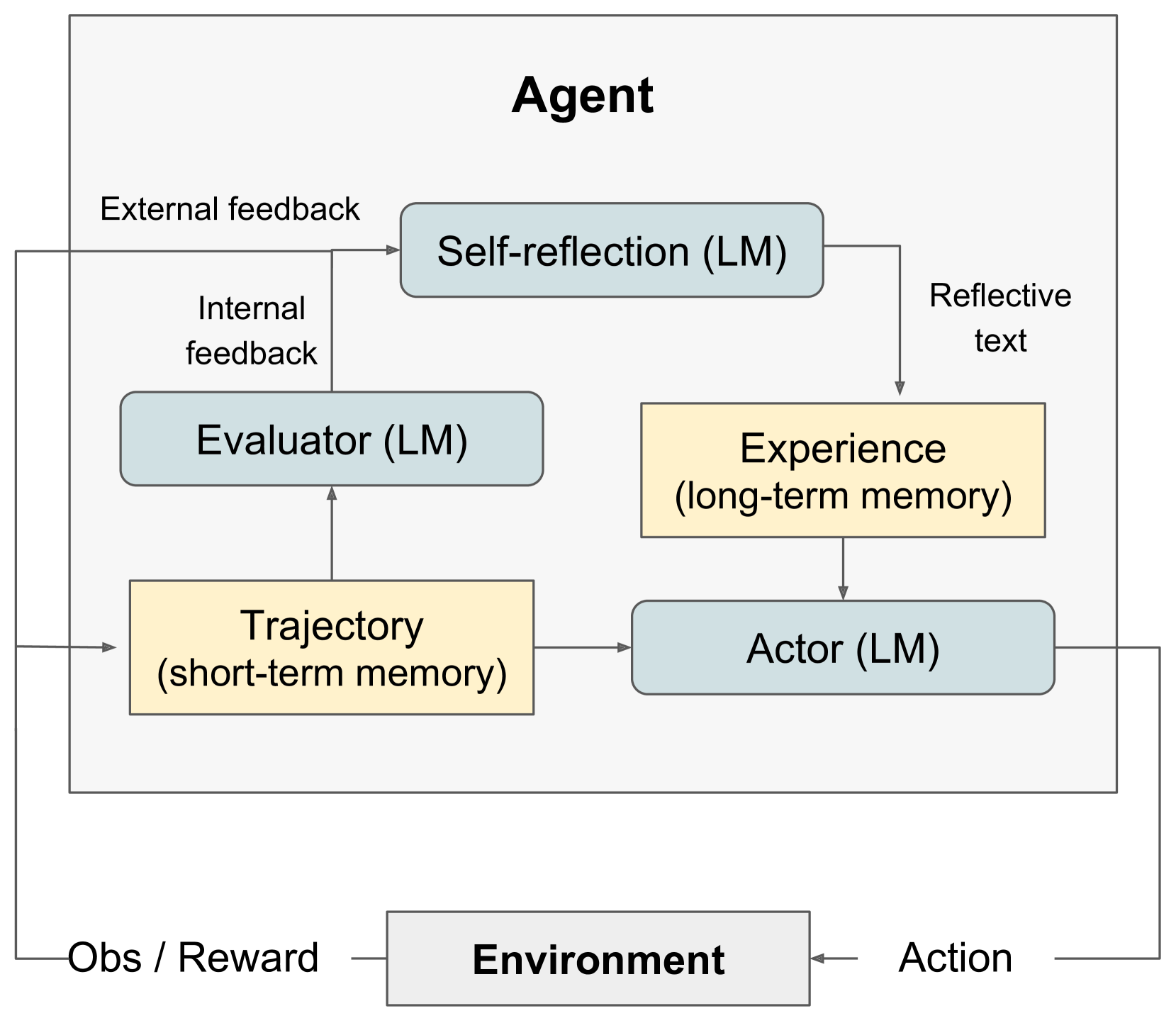

The diagram shows an agent interacting with its environment through a loop that includes self-reflection and two memory stores. The agent contains five modules: Self-reflection, Evaluator, Experience, Trajectory, and Actor. Labeled arrows mark the information that passes between these modules and between the agent and the environment.

### Components

* **Agent:** The central entity, encompassing the modules responsible for decision-making and learning.

* **Environment:** The external context with which the agent interacts.

* **Self-reflection (LM):** A module within the agent, labeled as a Language Model (LM), that takes external and internal feedback and produces reflective text.

* **Evaluator (LM):** A module within the agent, labeled as a Language Model (LM), that processes observations and rewards and generates internal feedback.

* **Experience (long-term memory):** A module representing the agent's long-term memory.

* **Trajectory (short-term memory):** A module representing the agent's short-term memory.

* **Actor (LM):** A module within the agent, likely using a Language Model (LM), responsible for taking actions.

* **External feedback:** Feedback received from the environment.

* **Internal feedback:** Feedback generated within the agent by the Evaluator and passed to the Self-reflection module.

* **Reflective text:** Output from the self-reflection module.

* **Obs / Reward:** Observations and rewards received from the environment.

* **Action:** Actions taken by the agent that affect the environment.
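The two memory components listed above can be sketched as plain data containers. This is a minimal illustration only: the class names `Step`, `Trajectory`, and `Experience`, their fields, and the idea of clearing the trajectory between attempts are all assumptions, since the diagram names the components but does not define their contents.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Step:
    """One Obs / Reward pair received from the environment (hypothetical fields)."""
    observation: str
    reward: float


@dataclass
class Trajectory:
    """Short-term memory: what has happened in the current attempt."""
    steps: List[Step] = field(default_factory=list)

    def add(self, step: Step) -> None:
        self.steps.append(step)

    def clear(self) -> None:
        # Assumption: short-term memory is reset between attempts.
        self.steps.clear()


@dataclass
class Experience:
    """Long-term memory: reflective text kept across attempts."""
    reflections: List[str] = field(default_factory=list)

    def add(self, reflective_text: str) -> None:
        self.reflections.append(reflective_text)
```

Keeping the two stores as separate types makes the difference in lifetime explicit: a `Trajectory` can be cleared freely, while an `Experience` only grows.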

### Detailed Analysis

The diagram illustrates the following flow of information:

1. **Environment -> Obs / Reward -> Evaluator (LM):** The environment provides observations and rewards to the agent, which are then processed by the Evaluator module.

2. **Environment -> Obs / Reward -> Trajectory (short-term memory):** The environment provides observations and rewards to the agent, which are then stored in the Trajectory module.

3. **Evaluator (LM) -> Internal feedback -> Self-reflection (LM):** The Evaluator module generates internal feedback, which is then processed by the Self-reflection module.

4. **Self-reflection (LM) -> Reflective text -> Experience (long-term memory):** The Self-reflection module generates reflective text, which is stored in the Experience module.

5. **Environment -> External feedback -> Self-reflection (LM):** The environment supplies external feedback, which the Self-reflection module processes alongside the internal feedback from the Evaluator.

6. **Trajectory (short-term memory) -> Actor (LM):** The Trajectory module provides information to the Actor module.

7. **Experience (long-term memory) -> Actor (LM):** The Experience module provides information to the Actor module.

8. **Actor (LM) -> Action -> Environment:** The Actor module takes actions that affect the environment.
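The numbered flows above can be read as one pass through a loop. The sketch below wires them together with plain functions standing in for the LM-backed modules. Every name and signature here (`run_trial`, `env_step`, the stub functions, the string and float types) is hypothetical, and having the environment return external feedback together with each observation is an assumption made for brevity; the diagram shows only the arrows, not an interface.

```python
from typing import Callable, List, Tuple

ObsReward = Tuple[str, float]  # assumed shape of one "Obs / Reward" item


def run_trial(
    actor: Callable[[List[ObsReward], List[str]], str],
    env_step: Callable[[str], Tuple[str, float, str]],
    evaluator: Callable[[List[ObsReward]], str],
    reflector: Callable[[str, str], str],
    experience: List[str],
    max_steps: int = 1,
) -> List[ObsReward]:
    """Run one attempt and append one reflection to `experience`."""
    trajectory: List[ObsReward] = []   # short-term memory for this attempt
    external_feedback = ""
    for _ in range(max_steps):
        action = actor(trajectory, experience)             # flows 6 and 7
        obs, reward, external_feedback = env_step(action)  # flow 8 and the reply
        trajectory.append((obs, reward))                   # flow 2
    internal_feedback = evaluator(trajectory)              # flows 1 and 3
    reflection = reflector(internal_feedback, external_feedback)  # flow 5
    experience.append(reflection)                          # flow 4
    return trajectory


# Stub modules: simple rules in place of language-model calls.
def stub_actor(trajectory, experience):
    return "revise" if experience else "guess"


def stub_env_step(action):
    if action == "revise":
        return "accepted", 1.0, "all checks passed"
    return "rejected", 0.0, "check 2 failed"


def stub_evaluator(trajectory):
    return "success" if trajectory[-1][1] > 0 else "failure"


def stub_reflector(internal, external):
    return f"{internal}: {external}"


experience: List[str] = []
first = run_trial(stub_actor, stub_env_step, stub_evaluator, stub_reflector, experience)
second = run_trial(stub_actor, stub_env_step, stub_evaluator, stub_reflector, experience)
```

With these stubs the second attempt behaves differently from the first only because `experience` is no longer empty, which is the role the diagram gives to long-term memory.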

### Key Observations

* The agent uses both short-term (Trajectory) and long-term (Experience) memory.

* Self-reflection turns feedback into reflective text that is stored in Experience and later supplied to the Actor, which is how the outcome of earlier actions can influence later ones.

* Language Models (LM) are used in the Self-reflection, Evaluator, and Actor modules.
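One way the Actor could draw on both memories is to combine them into a single text context before choosing an action. The helper below is a sketch under that assumption; `build_actor_context`, its parameters, and the output layout are invented for illustration and are not taken from the diagram.

```python
from typing import List, Tuple


def build_actor_context(
    reflections: List[str],
    trajectory: List[Tuple[str, float]],
    max_reflections: int = 3,
) -> str:
    """Combine long-term reflections and the current trajectory into one text block."""
    # Keep only the most recent reflections so the context stays bounded.
    recent = reflections[-max_reflections:] if max_reflections > 0 else []
    lesson_lines = [f"- {text}" for text in recent] or ["- (none yet)"]
    step_lines = [
        f"- obs={obs!r}, reward={reward}" for obs, reward in trajectory
    ] or ["- (no steps yet)"]
    return "\n".join(
        ["Lessons from earlier attempts:", *lesson_lines,
         "Current attempt so far:", *step_lines]
    )
```

Capping the number of reflections is a design choice made here to keep the context short; the diagram does not say how much of Experience reaches the Actor.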

### Interpretation

The diagram represents an agent architecture that combines perception (Obs / Reward), memory, action, and self-reflection. The LM labels on the Actor, Evaluator, and Self-reflection modules suggest that feedback and reflections are expressed in natural language, which the agent could use for reasoning and planning. Because reflective text is stored in long-term memory and fed back to the Actor, the agent can in principle learn from earlier mistakes and improve over repeated attempts. The environment supplies the observations, rewards, and external feedback that drive this loop. Keeping Trajectory (short-term memory) separate from Experience (long-term memory) distinguishes the details of the current interaction from the lessons retained for later use.