# Technical Diagram Analysis: Agent Architecture

## Overview

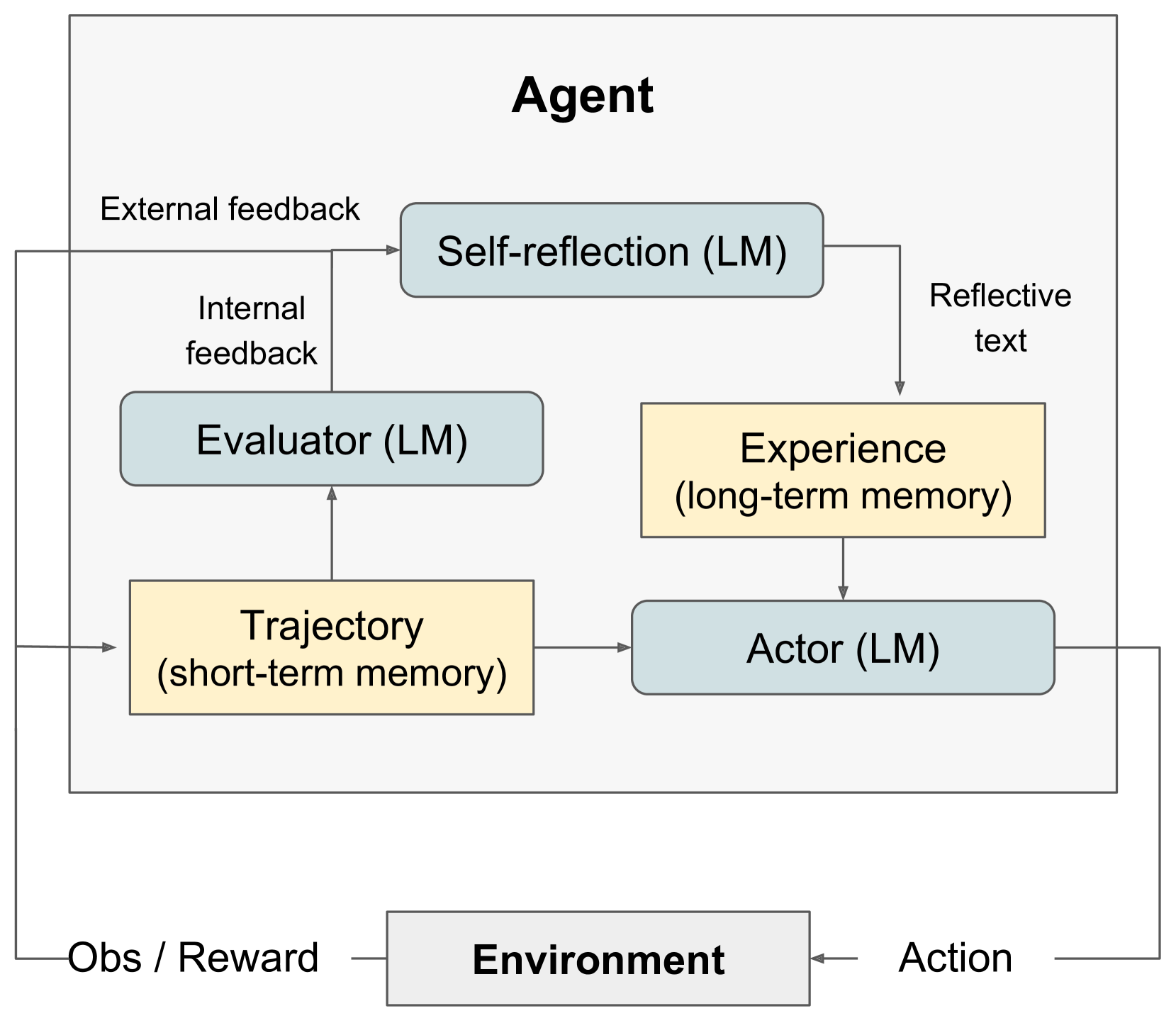

The diagram illustrates a hierarchical agent architecture with internal feedback loops and memory systems. The agent interacts with an external environment through observation/reward signals and actions.

## Key Components

### Agent Subsystems

1. **Self-reflection (LM)**
   - Type: Language model (LM)
   - Inputs:
     - External feedback (dashed arrow from environment)
   - Outputs:
     - Reflective text (dashed arrow to Experience)

2. **Evaluator (LM)**
   - Type: Language model (LM)
   - Inputs:
     - Internal feedback (solid arrow from Self-reflection)
   - Outputs:
     - Trajectory data (solid arrow to Trajectory)

3. **Trajectory (short-term memory)**
   - Type: Short-term memory
   - Inputs:
     - Output from Evaluator
   - Outputs:
     - To Actor (solid arrow)

4. **Experience (long-term memory)**
   - Type: Long-term memory
   - Inputs:
     - Reflective text from Self-reflection
   - Outputs:
     - To Actor (solid arrow)

5. **Actor (LM)**
   - Type: Language model (LM)
   - Inputs:
     - Trajectory (short-term memory)
     - Experience (long-term memory)
   - Outputs:
     - Action (solid arrow to environment)

### Environment Interface

- **Inputs to Agent**:
  - Obs/Reward (solid arrow from environment)
- **Outputs to Environment**:
  - Action (solid arrow to environment)
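The connections listed above can be written down as a small directed graph, which makes it easy to query what feeds each component. This is a minimal sketch that encodes the arrows exactly as this analysis describes them; the names `Edge`, `EDGES`, `inputs_of`, and `outputs_of` are illustrative and do not come from the diagram.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Edge:
    src: str    # component the arrow starts from
    dst: str    # component the arrow points to
    label: str  # arrow label, empty if the analysis gives none
    style: str  # "solid" or "dashed", as described in the analysis


# Arrows as listed in the component breakdown (assumed complete).
EDGES = [
    Edge("Environment", "Agent", "Obs/Reward", "solid"),
    Edge("Environment", "Self-reflection", "External feedback", "dashed"),
    Edge("Self-reflection", "Experience", "Reflective text", "dashed"),
    Edge("Self-reflection", "Evaluator", "Internal feedback", "solid"),
    Edge("Evaluator", "Trajectory", "", "solid"),
    Edge("Trajectory", "Actor", "", "solid"),
    Edge("Experience", "Actor", "", "solid"),
    Edge("Actor", "Environment", "Action", "solid"),
]


def inputs_of(node: str) -> list:
    """Return the sorted names of components with an arrow into `node`."""
    return sorted(e.src for e in EDGES if e.dst == node)


def outputs_of(node: str) -> list:
    """Return the sorted names of components that `node` points to."""
    return sorted(e.dst for e in EDGES if e.src == node)


if __name__ == "__main__":
    print("Actor reads from:", inputs_of("Actor"))
    print("Self-reflection writes to:", outputs_of("Self-reflection"))
```

Keeping the line style on each edge preserves the solid/dashed distinction from the diagram, so the dashed feedback path can be filtered out separately if needed.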

## Memory System

- **Short-term memory**: Trajectory (yellow block)

- **Long-term memory**: Experience (yellow block)
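One way to picture the two memory blocks is as a pair of stores with different lifetimes. The sketch below is an assumption-laden illustration, not something the diagram specifies: it assumes the trajectory is cleared at the start of each episode while experience persists, and the class and method names (`AgentMemory`, `remember_step`, `add_reflection`, `start_new_episode`, `actor_context`) are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class AgentMemory:
    # Short-term memory: the current trajectory (assumed to be per-episode).
    trajectory: List[str] = field(default_factory=list)
    # Long-term memory: reflective text accumulated across episodes.
    experience: List[str] = field(default_factory=list)

    def remember_step(self, item: str) -> None:
        """Append one item to the short-term trajectory."""
        self.trajectory.append(item)

    def add_reflection(self, text: str) -> None:
        """Store reflective text in long-term experience."""
        self.experience.append(text)

    def start_new_episode(self) -> None:
        """Clear short-term memory only; experience is kept (assumption)."""
        self.trajectory.clear()

    def actor_context(self) -> Dict[str, List[str]]:
        """Return copies of both memories, the two inputs the Actor reads."""
        return {
            "trajectory": list(self.trajectory),
            "experience": list(self.experience),
        }


if __name__ == "__main__":
    memory = AgentMemory()
    memory.remember_step("obs-1")
    memory.add_reflection("try a different tool")
    memory.start_new_episode()
    print(memory.actor_context())
```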

## Feedback Loops

1. **External feedback loop**:
   - Environment → Self-reflection (dashed)
   - Self-reflection → Reflective text → Experience

2. **Internal feedback loop**:
   - Self-reflection → Evaluator → Trajectory

## Color Coding

- **Light blue blocks**: Language models (LM components)

- **Yellow blocks**: Memory systems (short/long-term)

## Flow Diagram

```
Environment
│
├─ Obs/Reward → Agent
│
Agent
├─ Self-reflection (LM)
│  ├─ External feedback (dashed)
│  └─ Reflective text (dashed) → Experience
├─ Evaluator (LM)
│  └─ Internal feedback → Trajectory
├─ Trajectory (short-term memory)
├─ Experience (long-term memory)
└─ Actor (LM)
   ├─ Inputs: Trajectory + Experience
   └─ Action (solid) → Environment
```

## Critical Connections

1. **Self-reflection** processes both external and internal feedback

2. **Evaluator** converts feedback into trajectory data

3. **Actor** integrates short-term (trajectory) and long-term (experience) memory

4. **Experience** serves as the persistent memory repository

This architecture describes an iterative learning loop in which environmental interactions inform both immediate actions (through the trajectory) and long-term behavioral adaptation (through experience).
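The loop can be sketched as a single step function that wires the five subsystems together in the order this analysis describes. This is only an illustration under stated assumptions: the three LM components are replaced by plain Python stubs, and every name here (`run_step`, `self_reflect`, `evaluate`, `act`) is hypothetical rather than taken from the diagram.

```python
from typing import Callable, List, Tuple


def run_step(
    external_feedback: str,
    trajectory: List[str],
    experience: List[str],
    self_reflect: Callable[[str], Tuple[str, str]],
    evaluate: Callable[[str], str],
    act: Callable[[List[str], List[str]], str],
) -> str:
    """Run one pass through the agent, following the arrows as described."""
    # Self-reflection consumes external feedback and emits reflective text
    # plus internal feedback.
    reflective_text, internal_feedback = self_reflect(external_feedback)
    # Reflective text is stored in long-term memory (Experience).
    experience.append(reflective_text)
    # The Evaluator turns internal feedback into trajectory data
    # (short-term memory).
    trajectory.append(evaluate(internal_feedback))
    # The Actor reads both memories and produces the next action.
    return act(trajectory, experience)


# Stub components standing in for the LM blocks (assumptions, not real models).
def self_reflect(feedback: str) -> Tuple[str, str]:
    return f"reflection on: {feedback}", f"internal: {feedback}"


def evaluate(internal_feedback: str) -> str:
    return f"evaluated({internal_feedback})"


def act(trajectory: List[str], experience: List[str]) -> str:
    return (
        f"action using {len(trajectory)} trajectory item(s) "
        f"and {len(experience)} reflection(s)"
    )


if __name__ == "__main__":
    trajectory: List[str] = []
    experience: List[str] = []
    print(run_step("reward=0", trajectory, experience, self_reflect, evaluate, act))
```

Passing the components in as callables keeps the wiring separate from the models, so each stub could be swapped for a real model call without changing the step logic.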