TECHNICAL ASSET FINGERPRINT

5ee0ae2a8c0fe38692eaefda

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Diagram: Knowledge Distillation Strategies

### Overview

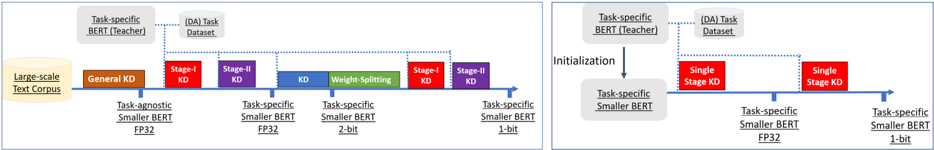

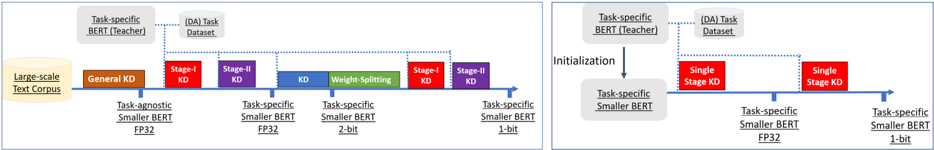

The image presents two diagrams illustrating different knowledge distillation strategies for BERT models. The left diagram shows a multi-stage knowledge distillation process, while the right diagram depicts a single-stage approach. Both diagrams highlight the transfer of knowledge from a larger "teacher" BERT model to a smaller "student" BERT model.

### Components/Axes

**Left Diagram:**

* **Horizontal Axis:** Represents the progression of knowledge distillation stages.

  * **Labels along the axis:**

    * "Task-agnostic Smaller BERT FP32"

    * "Task-specific Smaller BERT FP32"

    * "Task-specific Smaller BERT 2-bit"

    * "Task-specific Smaller BERT 1-bit"

* **Data Source:** "Large-scale Text Corpus" (represented as a cylinder on the left)

* **Teacher Model:** "Task-specific BERT (Teacher)" (top-left, in a rounded rectangle)

* **(DA) Task Dataset:** "(DA) Task Dataset" (top-center, in a rounded rectangle)

* **Knowledge Distillation Stages (represented as colored rectangles):**

  * "General KD" (brown)

  * "Stage-I KD" (red)

  * "Stage-II KD" (purple)

  * "KD Weight-Splitting" (green)

  * "Stage-I KD" (red)

  * "Stage-II KD" (purple)

**Right Diagram:**

* **Vertical Arrow:** "Initialization" (indicating the transfer of knowledge from the teacher to the student)

* **Teacher Model:** "Task-specific BERT (Teacher)" (top-left, in a rounded rectangle)

* **Student Model:** "Task-specific Smaller BERT" (below the teacher model, in a rounded rectangle)

* **Horizontal Axis:** Represents the progression of knowledge distillation stages.

  * **Labels along the axis:**

    * "Task-specific Smaller BERT FP32"

    * "Task-specific Smaller BERT 1-bit"

* **Knowledge Distillation Stages (represented as colored rectangles):**

  * "Single Stage KD" (red)

  * "Single Stage KD" (red)

### Detailed Analysis

**Left Diagram:**

1. **Data Source:** The process begins with a "Large-scale Text Corpus."

2. **General KD:** The first stage involves "General KD," resulting in a "Task-agnostic Smaller BERT FP32."

3. **Multi-Stage KD:** Subsequent stages involve "Stage-I KD," "Stage-II KD," "KD Weight-Splitting," "Stage-I KD," and "Stage-II KD," leading to increasingly compressed models: "Task-specific Smaller BERT FP32," "Task-specific Smaller BERT 2-bit," and finally "Task-specific Smaller BERT 1-bit."

4. **Teacher and Dataset:** The "Task-specific BERT (Teacher)" and "(DA) Task Dataset" are connected to the KD stages via dotted lines, indicating their role in guiding the distillation process.

**Right Diagram:**

1. **Initialization:** The "Task-specific Smaller BERT" is initialized from the "Task-specific BERT (Teacher)."

2. **Single-Stage KD:** Two "Single Stage KD" steps are shown, resulting in "Task-specific Smaller BERT FP32" and "Task-specific Smaller BERT 1-bit."

3. **Teacher and Dataset:** The "Task-specific BERT (Teacher)" and "(DA) Task Dataset" are connected to the KD stages via dotted lines, indicating their role in guiding the distillation process.

### Key Observations

* The left diagram illustrates a more complex, multi-stage knowledge distillation process, while the right diagram shows a simpler, single-stage approach.

* Both diagrams aim to compress a larger "teacher" BERT model into a smaller "student" BERT model, and both reduce the student's weight precision from FP32 to 1-bit.

* The "Task-specific BERT (Teacher)" and "(DA) Task Dataset" are crucial components in both distillation strategies.

### Interpretation

The diagrams demonstrate two different strategies for knowledge distillation, a technique used to transfer knowledge from a large, complex model (the teacher) to a smaller, more efficient model (the student). The multi-stage approach (left) allows for finer-grained control over the distillation process, potentially leading to better performance in some cases. The single-stage approach (right) is simpler and may be more suitable for scenarios where computational resources are limited. The progression from FP32 to 1-bit models indicates a focus on extreme model compression, likely for deployment on resource-constrained devices. The use of a "Task-specific BERT (Teacher)" and "(DA) Task Dataset" suggests that the distillation process is tailored to a specific task, which can improve the performance of the smaller model on that task.
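To make the FP32-to-1-bit progression concrete, the following minimal sketch estimates weight storage at each precision shown in the diagram. The parameter count is a hypothetical value chosen for illustration (roughly BERT-base scale); the diagram does not state the student's size, and the estimate ignores scaling factors and any layers kept at higher precision.

```python
# Hypothetical parameter count, used only for illustration; not taken from the diagram.
NUM_PARAMS = 110_000_000


def weight_storage_mb(num_params: int, bits_per_weight: int) -> float:
    """Return the storage needed for the weights alone, in megabytes (10**6 bytes)."""
    return num_params * bits_per_weight / 8 / 1_000_000


if __name__ == "__main__":
    # Precisions named on the diagram's axis labels.
    for label, bits in [("FP32", 32), ("2-bit", 2), ("1-bit", 1)]:
        print(f"{label:>5}: {weight_storage_mb(NUM_PARAMS, bits):.2f} MB")
```

Under these assumptions the weights shrink from 440 MB at FP32 to 27.5 MB at 2-bit and 13.75 MB at 1-bit, a 32x reduction end to end.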

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Knowledge Distillation Pipeline for BERT Models

### Overview

The image presents two diagrams illustrating knowledge distillation (KD) pipelines for BERT models. Both diagrams depict a process of transferring knowledge from a larger, task-specific BERT model (the "Teacher") to smaller BERT models. The diagrams differ in the stages and methods used for distillation. The diagrams are arranged side-by-side for comparison.

### Components/Axes

The diagrams consist of the following components:

* **Large-scale Text Corpus:** A yellow oval representing the initial data source.

* **Task-specific BERT (Teacher):** A light blue rectangle representing the larger, pre-trained model.

* **Task Dataset:** A light blue rectangle representing the dataset used for task-specific fine-tuning.

* **Task-agnostic Smaller BERT FP32:** A green rectangle representing the initial smaller model.

* **Task-specific Smaller BERT FP32:** A green rectangle representing the smaller model fine-tuned for a specific task.

* **Task-specific Smaller BERT 2-bit:** A green rectangle representing a quantized smaller model.

* **Task-specific Smaller BERT 1-bit:** A green rectangle representing a further quantized smaller model.

* **General KD:** A yellow rectangle representing the initial knowledge distillation stage.

* **Stage-I KD:** A red rectangle representing the first stage of knowledge distillation.

* **Stage-II KD:** A red rectangle representing the second stage of knowledge distillation.

* **Weight Splitting:** A green rectangle representing a method of model compression.

* **Initialization:** A label indicating the initialization process in the second diagram.

* **Single Stage KD:** A red rectangle representing a single-stage knowledge distillation process.

* **Dotted Arrows:** Represent the flow of knowledge or data between components.

* **Horizontal Lines:** Represent the timeline or sequence of steps.

### Detailed Analysis / Content Details

**Diagram 1 (Left):**

1. **Initial Stage:** A "Large-scale Text Corpus" feeds into a "Task-agnostic Smaller BERT FP32" model.

2. **General KD:** The "Task-specific BERT (Teacher)" transfers knowledge via "General KD" to the "Task-agnostic Smaller BERT FP32".

3. **Stage-I KD:** The "Task-specific BERT (Teacher)" and "Task Dataset" transfer knowledge via "Stage-I KD" to a "Task-specific Smaller BERT FP32".

4. **Stage-II KD:** The "Task-specific BERT (Teacher)" and "Task Dataset" transfer knowledge via "Stage-II KD" to the same "Task-specific Smaller BERT FP32".

5. **Weight Splitting:** The "Task-specific Smaller BERT FP32" undergoes "Weight Splitting" to create a "Task-specific Smaller BERT 2-bit".

6. **Stage-II KD (again):** The "Task-specific BERT (Teacher)" and "Task Dataset" transfer knowledge via "Stage-II KD" to a "Task-specific Smaller BERT 1-bit".

**Diagram 2 (Right):**

1. **Initialization:** The "Task-specific BERT (Teacher)" initializes a "Task-specific Smaller BERT FP32".

2. **Single Stage KD:** The "Task-specific BERT (Teacher)" and "Task Dataset" transfer knowledge via "Single Stage KD" to the "Task-specific Smaller BERT FP32".

3. **Single Stage KD (again):** The "Task-specific BERT (Teacher)" and "Task Dataset" transfer knowledge via "Single Stage KD" to a "Task-specific Smaller BERT 1-bit".

### Key Observations

* The first diagram employs a multi-stage distillation process with weight splitting for model compression.

* The second diagram uses a simpler, single-stage distillation process.

* Both diagrams aim to create smaller, task-specific BERT models from a larger teacher model.

* Quantization is used in both diagrams to further reduce model size: the first passes through 2-bit and then 1-bit models, while the second goes directly to a 1-bit model.

* The diagrams visually emphasize the sequential nature of the knowledge transfer process.
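The "2-bit" and "1-bit" labels refer to low-precision weights. The diagram does not say which quantizer is used, so the sketch below is only an assumption: a sign-based binarizer scaled by the mean absolute weight, and a symmetric uniform quantizer with `2**bits` levels. The function names `binarize` and `quantize_uniform` are hypothetical.

```python
def binarize(weights):
    """1-bit sketch: keep each weight's sign, scaled by the mean absolute value."""
    alpha = sum(abs(w) for w in weights) / len(weights)
    return [alpha if w >= 0 else -alpha for w in weights]


def quantize_uniform(weights, bits):
    """Snap each weight to one of 2**bits evenly spaced levels in [-m, m]."""
    m = max(abs(w) for w in weights)
    if m == 0:
        return [0.0 for _ in weights]
    levels = 2 ** bits
    step = 2 * m / (levels - 1)
    # Index of the nearest level, then map the index back to a weight value.
    return [-m + round((w + m) / step) * step for w in weights]


if __name__ == "__main__":
    example = [0.5, -0.25, 0.75, -1.0]
    print("1-bit:", binarize(example))
    print("2-bit:", quantize_uniform(example, 2))
```

With 2 bits each weight takes one of at most four values; with 1 bit only the sign survives, which is why distillation is typically needed to recover accuracy afterwards.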

### Interpretation

These diagrams illustrate two different approaches to knowledge distillation for BERT models. The first approach (left) is more complex, involving multiple stages of distillation and weight splitting to achieve a highly compressed model. This approach might be suitable when model size is a critical constraint. The second approach (right) is simpler and more direct, potentially offering a faster and easier way to distill knowledge into a smaller model. The choice between the two approaches likely depends on the specific requirements of the application, such as the desired level of compression, the available computational resources, and the acceptable trade-off between model size and performance. The diagrams highlight the flexibility of knowledge distillation as a technique for adapting large pre-trained models to resource-constrained environments. The use of "FP32", "2-bit", and "1-bit" indicates a focus on reducing the precision of the model weights to achieve compression. The dotted arrows suggest a transfer of information, likely in the form of soft targets or intermediate representations, from the teacher model to the student model.
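If the dotted arrows do carry soft targets, one common formulation is a temperature-softened KL divergence between teacher and student output distributions. The sketch below is written under that assumption; the diagram does not specify the loss, and the function names and default temperature are hypothetical.

```python
import math


def log_softmax(logits, temperature=1.0):
    """Numerically stable log-softmax of logits divided by a temperature."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    log_total = m + math.log(sum(math.exp(z - m) for z in scaled))
    return [z - log_total for z in scaled]


def soft_target_kl(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) over temperature-softened distributions."""
    log_p = log_softmax(teacher_logits, temperature)
    log_q = log_softmax(student_logits, temperature)
    return sum(math.exp(lp) * (lp - lq) for lp, lq in zip(log_p, log_q))


if __name__ == "__main__":
    teacher = [2.0, 1.0, 0.1]
    print(soft_target_kl(teacher, teacher))          # student matches the teacher
    print(soft_target_kl(teacher, [0.1, 1.0, 2.0]))  # student disagrees
```

The loss is zero when the student reproduces the teacher's distribution and grows as the two diverge; a higher temperature flattens both distributions so that low-probability classes contribute more to the signal.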

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Technical Diagram: Knowledge Distillation (KD) Process Comparison

### Overview

The image displays two side-by-side flow diagrams illustrating different strategies for Knowledge Distillation (KD) applied to BERT models. The left diagram depicts a **multi-stage, progressive distillation** pipeline, while the right diagram shows a **simplified, single-stage** approach. Both diagrams trace the transformation of a model from a large teacher to a smaller, quantized student using a large-scale text corpus and a task-specific dataset.

### Components/Axes

The diagrams are structured as horizontal process flows with labeled boxes and directional arrows. Key components include:

**Common Elements (Both Diagrams):**

* **Input Data Sources:**

  * `Large-scale Text Corpus` (Yellow box, far left)

  * `(DA) Task Dataset` (Light blue box, top center)

* **Teacher Model:**

  * `Task-specific BERT (Teacher)` (Grey box, top left)

* **Student Model Progression:** Represented by a horizontal blue arrow timeline, with model states indicated below it.

* **Color-Coded Legend (Bottom of each diagram):**

  * **Orange:** `Task-agnostic Smaller BERT FP32`

  * **Purple:** `Task-specific Smaller BERT FP32`

  * **Blue:** `Task-specific Smaller BERT 2-bit`

  * **Red:** `Task-specific Smaller BERT 1-bit`

**Left Diagram - Multi-Stage Pipeline:**

* **Process Stages (Boxes on the timeline):**

  1. `General KD` (Orange box)

  2. `Stage-I KD` (Purple box)

  3. `Stage-II KD` (Purple box)

  4. `KD` (Blue box)

  5. `Weight-Splitting` (Green box)

  6. `Stage-I KD` (Red box)

  7. `Stage-II KD` (Red box)

* **Model States (Below the timeline):**

  * Initial: `Task-agnostic Smaller BERT FP32` (Orange text)

  * After Stage-I/II KD: `Task-specific Smaller BERT FP32` (Purple text)

  * After Weight-Splitting: `Task-specific Smaller BERT 2-bit` (Blue text)

  * Final: `Task-specific Smaller BERT 1-bit` (Red text)

**Right Diagram - Single-Stage Pipeline:**

* **Process Steps:**

  * `Initialization` (Arrow from Teacher to Student)

  * `Single Stage KD` (Red box, appears twice sequentially)

* **Model States:**

  * Initial (after Initialization): `Task-specific Smaller BERT FP32` (Purple text)

  * Final (after two Single Stage KD steps): `Task-specific Smaller BERT 1-bit` (Red text)

### Detailed Analysis

**Left Diagram (Multi-Stage) Flow:**

1. A `Task-specific BERT (Teacher)` and a `Large-scale Text Corpus` feed into a `General KD` stage, producing a `Task-agnostic Smaller BERT FP32`.

2. This model undergoes two sequential steps, `Stage-I KD` and `Stage-II KD`, using the `(DA) Task Dataset`, to become a `Task-specific Smaller BERT FP32`.

3. A `Weight-Splitting` operation (green box) converts the FP32 model into a `Task-specific Smaller BERT 2-bit` model.

4. This 2-bit model then goes through another two-stage distillation (`Stage-I KD` and `Stage-II KD`), again using the task dataset, to produce the final `Task-specific Smaller BERT 1-bit` model.

**Right Diagram (Single-Stage) Flow:**

1. The `Task-specific BERT (Teacher)` is used for `Initialization` of a `Task-specific Smaller BERT FP32` model.

2. This FP32 model is directly distilled via a `Single Stage KD` process (using the task dataset) into a `Task-specific Smaller BERT 1-bit` model.

3. The diagram shows a second `Single Stage KD` box, suggesting either an iterative refinement or a representation of the same process applied to achieve the final 1-bit model.
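The two flows described above can be written down as plain data to compare their lengths and end states. This is only a transcription of the steps listed in this analysis, not code from the source of the diagram; the `Step` class and `summarize` helper are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    operations: tuple  # KD or compression operations applied in this step
    result: str        # model state produced by the step


MULTI_STAGE = (
    Step(("General KD",), "Task-agnostic Smaller BERT FP32"),
    Step(("Stage-I KD", "Stage-II KD"), "Task-specific Smaller BERT FP32"),
    Step(("Weight-Splitting",), "Task-specific Smaller BERT 2-bit"),
    Step(("Stage-I KD", "Stage-II KD"), "Task-specific Smaller BERT 1-bit"),
)

SINGLE_STAGE = (
    Step(("Initialization",), "Task-specific Smaller BERT FP32"),
    Step(("Single Stage KD",), "Task-specific Smaller BERT 1-bit"),
)


def summarize(pipeline):
    """Return (number of operations, ordered list of model states)."""
    total_ops = sum(len(step.operations) for step in pipeline)
    return total_ops, [step.result for step in pipeline]


if __name__ == "__main__":
    for name, pipeline in [("multi-stage", MULTI_STAGE), ("single-stage", SINGLE_STAGE)]:
        ops, states = summarize(pipeline)
        print(f"{name}: {ops} operations -> {' -> '.join(states)}")
```

Both pipelines end in the same model state; the multi-stage one reaches it through more operations and an intermediate 2-bit model.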

### Key Observations

1. **Complexity vs. Simplicity:** The left diagram outlines a complex, 7-step pipeline involving intermediate model states (FP32, 2-bit) and specialized operations like "Weight-Splitting." The right diagram proposes a much simpler 2-step process (Initialization + Single Stage KD) to reach the same final model format (1-bit).

2. **Task Specificity:** The multi-stage approach starts with a *task-agnostic* model and gradually specializes it. The single-stage approach begins with a *task-specific* model from the outset.

3. **Quantization Path:** The multi-stage path explicitly includes a 2-bit intermediate model, while the single-stage path appears to quantize directly from FP32 to 1-bit.

4. **Data Usage:** Both methods utilize the `(DA) Task Dataset` for the task-specific distillation stages. The multi-stage method also uses the `Large-scale Text Corpus` for the initial General KD.

### Interpretation

These diagrams contrast two philosophies for creating efficient, quantized BERT models:

* **The Multi-Stage Strategy (Left)** represents a **gradual, controlled compression**. It prioritizes stability by first creating a strong task-agnostic student, then specializing it, then compressing it in measured steps (FP32 -> 2-bit -> 1-bit) with distillation at each phase. This likely aims to preserve performance by minimizing the "shock" of compression, but at the cost of significant computational overhead and pipeline complexity.

* **The Single-Stage Strategy (Right)** represents an **aggressive, end-to-end compression**. It bets on the ability of a single, well-initialized distillation step to directly transfer knowledge from the large teacher to the highly quantized (1-bit) student. This is far more efficient and simpler to implement but carries a higher risk of performance degradation, as the student must learn task specifics and extreme quantization simultaneously.

The core investigative question posed by this comparison is: **Is the elaborate, multi-stage process necessary to maintain model performance when creating a 1-bit BERT, or can a smarter single-stage distillation achieve comparable results with much greater efficiency?** The diagrams set up an experiment to test the trade-off between process complexity and final model efficacy.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Knowledge Distillation Framework for BERT Models

### Overview

The image compares two knowledge distillation (KD) frameworks for training smaller BERT models using a large-scale text corpus and task-specific datasets. The left diagram illustrates a **multi-stage KD approach** with progressive weight splitting, while the right diagram shows a **single-stage KD approach** with direct initialization. Both frameworks use a "Task-specific BERT (Teacher)" as the knowledge source.

---

### Components/Axes

#### Left Diagram (Multi-Stage KD)

1. **Input**:

   - **Large-scale Text Corpus** (beige box, leftmost).

   - **Task-specific BERT (Teacher)** (gray box, top-left).

   - **(DA) Task Dataset** (gray box, top-center).

2. **Stages**:

   - **General KD** (orange box, first stage).

   - **Stage-I KD** (red box, second stage).

   - **Stage-II KD** (purple box, third stage).

   - **Weight-Splitting** (green box, fourth stage).

   - **Stage-I KD** (red box, fifth stage).

   - **Stage-II KD** (purple box, sixth stage).

3. **Output**:

   - **Task-agnostic Smaller BERT FP32** (blue box, post-General KD).

   - **Task-specific Smaller BERT FP32** (blue box, post-Weight-Splitting).

   - **Task-specific Smaller BERT 2-bit** (blue box, post-Stage-I KD).

   - **Task-specific Smaller BERT 1-bit** (blue box, final output).

#### Right Diagram (Single-Stage KD)

1. **Input**:

   - **Task-specific BERT (Teacher)** (gray box, top-left).

   - **(DA) Task Dataset** (gray box, top-center).

2. **Process**:

   - **Initialization** (gray arrow, top-center).

   - **Single Stage KD** (red box, central).

3. **Output**:

   - **Task-specific Smaller BERT FP32** (blue box, post-Initialization).

   - **Task-specific Smaller BERT 1-bit** (blue box, final output).

---

### Detailed Analysis

#### Left Diagram (Multi-Stage KD)

- **Flow**:

  1. The large-scale text corpus is processed by the **Task-agnostic Smaller BERT FP32** (blue box).

  2. **General KD** (orange) transfers knowledge from the teacher to the student.

  3. **Stage-I KD** (red) and **Stage-II KD** (purple) refine the model using the task dataset.

  4. **Weight-Splitting** (green) reduces model size by splitting weights.

  5. A second **Stage-I KD** (red) and **Stage-II KD** (purple) further optimize the 2-bit model.

  6. Final output: **Task-specific Smaller BERT 1-bit** (blue).

- **Key Features**:

  - Progressive refinement through **two stages of KD** (Stage-I and Stage-II).

  - **Weight-Splitting** introduces a 2-bit model before final 1-bit quantization.

  - Uses the **task dataset** at multiple stages for task-specific adaptation.

#### Right Diagram (Single-Stage KD)

- **Flow**:

  1. The task dataset initializes the **Task-specific Smaller BERT FP32** (blue).

  2. **Single Stage KD** (red) transfers knowledge directly from the teacher.

  3. Final output: **Task-specific Smaller BERT 1-bit** (blue).

- **Key Features**:

  - Simplified pipeline with **no intermediate stages**.

  - Direct initialization and single KD step.

  - Final model is 1-bit, skipping 2-bit quantization.

---

### Key Observations

1. **Complexity**:

   - The multi-stage approach (left) is more complex, involving **six stages** (including weight splitting).

   - The single-stage approach (right) is streamlined, with **two stages** (initialization + KD).

2. **Quantization**:

   - Both methods end with a **1-bit task-specific model**, but the multi-stage approach includes an intermediate **2-bit model**.

3. **Data Usage**:

   - The multi-stage method leverages the **task dataset** at multiple stages for iterative refinement.

   - The single-stage method uses the task dataset only during initialization.

4. **Color Coding**:

   - **Red**: Stage-I KD (left) and Single Stage KD (right).

   - **Purple**: Stage-II KD (left).

   - **Green**: Weight-Splitting (left).

   - **Blue**: Task-specific Smaller BERT models (FP32, 2-bit, 1-bit).

---

### Interpretation

1. **Trade-offs**:

   - The **multi-stage approach** likely achieves higher accuracy by iteratively refining the model but requires more computational resources.

   - The **single-stage approach** is more efficient but may sacrifice some performance due to fewer optimization steps.

2. **Weight-Splitting**:

   - The green "Weight-Splitting" step in the left diagram suggests a focus on **model compression** before final quantization.

3. **Task-Specific Adaptation**:

   - Both methods emphasize task-specific adaptation, but the multi-stage approach integrates it more deeply across stages.

4. **Final Output**:

   - Both frameworks produce a **1-bit task-specific model**, but the multi-stage method includes an intermediate 2-bit model, indicating a staged quantization strategy.

---

### Conclusion

The diagram highlights two distinct strategies for knowledge distillation in BERT models. The multi-stage approach prioritizes iterative refinement and compression, while the single-stage method emphasizes simplicity and efficiency. The choice between them depends on the balance between computational cost and model performance.
