
## Diagram: Knowledge Distillation Pipeline for BERT Models

### Overview

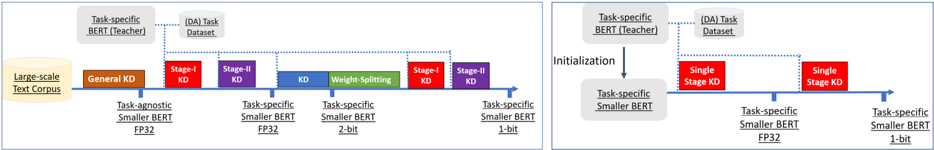

The image presents two diagrams, arranged side by side for comparison, that illustrate knowledge distillation (KD) pipelines for BERT models. Both depict the transfer of knowledge from a larger, task-specific BERT model (the "Teacher") to smaller BERT student models, but they differ in the stages and methods used for distillation.

### Components/Axes

The diagrams consist of the following components:

* **Large-scale Text Corpus:** A yellow oval representing the initial data source.

* **Task-specific BERT (Teacher):** A light blue rectangle representing the larger model, already fine-tuned for the target task.

* **Task Dataset:** A light blue rectangle representing the dataset used for task-specific fine-tuning.

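* **Task-agnostic Smaller BERT FP32:** A green rectangle representing the general-purpose smaller model, with weights stored in 32-bit floating point (FP32), before any task-specific distillation.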

* **Task-specific Smaller BERT FP32:** A green rectangle representing the smaller model fine-tuned for a specific task.

* **Task-specific Smaller BERT 2-bit:** A green rectangle representing the task-specific smaller model with weights quantized to 2 bits.

* **Task-specific Smaller BERT 1-bit:** A green rectangle representing the task-specific smaller model with weights quantized to 1 bit (binarized).

* **General KD:** A yellow rectangle representing the initial knowledge distillation stage.

* **Stage-I KD:** A red rectangle representing the first stage of knowledge distillation.

* **Stage-II KD:** A red rectangle representing the second stage of knowledge distillation.

* **Weight Splitting:** A green rectangle representing a step that derives a lower-bit model from a higher-precision one.

* **Initialization:** A label indicating the initialization process in the second diagram.

* **Single Stage KD:** A red rectangle representing a single-stage knowledge distillation process.

* **Dotted Arrows:** Represent the flow of knowledge or data between components.

* **Horizontal Lines:** Represent the timeline or sequence of steps.
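The "FP32", "2-bit", and "1-bit" labels refer to the precision of the student's weights. As a rough illustration, assuming common schemes from the quantization literature rather than the exact method in the figure, the sketch below binarizes weights to their sign scaled by the mean absolute value (1 bit) and ternarizes them to {-α, 0, +α} with the threshold rule from Ternary Weight Networks (one possible 2-bit scheme). The function names and the 0.7 threshold ratio are illustrative choices.

```python
from typing import List, Sequence

def binarize(weights: Sequence[float]) -> List[float]:
    """1-bit: keep each weight's sign, scaled by alpha = mean(|w|)."""
    alpha = sum(abs(w) for w in weights) / len(weights)
    return [alpha if w >= 0 else -alpha for w in weights]

def ternarize(weights: Sequence[float], threshold_ratio: float = 0.7) -> List[float]:
    """Ternary (one possible 2-bit scheme): map weights to {-alpha, 0, +alpha}.

    Weights with |w| <= delta become 0, where delta = threshold_ratio * mean(|w|);
    alpha is the mean magnitude of the weights that survive the threshold.
    """
    delta = threshold_ratio * sum(abs(w) for w in weights) / len(weights)
    kept = [abs(w) for w in weights if abs(w) > delta]
    alpha = sum(kept) / len(kept) if kept else 0.0
    return [alpha if w > delta else -alpha if w < -delta else 0.0 for w in weights]

weights = [0.5, -0.3, 0.1, -0.9]
print(binarize(weights))   # every entry is about +/-0.45 (mean |w|), sign preserved
print(ternarize(weights))  # about [0.7, 0.0, 0.0, -0.7]
```

In quantization-aware training, full-precision latent weights are typically kept and functions like these are applied in the forward pass; this sketch only shows the value mapping.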

### Detailed Analysis / Content Details

**Diagram 1 (Left):**

1. **Initial Stage:** A "Large-scale Text Corpus" feeds into a "Task-agnostic Smaller BERT FP32" model.

2. **General KD:** The "Task-specific BERT (Teacher)" transfers knowledge via "General KD" to the "Task-agnostic Smaller BERT FP32".

3. **Stage-I KD:** The "Task-specific BERT (Teacher)" and "Task Dataset" transfer knowledge via "Stage-I KD" to a "Task-specific Smaller BERT FP32".

4. **Stage-II KD:** The "Task-specific BERT (Teacher)" and "Task Dataset" transfer knowledge via "Stage-II KD" to the same "Task-specific Smaller BERT FP32".

5. **Weight Splitting:** The "Task-specific Smaller BERT FP32" undergoes "Weight Splitting" to create a "Task-specific Smaller BERT 2-bit".

6. **Stage-II KD (again):** The "Task-specific BERT (Teacher)" and "Task Dataset" transfer knowledge via "Stage-II KD" to a "Task-specific Smaller BERT 1-bit".
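The diagram does not show how "Weight Splitting" works internally. In BinaryBERT, for example, splitting turns a half-sized ternary (2-bit) model into a full-sized binary (1-bit) model by writing each ternary weight as the sum of two binary weights. Whether the figure's step works this way cannot be confirmed from the diagram alone; the sketch below only illustrates that underlying identity, is not BinaryBERT's exact splitting rule, and `split_ternary` is a hypothetical helper.

```python
from typing import List, Sequence, Tuple

def split_ternary(ternary: Sequence[float], alpha: float) -> Tuple[List[float], List[float]]:
    """Split weights in {-alpha, 0, +alpha} into two tensors in {-alpha/2, +alpha/2}.

    The element-wise sum of the two binary tensors reproduces the ternary input.
    """
    half = alpha / 2
    first: List[float] = []
    second: List[float] = []
    for w in ternary:
        if w > 0:    # +alpha = (+alpha/2) + (+alpha/2)
            pair = (half, half)
        elif w < 0:  # -alpha = (-alpha/2) + (-alpha/2)
            pair = (-half, -half)
        else:        # 0 = (+alpha/2) + (-alpha/2)
            pair = (half, -half)
        first.append(pair[0])
        second.append(pair[1])
    return first, second

a, b = split_ternary([0.7, 0.0, 0.0, -0.7], alpha=0.7)
print(a)  # [0.35, 0.35, 0.35, -0.35]
print(b)  # [0.35, -0.35, -0.35, -0.35]
```

Each split tensor holds only ±α/2, so it needs 1 bit per weight plus a shared scale; the price is twice as many weights, which is why BinaryBERT starts from a half-sized ternary model.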

**Diagram 2 (Right):**

1. **Initialization:** The "Task-specific BERT (Teacher)" initializes a "Task-specific Smaller BERT FP32".

2. **Single Stage KD:** The "Task-specific BERT (Teacher)" and "Task Dataset" transfer knowledge via "Single Stage KD" to the "Task-specific Smaller BERT FP32".

3. **Single Stage KD (again):** The "Task-specific BERT (Teacher)" and "Task Dataset" transfer knowledge via "Single Stage KD" to a "Task-specific Smaller BERT 1-bit".
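The diagram does not specify how the teacher "initializes" the smaller student. One common approach, used by DistilBERT (which takes one layer out of two from its teacher), is to copy a subset of the teacher's layers into a shallower student. A minimal sketch, assuming evenly spaced layer selection; `init_student_layers` is a hypothetical helper and the string "layers" stand in for real parameter blocks:

```python
from typing import List, Sequence, TypeVar

Layer = TypeVar("Layer")

def init_student_layers(teacher_layers: Sequence[Layer], num_student_layers: int) -> List[Layer]:
    """Pick evenly spaced teacher layers to initialize a shallower student.

    With a 12-layer teacher and a 6-layer student this keeps every other layer.
    In a real model the selected layers' parameters would be copied into the student.
    """
    if not 0 < num_student_layers <= len(teacher_layers):
        raise ValueError("student depth must be between 1 and the teacher's depth")
    step = len(teacher_layers) / num_student_layers
    return [teacher_layers[int(i * step)] for i in range(num_student_layers)]

teacher = [f"layer_{i}" for i in range(12)]  # stand-ins for a 12-layer teacher
print(init_student_layers(teacher, 6))
# ['layer_0', 'layer_2', 'layer_4', 'layer_6', 'layer_8', 'layer_10']
```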

### Key Observations

* The first diagram employs a multi-stage distillation process with weight splitting for model compression.

* The second diagram uses a simpler, single-stage distillation process.

* Both diagrams aim to create smaller, task-specific BERT models from a larger teacher model.

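* Quantization is used to reduce model size in both diagrams: the first passes through an intermediate 2-bit model before reaching a 1-bit model, while the second goes directly from an FP32 student to a 1-bit model.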

* The diagrams visually emphasize the sequential nature of the knowledge transfer process.
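To put the precision labels in concrete terms: weight storage scales linearly with the number of bits per weight, so moving from FP32 to 2-bit and 1-bit weights shrinks it by 16x and 32x, respectively. The sketch below assumes a hypothetical 10-million-parameter student and ignores overheads such as scaling factors or any parameters left at higher precision.

```python
def weight_storage_mb(num_params: int, bits_per_weight: int) -> float:
    """Megabytes (10^6 bytes) needed to store num_params weights at the given precision."""
    return num_params * bits_per_weight / 8 / 1e6

NUM_PARAMS = 10_000_000  # hypothetical student size, for illustration only

for label, bits in [("FP32", 32), ("2-bit", 2), ("1-bit", 1)]:
    size = weight_storage_mb(NUM_PARAMS, bits)
    print(f"{label:>5}: {size:6.2f} MB, {32 // bits}x compression vs. FP32")
```

Under these assumptions the weights take 40 MB in FP32, 2.5 MB at 2 bits, and 1.25 MB at 1 bit.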

### Interpretation

These diagrams illustrate two approaches to knowledge distillation for BERT models. The first (left) is more involved: it combines general and task-specific distillation stages with weight splitting and passes through a 2-bit model before reaching the 1-bit one. Because both pipelines end in a 1-bit student, the difference lies less in the final compression level than in how gradually it is reached. The second approach (right) initializes the student from the teacher and distills directly to 1 bit with a single-stage process, which is likely faster and simpler to run. The choice between the two likely depends on the application's requirements, such as the available training compute and the acceptable trade-off between model size and accuracy.

More broadly, the diagrams show how knowledge distillation can adapt large pre-trained models to resource-constrained environments. The labels "FP32", "2-bit", and "1-bit" indicate that compression comes largely from reducing the numerical precision of the model weights, not only from shrinking the architecture. The dotted arrows suggest a transfer of information from the teacher to the student, likely in the form of soft targets or intermediate representations.
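The two kinds of teacher signal mentioned above, soft targets and intermediate representations, can be sketched as loss terms. This assumes the standard soft-target loss of Hinton et al. (KL divergence between temperature-softened teacher and student distributions, scaled by T²) and a mean-squared error between hidden states, as in TinyBERT-style distillation; the figure does not state which losses its KD stages actually use, and the function names and default temperature are illustrative.

```python
import math
from typing import List, Sequence

def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled softmax, shifted by the max for numerical stability."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def soft_target_loss(student_logits: Sequence[float],
                     teacher_logits: Sequence[float],
                     temperature: float = 2.0) -> float:
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)  # teacher's soft targets
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2

def hidden_state_loss(student_hidden: Sequence[float],
                      teacher_hidden: Sequence[float]) -> float:
    """Mean squared error between hidden representations of equal size."""
    if len(student_hidden) != len(teacher_hidden):
        raise ValueError("hidden states must have the same size")
    squared = [(s - t) ** 2 for s, t in zip(student_hidden, teacher_hidden)]
    return sum(squared) / len(squared)

teacher_logits = [2.0, 1.0, 0.1]
print(soft_target_loss([0.0, 0.0, 0.0], teacher_logits))  # positive: student is uniform
print(hidden_state_loss([1.0, 2.0], [1.0, 4.0]))          # 2.0
```

In practice the student's hidden size is usually smaller than the teacher's, so TinyBERT-style methods first apply a learned projection to the student's hidden states; that step is omitted here.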