## Diagram: Comparison of Deductive and Inductive Learning Architectures

### Overview

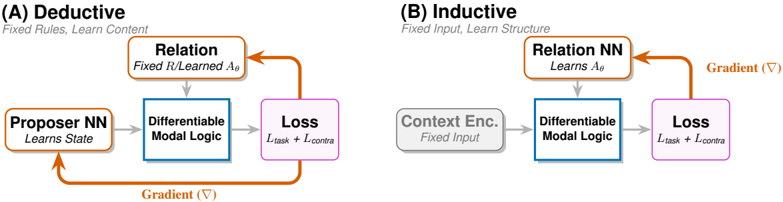

The image displays two side-by-side architectural diagrams, labeled (A) and (B), illustrating two distinct machine learning paradigms: a **Deductive** approach and an **Inductive** approach. Both diagrams share a similar flow structure with colored boxes representing components and arrows indicating data or gradient flow. The core difference lies in which component is fixed and which is learned.

### Components

The diagrams are composed of labeled rectangular boxes connected by directional arrows. Key components are color-coded:

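* **Orange Box:** Represents the "Proposer NN" in (A), a neural network component that learns.

* **Yellow Boxes:** Represent the relation component: "Relation" in (A) and "Relation NN" in (B). Only the latter is trained.

* **Gray Box:** Represents the "Context Enc." component in (B), which supplies a fixed input.

* **Blue Box:** Represents the "Differentiable Modal Logic" core.

* **Pink Box:** Represents the "Loss" function.

* **Gray Arrows:** Indicate the forward flow of data between components.

* **Orange Arrows:** Indicate the flow of gradients (∇) for backpropagation.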

**Diagram (A) - Deductive:**

* **Title:** (A) Deductive

* **Subtitle:** Fixed Rules, Learn Content

* **Components (from left to right):**

    1. **Proposer NN** (Orange box) - Sub-label: "Learns State"

    2. **Relation** (Yellow box) - Sub-label: "Fixed R/Learned A_R"

    3. **Differentiable Modal Logic** (Blue box)

    4. **Loss** (Pink box) - Sub-label: "L_task + L_contra"

* **Flow & Gradients:**

    * A gray arrow points from "Proposer NN" to "Differentiable Modal Logic".

    * A gray arrow points from "Relation" to "Differentiable Modal Logic".

    * A gray arrow points from "Differentiable Modal Logic" to "Loss".

    * An orange **Gradient (∇)** arrow loops from the "Loss" box back to the "Proposer NN" box.

**Diagram (B) - Inductive:**

* **Title:** (B) Inductive

* **Subtitle:** Fixed Input, Learn Structure

* **Components (from left to right):**

    1. **Context Enc.** (Gray box) - Sub-label: "Fixed Input"

    2. **Relation NN** (Yellow box) - Sub-label: "Learns A_R"

    3. **Differentiable Modal Logic** (Blue box)

    4. **Loss** (Pink box) - Sub-label: "L_task + L_contra"

* **Flow & Gradients:**

    * A gray arrow points from "Context Enc." to "Differentiable Modal Logic".

    * A gray arrow points from "Relation NN" to "Differentiable Modal Logic".

    * A gray arrow points from "Differentiable Modal Logic" to "Loss".

    * An orange **Gradient (∇)** arrow loops from the "Loss" box back to the "Relation NN" box.

### Detailed Analysis

The diagrams contrast two learning strategies within a framework that uses differentiable modal logic.

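* **In the Deductive (A) framework:** The system's "rules" come from the Relation box, whose sub-label "Fixed R/Learned A_R" suggests either a hand-specified relation R or an A_R that was learned beforehand. No gradient arrow reaches this box, so the relation is not updated in this mode. The learning target is the "content" or "state" generated by the **Proposer NN**, and the gradient signal from the loss function updates only the Proposer NN.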

* **In the Inductive (B) framework:** The "input" (from the Context Encoder) is fixed. The learning target is the "structure" of the relations themselves, represented by **A_R**, which the **Relation NN** learns. The gradient signal from the loss function updates only the Relation NN.

* **Common Elements:** Both architectures feed a state input (the Proposer NN output in (A), the context encoding in (B)) and relation information into a **Differentiable Modal Logic** module, and both are optimized with the same composite loss, **L_task + L_contra**. The figure does not expand the subscripts: L_task is presumably a task-specific loss, while L_contra could denote either a contrastive loss or a contradiction penalty.
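The difference between the two modes can be sketched as a single forward pass with a switch that selects which parameter group receives updates. Everything below is a hypothetical illustration, not the method behind the figure: the product-based relaxation in `soft_box`, the squared-error stand-in for the composite loss, the names `state_logits` and `relation_logits`, and the finite-difference gradients (used only to keep the sketch dependency-free) are all assumptions.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def soft_box(state, relation):
    # Assumed relaxation of "necessarily": for each world w, the product
    # over w' of (1 - R[w][w'] * (1 - state[w'])). Not taken from the figure.
    return [math.prod(1.0 - r * (1.0 - s) for r, s in zip(row, state))
            for row in relation]


def loss(params, target):
    # Stand-in for L_task + L_contra; the figure defines neither term.
    n = len(params["state_logits"])
    state = [sigmoid(z) for z in params["state_logits"]]
    flat = [sigmoid(z) for z in params["relation_logits"]]
    relation = [flat[i * n:(i + 1) * n] for i in range(n)]
    box = soft_box(state, relation)
    return sum((b - t) ** 2 for b, t in zip(box, target))


def train(params, trainable, target, steps=200, lr=0.5, eps=1e-5):
    # Gradient descent that updates only the group named `trainable`;
    # the other group is read in the forward pass but never modified.
    params = {name: list(values) for name, values in params.items()}
    group = params[trainable]
    for _ in range(steps):
        grads = []
        for i in range(len(group)):
            old = group[i]
            group[i] = old + eps
            up = loss(params, target)
            group[i] = old - eps
            down = loss(params, target)
            group[i] = old  # restore before estimating the next gradient
            grads.append((up - down) / (2 * eps))
        for i, g in enumerate(grads):
            group[i] -= lr * g
    return params


init = {"state_logits": [0.0, 0.0], "relation_logits": [0.0, 0.0, 0.0, 0.0]}
target = [0.9, 0.9]

deductive = train(init, "state_logits", target)     # (A): learn content
inductive = train(init, "relation_logits", target)  # (B): learn structure

print(loss(init, target), loss(deductive, target), loss(inductive, target))
```

In this toy setup the forward computation is identical in both calls; only the `trainable` argument changes, which mirrors the different destination of the gradient arrow in the two diagrams.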

### Key Observations

1. **Symmetry and Contrast:** The diagrams are structurally symmetric, which highlights the conceptual inversion. Each trains exactly one component and holds the other input fixed: (A) trains the Proposer NN against a relation that receives no gradient, while (B) trains the Relation NN against a fixed context encoding.

2. **Gradient Target:** The most critical visual cue is the destination of the orange **Gradient (∇)** arrow. In (A), it points to the **Proposer NN**; in (B), it points to the **Relation NN**. This explicitly shows which part of the system is being trained.

3. **Role of Modal Logic:** The "Differentiable Modal Logic" block is central and unchanged in both diagrams, suggesting it is the core reasoning engine that operates on the provided inputs and relations, regardless of the learning paradigm.
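The gradient targets described in observation 2 can be written as update rules. The symbols $\theta_P$ (Proposer NN parameters), $\theta_R$ (Relation NN parameters), and $\eta$ (learning rate) are notation introduced here for illustration; the figure itself shows only the ∇ arrows and the loss label.

$$
\begin{aligned}
\mathcal{L} &= L_{\text{task}} + L_{\text{contra}} \\
\text{(A) Deductive:}\quad \theta_P &\leftarrow \theta_P - \eta\,\nabla_{\theta_P}\mathcal{L}, \qquad \text{relation held fixed} \\
\text{(B) Inductive:}\quad \theta_R &\leftarrow \theta_R - \eta\,\nabla_{\theta_R}\mathcal{L}, \qquad \text{context encoding held fixed}
\end{aligned}
$$

Plain gradient descent is assumed only for concreteness; the figure does not say which optimizer is used.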

### Interpretation

The two diagrams map onto the classical distinction between deductive and inductive reasoning:

* **Deductive Learning (A):** This approach mimics deductive reasoning, where general rules (Relation) are applied to specific instances (State from Proposer NN) to derive conclusions. The system learns to generate better *instances* or *content* that satisfy the fixed rules. This is akin to learning to solve problems within a known logical framework.

* **Inductive Learning (B):** This approach mimics inductive reasoning, where patterns or rules are inferred from specific, fixed examples (Context). The system learns the *structure* of the rules themselves (Relation NN). This is akin to discovering the underlying principles or relationships from data.

The shared use of "Differentiable Modal Logic" and a combined loss function suggests an attempt to bridge symbolic reasoning (modal logic) with neural learning. The framework appears designed to be flexible, allowing the same core logic module to support both top-down (deductive) and bottom-up (inductive) learning by changing which component is parameterized and updated via gradient descent. The meaning of `L_contra` is not stated in the figure: if it is a contrastive loss, it would hint at a self-supervised or representation learning component in the training objective, whereas if it penalizes logical contradictions, it would act as a consistency constraint on the learned state or relation.