## Line Chart: Model Accuracy Over Time (t)

### Overview

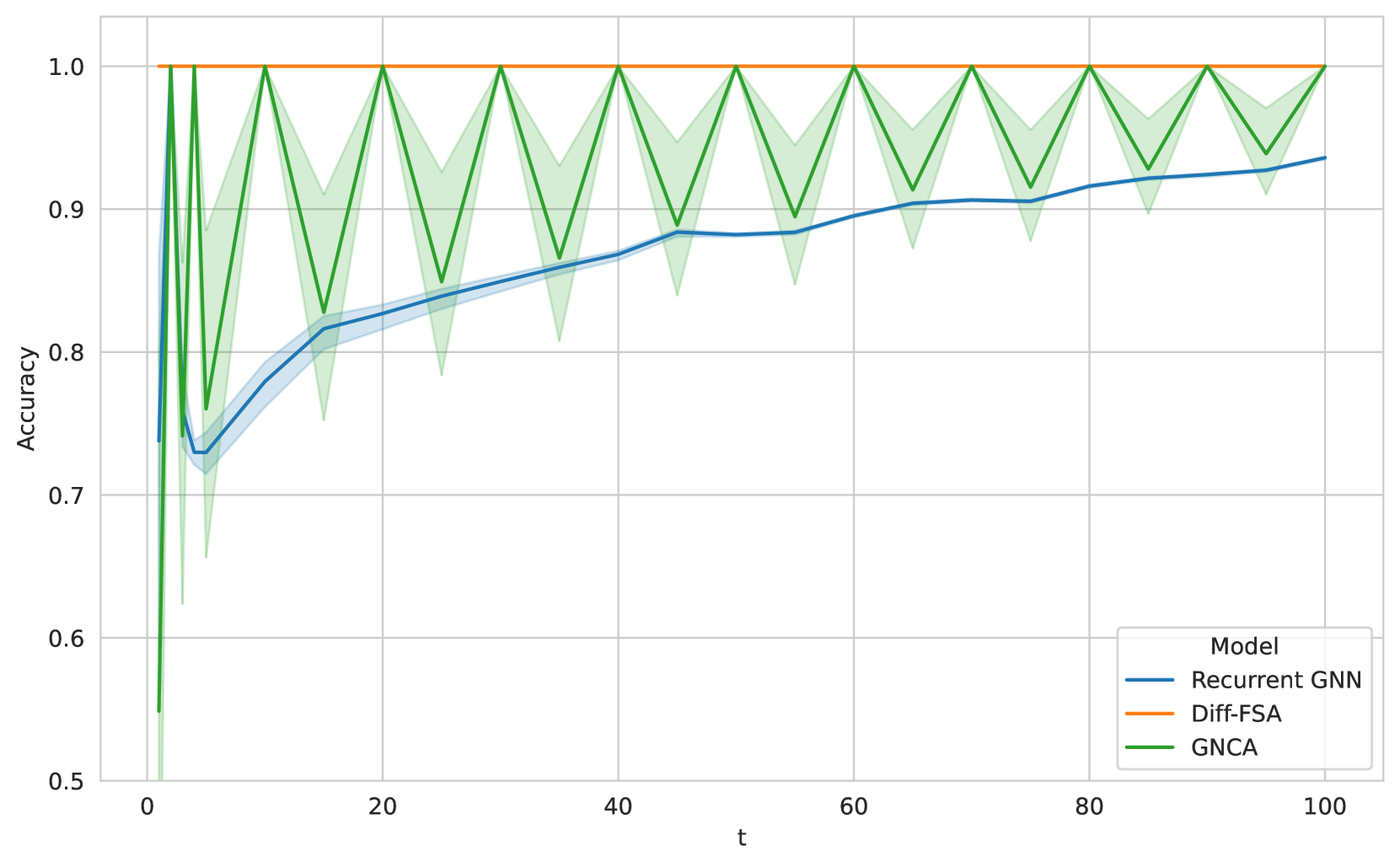

This image is a line chart comparing the accuracy of three models (Recurrent GNN, Diff-FSA, and GNCA) over time steps t from 0 to 100. The Recurrent GNN and GNCA lines have shaded regions around them, likely representing confidence intervals or standard deviations across multiple runs.
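A shaded band like this is commonly computed from repeated runs as the mean accuracy at each t, plus and minus one standard deviation. The minimal Python sketch below shows that computation. The function name `band` and the run values are hypothetical illustrations; the chart's actual data and band definition are not known.

```python
from statistics import mean, stdev

def band(runs):
    """Return (mean, lower, upper) per time step for equal-length runs.

    Uses mean +/- one sample standard deviation. A confidence-interval band
    would instead scale the deviation by a quantile and 1/sqrt(n).
    """
    out = []
    for values in zip(*runs):  # transpose: one tuple of run values per t
        m = mean(values)
        s = stdev(values) if len(values) > 1 else 0.0
        out.append((m, m - s, m + s))
    return out

# Hypothetical accuracies for three runs over four time steps (not read from the chart).
runs = [
    [0.74, 0.73, 0.80, 0.85],
    [0.76, 0.72, 0.82, 0.87],
    [0.72, 0.74, 0.84, 0.89],
]
for t, (m, lo, hi) in enumerate(band(runs)):
    print(f"t={t}: mean={m:.3f}, band=[{lo:.3f}, {hi:.3f}]")
```

If every run produces the same value at every step, as Diff-FSA's flat line at 1.0 would, the band has zero width. That would be consistent with no visible shading around that line.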

### Components/Axes

* **X-Axis:** Labeled "t". It represents time steps or iterations. Major tick marks are at 0, 20, 40, 60, 80, and 100.

* **Y-Axis:** Labeled "Accuracy". It ranges from 0.5 to 1.0, with major tick marks at 0.5, 0.6, 0.7, 0.8, 0.9, and 1.0.

* **Legend:** Located in the bottom-right corner of the chart area. It is titled "Model" and contains three entries:

  * A blue line labeled "Recurrent GNN".

  * An orange line labeled "Diff-FSA".

  * A green line labeled "GNCA".

* **Data Series:** Three solid lines, two of which have shaded regions around them.

  * **Recurrent GNN (Blue):** A solid blue line with a light blue shaded region around it.

  * **Diff-FSA (Orange):** A solid orange line with no visible shaded region.

  * **GNCA (Green):** A solid green line with a light green shaded region around it.

### Detailed Analysis

**1. Diff-FSA (Orange Line):**

* **Trend:** Perfectly flat and constant.

* **Data Points:** Maintains an accuracy of exactly 1.0 for all values of t from 0 to 100.

**2. Recurrent GNN (Blue Line):**

* **Trend:** Upward overall, indicating improving accuracy over time. After an initial dip (to about 0.73 near t=5), accuracy rises quickly up to about t=20 and then more gradually, with the gains tapering off after about t=40.

* **Data Points (Approximate):**

  * t=0: ~0.74

  * t=5 (approx. first trough): ~0.73

  * t=20: ~0.82

  * t=40: ~0.87

  * t=60: ~0.89

  * t=80: ~0.91

  * t=100: ~0.93

* **Shaded Region:** The blue shaded area is relatively narrow, suggesting consistent performance across runs. It is tightest during the initial dip and widens slightly as t increases.

**3. GNCA (Green Line):**

* **Trend:** Exhibits a pronounced, regular oscillatory or "zigzag" pattern. Accuracy repeatedly spikes to 1.0 and then drops to a local minimum before recovering.

* **Data Points (Approximate Peaks and Troughs):**

  * **Peaks (Accuracy = 1.0):** Occur at t = 0, 10, 20, 30, 40, 50, 60, 70, 80, 90, 100.

  * **Troughs (Local Minima):** Occur at approximately t = 5, 15, 25, 35, 45, 55, 65, 75, 85, 95. The first trough, near t=5 (~0.76), is the deepest; the remaining troughs vary slightly, generally between 0.85 and 0.90.

* **Shaded Region:** The green shaded area is wide, especially around the troughs, indicating high variance across runs at those points. It is narrowest at the peaks (accuracy = 1.0).
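The peak and trough positions listed above can be recovered mechanically by comparing each sample with its neighbours. The minimal Python sketch below illustrates this; it is not the chart's source code. The series is built from the approximate readings above, sampled every 5 steps, with every trough except the first set to 0.875, an assumed midpoint of the reported 0.85 to 0.90 range. The function name `local_extrema` is hypothetical.

```python
def local_extrema(ts, ys):
    """Return (peaks, troughs) as lists of t values.

    A sample is a peak if it is strictly above all its neighbours and a
    trough if strictly below; endpoints are compared with their single
    neighbour.
    """
    peaks, troughs = [], []
    n = len(ys)
    for i in range(n):
        neighbours = [ys[j] for j in (i - 1, i + 1) if 0 <= j < n]
        if all(ys[i] > v for v in neighbours):
            peaks.append(ts[i])
        elif all(ys[i] < v for v in neighbours):
            troughs.append(ts[i])
    return peaks, troughs

# Approximate GNCA readings every 5 steps (troughs after t=5 are an assumed 0.875).
ts = list(range(0, 101, 5))
ys = [1.0 if t % 10 == 0 else (0.76 if t == 5 else 0.875) for t in ts]

peaks, troughs = local_extrema(ts, ys)
gaps = {b - a for a, b in zip(peaks, peaks[1:])}  # one value means a regular period
print("peaks:", peaks)
print("troughs:", troughs)
print("peak spacing:", gaps)
```

Sampling every 5 steps only resolves the turning points, not the shape of the curve between them, so this confirms the period-10 cycle but says nothing about how steep each drop is.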

### Key Observations

1. **Performance Ceiling:** Diff-FSA achieves and maintains perfect accuracy (1.0) from the very first time step, suggesting it may be a theoretical baseline or an idealized model for this task.

2. **Learning vs. Oscillation:** Recurrent GNN demonstrates stable, incremental learning. In contrast, GNCA shows a cyclical pattern of perfect performance followed by significant degradation, indicating potential instability or a periodic reset mechanism.

3. **Variance Correlation:** For GNCA, the uncertainty (shaded region) is highest precisely when its accuracy is lowest (at the troughs), suggesting the model's failures are less predictable than its successes.

4. **Convergence:** Recurrent GNN is still improving at t=100, but its rate of improvement slows noticeably after about t=40. GNCA shows no long-term trend: it returns to 1.0 at every peak, and after the first trough its troughs stay within roughly the same range.
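The slowing of Recurrent GNN can be checked against the approximate readings in the Detailed Analysis by computing the average gain per time step between consecutive readings. This minimal Python sketch starts at the t=5 trough so the initial dip does not distort the first interval. The readings are eyeball estimates, so the slopes are rough, and the helper name `interval_slopes` is hypothetical.

```python
# Approximate Recurrent GNN readings from the Detailed Analysis: (t, accuracy).
readings = [(5, 0.73), (20, 0.82), (40, 0.87), (60, 0.89), (80, 0.91), (100, 0.93)]

def interval_slopes(points):
    """Average change in accuracy per time step between consecutive readings."""
    return [
        (t0, t1, (y1 - y0) / (t1 - t0))
        for (t0, y0), (t1, y1) in zip(points, points[1:])
    ]

for t0, t1, slope in interval_slopes(readings):
    print(f"t={t0:>3}..{t1:>3}: {slope:+.4f} per step")
```

With these readings, the gain falls from about 0.006 per step between t=5 and t=20 to about 0.001 per step from t=40 onward, which supports a decelerating rather than linear rise.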

### Interpretation

This chart likely compares graph-based models, including Graph Neural Network (GNN) variants, on a sequential or temporal graph task. The "t" axis could represent steps in a process, message-passing iterations, or time in a dynamic system.

* **Diff-FSA's** perfect, flat line suggests it might be a deterministic, automaton-based method (its name hints at a finite state automaton, FSA) that is perfectly suited to the task, serving as an upper-bound benchmark.

* **Recurrent GNN's** steady climb is characteristic of a model that is successfully learning temporal dependencies and generalizing its knowledge over time, though it has not yet reached perfect accuracy.

* **GNCA's** (likely Graph Neural Cellular Automata) oscillatory behavior is the most striking feature. This pattern could indicate:

  * A model that enters a stable, perfect state (the peaks) but is perturbed or reset at regular intervals.

  * An inherent property of the cellular automata dynamics, where the system cycles between ordered and disordered states.

  * A potential issue with training stability or hyperparameter tuning, causing periodic performance collapses.

The chart shows that Diff-FSA is perfect throughout, Recurrent GNN improves reliably, and GNCA, despite reaching perfect accuracy periodically, suffers significant instability that recurs on a regular, predictable cycle. The choice between Recurrent GNN and GNCA would depend on whether consistent progress (Recurrent GNN) or periodically perfect but unstable performance (GNCA) matters more for the application.