## FAQ Section: User Study Information

### Overview

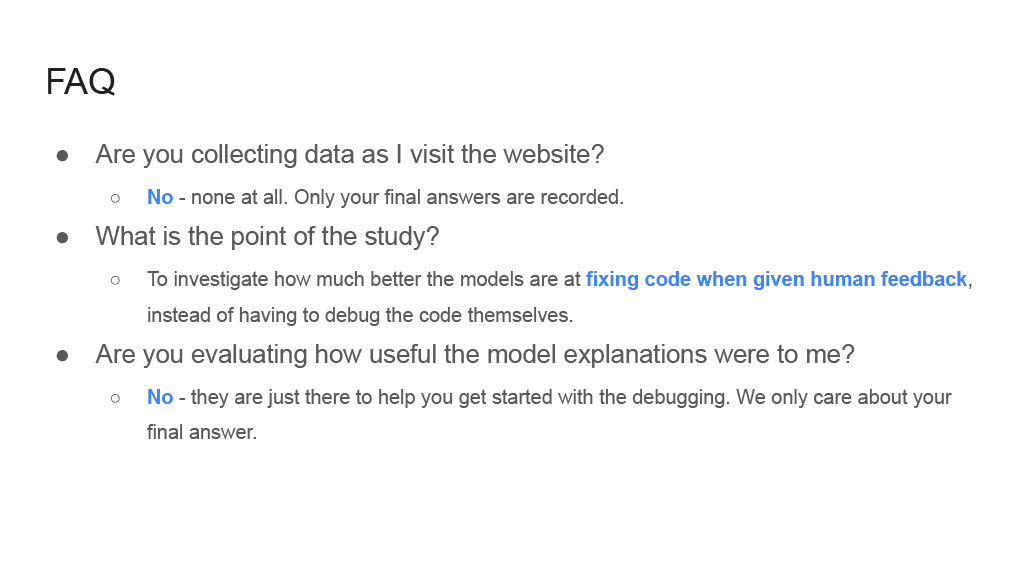

The image displays a Frequently Asked Questions (FAQ) section from what appears to be a website or digital document related to a user study. The section is designed to inform participants about data collection practices, the study's purpose, and the role of model-generated explanations. The text is presented in a clean, minimalist style with a white background and dark gray text. Certain phrases are highlighted in blue, likely indicating hyperlinks or key terms.

### Components

The content is structured as a hierarchical list:

* **Main Header:** "FAQ"

* **List Items:** Three primary questions, each followed by a sub-bullet containing the answer.

* **Text Formatting:** Standard bullet points (•) for main questions and hollow circle sub-bullets (◦) for answers. Key phrases within the answers are highlighted in blue.

### Detailed Analysis

The FAQ contains three question-and-answer pairs:

1. **Question:** "Are you collecting data as I visit the website?"

* **Answer:** "No - none at all. Only your final answers are recorded."

* **Note:** The word "No" is highlighted in blue.

2. **Question:** "What is the point of the study?"

* **Answer:** "To investigate how much better the models are at fixing code when given human feedback, instead of having to debug the code themselves."

* **Note:** The phrase "fixing code when given human feedback" is highlighted in blue.

3. **Question:** "Are you evaluating how useful the model explanations were to me?"

* **Answer:** "No - they are just there to help you get started with the debugging. We only care about your final answer."

* **Note:** The word "No" is highlighted in blue.

### Key Observations

* **Consistent Structure:** Each FAQ item follows a clear Question -> Answer format using nested bullet points.

* **Emphasis on Scope:** The first and third answers each begin with a highlighted "No," emphasizing what is *not* collected or evaluated: data from visiting the website, and the usefulness of the model explanations.

* **Study Focus:** The core research objective is explicitly stated: comparing model performance in code-fixing scenarios with and without human feedback.

* **Role of Explanations:** Model-generated explanations are framed solely as a tool to aid the participant's debugging process, not as an object of evaluation themselves.

* **Data Collection Scope:** The only data explicitly mentioned as being collected are the participants' "final answers."

### Interpretation

This FAQ section functions as a transparency notice for what appears to be a human-computer interaction (HCI) or AI evaluation study. It sets participant expectations and addresses likely concerns about tracking and data use.

The content reveals several key aspects of the study's design:

1. **Independent Variable:** The manipulated condition is the source of debugging guidance: the models fix code either when given "human feedback" or when they have to debug the code themselves.

2. **Dependent Variable:** The outcome of interest is how well the models fix code under each condition. The participant's "final answer" is the only recorded input; the FAQ does not say whether it is the fixed code itself or feedback that is passed to the model.

3. **Methodology:** The study likely involves a platform where participants debug code, with model-generated explanations provided as a starting point. The FAQ states that nothing is recorded while participants visit the website apart from their final answers, which implies that interaction data (such as clicks or time spent) is not collected.

4. **Ethical Considerations:** The explicit statements about data collection appear intended to build trust and are consistent with ethical research practice: they reassure participants that nothing beyond their final answers is recorded and that the study's scope is limited.

The highlighted blue text draws immediate attention to the most critical takeaways: the denial of broad data collection and the specific research question about "fixing code when given human feedback." This design efficiently guides the reader to the most important information.