## FAQ Section: Website Interaction and Study Objectives

### Overview

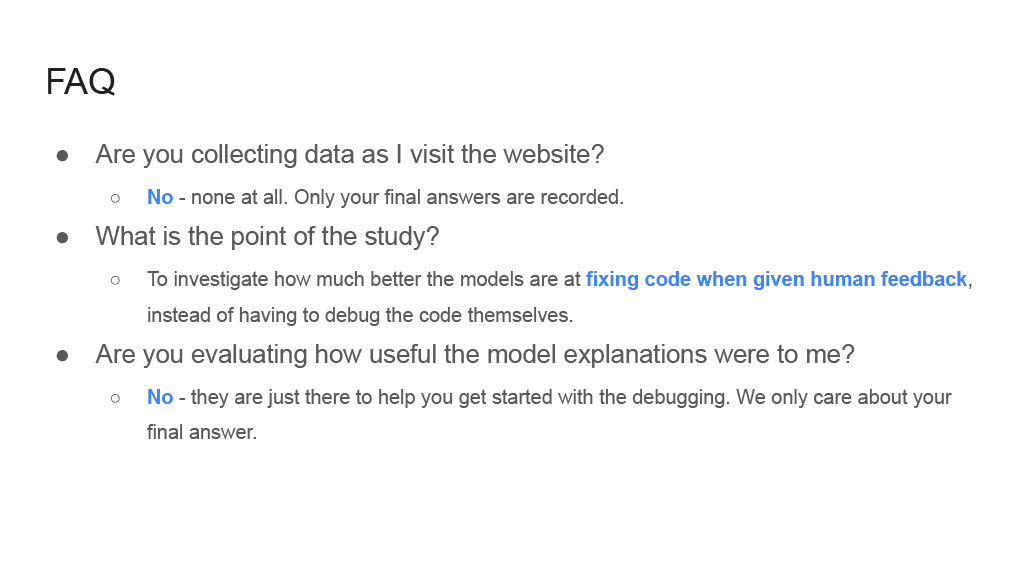

The image displays a FAQ section from a website, structured as a list of three questions with corresponding answers. The text is formatted with bold headers for questions, bullet points for answers, and specific terms highlighted in blue.

### Components

- **Structure**:
  - Three main questions, each marked with a bolded bullet point (•).
  - Answers are indented under each question, prefixed with a circle (•) and formatted in italics.
  - Key terms (e.g., "human feedback") are highlighted in blue, suggesting hyperlinks or emphasis.

### Detailed Analysis

1. **Question 1**:
   - **Text**: "Are you collecting data as I visit the website?"
   - **Answer**:
     - "No - none at all. Only your final answers are recorded."
   - **Formatting**: "No" is bolded in blue; the rest of the answer is in plain text.

2. **Question 2**:
   - **Text**: "What is the point of the study?"
   - **Answer**:
     - "To investigate how much better the models are at fixing code when given human feedback, instead of having to debug the code themselves."
   - **Formatting**: "human feedback" is highlighted in blue.

3. **Question 3**:
   - **Text**: "Are you evaluating how useful the model explanations were to me?"
   - **Answer**:
     - "No - they are just there to help you get started with the debugging. We only care about your final answer."
   - **Formatting**: "No" is bolded in blue; the rest of the answer is in plain text.

### Key Observations

- The FAQ explicitly states that **no data is collected during website visits**; only final answers are recorded.

- The stated goal of the study is to **investigate how much better models are at fixing code when given human feedback** than when they have to debug the code themselves.

- Model explanations are **not evaluated** for usefulness; their sole purpose is to assist users in initiating the debugging process.

### Interpretation

The FAQ clarifies that the study prioritizes **outcome-focused data collection** (final answers) over process-oriented metrics (e.g., user interaction with model explanations). Its stated aim is comparative: to measure how much better models fix code when given human feedback than when they debug on their own. Because the model explanations are not evaluated, they serve only as a starting aid for participants rather than as an object of study. The blue highlighting of terms like "human feedback" and "No" likely serves to draw attention to key points of the study’s methodology and objectives.

---

**Note**: The image contains no charts, diagrams, or numerical data. All information is textual and structured as a FAQ.