## Diagram: Iterative Latent Space Processing Architecture

### Overview

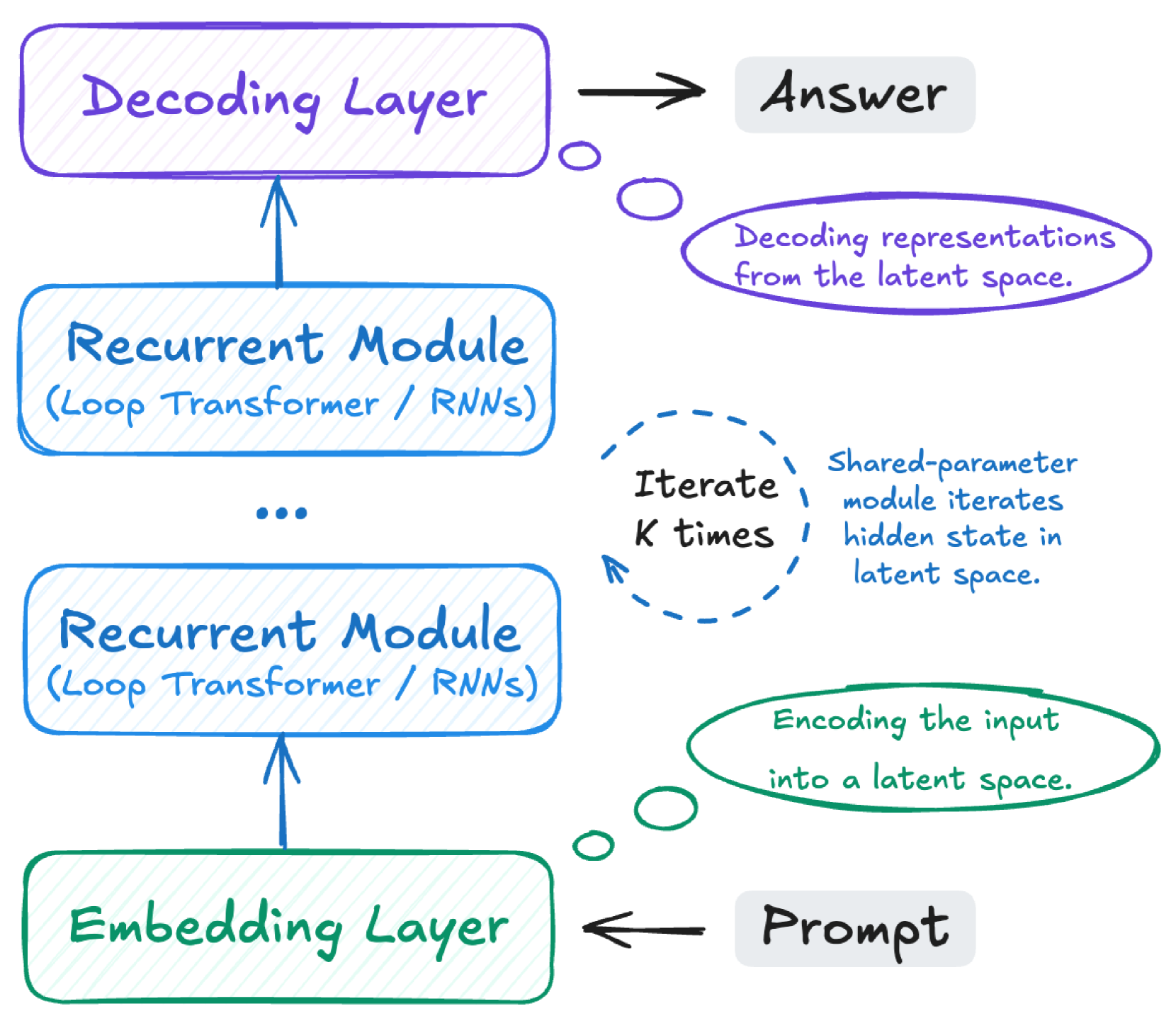

This image is a technical diagram, drawn in a hand-sketched style, of a neural network architecture that processes its input iteratively in a latent space. The flow is vertical, starting from an input "Prompt" at the bottom and ending in an "Answer" at the top. The core mechanism has three parts: encode the input, pass the result through a recurrent module multiple times, and decode the final state.

### Components/Axes

The diagram is organized into a vertical stack of processing blocks with associated explanatory annotations.

**Main Processing Blocks (from bottom to top):**

1. **Embedding Layer** (Green rounded rectangle, bottom-left)

   * **Input:** Receives an arrow from the "Prompt" label (gray box, bottom-right).

   * **Function:** Annotated by a green oval to its right: "Encoding the input into a latent space."

2. **Recurrent Module (Loop Transformer / RNNs)** (Blue rounded rectangle, middle-lower)

   * **Input:** Receives an upward arrow from the Embedding Layer.

   * **Function:** The first drawn pass of the iterative process described by the "Iterate K times" annotation.

3. **Recurrent Module (Loop Transformer / RNNs)** (Blue rounded rectangle, middle-upper)

   * **Input:** Receives an upward arrow from the previous Recurrent Module.

   * **Note:** A "..." (ellipsis) is placed between the two Recurrent Module boxes, indicating that intermediate passes are omitted from the drawing. Per the "Shared-parameter module" annotation, the boxes represent repeated applications of one module rather than distinct modules.

4. **Decoding Layer** (Purple rounded rectangle, top-left)

   * **Input:** Receives an upward arrow from the top Recurrent Module.

   * **Output:** Sends an arrow to the "Answer" label (gray box, top-right).

   * **Function:** Annotated by a purple oval to its right: "Decoding representations from the latent space."

**Process Annotations:**

* **Iteration Mechanism:** A blue dashed circle is positioned to the right of the Recurrent Modules. It contains the text "Iterate K times". Adjacent text explains: "Shared-parameter module iterates hidden state in latent space."

* **Input/Output Labels:**

  * **Prompt** (Gray box, bottom-right): The starting point of the data flow.

  * **Answer** (Gray box, top-right): The final output of the architecture.

### Detailed Analysis

The diagram defines a three-stage process with a recurrent middle stage:

1. **Encoding Stage:** The "Prompt" is fed into the "Embedding Layer". This layer's purpose is to transform the raw input into a latent space representation.

2. **Iterative Processing Stage:** The latent representation is passed into a "Recurrent Module". This module, which can be a Loop Transformer or an RNN, is applied iteratively. The annotations "Iterate K times" and "Shared-parameter module iterates hidden state in latent space" confirm that the same module (with shared parameters) is applied K times in sequence, updating the hidden state within the latent space on each pass. The "..." between the two drawn Recurrent Module boxes visually represents this repetition.

3. **Decoding Stage:** After K iterations, the final hidden state from the recurrent processing is passed to the "Decoding Layer". This layer's function is to convert the processed latent representation back into a human-interpretable format, producing the final "Answer".
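The three stages can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the architecture's actual implementation: the class name `LatentIterativeModel`, the mean-pooled embedding, the single `tanh` layer standing in for the recurrent module, and the argmax decoder are all hypothetical choices that do not appear in the diagram. Only the encode, iterate K times, decode structure and the reuse of one set of recurrent weights come from the figure.

```python
import math
import random

def make_matrix(rows, cols, rng, scale=0.1):
    """Return a rows x cols matrix of small random weights (illustrative only)."""
    return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]

def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]

class LatentIterativeModel:
    """Toy encode -> iterate K times -> decode pipeline (hypothetical names)."""

    def __init__(self, vocab_size, hidden_size, seed=0):
        rng = random.Random(seed)
        self.embedding = make_matrix(vocab_size, hidden_size, rng)   # encoding stage
        self.recurrent = make_matrix(hidden_size, hidden_size, rng)  # one shared weight matrix
        self.decoder = make_matrix(vocab_size, hidden_size, rng)     # decoding stage

    def encode(self, token_ids):
        # Assumption: the mean of the token embeddings stands in for the latent state.
        rows = [self.embedding[t] for t in token_ids]
        return [sum(col) / len(rows) for col in zip(*rows)]

    def step(self, hidden):
        # One pass of the recurrent module; the same weights are used on every call.
        return [math.tanh(p) for p in matvec(self.recurrent, hidden)]

    def decode(self, hidden):
        # Score each vocabulary entry and return the index of the highest score.
        scores = matvec(self.decoder, hidden)
        return max(range(len(scores)), key=scores.__getitem__)

    def forward(self, token_ids, k):
        hidden = self.encode(token_ids)
        for _ in range(k):  # "Iterate K times"
            hidden = self.step(hidden)
        return self.decode(hidden), hidden

model = LatentIterativeModel(vocab_size=10, hidden_size=4)
answer_id, final_hidden = model.forward([1, 5, 7], k=3)
```

Calling `model.forward` with a different `k` reuses the same `self.recurrent` weights; only the number of passes through `step` changes.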

**Spatial Grounding & Color Coding:**

* **Green** is consistently used for the encoding phase (Embedding Layer and its annotation).

* **Blue** is used for the iterative recurrent processing phase (Recurrent Modules and the iteration annotation).

* **Purple** is used for the decoding phase (Decoding Layer and its annotation).

* The flow arrows are black, clearly indicating the direction of data movement from bottom (input) to top (output).

### Key Observations

* The architecture is explicitly **iterative and recurrent**, not a single-pass feedforward network. The core computation happens in a loop.

* The recurrent module is **parameter-shared** across all K iterations, so the parameter count of this stage does not grow with K, although the compute cost still scales with the number of passes.

* The diagram emphasizes a clean separation of concerns: encoding, iterative latent processing, and decoding.

* The label "Loop Transformer / RNNs" suggests that the recurrent module may be attention-based (a transformer block applied in a loop) rather than only a classical RNN cell.
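The effect of parameter sharing on model size can be made concrete with a small count. The sketch below assumes, purely for illustration, that each pass is a single square hidden-to-hidden weight matrix with no biases; a real Loop Transformer or RNN module would have more parameters per pass, and the function name `recurrent_param_count` is hypothetical.

```python
def recurrent_param_count(hidden_size, num_passes, shared):
    """Count weights in a stack of square hidden-to-hidden layers (biases ignored)."""
    per_module = hidden_size * hidden_size
    # With sharing, one module is reused; without it, each pass has its own copy.
    distinct_modules = 1 if shared else num_passes
    return per_module * distinct_modules

hidden_size = 512
for k in (1, 4, 16):
    shared = recurrent_param_count(hidden_size, k, shared=True)
    unshared = recurrent_param_count(hidden_size, k, shared=False)
    print(f"K={k:>2}: shared={shared:,} unshared={unshared:,}")
```

Under these assumptions the shared count stays constant as K grows, while an unshared stack of the same depth grows linearly with K.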

### Interpretation

This diagram illustrates a **Latent Iterative Refinement** architecture: a design in which the model refines an internal representation over several passes before producing its output, which is aimed at complex reasoning or generation tasks.

* **What it demonstrates:** The model doesn't generate an answer in one step. Instead, it first creates an internal (latent) representation of the problem ("Prompt"). It then "thinks" about this representation for a fixed number of steps (K iterations), allowing the hidden state to evolve and settle into a more refined or solved state. Finally, it translates this refined internal state into the final output ("Answer").

* **How elements relate:** The Embedding Layer acts as the interface between the external input and the model's internal world (latent space). The Recurrent Module is the "thinking engine" that operates purely within that internal world. The Decoding Layer is the interface back to the external world. The iterative loop is the crucial component that enables deep, multi-step computation.

* **Notable implications:** This design is well-suited for tasks requiring multi-step deduction, planning, or iterative problem-solving, where the solution emerges through a process of refinement rather than immediate pattern matching. The fixed iteration count (K) is a critical hyperparameter controlling the depth of this "thinking" process.
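To illustrate how K controls the depth of refinement, the toy example below iterates a scalar hidden state and records how much it changes on each pass. The update rule `h <- tanh(weight * h + bias)` and its constants are assumptions chosen so that the map is a contraction; a trained recurrent module is not guaranteed to behave this way, so treat this as a sketch of the "settling" intuition rather than a property of the architecture.

```python
import math

def iterate_hidden(h0, k, weight=0.5, bias=0.3):
    """Apply h <- tanh(weight * h + bias) k times and record every state."""
    states = [h0]
    for _ in range(k):
        states.append(math.tanh(weight * states[-1] + bias))
    return states

states = iterate_hidden(h0=0.0, k=8)
# Size of the update made by each pass.
deltas = [abs(b - a) for a, b in zip(states, states[1:])]
for step, delta in enumerate(deltas, start=1):
    print(f"pass {step}: change in hidden state = {delta:.6f}")
```

Because `tanh` has slope at most 1 and `weight` is 0.5, each pass changes the state by at most half as much as the previous one, so later passes contribute progressively smaller refinements. In this toy setting, choosing K amounts to deciding when further passes stop being worth their compute cost.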