## Diagram: Neural Network Architecture

### Overview

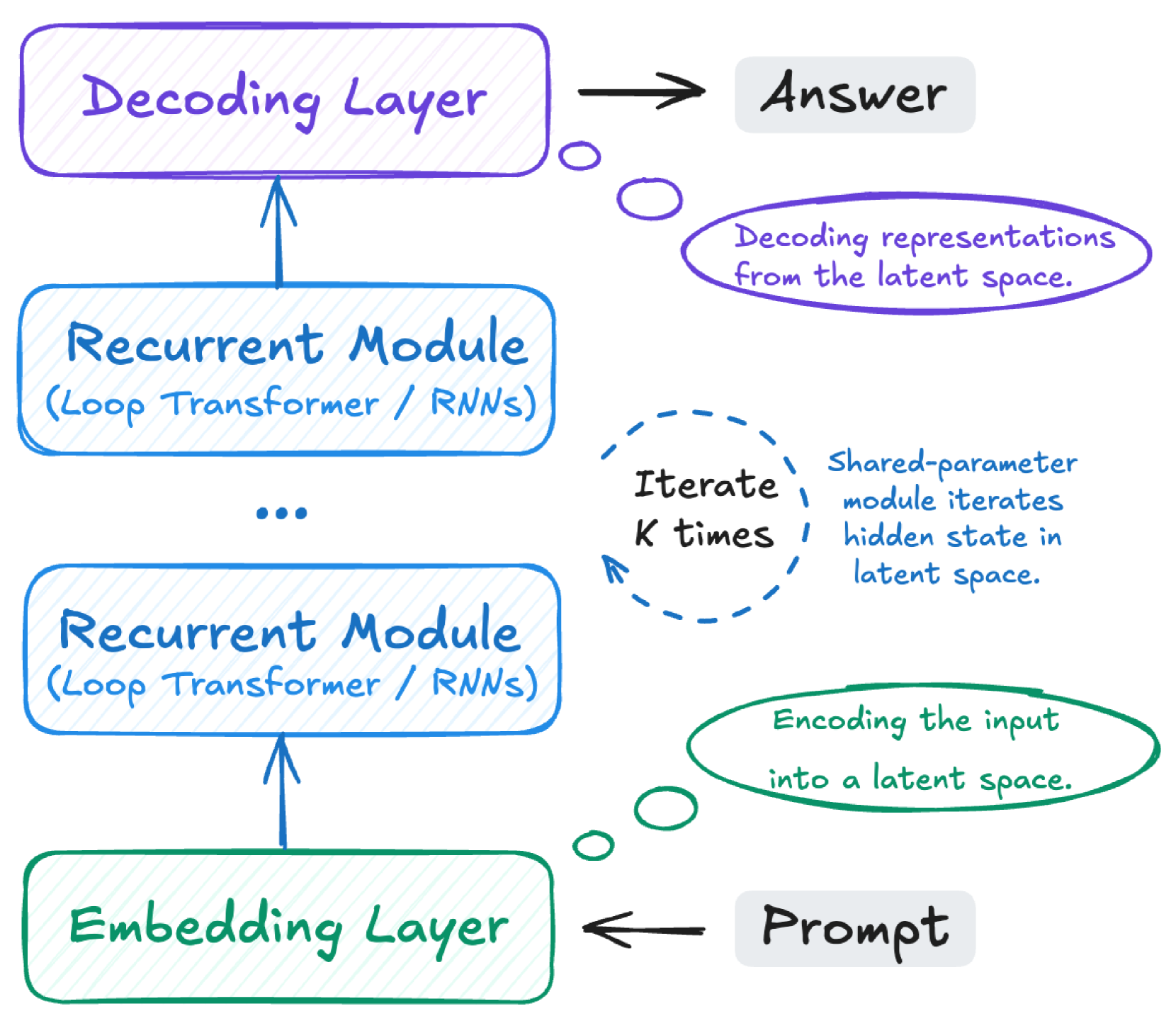

The image depicts a neural network architecture that maps an input "Prompt" to an output "Answer." Information flows from the prompt through an "Embedding Layer," a "Recurrent Module" applied repeatedly, and finally a "Decoding Layer" that produces the answer. The diagram highlights the iterative, shared-parameter nature of the recurrent module and the encoding of information into, and decoding of information from, a latent space.

### Components

* **Embedding Layer:** A green rounded rectangle at the bottom, labeled "Embedding Layer."

* **Recurrent Module:** Two blue rounded rectangles labeled "Recurrent Module (Loop Transformer / RNNs)." Three dots between them indicate that further iterations of the module are elided from the drawing.

* **Decoding Layer:** A purple rounded rectangle at the top, labeled "Decoding Layer."

* **Prompt:** A grey rounded rectangle at the bottom-right, labeled "Prompt."

* **Answer:** A grey rounded rectangle at the top-right, labeled "Answer."

* **Arrows:** Arrows indicate the flow of information between layers.

* **Iterate K times:** A blue dashed circle with the text "Iterate K times."

* **Decoding representations from the latent space:** A purple oval with the text "Decoding representations from the latent space."

* **Encoding the input into a latent space:** A green oval with the text "Encoding the input into a latent space."

* **Shared-parameter module iterates hidden state in latent space:** Blue text near the "Iterate K times" circle.

### Detailed Analysis

* **Embedding Layer (Green):** The "Prompt" is the input: an arrow points leftward from the "Prompt" to the "Embedding Layer." A green oval near the "Embedding Layer" states "Encoding the input into a latent space."

* **Recurrent Modules (Blue):** The output of the "Embedding Layer" is fed into the first "Recurrent Module," whose output is fed into the second. The label "(Loop Transformer / RNNs)" indicates that the module can be implemented as either a Loop Transformer or a recurrent neural network. The dashed blue circle labeled "Iterate K times," together with the caption "Shared-parameter module iterates hidden state in latent space," indicates that the same module, with the same parameters, is applied K times to update the hidden state.

* **Decoding Layer (Purple):** The output of the second "Recurrent Module" is fed into the "Decoding Layer." A purple oval near the "Decoding Layer" states "Decoding representations from the latent space."

* **Answer (Grey):** The output of the "Decoding Layer" is the "Answer."
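The flow described above can be sketched as a toy forward pass. This is a minimal, hypothetical illustration, not the architecture's actual implementation: the class `LoopedModel`, its method names, and the weight shapes are assumptions; the "recurrent module" is a single `tanh` layer standing in for a Loop Transformer or RNN block; and the prompt is mean-pooled into one latent vector for simplicity, whereas a real Loop Transformer would keep one hidden state per token.

```python
import math
import random
from typing import List, Sequence, Tuple

Vector = List[float]
Matrix = List[List[float]]


def matvec(w: Matrix, x: Vector) -> Vector:
    """Multiply a (rows x cols) matrix by a length-cols vector."""
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


class LoopedModel:
    """Hypothetical toy pipeline: embed -> (shared block applied K times) -> decode."""

    def __init__(self, vocab_size: int, hidden_dim: int, seed: int = 0) -> None:
        rng = random.Random(seed)

        def rand_matrix(rows: int, cols: int) -> Matrix:
            return [[rng.gauss(0.0, 0.5) for _ in range(cols)] for _ in range(rows)]

        self.embedding = rand_matrix(vocab_size, hidden_dim)  # Embedding Layer
        self.w_block = rand_matrix(hidden_dim, hidden_dim)    # shared recurrent weights
        self.b_block = [0.0] * hidden_dim                     # shared recurrent bias
        self.w_decode = rand_matrix(vocab_size, hidden_dim)   # Decoding Layer

    def encode(self, prompt: Sequence[int]) -> Vector:
        """Encode the prompt into the latent space (assumption: mean of token embeddings)."""
        if not prompt:
            raise ValueError("prompt must contain at least one token id")
        h = [0.0] * len(self.w_block)
        for tok in prompt:
            h = [a + b for a, b in zip(h, self.embedding[tok])]
        return [v / len(prompt) for v in h]

    def step(self, h: Vector) -> Vector:
        """One application of the shared-parameter module: h <- tanh(W h + b)."""
        return [math.tanh(v + b) for v, b in zip(matvec(self.w_block, h), self.b_block)]

    def decode(self, h: Vector) -> int:
        """Map the final hidden state to logits and return the argmax token id."""
        logits = matvec(self.w_decode, h)
        return max(range(len(logits)), key=logits.__getitem__)

    def forward(self, prompt: Sequence[int], k: int) -> Tuple[int, List[Vector]]:
        """Iterate the shared module K times; return (answer id, hidden-state trajectory)."""
        h = self.encode(prompt)
        trajectory = [h]
        for _ in range(k):  # the same weights are reused on every iteration
            h = self.step(h)
            trajectory.append(h)
        return self.decode(h), trajectory


if __name__ == "__main__":
    model = LoopedModel(vocab_size=10, hidden_dim=4)
    answer, states = model.forward([1, 3, 5], k=6)
    print(answer, len(states))  # len(states) is 7: h_0 plus one state per iteration
```

Changing `k` changes how much computation is spent in the latent space without changing the number of weights, which is the practical consequence of the shared-parameter design shown in the diagram.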

### Key Observations

* The diagram separates the computation into three phases: encoding into the latent space (Embedding Layer), iterative processing in the latent space (Recurrent Module, repeated K times), and decoding from the latent space (Decoding Layer).

* The "Loop Transformer / RNNs" label indicates that the module is applied recurrently with shared weights, whether it is realized as a looped Transformer block or as an RNN cell.

* The "Iterate K times" notation indicates that the recurrent module is applied repeatedly, so K sets the number of update steps the hidden state receives before decoding.

* The diagram emphasizes the transformation of the input into a latent space and the subsequent decoding from that space.
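The "shared-parameter" caption implies a trade-off that a short calculation makes concrete: compare a looped model that reuses one module K times against a hypothetical unrolled stack of K distinct modules. All sizes below are illustrative assumptions (a 1000-token vocabulary, a 64-dimensional latent state, untied embedding and decoding matrices, and a single dense layer with bias as the "block"); a real Transformer block has far more parameters.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelSizes:
    vocab_size: int    # tokens in the vocabulary (hypothetical)
    hidden_dim: int    # width of the latent state (hypothetical)
    block_params: int  # parameters in one recurrent module (hypothetical)


def count_params(s: ModelSizes, k: int, shared: bool) -> int:
    """Total parameters for K block applications, with or without weight sharing."""
    io_params = 2 * s.vocab_size * s.hidden_dim  # untied embedding + decoding matrices
    block_total = s.block_params if shared else k * s.block_params
    return io_params + block_total


if __name__ == "__main__":
    d = 64
    sizes = ModelSizes(vocab_size=1000, hidden_dim=d, block_params=d * d + d)
    for k in (1, 4, 8):
        print(k, count_params(sizes, k, shared=True), count_params(sizes, k, shared=False))
    # 1 132160 132160
    # 4 132160 144640
    # 8 132160 161280
```

Both variants apply the block K times, so their computation grows with K; only the unshared stack also grows in parameter count.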

### Interpretation

The diagram represents a neural network architecture that transforms an input ("Prompt") into an output ("Answer") in three stages. The "Embedding Layer" encodes the prompt into a numerical representation in a latent space. The "Recurrent Module," whose parameters are shared across iterations, then updates this hidden state K times; the repeated blocks in the drawing are most likely successive applications of the same module rather than independent layers. The "Decoding Layer" finally maps the last hidden state back into the desired output format. Because the parameters are shared, increasing K adds computation without adding parameters. The diagram does not name a task; the "Prompt" and "Answer" labels suggest text tasks such as question answering, and the same pattern could plausibly be applied to machine translation or text summarization.
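The pipeline can be written compactly as a recurrence. The notation is an assumption introduced here, not taken from the diagram: $x$ is the prompt, $\mathrm{Embed}$ and $\mathrm{Decode}$ are the embedding and decoding layers, $f_\theta$ is the recurrent module with shared parameters $\theta$, and $h^{(k)}$ is the hidden state after $k$ iterations. The diagram does not show whether the embedded prompt is re-fed at each iteration; the form below assumes it is not.

```latex
\begin{aligned}
h^{(0)} &= \mathrm{Embed}(x) \\
h^{(k)} &= f_\theta\big(h^{(k-1)}\big), \qquad k = 1, \dots, K \\
\hat{y} &= \mathrm{Decode}\big(h^{(K)}\big)
\end{aligned}
```

The same $\theta$ appears at every step $k$, which is what "Shared-parameter module iterates hidden state in latent space" expresses.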