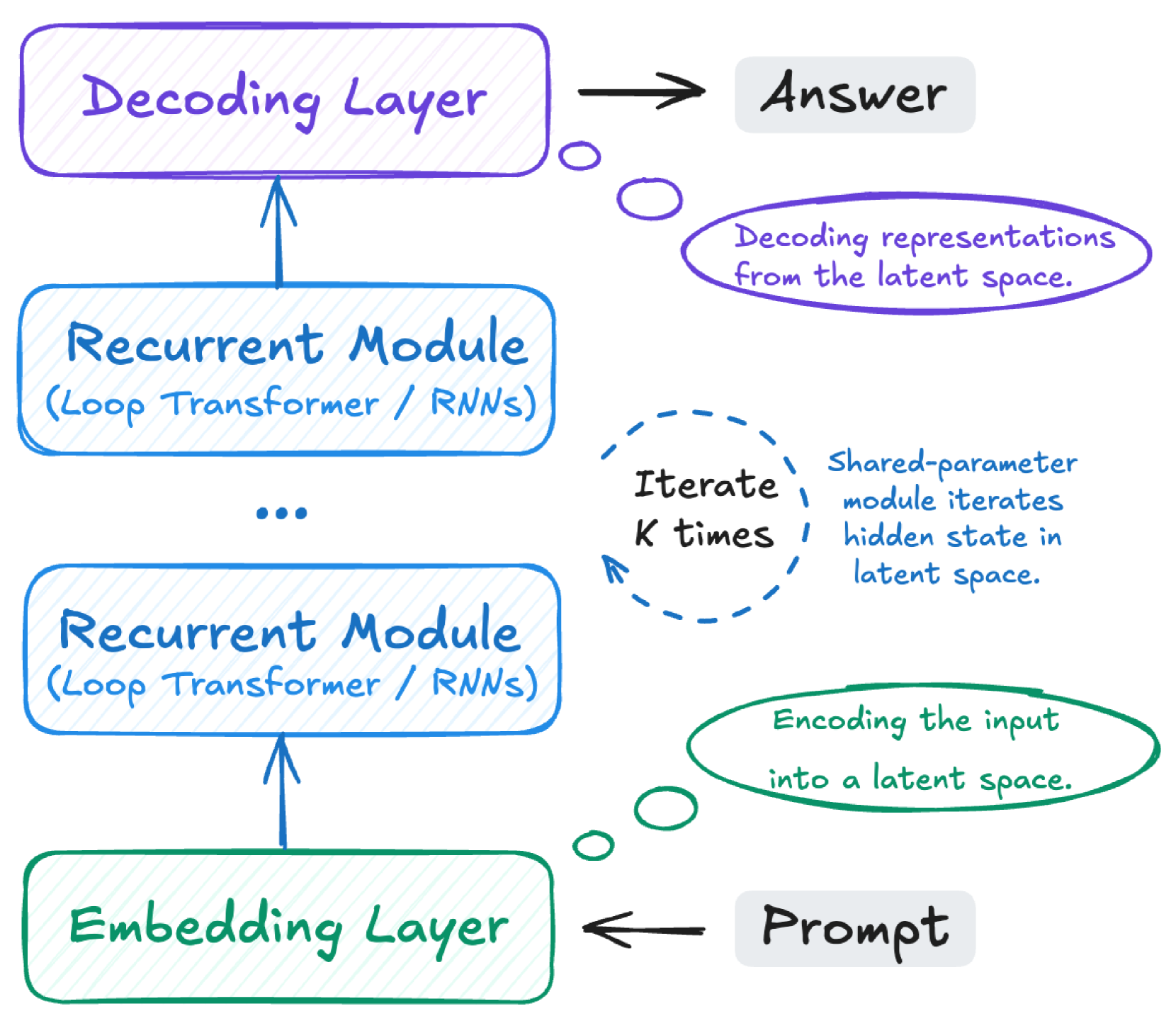

This image is a technical diagram of the architecture and data flow of a neural network model built around recurrent processing. It depicts a sequence of layers and modules, with an explanatory note for each stage, and uses color-coding to associate each component with its description.

The diagram can be segmented into three main conceptual regions:

1. **Main Processing Flow (Left Column):** A vertical stack of computational layers.

2. **Input/Output (Right Side, Horizontal):** The entry and exit points of the system.

3. **Explanatory Notes (Right Column, Aligned with Flow):** Descriptions for the main components.

---

### **1. Main Processing Flow (Left Column)**

The core of the system is represented by a vertical stack of three distinct types of layers/modules, connected by upward-pointing arrows indicating data flow.

* **Bottom Component:**

    * **Label:** "Embedding Layer"

    * **Shape:** Rounded rectangle with a green outline and light green diagonal hatching.

    * **Input:** Receives input from "Prompt" via a black arrow with a green outline.

* **Middle Components:**

    * **Label:** "Recurrent Module (Loop Transformer / RNNs)"

    * **Shape:** Rounded rectangle with a blue outline and light blue diagonal hatching.

    * **Connection:** An upward-pointing blue arrow connects the "Embedding Layer" to the first "Recurrent Module".

    * **Indication of Repetition:** An ellipsis (`...`) is placed between two instances of the "Recurrent Module", signifying that there can be multiple such modules stacked.

    * **Connection:** An upward-pointing blue arrow connects the last "Recurrent Module" to the "Decoding Layer".

* **Top Component:**

    * **Label:** "Decoding Layer"

    * **Shape:** Rounded rectangle with a purple outline and light purple diagonal hatching.

    * **Output:** Sends output to "Answer" via a black arrow with a purple outline.

---

### **2. Input and Output (Right Side)**

* **Input:**

    * **Label:** "Prompt"

    * **Shape:** Rounded rectangle with a grey fill and black outline.

    * **Connection:** A black arrow, outlined in green, points from "Prompt" to the "Embedding Layer".

* **Output:**

    * **Label:** "Answer"

    * **Shape:** Rounded rectangle with a grey fill and black outline.

    * **Connection:** A black arrow, outlined in purple, points from the "Decoding Layer" to "Answer".

---

### **3. Explanatory Notes (Right Column)**

These notes provide context and function descriptions for the corresponding components, indicated by matching colors and small connecting circles.

* **Bottom Explanation (Associated with Embedding Layer and Prompt):**

    * **Shape:** An oval-shaped speech bubble with a green outline.

    * **Text:** "Encoding the input into a latent space."

    * **Spatial Grounding:** Located to the right of the "Embedding Layer" and below the "Recurrent Module" explanations. A small green circle and line connect it to the "Embedding Layer" and the arrow from "Prompt".

* **Middle Explanation (Associated with Recurrent Module):**

    * **Shape:** A dashed blue circle containing text, with additional text to its right.

    * **Text (inside dashed circle):** "Iterate K times"

    * **Text (to the right of dashed circle):** "Shared-parameter module iterates hidden state in latent space."

    * **Spatial Grounding:** Located to the right of the "Recurrent Module" stack. The blue color of the dashed circle and text matches the "Recurrent Module" components. This indicates that the recurrent modules involve iteration and shared parameters to evolve a hidden state in a latent space.

* **Top Explanation (Associated with Decoding Layer and Answer):**

    * **Shape:** An oval-shaped speech bubble with a purple outline.

    * **Text:** "Decoding representations from the latent space."

    * **Spatial Grounding:** Located to the right of the "Decoding Layer" and above the "Recurrent Module" explanations. A small purple circle and line connect it to the "Decoding Layer" and the arrow to "Answer".
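The "Iterate K times" and "Shared-parameter module" notes can be read as one function applied repeatedly to the hidden state, so the parameter count does not grow with K. The sketch below contrasts that reading with a stack of K separately parameterized layers. The diagram does not specify the module internals, so the tanh update and the names `apply_module`, `looped`, `stacked`, and `num_params` are assumptions made purely for illustration.

```python
import math

def apply_module(h, W):
    """One application of the module: h <- tanh(W h). Assumed update rule."""
    return [math.tanh(sum(w * x for w, x in zip(row, h))) for row in W]

def looped(h, W, K):
    """Shared parameters: the same matrix W is reused for all K iterations."""
    for _ in range(K):
        h = apply_module(h, W)
    return h

def stacked(h, Ws):
    """Unshared parameters: each layer in the stack has its own matrix."""
    for W in Ws:
        h = apply_module(h, W)
    return h

def num_params(matrices):
    """Count scalar entries across a list of weight matrices."""
    return sum(len(row) for W in matrices for row in W)

K = 4
W = [[0.5, -0.2], [0.1, 0.3]]  # toy 2x2 weights (hypothetical values)
h0 = [1.0, 0.0]                # toy initial hidden state

# Stand-ins for K separately stored matrices of the same shape.
Ws = [[row[:] for row in W] for _ in range(K)]

h_loop = looped(h0, W, K)
h_stack = stacked(h0, Ws)

print(num_params([W]))  # 4: the looped module stores one matrix
print(num_params(Ws))   # 16: the stack stores K matrices
```

Because every matrix in `Ws` is a copy of `W`, both paths produce the same hidden state here; the difference is only in how many parameters are stored.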

---

**Summary of Flow and Functionality:**

The diagram illustrates a pipeline in which a "Prompt" is first encoded into a latent space by the "Embedding Layer". The embedded representation then passes through one or more "Recurrent Module" instances (labeled "Loop Transformer / RNNs"), which iterate "K times" with shared parameters to evolve a hidden state within the latent space. Finally, the "Decoding Layer" decodes the resulting representation from the latent space to produce the "Answer".
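The flow summarized above can be sketched end to end as embed, iterate, decode. This is a toy illustration under stated assumptions, not the model the diagram depicts: the class `LoopedModel`, the mean pooling of token embeddings, the tanh update, and the argmax decoding are all hypothetical choices, and a real Loop Transformer would use attention-based blocks in place of the single matrix `W`.

```python
import math
import random

def rand_matrix(rows, cols, rng):
    """Small random matrix as a list of rows."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

def matvec(M, v):
    """Matrix-vector product for list-of-rows matrices."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

class LoopedModel:
    """Toy Prompt -> Embedding -> (Recurrent x K) -> Decoding -> Answer."""

    def __init__(self, vocab_size, dim, K, seed=0):
        rng = random.Random(seed)
        self.K = K
        self.E = rand_matrix(vocab_size, dim, rng)  # embedding layer
        self.W = rand_matrix(dim, dim, rng)         # shared recurrent weights
        self.D = rand_matrix(vocab_size, dim, rng)  # decoding layer

    def embed(self, token_ids):
        # Encode the prompt into the latent space (assumed: mean of embeddings).
        n = len(token_ids)
        dim = len(self.W)
        return [sum(self.E[t][i] for t in token_ids) / n for i in range(dim)]

    def recur(self, h):
        # Iterate the hidden state K times with the same parameters.
        for _ in range(self.K):
            h = [math.tanh(a) for a in matvec(self.W, h)]
        return h

    def decode(self, h):
        # Decode from the latent space (assumed: argmax over vocabulary scores).
        logits = matvec(self.D, h)
        return max(range(len(logits)), key=lambda i: logits[i])

    def answer(self, token_ids):
        return self.decode(self.recur(self.embed(token_ids)))

model = LoopedModel(vocab_size=5, dim=4, K=3)
prompt = [0, 2, 1]           # hypothetical token ids
print(model.answer(prompt))  # a token id in range(5); depends on the random init
```

Changing `K` changes how many times the hidden state is refined but not the size of `W`, which is the property the "Shared-parameter module" note points to.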