## Diagram: Neural Network Architecture for Question Answering

### Overview

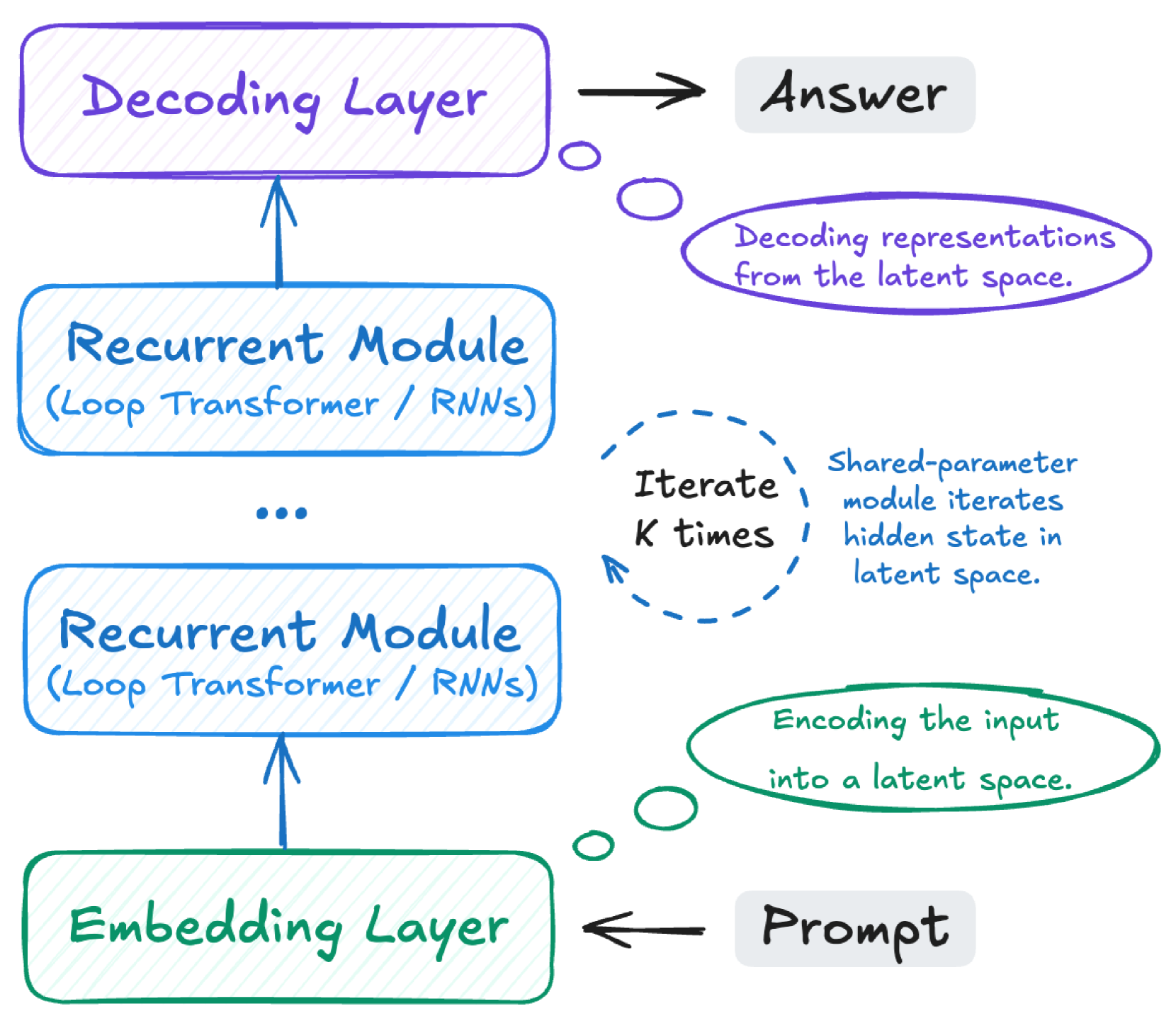

This diagram illustrates the architecture of a neural network designed for question answering. The network consists of an Embedding Layer, multiple Recurrent Modules, and a Decoding Layer that produces the Answer. The diagram traces the flow of information from the input "Prompt" through the network to the output "Answer". Annotations describe the role of the embedding and decoding layers and the iterative process within the recurrent modules.

### Components

The diagram consists of the following components:

* **Embedding Layer:** Located at the bottom of the diagram.

* **Recurrent Module:** Two instances are explicitly shown, with an ellipsis (...) indicating further modules. Each module is labeled "(Loop Transformer / RNNs)".

* **Decoding Layer:** Located at the top of the diagram.

* **Answer:** Output of the Decoding Layer, positioned to the right.

* **Prompt:** Input to the Embedding Layer, positioned to the left.

* **Iterate K times:** A dashed loop connecting the Recurrent Modules, indicating an iterative process.

* **Annotation Bubbles:** Two bubbles with descriptive text explaining the processes of encoding and decoding.

### Detailed Analysis

The diagram shows a sequential flow of information:

1. **Prompt** enters the **Embedding Layer**.

2. The output of the Embedding Layer is fed into the first **Recurrent Module**.

3. The output of the first Recurrent Module is fed into the second Recurrent Module. The ellipsis indicates this process repeats for multiple Recurrent Modules.

4. The output of the final Recurrent Module is fed into the **Decoding Layer**.

5. The **Decoding Layer** produces the **Answer**.
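The five steps above can be sketched in code. This is a minimal, untrained toy under stated assumptions, not the implementation behind the diagram: the class name `LoopedQAModel`, the mean-pooling in `embed`, the `tanh` update, and the fixed set of candidate answers are all hypothetical choices made to keep the example self-contained.

```python
import math
import random


def make_matrix(rows, cols, rng, scale=0.1):
    """Return a random rows x cols matrix as a list of lists."""
    return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]


def matvec(matrix, vec):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]


class LoopedQAModel:
    """Toy stand-in for the diagram: embed -> iterate K times -> decode."""

    def __init__(self, vocab_size, hidden_size, num_answers, seed=0):
        rng = random.Random(seed)
        self.embedding = make_matrix(vocab_size, hidden_size, rng)   # Embedding Layer
        self.recurrent = make_matrix(hidden_size, hidden_size, rng)  # one shared Recurrent Module
        self.decoder = make_matrix(num_answers, hidden_size, rng)    # Decoding Layer

    def embed(self, token_ids):
        # Assumption: mean-pool token embeddings into a single latent vector.
        vectors = [self.embedding[t] for t in token_ids]
        return [sum(column) / len(vectors) for column in zip(*vectors)]

    def recurrent_step(self, hidden):
        # The same weights are used on every call ("shared-parameter").
        return [math.tanh(v) for v in matvec(self.recurrent, hidden)]

    def forward(self, token_ids, k):
        hidden = self.embed(token_ids)        # steps 1-2: Prompt -> latent space
        for _ in range(k):                    # step 3: "Iterate K times"
            hidden = self.recurrent_step(hidden)
        logits = matvec(self.decoder, hidden)  # step 4: decode from latent space
        # Step 5: return the index of the highest-scoring candidate answer.
        return max(range(len(logits)), key=logits.__getitem__)


model = LoopedQAModel(vocab_size=10, hidden_size=4, num_answers=3)
print(model.forward([1, 2, 3], k=5))  # index of the highest-scoring answer
```

Because the weights are random, the printed index carries no meaning; the point is only the order of operations and the reuse of one module inside the loop.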

The diagram also includes the following textual descriptions:

* **"Decoding representations from the latent space."** (Inside a light blue bubble, top-right)

* **"Encoding the input into a latent space."** (Inside a light green bubble, bottom-left)

* **"Iterate K times"** (Inside a dashed loop connecting the Recurrent Modules)

* **"Shared-parameter module iterates hidden state in latent space."** (Text associated with the "Iterate K times" loop)

* **"Loop Transformer / RNNs"** (Label within each Recurrent Module)
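Read together, these annotations suggest a compact formulation. The symbols below are notation assumed here for illustration and do not appear in the diagram: $x$ is the prompt, $h^{(k)}$ the hidden state after $k$ iterations, $f_\theta$ the recurrent module with shared parameters $\theta$, and $\hat{y}$ the answer.

$$
h^{(0)} = \mathrm{Embed}(x), \qquad
h^{(k)} = f_\theta\big(h^{(k-1)}\big) \quad \text{for } k = 1, \dots, K, \qquad
\hat{y} = \mathrm{Decode}\big(h^{(K)}\big)
$$

The same $\theta$ appears at every step, which is what distinguishes this loop from a stack of $K$ independently parameterized layers.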

### Key Observations

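The diagram emphasizes the recurrent modules, which are labeled as either Loop Transformers or Recurrent Neural Networks (RNNs). The "Iterate K times" loop, together with the shared-parameter annotation, indicates that the same module is applied repeatedly to a hidden state, which suggests a mechanism for progressively refining the representation of the input. The references to a "latent space" indicate that this refinement operates on an internal learned representation rather than directly on the prompt or answer text; the diagram does not state the dimensionality of that space or whether it is compressed.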
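One practical consequence of the "shared-parameter" annotation is that the weight count of the recurrent part does not grow with the number of iterations. The sketch below illustrates this with simple arithmetic; the function `count_parameters`, the hidden size of 512, and the choice of 8 iterations are hypothetical values, and each module is assumed to be a single square weight matrix with no biases.

```python
def count_parameters(hidden_size, num_modules, shared):
    """Weights in a stack of square hidden-to-hidden modules (biases ignored)."""
    per_module = hidden_size * hidden_size
    # With sharing, one set of weights is reused for every iteration.
    return per_module if shared else per_module * num_modules


looped = count_parameters(hidden_size=512, num_modules=8, shared=True)
stacked = count_parameters(hidden_size=512, num_modules=8, shared=False)
print(looped, stacked)  # 262144 2097152
```

Under these assumptions, iterating more times adds computation but no new weights, whereas an unshared stack grows linearly with depth.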

### Interpretation

This diagram represents an encode, iterate, decode architecture applied to question answering. The Embedding Layer maps the input prompt into a latent space. The Recurrent Modules then update a hidden state in that space, applying the same shared-parameter module K times. The Decoding Layer maps the final hidden state back out of the latent space to produce the answer.

The iterative loop presumably lets the network refine its internal representation over several steps before decoding, although the diagram makes no claim about accuracy or efficiency. The recurrent module can be implemented as either a Loop Transformer or an RNN. The diagram is a high-level overview: it does not specify the value of K, the dimensions of the layers, or any training details.