
EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


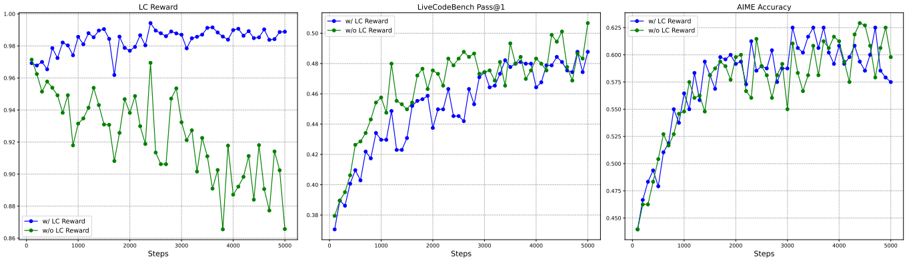

## Line Charts: Performance Comparison with and without LC Reward

### Overview

The image presents three line charts comparing the performance of a system with and without "LC Reward" across different metrics: "LC Reward", "LiveCodeBench Pass@1", and "AIME Accuracy". Each chart plots the metric against the number of steps, ranging from 0 to 5000. The charts aim to illustrate the impact of incorporating LC Reward on the system's performance in these three areas.

### Components/Axes

* **X-axis (all charts):** "Steps", ranging from 0 to 5000, with markers at 1000 intervals.

* **Y-axis (LC Reward):** "LC Reward", ranging from 0.86 to 1.00, with markers at 0.02 intervals.

* **Y-axis (LiveCodeBench Pass@1):** "LiveCodeBench Pass@1", ranging from 0.38 to 0.50, with markers at 0.02 intervals.

* **Y-axis (AIME Accuracy):** "AIME Accuracy", ranging from 0.450 to 0.625, with markers at 0.025 intervals.

* **Legend (all charts, top-left):**

    * Blue line: "w/ LC Reward" (with LC Reward)

    * Green line: "w/o LC Reward" (without LC Reward)

### Detailed Analysis

**1. LC Reward Chart (Left)**

* **Trend (w/ LC Reward - Blue):** The line starts at approximately 0.97, increases to around 0.99 by step 1000, and then fluctuates between 0.98 and 1.00 for the remainder of the steps.

* Step 0: ~0.97

* Step 1000: ~0.99

* Step 5000: ~0.99

* **Trend (w/o LC Reward - Green):** The line starts at approximately 0.97, decreases significantly to around 0.90 by step 4000, and then fluctuates between 0.88 and 0.92 for the remainder of the steps.

* Step 0: ~0.97

* Step 1000: ~0.94

* Step 2000: ~0.91

* Step 3000: ~0.92

* Step 4000: ~0.88

* Step 5000: ~0.90
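The size of the decline in the green line can be put in rough numbers. The sketch below fits an ordinary least-squares line to the six approximate readings listed above. The readings are values eyeballed from the chart, not the underlying data, and the helper name `ols_slope` is introduced here purely for illustration.

```python
# Approximate "w/o LC Reward" readings from the LC Reward chart (read off the plot).
steps = [0, 1000, 2000, 3000, 4000, 5000]
lc_reward_without = [0.97, 0.94, 0.91, 0.92, 0.88, 0.90]


def ols_slope(xs, ys):
    """Return the least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


# Express the slope per 1000 steps so it is comparable to the axis tick spacing.
slope_per_1000 = ols_slope(steps, lc_reward_without) * 1000
print(f"Fitted change per 1000 steps: {slope_per_1000:.4f}")
```

On these readings the fitted line falls by roughly 0.015 per 1000 steps, or about 0.07 over the full run. This is a coarse summary only, since the line is described as fluctuating rather than declining smoothly.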

**2. LiveCodeBench Pass@1 Chart (Center)**

* **Trend (w/ LC Reward - Blue):** The line starts at approximately 0.38, increases to around 0.45 by step 1000, and then fluctuates between 0.42 and 0.47 for the remainder of the steps.

* Step 0: ~0.38

* Step 1000: ~0.43

* Step 5000: ~0.46

* **Trend (w/o LC Reward - Green):** The line starts at approximately 0.38, increases to around 0.48 by step 2000, and then fluctuates between 0.45 and 0.50 for the remainder of the steps.

* Step 0: ~0.38

* Step 1000: ~0.45

* Step 2000: ~0.48

* Step 5000: ~0.49

**3. AIME Accuracy Chart (Right)**

* **Trend (w/ LC Reward - Blue):** The line starts at approximately 0.45, increases to around 0.58 by step 2000, and then fluctuates between 0.57 and 0.61 for the remainder of the steps.

* Step 0: ~0.45

* Step 1000: ~0.52

* Step 2000: ~0.58

* Step 5000: ~0.58

* **Trend (w/o LC Reward - Green):** The line starts at approximately 0.45, increases to around 0.60 by step 3000, and then fluctuates between 0.58 and 0.63 for the remainder of the steps.

* Step 0: ~0.45

* Step 1000: ~0.53

* Step 2000: ~0.57

* Step 3000: ~0.61

* Step 5000: ~0.62

### Key Observations

* In the "LC Reward" chart, the system performs significantly better with LC Reward, maintaining a high reward value throughout the steps, while the reward decreases significantly without LC Reward.

* In the "LiveCodeBench Pass@1" chart, the system performs better without LC Reward, especially after 2000 steps.

* In the "AIME Accuracy" chart, the system performs slightly better without LC Reward, especially after 3000 steps.

### Interpretation

The charts suggest that the impact of LC Reward varies depending on the specific metric being evaluated. While LC Reward is highly beneficial for maintaining a high "LC Reward" value, it appears to have a negative or negligible impact on "LiveCodeBench Pass@1" and "AIME Accuracy". This could indicate that optimizing for LC Reward might come at the expense of performance in other areas. The data suggests that the system's performance is more balanced without LC Reward, achieving higher "LiveCodeBench Pass@1" and "AIME Accuracy" scores. Further investigation is needed to understand the trade-offs and optimize the system for overall performance across all metrics.
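To make the trade-off described above concrete, the following sketch compares the two conditions at step 5000 using only the approximate readings listed in the Detailed Analysis. The dictionary layout and the function name `final_gaps` are assumptions made for this example, and the numbers are chart readings, not measured results.

```python
# Step-5000 readings from the analysis above: (with LC Reward, without LC Reward).
final_readings = {
    "LC Reward": (0.99, 0.90),
    "LiveCodeBench Pass@1": (0.46, 0.49),
    "AIME Accuracy": (0.58, 0.62),
}


def final_gaps(readings):
    """Return with-minus-without differences, rounded to two decimals."""
    return {metric: round(w - wo, 2) for metric, (w, wo) in readings.items()}


gaps = final_gaps(final_readings)
for metric, gap in gaps.items():
    higher = "w/ LC Reward" if gap > 0 else "w/o LC Reward"
    print(f"{metric}: gap {gap:+.2f} (higher: {higher})")
```

A positive gap means the "w/ LC Reward" line ends higher. On these readings only the LC Reward metric itself shows a positive gap, which matches the observations above.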


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


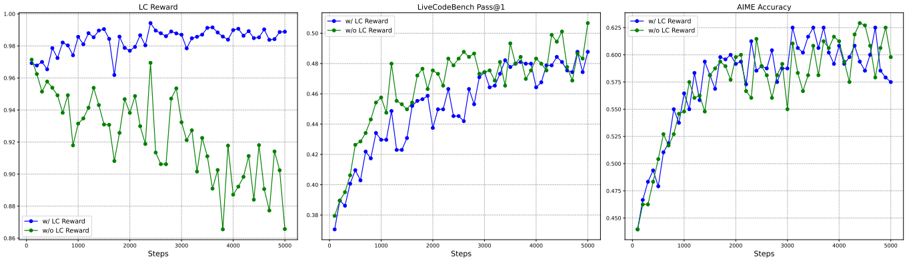

## Line Charts: Training Performance Comparison

### Overview

The image presents three line charts, arranged horizontally, comparing the performance of a model with and without "LC Reward" across different metrics: "LC Reward", "LiveCodeBench Pass@1", and "AIME Accuracy". All charts share a common x-axis representing "Steps" from 0 to 5000. The y-axes vary depending on the metric.

### Components/Axes

* **X-axis (all charts):** "Steps" (0 to 5000, with increments of 500)

* **Chart 1:**

    * **Title:** "LC Reward"

    * **Y-axis:** Scale from approximately 0.88 to 1.00, with increments of 0.02.

* **Chart 2:**

    * **Title:** "LiveCodeBench Pass@1"

    * **Y-axis:** Scale from approximately 0.38 to 0.50, with increments of 0.02.

* **Chart 3:**

    * **Title:** "AIME Accuracy"

    * **Y-axis:** Scale from approximately 0.45 to 0.625, with increments of 0.05.

* **Legend (all charts, bottom-left):**

    * Blue Line: "w/ LC Reward"

    * Green Line: "w/o LC Reward"

### Detailed Analysis or Content Details

**Chart 1: LC Reward**

* **Blue Line ("w/ LC Reward"):** Starts at approximately 0.98 at Step 0, fluctuates between approximately 0.96 and 0.99, with a slight downward trend initially, then stabilizes around 0.97-0.98. There are several dips below 0.96, but it recovers.

* Step 0: ~0.98

* Step 500: ~0.96

* Step 1000: ~0.96

* Step 1500: ~0.97

* Step 2000: ~0.97

* Step 2500: ~0.97

* Step 3000: ~0.97

* Step 3500: ~0.97

* Step 4000: ~0.97

* Step 4500: ~0.97

* Step 5000: ~0.97

* **Green Line ("w/o LC Reward"):** Starts at approximately 0.94 at Step 0, exhibits a significant downward trend, reaching a minimum of approximately 0.88 around Step 2000, then shows a slight recovery, ending around 0.90 at Step 5000.

* Step 0: ~0.94

* Step 500: ~0.92

* Step 1000: ~0.90

* Step 1500: ~0.89

* Step 2000: ~0.88

* Step 2500: ~0.89

* Step 3000: ~0.90

* Step 3500: ~0.91

* Step 4000: ~0.91

* Step 4500: ~0.91

* Step 5000: ~0.90

**Chart 2: LiveCodeBench Pass@1**

* **Blue Line ("w/ LC Reward"):** Starts at approximately 0.41 at Step 0, shows a consistent upward trend, reaching a peak of approximately 0.49 around Step 4000, then slightly decreases to approximately 0.48 at Step 5000.

* Step 0: ~0.41

* Step 500: ~0.43

* Step 1000: ~0.45

* Step 1500: ~0.46

* Step 2000: ~0.47

* Step 2500: ~0.48

* Step 3000: ~0.48

* Step 3500: ~0.49

* Step 4000: ~0.49

* Step 4500: ~0.48

* Step 5000: ~0.48

* **Green Line ("w/o LC Reward"):** Starts at approximately 0.38 at Step 0, also shows an upward trend, but is consistently lower than the blue line. It reaches a peak of approximately 0.46 around Step 4000 and ends at approximately 0.45 at Step 5000.

* Step 0: ~0.38

* Step 500: ~0.40

* Step 1000: ~0.42

* Step 1500: ~0.43

* Step 2000: ~0.44

* Step 2500: ~0.45

* Step 3000: ~0.45

* Step 3500: ~0.46

* Step 4000: ~0.46

* Step 4500: ~0.46

* Step 5000: ~0.45

**Chart 3: AIME Accuracy**

* **Blue Line ("w/ LC Reward"):** Starts at approximately 0.47 at Step 0, rises through about Step 1500, and then fluctuates between approximately 0.58 and 0.62.

* Step 0: ~0.47

* Step 500: ~0.52

* Step 1000: ~0.56

* Step 1500: ~0.58

* Step 2000: ~0.59

* Step 2500: ~0.60

* Step 3000: ~0.61

* Step 3500: ~0.61

* Step 4000: ~0.61

* Step 4500: ~0.61

* Step 5000: ~0.60

* **Green Line ("w/o LC Reward"):** Starts at approximately 0.45 at Step 0, also shows an upward trend, but is consistently lower than the blue line. It reaches a peak of approximately 0.57 around Step 3500 and ends at approximately 0.56 at Step 5000.

* Step 0: ~0.45

* Step 500: ~0.49

* Step 1000: ~0.53

* Step 1500: ~0.55

* Step 2000: ~0.56

* Step 2500: ~0.56

* Step 3000: ~0.57

* Step 3500: ~0.57

* Step 4000: ~0.57

* Step 4500: ~0.56

* Step 5000: ~0.56

### Key Observations

* Across all three metrics, the model *with* "LC Reward" consistently outperforms the model *without* "LC Reward".

* The "LC Reward" metric itself shows a clear divergence between the two models, with the model without "LC Reward" experiencing a significant decline over time.

* The "LiveCodeBench Pass@1" and "AIME Accuracy" metrics show more gradual improvements, but the performance gap between the two models remains consistent.

* The fluctuations in all lines suggest some level of variance in the training process.
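The claim of a consistent gap can be checked against the per-step readings listed above for "LiveCodeBench Pass@1". The sketch below is a minimal illustration that assumes those approximate readings. The variable names are chosen for this example, and the values are read from the chart, not taken from raw data.

```python
# Approximate LiveCodeBench Pass@1 readings every 500 steps, as listed above.
steps = list(range(0, 5001, 500))
pass1_with = [0.41, 0.43, 0.45, 0.46, 0.47, 0.48, 0.48, 0.49, 0.49, 0.48, 0.48]
pass1_without = [0.38, 0.40, 0.42, 0.43, 0.44, 0.45, 0.45, 0.46, 0.46, 0.46, 0.45]

# Per-step difference (with minus without), rounded to the reading precision.
gaps = [round(w - wo, 2) for w, wo in zip(pass1_with, pass1_without)]
mean_gap = sum(gaps) / len(gaps)
always_ahead = all(g > 0 for g in gaps)

for step, gap in zip(steps, gaps):
    print(f"step {step:>4}: gap {gap:+.2f}")
print(f"mean gap: {mean_gap:.3f}, blue always ahead: {always_ahead}")
```

On these readings the gap stays between 0.02 and 0.03 at every listed step, which is what "consistent" amounts to here.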

### Interpretation

According to these readings, incorporating "LC Reward" into the training process improves the model's performance on all three evaluated metrics. On the "LC Reward" chart, the reward stays high when it is part of training and degrades when it is absent, which indicates that the model is actively optimizing it. The improvements in "LiveCodeBench Pass@1" and "AIME Accuracy" suggest that this learning carries over to downstream tasks, and the consistent lead of the model with "LC Reward" suggests that the reward signal is a valuable component of training. The fluctuations observed in the lines likely reflect the stochastic nature of the training process and the inherent variability in the data.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Line Charts: Performance Metrics with/without LC Reward

### Overview

The image displays three horizontally arranged line charts comparing the performance of a system "w/ LC Reward" (with LC Reward) versus "w/o LC Reward" (without LC Reward) across 5000 training steps. The charts measure three distinct metrics: "LC Reward", "LiveCodeBench Pass@1", and "AIME Accuracy". Each chart uses a blue line with circle markers for the "w/ LC Reward" condition and a green line with circle markers for the "w/o LC Reward" condition.

### Components/Axes

* **Chart 1 (Left): LC Reward**

* **Title:** "LC Reward"

* **Y-axis:** Label is not explicitly written, but the scale represents the "LC Reward" value. Range: 0.86 to 1.00. Major ticks at 0.86, 0.88, 0.90, 0.92, 0.94, 0.96, 0.98, 1.00.

* **X-axis:** Label: "Steps". Range: 0 to 5000. Major ticks at 0, 1000, 2000, 3000, 4000, 5000.

* **Legend:** Located in the bottom-left corner. Blue line with circle marker: "w/ LC Reward". Green line with circle marker: "w/o LC Reward".

* **Chart 2 (Center): LiveCodeBench Pass@1**

* **Title:** "LiveCodeBench Pass@1"

* **Y-axis:** Label is not explicitly written, but the scale represents the "Pass@1" score. Range: 0.38 to 0.50. Major ticks at 0.38, 0.40, 0.42, 0.44, 0.46, 0.48, 0.50.

* **X-axis:** Label: "Steps". Range: 0 to 5000. Major ticks at 0, 1000, 2000, 3000, 4000, 5000.

* **Legend:** Located in the top-left corner. Blue line with circle marker: "w/ LC Reward". Green line with circle marker: "w/o LC Reward".

* **Chart 3 (Right): AIME Accuracy**

* **Title:** "AIME Accuracy"

* **Y-axis:** Label is not explicitly written, but the scale represents the "Accuracy" score. Range: 0.450 to 0.625. Major ticks at 0.450, 0.475, 0.500, 0.525, 0.550, 0.575, 0.600, 0.625.

* **X-axis:** Label: "Steps". Range: 0 to 5000. Major ticks at 0, 1000, 2000, 3000, 4000, 5000.

* **Legend:** Located in the top-left corner. Blue line with circle marker: "w/ LC Reward". Green line with circle marker: "w/o LC Reward".

### Detailed Analysis

**Chart 1: LC Reward**

* **Trend Verification:** The blue line ("w/ LC Reward") shows a generally high and stable trend with moderate fluctuations. The green line ("w/o LC Reward") shows a clear downward trend with high volatility, especially after step 3000.

* **Data Points (Approximate):**

* **w/ LC Reward (Blue):** Starts ~0.97 at step 0. Fluctuates between ~0.96 and ~0.99 throughout. Ends near ~0.99 at step 5000.

* **w/o LC Reward (Green):** Starts ~0.96 at step 0. Shows a gradual decline with significant dips. Notable low points: ~0.91 at step ~1800, ~0.90 at step ~2800, and a sharp drop to ~0.87 at step ~3800. Ends near ~0.87 at step 5000.

**Chart 2: LiveCodeBench Pass@1**

* **Trend Verification:** Both lines show a strong upward trend from step 0 to step 5000. The green line ("w/o LC Reward") appears to overtake and consistently stay above the blue line ("w/ LC Reward") after approximately step 1000.

* **Data Points (Approximate):**

* **w/ LC Reward (Blue):** Starts ~0.38 at step 0. Rises steadily to ~0.46 by step 2000. Continues rising to end near ~0.48 at step 5000.

* **w/o LC Reward (Green):** Starts ~0.38 at step 0. Rises more steeply, reaching ~0.47 by step 2000. Maintains a lead, ending near ~0.50 at step 5000.

**Chart 3: AIME Accuracy**

* **Trend Verification:** Both lines show a strong upward trend from step 0 to step 5000. The lines are closely intertwined, with the blue line ("w/ LC Reward") showing slightly higher peaks in the later stages (after step 3000).

* **Data Points (Approximate):**

* **w/ LC Reward (Blue):** Starts ~0.45 at step 0. Rises to ~0.55 by step 2000. Shows high volatility in the later half, with peaks reaching ~0.62 near step 4500. Ends near ~0.58 at step 5000.

* **w/o LC Reward (Green):** Starts ~0.45 at step 0. Rises to ~0.55 by step 2000. Follows a similar volatile path but with slightly lower peaks, ending near ~0.57 at step 5000.

### Key Observations

1. **Divergent Impact:** The presence of "LC Reward" has opposite effects on the measured metrics. It maintains a high "LC Reward" value (Chart 1) but appears to slightly hinder performance on "LiveCodeBench Pass@1" (Chart 2) compared to its absence.

2. **Volatility:** All metrics show significant step-to-step volatility, particularly in the later stages of training (after step 2000-3000).

3. **Convergence in AIME:** For "AIME Accuracy" (Chart 3), the two conditions perform very similarly, with no clear, sustained advantage for either, though "w/ LC Reward" hits higher maximum values.

4. **Stability vs. Performance:** The "w/o LC Reward" condition leads to a degradation of the "LC Reward" metric itself (Chart 1) but correlates with improved performance on the "LiveCodeBench" coding benchmark (Chart 2).

### Interpretation

The data suggests a trade-off or a nuanced relationship between optimizing for the internal "LC Reward" signal and performance on external benchmarks. The "LC Reward" appears to be a stable, high-value objective when explicitly trained with it (Chart 1, blue line). However, removing this explicit reward signal ("w/o LC Reward") does not cause catastrophic failure; instead, it leads to a decline in that specific reward value but coincides with improved performance on the LiveCodeBench coding task (Chart 2). This could indicate that the "LC Reward" metric and the "LiveCodeBench Pass@1" metric are not perfectly aligned, or that optimizing directly for the former may lead to some degree of overfitting or a suboptimal policy for the latter.

For accuracy on AIME (a competition-mathematics benchmark), the impact is negligible, suggesting that this capability develops similarly regardless of the presence of the LC Reward signal. The high volatility across all charts is typical of reinforcement learning or iterative training processes, reflecting exploration and policy updates. The key takeaway is that the design of the reward function ("LC Reward") significantly influences which capabilities are prioritized and stabilized during training, with potential trade-offs between different performance metrics.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Line Graphs: Performance Metrics with/without LC Reward

### Overview

The image contains three line graphs comparing performance metrics across 5,000 steps, with and without LC Reward. Each graph tracks a different metric: LC Reward (left), LiveCodeBench Pass@1 (center), and AIME Accuracy (right). Two data series are plotted per graph: blue for "w/ LC Reward" and green for "w/o LC Reward".

### Components/Axes

1. **X-Axis**: "Steps" (0 to 5,000 in increments of 1,000)

2. **Y-Axes**:

- Left Graph: LC Reward (0.86 to 1.00)

- Center Graph: LiveCodeBench Pass@1 (0.38 to 0.52)

- Right Graph: AIME Accuracy (0.43 to 0.63)

3. **Legends**: Positioned left of each graph, with:

- Blue line: "w/ LC Reward"

- Green line: "w/o LC Reward"

### Detailed Analysis

#### Left Graph (LC Reward)

- **Trend**:

- Blue line ("w/ LC Reward") fluctuates between **0.96–0.99**, peaking at ~0.995 near step 2,500.

- Green line ("w/o LC Reward") declines sharply from **0.97** to **0.86**, with a steep drop after step 2,000.

- **Key Data Points**:

- Step 0: Blue (0.97), Green (0.97)

- Step 2,500: Blue (~0.995), Green (~0.91)

- Step 5,000: Blue (~0.985), Green (~0.86)

#### Center Graph (LiveCodeBench Pass@1)

- **Trend**:

- Blue line ("w/ LC Reward") rises steadily from **0.38** to **0.49**, surpassing the green line after step 2,000.

- Green line ("w/o LC Reward") increases gradually from **0.38** to **0.48**, plateauing near step 4,000.

- **Key Data Points**:

- Step 0: Blue (0.38), Green (0.38)

- Step 2,000: Blue (~0.44), Green (~0.46)

- Step 5,000: Blue (~0.49), Green (~0.48)

#### Right Graph (AIME Accuracy)

- **Trend**:

- Blue line ("w/ LC Reward") starts at **0.43** and rises to **0.62**, with minor fluctuations.

- Green line ("w/o LC Reward") begins at **0.43** and peaks at **0.62**, but remains below the blue line after step 1,000.

- **Key Data Points**:

- Step 0: Blue (0.43), Green (0.43)

- Step 1,000: Blue (~0.55), Green (~0.54)

- Step 5,000: Blue (~0.62), Green (~0.60)

### Key Observations

1. **LC Reward Impact**:

- All metrics show higher performance with LC Reward by the final step (blue above green at step 5,000), although in the center graph the blue line is still below the green line at step 2,000.

2. **Stability vs. Decline**:

- LC Reward maintains stability (left graph), while its absence causes sharp declines (green line drops 0.11 in left graph).

3. **Crossover Points**:

- In the center graph, "w/ LC Reward" overtakes "w/o LC Reward" at some point after step 2,000 (blue ~0.44 vs. green ~0.46 at step 2,000; blue ~0.49 vs. green ~0.48 at step 5,000).

4. **AIME Accuracy**:

- Both series converge at step 5,000 (~0.62 vs. ~0.60), but "w/ LC Reward" maintains a slight edge throughout.
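The crossover in the center graph can be bracketed from the three key data points listed for it. The sketch below linearly interpolates between neighbouring readings to estimate where one series first rises above the other. Linear interpolation and the function name `first_crossover` are assumptions of this example, and with only three approximate readings the result is a rough bracket, not a measurement.

```python
def first_crossover(steps, a, b):
    """Return the interpolated step where series a first rises above series b, or None."""
    for i in range(1, len(steps)):
        prev_diff = a[i - 1] - b[i - 1]
        curr_diff = a[i] - b[i]
        # a was at or below b at the previous reading and is above b at this one.
        if prev_diff <= 0 < curr_diff:
            frac = -prev_diff / (curr_diff - prev_diff)
            return steps[i - 1] + frac * (steps[i] - steps[i - 1])
    return None


# Key data points for LiveCodeBench Pass@1 (approximate chart readings).
steps = [0, 2000, 5000]
pass1_with = [0.38, 0.44, 0.49]
pass1_without = [0.38, 0.46, 0.48]

crossover = first_crossover(steps, pass1_with, pass1_without)
print(f"Estimated crossover near step {crossover:.0f}")
```

On these three readings the blue line is still below green at step 2,000, and the straight-line estimate lands near step 4,000. The readings only establish that the crossover falls somewhere between steps 2,000 and 5,000.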

### Interpretation

The data demonstrates that LC Reward significantly improves performance across all metrics:

- **LC Reward Metric**: Directly tracks the reward's value, showing stability with reward vs. decline without.

- **LiveCodeBench Pass@1**: LC Reward accelerates improvement, achieving higher scores after initial steps.

- **AIME Accuracy**: LC Reward sustains higher accuracy, with both series converging only at the final step.

Outliers include the sharp drop in the left graph's green line after step 2,000, suggesting LC Reward prevents performance degradation. The convergence in AIME Accuracy at step 5,000 implies diminishing returns for LC Reward over time, but its absence results in consistently lower outcomes.
