TECHNICAL ASSET FINGERPRINT

6061f834c5b8aa75b03862b4

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Chart: Test Loss vs. Parameters for Different Token Ratios

### Overview

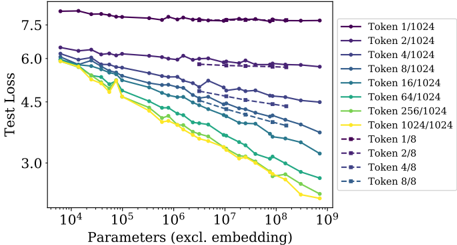

The image is a line chart showing the relationship between the number of parameters (excluding embedding) and the test loss for different token ratios. The x-axis represents the number of parameters on a logarithmic scale, ranging from 10^4 to 10^9. The y-axis represents the test loss, ranging from approximately 3.0 to 7.5. Different colored lines represent different token ratios, as indicated in the legend on the right side of the chart.

### Components/Axes

* **X-axis:** Parameters (excl. embedding), logarithmic scale from 10^4 to 10^9.

* **Y-axis:** Test Loss, linear scale from 3.0 to 7.5.

* **Legend (Top-Right):**

    * Purple: Token 1/1024

    * Dark Blue: Token 2/1024

    * Blue: Token 4/1024

    * Dark Teal: Token 8/1024

    * Teal: Token 16/1024

    * Green: Token 64/1024

    * Light Green: Token 256/1024

    * Yellow: Token 1024/1024

    * Dashed Purple: Token 1/8

    * Dashed Dark Blue: Token 2/8

    * Dashed Blue: Token 4/8

    * Dashed Dark Teal: Token 8/8

### Detailed Analysis

* **Token 1/1024 (Purple):** The line is relatively flat, showing a slight decrease in test loss as the number of parameters increases. The test loss starts around 7.8 at 10^4 parameters and decreases to approximately 7.5 at 10^9 parameters.

* **Token 2/1024 (Dark Blue):** The line shows a gradual decrease in test loss as the number of parameters increases. The test loss starts around 6.3 at 10^4 parameters and decreases to approximately 5.8 at 10^9 parameters.

* **Token 4/1024 (Blue):** The line shows a decrease in test loss as the number of parameters increases. The test loss starts around 6.0 at 10^4 parameters and decreases to approximately 5.2 at 10^9 parameters.

* **Token 8/1024 (Dark Teal):** The line shows a decrease in test loss as the number of parameters increases. The test loss starts around 5.9 at 10^4 parameters and decreases to approximately 4.8 at 10^9 parameters.

* **Token 16/1024 (Teal):** The line shows a decrease in test loss as the number of parameters increases. The test loss starts around 5.7 at 10^4 parameters and decreases to approximately 4.2 at 10^9 parameters.

* **Token 64/1024 (Green):** The line shows a decrease in test loss as the number of parameters increases. The test loss starts around 5.5 at 10^4 parameters and decreases to approximately 3.7 at 10^9 parameters.

* **Token 256/1024 (Light Green):** The line shows a decrease in test loss as the number of parameters increases. The test loss starts around 5.3 at 10^4 parameters and decreases to approximately 3.3 at 10^9 parameters.

* **Token 1024/1024 (Yellow):** The line shows a decrease in test loss as the number of parameters increases. The test loss starts around 5.1 at 10^4 parameters and decreases to approximately 3.0 at 10^9 parameters.

* **Token 1/8 (Dashed Purple):** The line shows a slight decrease in test loss as the number of parameters increases. The test loss starts around 6.1 at 10^4 parameters and decreases to approximately 5.9 at 10^7 parameters.

* **Token 2/8 (Dashed Dark Blue):** The line shows a decrease in test loss as the number of parameters increases. The test loss starts around 5.8 at 10^4 parameters and decreases to approximately 5.2 at 10^7 parameters.

* **Token 4/8 (Dashed Blue):** The line shows a decrease in test loss as the number of parameters increases. The test loss starts around 5.5 at 10^4 parameters and decreases to approximately 4.8 at 10^7 parameters.

* **Token 8/8 (Dashed Dark Teal):** The line shows a decrease in test loss as the number of parameters increases. The test loss starts around 5.3 at 10^4 parameters and decreases to approximately 4.5 at 10^7 parameters.
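The endpoint estimates above can be reduced to a rough average slope per series. The following is a minimal sketch, assuming each line is adequately summarized by its two quoted endpoints; the names `SERIES` and `loss_drop_per_decade` are hypothetical helpers introduced for illustration and do not come from the chart or its source.

```python
# Endpoint estimates copied from the bullets above.
# label -> (start_exp, start_loss, end_exp, end_loss); x-values are powers of 10.
SERIES = {
    "1/1024": (4, 7.8, 9, 7.5),
    "2/1024": (4, 6.3, 9, 5.8),
    "4/1024": (4, 6.0, 9, 5.2),
    "8/1024": (4, 5.9, 9, 4.8),
    "16/1024": (4, 5.7, 9, 4.2),
    "64/1024": (4, 5.5, 9, 3.7),
    "256/1024": (4, 5.3, 9, 3.3),
    "1024/1024": (4, 5.1, 9, 3.0),
    "1/8": (4, 6.1, 7, 5.9),   # dashed lines stop at 10^7
    "2/8": (4, 5.8, 7, 5.2),
    "4/8": (4, 5.5, 7, 4.8),
    "8/8": (4, 5.3, 7, 4.5),
}


def loss_drop_per_decade(start_exp, start_loss, end_exp, end_loss):
    """Average test-loss reduction per tenfold increase in parameters."""
    return (start_loss - end_loss) / (end_exp - start_exp)


DROPS = {label: loss_drop_per_decade(*pts) for label, pts in SERIES.items()}

for label, drop in DROPS.items():
    print(f"Token {label}: {drop:.2f} loss units per decade")
```

On these estimates the average drop per decade grows from about 0.06 for Token 1/1024 to about 0.42 for Token 1024/1024. The figures inherit the uncertainty of the visual readings they are computed from.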

### Key Observations

* As the token ratio increases (e.g., from 1/1024 to 1024/1024), the test loss generally decreases for a given number of parameters.

* The test loss decreases more significantly for higher token ratios as the number of parameters increases.

* The "Token 1/1024" series shows the least improvement in test loss with increasing parameters.

* The dashed lines (Token 1/8, 2/8, 4/8, 8/8) only extend to 10^7 parameters.

### Interpretation

The chart suggests that increasing the token ratio and the number of parameters (excluding embedding) generally leads to a lower test loss, indicating better model performance. The token ratio seems to have a significant impact on the model's ability to learn, with higher ratios resulting in lower test loss. The "Token 1/1024" series, which has the lowest token ratio, shows the least improvement, suggesting that a sufficient token ratio is crucial for effective learning. The dashed lines stopping at 10^7 parameters might indicate a limitation or constraint in the experiment setup for those specific token ratios.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart: Test Loss vs. Parameters (excl. embedding)

### Overview

This image presents a line chart illustrating the relationship between "Test Loss" and "Parameters (excl. embedding)" for various "Token" configurations. The chart displays how test loss changes as the number of parameters increases, with different lines representing different token sizes.

### Components/Axes

* **X-axis:** "Parameters (excl. embedding)" - Logarithmic scale, ranging from approximately 10<sup>4</sup> to 10<sup>9</sup>.

* **Y-axis:** "Test Loss" - Linear scale, ranging from approximately 3.0 to 7.5.

* **Legend:** Located in the top-right corner, listing the following data series:

    * Token 1/1024 (Purple)

    * Token 2/1024 (Dark Blue)

    * Token 4/1024 (Light Blue)

    * Token 8/1024 (Teal)

    * Token 16/1024 (Green)

    * Token 64/1024 (Olive Green)

    * Token 256/1024 (Yellow)

    * Token 1024/1024 (Gold)

    * Token 1/8 (Dark Purple)

    * Token 2/8 (Navy)

    * Token 4/8 (Dark Teal)

    * Token 8/8 (Sky Blue)

### Detailed Analysis

Here's a breakdown of each line's trend and approximate data points. Note that due to the chart's resolution, values are estimates.

* **Token 1/1024 (Purple):** The line is relatively flat, hovering around a test loss of approximately 7.3-7.5 across the entire parameter range.

* **Token 2/1024 (Dark Blue):** Starts at approximately 6.1 at 10<sup>4</sup> parameters, gradually decreasing to around 5.5 at 10<sup>9</sup> parameters.

* **Token 4/1024 (Light Blue):** Begins at approximately 6.0 at 10<sup>4</sup> parameters, decreasing to around 4.8 at 10<sup>9</sup> parameters.

* **Token 8/1024 (Teal):** Starts at approximately 6.0 at 10<sup>4</sup> parameters, decreasing to around 4.5 at 10<sup>9</sup> parameters.

* **Token 16/1024 (Green):** Begins at approximately 6.0 at 10<sup>4</sup> parameters, decreasing to around 4.0 at 10<sup>9</sup> parameters.

* **Token 64/1024 (Olive Green):** Starts at approximately 5.5 at 10<sup>4</sup> parameters, decreasing to around 3.5 at 10<sup>9</sup> parameters.

* **Token 256/1024 (Yellow):** Begins at approximately 5.0 at 10<sup>4</sup> parameters, decreasing to around 3.0 at 10<sup>9</sup> parameters.

* **Token 1024/1024 (Gold):** Starts at approximately 4.8 at 10<sup>4</sup> parameters, decreasing sharply to around 2.5 at 10<sup>9</sup> parameters. This line exhibits the steepest decline.

* **Token 1/8 (Dark Purple):** Similar to Token 1/1024, it remains relatively flat, around 7.3-7.5.

* **Token 2/8 (Navy):** Starts at approximately 6.0 at 10<sup>4</sup> parameters, decreasing to around 5.5 at 10<sup>9</sup> parameters.

* **Token 4/8 (Dark Teal):** Begins at approximately 5.8 at 10<sup>4</sup> parameters, decreasing to around 4.8 at 10<sup>9</sup> parameters.

* **Token 8/8 (Sky Blue):** Starts at approximately 6.0 at 10<sup>4</sup> parameters, decreasing to around 4.5 at 10<sup>9</sup> parameters.

### Key Observations

* The "Token 1024/1024" configuration consistently exhibits the lowest test loss across all parameter values.

* The "Token 1/1024" and "Token 1/8" configurations show minimal improvement in test loss as the number of parameters increases.

* Generally, increasing the number of parameters leads to a decrease in test loss, but the rate of decrease varies significantly depending on the token configuration.

* The lines representing larger token sizes (e.g., 256/1024, 1024/1024) show a more pronounced downward trend.

### Interpretation

The chart demonstrates the impact of token size on model performance (as measured by test loss) as model capacity (number of parameters) increases. The data suggests that larger token sizes, particularly 1024/1024, are more effective at leveraging increased model capacity to reduce test loss. This implies that the model benefits from having a richer representation of the input data when the number of parameters is sufficiently large.

The relatively flat lines for smaller token sizes (1/1024, 1/8) indicate that these configurations may be limited by their representational capacity, regardless of the number of parameters. The model is unable to effectively utilize the additional parameters to improve performance.

The logarithmic scale on the x-axis highlights the diminishing returns of adding parameters. While increasing parameters initially leads to significant reductions in test loss, the rate of improvement slows down as the number of parameters grows very large. This suggests that there is a point of diminishing returns where adding more parameters provides only marginal improvements in performance.
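The diminishing-returns point can be made concrete with a little arithmetic. The sketch below assumes the Token 1024/1024 line is straight on the logarithmic axis between its two estimated endpoints (~4.8 at 10^4 and ~2.5 at 10^9). That is an assumption: the description gives no intermediate points. The function name `per_decade_and_marginal` is hypothetical.

```python
def per_decade_and_marginal(start_loss, end_loss, start_exp, end_exp):
    """Return (drop per decade, {decade exponent: drop per added parameter}).

    Assumes a constant loss drop per tenfold increase in parameters,
    i.e. a straight line on a log-scaled x-axis.
    """
    per_decade = (start_loss - end_loss) / (end_exp - start_exp)
    marginal = {}
    for k in range(start_exp, end_exp):
        added_params = 10 ** (k + 1) - 10 ** k  # parameters added across this decade
        marginal[k] = per_decade / added_params
    return per_decade, marginal


# Token 1024/1024 estimates from this description: ~4.8 at 10^4, ~2.5 at 10^9.
PER_DECADE, MARGINAL = per_decade_and_marginal(4.8, 2.5, 4, 9)

print(f"Average drop per decade: {PER_DECADE:.2f}")
for k, gain in MARGINAL.items():
    print(f"10^{k} -> 10^{k + 1}: {gain:.3e} loss units per added parameter")
```

Under this assumption every decade yields the same loss reduction, but each successive decade requires ten times as many additional parameters, so the reduction per added parameter falls by a factor of ten each time.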

The differences between the 1024 and 8 token sizes are more pronounced than the differences between the 1, 2, and 4 token sizes. This suggests that the relationship between token size and performance is not linear.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Chart: Test Loss vs. Model Parameters for Different Token Configurations

### Overview

The image is a line chart plotting "Test Loss" against the number of model "Parameters (excl. embedding)" on a logarithmic scale. It displays multiple data series, each representing a different token configuration (e.g., "Token 1/1024", "Token 2/1024"). The chart demonstrates how model performance (measured by loss) changes as model size increases, with different lines showing the effect of varying the token budget or configuration.

### Components/Axes

* **X-Axis:** Labeled "Parameters (excl. embedding)". It uses a logarithmic scale with major tick marks at 10⁴, 10⁵, 10⁶, 10⁷, 10⁸, and 10⁹.

* **Y-Axis:** Labeled "Test Loss". It uses a linear scale with major tick marks at 3.0, 4.5, 6.0, and 7.5.

* **Legend:** Positioned in the top-right quadrant of the chart area. It contains 12 entries, each with a unique color and line style (solid or dashed). The entries are:

    1. Token 1/1024 (Solid, dark purple)

    2. Token 2/1024 (Solid, purple)

    3. Token 4/1024 (Solid, blue-purple)

    4. Token 8/1024 (Solid, blue)

    5. Token 16/1024 (Solid, teal)

    6. Token 64/1024 (Solid, green)

    7. Token 256/1024 (Solid, light green)

    8. Token 1024/1024 (Solid, yellow)

    9. Token 1/8 (Dashed, dark purple)

    10. Token 2/8 (Dashed, purple)

    11. Token 4/8 (Dashed, blue-purple)

    12. Token 8/8 (Dashed, blue)

### Detailed Analysis

The chart shows 12 distinct lines, each corresponding to a token configuration from the legend. The general trend for most lines is a downward slope from left to right, indicating that test loss decreases as the number of parameters increases.

**Trend Verification & Data Point Extraction (Approximate):**

* **Token 1/1024 (Solid, dark purple):** This line is nearly flat at the top of the chart. It starts at a Test Loss of ~7.8 at 10⁴ parameters and ends at ~7.6 at 10⁹ parameters. **Trend:** Very slight downward slope, almost horizontal.

* **Token 2/1024 (Solid, purple):** Starts at ~6.4 at 10⁴ parameters. Slopes gently downward to ~5.8 at 10⁹ parameters.

* **Token 4/1024 (Solid, blue-purple):** Starts at ~6.2 at 10⁴ parameters. Slopes downward to ~4.8 at 10⁹ parameters.

* **Token 8/1024 (Solid, blue):** Starts at ~6.1 at 10⁴ parameters. Slopes downward to ~4.2 at 10⁹ parameters.

* **Token 16/1024 (Solid, teal):** Starts at ~6.0 at 10⁴ parameters. Slopes downward to ~3.6 at 10⁹ parameters.

* **Token 64/1024 (Solid, green):** Starts at ~6.0 at 10⁴ parameters. Slopes downward to ~3.0 at 10⁹ parameters.

* **Token 256/1024 (Solid, light green):** Starts at ~6.0 at 10⁴ parameters. Slopes downward to ~2.7 at 10⁹ parameters.

* **Token 1024/1024 (Solid, yellow):** This is the lowest solid line. Starts at ~6.0 at 10⁴ parameters. Slopes downward most steeply to ~2.4 at 10⁹ parameters.

* **Dashed Lines (Token 1/8, 2/8, 4/8, 8/8):** These four dashed lines are clustered in the middle of the chart, generally between the solid lines for Token 4/1024 and Token 16/1024. They follow similar downward trends as their solid-line counterparts but are positioned at slightly different loss values. For example, the dashed "Token 8/8" line appears to run very close to, but slightly above, the solid "Token 8/1024" line.

**Spatial Grounding:** The legend is placed in the top-right, overlapping the upper portion of the chart grid but not obscuring any data lines. The lines are densely packed on the left side (lower parameter counts) and fan out as they move right (higher parameter counts), with the yellow line (Token 1024/1024) achieving the lowest loss and the dark purple line (Token 1/1024) remaining the highest.

### Key Observations

1. **Inverse Relationship:** For all configurations except "Token 1/1024", there is a clear inverse relationship between model parameters and test loss. Larger models perform better.

2. **Plateau Effect:** The "Token 1/1024" configuration shows a severe performance plateau. Increasing model size from 10,000 to 1,000,000,000 parameters yields almost no improvement in test loss.

3. **Token Budget Impact:** The solid lines show that increasing the token numerator (from 1 to 1024, while denominator is fixed at 1024) dramatically improves performance and the rate of improvement with scale. The yellow line (1024/1024) has the steepest descent.

4. **Consistent Ordering:** The lines maintain a consistent vertical ordering from top (worst) to bottom (best) that corresponds directly to the token configuration's implied "budget" or "ratio": Token 1/1024 > Token 2/1024 > ... > Token 1024/1024.

5. **Dashed vs. Solid:** The dashed lines (denominator 8) generally perform worse (are higher on the chart) than solid lines with the same numerator but a larger denominator (1024). This suggests the denominator (likely representing a total token pool or context window) is a critical factor.
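The ordering and fan-out claims for the solid lines can be checked directly against the endpoint estimates in the Detailed Analysis. This is a minimal sketch using only those approximate readings; the helper names `is_non_increasing` and `spread` are hypothetical.

```python
# Numerators of the solid "Token n/1024" lines, in legend order.
NUMERATORS = [1, 2, 4, 8, 16, 64, 256, 1024]

# Approximate test loss at the left (10^4) and right (10^9) ends of each solid line,
# copied from the Detailed Analysis above.
LOSS_AT_1E4 = [7.8, 6.4, 6.2, 6.1, 6.0, 6.0, 6.0, 6.0]
LOSS_AT_1E9 = [7.6, 5.8, 4.8, 4.2, 3.6, 3.0, 2.7, 2.4]


def is_non_increasing(values):
    """True if each value is no larger than the one before it."""
    return all(a >= b for a, b in zip(values, values[1:]))


def spread(values):
    """Gap between the highest and lowest line at one x-position."""
    return max(values) - min(values)


print("Ordered at 10^4:", is_non_increasing(LOSS_AT_1E4))
print("Ordered at 10^9:", is_non_increasing(LOSS_AT_1E9))
print(f"Spread at 10^4: {spread(LOSS_AT_1E4):.1f}")
print(f"Spread at 10^9: {spread(LOSS_AT_1E9):.1f}")
```

With these readings the ordering holds at both ends (with ties at ~6.0 on the left), and the gap between the top and bottom lines widens from about 1.8 to about 5.2, which matches the fan-out described under Spatial Grounding.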

### Interpretation

This chart offers insight into the scaling laws of language models, specifically highlighting the critical interaction between **model size (parameters)** and **data exposure (tokens)**.

* **The Data Suggests:** Model performance is not solely a function of parameter count. The token configuration acts as a fundamental constraint. A model with a very limited token budget ("Token 1/1024") cannot leverage additional parameters, hitting a performance ceiling almost immediately. This is the "data bottleneck."

* **Relationship Between Elements:** The fanning out of the lines demonstrates that as the token budget increases (moving from the top purple line to the bottom yellow line), the model's ability to utilize larger parameter counts to reduce loss improves significantly. The slope of each line represents the "scaling efficiency" for that specific token setting.

* **Notable Anomaly/Outlier:** The "Token 1/1024" line is a stark outlier. Its near-horizontal trend indicates a regime where scaling parameters is futile, implying the model has exhausted the useful information available from its extremely limited token exposure.

* **Why It Matters:** This visualization argues that for effective scaling, increases in model capacity must be matched with proportional increases in training data (tokens). It warns against simply building larger models without ensuring they are trained on sufficiently diverse and abundant data. The optimal scaling path involves moving diagonally down and to the right on this chart—increasing both parameters and effective token count simultaneously.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graph: Test Loss vs. Parameters (excluding embedding)

### Overview

The image is a line graph comparing test loss across different parameter configurations (excluding embedding) for various token ratios. The x-axis represents parameters (log scale from 10⁴ to 10⁹), and the y-axis represents test loss (linear scale from 3.0 to 7.5). Multiple lines represent different token configurations, with colors corresponding to legend labels.

### Components/Axes

- **X-axis**: "Parameters (excl. embedding)" (log scale: 10⁴, 10⁵, 10⁶, 10⁷, 10⁸, 10⁹)

- **Y-axis**: "Test Loss" (linear scale: 3.0, 4.5, 6.0, 7.5)

- **Legend**: Located on the right, with 12 entries:

    - **Token 1/1024** (purple)

    - **Token 2/1024** (dark purple)

    - **Token 4/1024** (blue)

    - **Token 8/1024** (teal)

    - **Token 16/1024** (light teal)

    - **Token 64/1024** (green)

    - **Token 256/1024** (lime)

    - **Token 1024/1024** (yellow)

    - **Token 1/8** (dark purple)

    - **Token 2/8** (purple)

    - **Token 4/8** (blue)

    - **Token 8/8** (teal)

### Detailed Analysis

1. **Token 1/1024** (purple): Starts at ~7.5, remains flat with minimal fluctuation.

2. **Token 2/1024** (dark purple): Starts at ~6.0, decreases slightly to ~5.8.

3. **Token 4/1024** (blue): Starts at ~6.0, decreases to ~5.5.

4. **Token 8/1024** (teal): Starts at ~6.0, decreases to ~5.0.

5. **Token 16/1024** (light teal): Starts at ~6.0, decreases to ~4.5.

6. **Token 64/1024** (green): Starts at ~6.0, decreases to ~4.0.

7. **Token 256/1024** (lime): Starts at ~6.0, decreases to ~3.5.

8. **Token 1024/1024** (yellow): Starts at ~6.0, decreases to ~2.5.

9. **Token 1/8** (dark purple): Starts at ~6.0, remains flat.

10. **Token 2/8** (purple): Starts at ~6.0, decreases slightly to ~5.8.

11. **Token 4/8** (blue): Starts at ~6.0, decreases to ~5.5.

12. **Token 8/8** (teal): Starts at ~6.0, decreases to ~4.5.

### Key Observations

- **Downward Trend**: All lines show a general decrease in test loss as parameters increase, except for Token 1/1024 and Token 1/8, which remain flat.

- **Token Ratio Impact**: Higher token ratios (e.g., 1024/1024) achieve lower test loss compared to lower ratios (e.g., 1/1024).

- **Diminishing Returns**: Lines for Token 1/8 and Token 8/8 flatten at higher parameter ranges, suggesting limited sensitivity to parameter growth.

- **Overlap**: Lines for Token 1/1024 and Token 1/8 overlap at the top of the graph (~7.5 test loss).

### Interpretation

The data shows that increasing parameters reduces test loss, most strongly for the highest token configuration (1024/1024), which falls from ~6.0 to ~2.5. The flat lines for Token 1/1024 and Token 1/8, however, indicate that extremely low token ratios may not benefit from parameter scaling. The flattening of Token 8/8 implies that beyond a certain parameter threshold, further increases yield minimal improvements. This is consistent with the common machine learning principle that model capacity and data interact to determine performance gains.
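The four descriptions on this page give different final-loss estimates for the same solid lines, so it may help to quantify how far they disagree. The sketch below is illustrative: it assumes each quoted "decreases to" value refers to the right edge of the chart, and it omits Token 1/1024 because one description gives only a range for it. The names `FINAL_LOSS` and `spread` are hypothetical.

```python
# Final test-loss estimates per solid line, in the order the descriptions appear:
# [gemini-2.0-flash, gemma-3-27b-it, healer-alpha, nemotron-nano-12b-v2-vl]
FINAL_LOSS = {
    "2/1024": [5.8, 5.5, 5.8, 5.8],
    "4/1024": [5.2, 4.8, 4.8, 5.5],
    "8/1024": [4.8, 4.5, 4.2, 5.0],
    "16/1024": [4.2, 4.0, 3.6, 4.5],
    "64/1024": [3.7, 3.5, 3.0, 4.0],
    "256/1024": [3.3, 3.0, 2.7, 3.5],
    "1024/1024": [3.0, 2.5, 2.4, 2.5],
}


def spread(values):
    """Largest disagreement between any two estimates for one line."""
    return max(values) - min(values)


SPREADS = {label: spread(vals) for label, vals in FINAL_LOSS.items()}
MOST_DISPUTED = max(SPREADS, key=SPREADS.get)

for label, gap in SPREADS.items():
    print(f"Token {label}: estimates differ by up to {gap:.1f}")
print("Largest disagreement:", MOST_DISPUTED)
```

On these numbers the estimates differ by between about 0.3 and 1.0 loss units, with the widest gap on Token 64/1024, so any single quoted value should be treated as a rough visual reading.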
